When we talk about RTSP, we are rarely talking about the RTSP protocol alone; the following protocols inevitably come up as well.

- RTP (Real-time Transport Protocol): the protocol that actually carries the audio and video, generally over UDP. Note that by convention it uses an even-numbered port.

- RTCP (RTP Control Protocol): sister protocol to RTP, which provides quality of service (QoS) feedback by periodically sending statistical information. It uses an odd-numbered port, namely the RTP port + 1.

- SDP (Session Description Protocol): an application-layer protocol that is really better described as a format, in the same sense as HTML or JSON (only briefly covered in today’s article).

By now you can probably see the division of labor: the RTSP protocol itself does not transmit any media and is only responsible for interactive control of playback, while RTP and RTCP do the actual media transmission.

Below we go through the details, using practical examples to illustrate.

A detailed introduction to several protocols

When developing for the Web, we often use various HTTP packet capture tools, but as an aspiring engineer you should also learn to use Wireshark, a powerful and free packet capture and analysis tool that lets us see exactly which protocols we are using every day.

Open Wireshark (I will skip the installation process; I believe you can handle it yourself) and select the network interface your computer is using, for example eth0 on Linux or en0 on a Mac. Double-click it to start capturing packets.

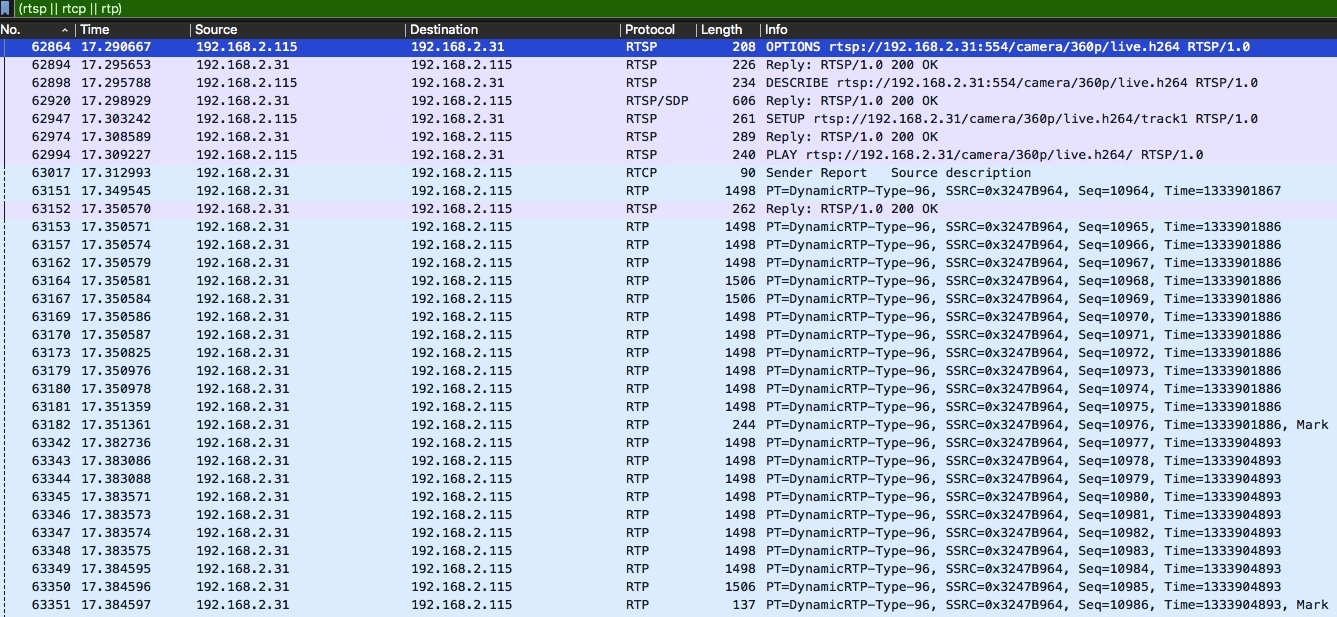

Next, in Wireshark’s filter field, enter rtsp || rtcp || rtp so that unrelated protocols are filtered out.

Before proceeding, you also need an RTSP server, for which I used a webcam, and a local RTSP player such as VLC, which is what I use. If you don’t have a server available at the moment, you can set up a temporary one; see rtsp-simple-server for more information.

As for clients, we can play RTSP video sources directly with a player such as VLC, or from the command line, for example with ffplay from FFmpeg.

If you are using VLC like me: click Open Media -> Network, fill in the URL of the video source, and click Open to start playing. After a few seconds, click Stop.

Overview of the overall communication process

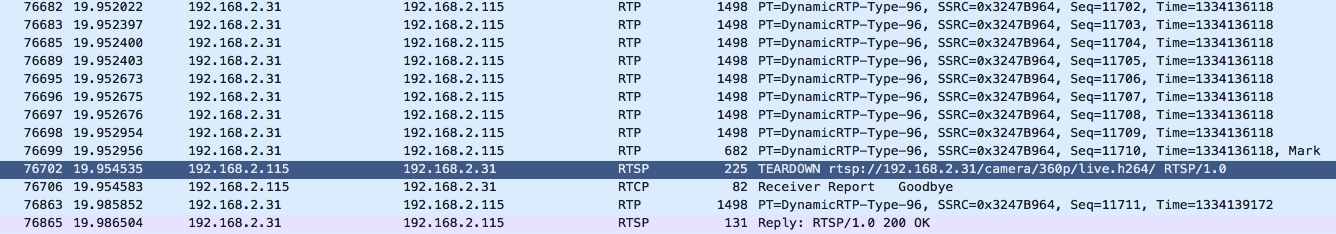

The following two pictures show the packets I captured while starting and stopping playback in VLC. Here 192.168.2.31 is the webcam and 192.168.2.115 is my computer, where the player runs.

Points to note:

- the pause request (PAUSE) is ignored this time, as are others such as GET_PARAMETER and SET_PARAMETER.

- before the reply to the PLAY request, we already see an RTCP packet and an RTP packet, which indicates that the source started transmitting playback data before sending the reply.

- some of the subsequent RTP packets carry the Marker bit. The video data of one frame is too large for a single packet and is split across several packets, and the marker flags where the frame ends.

RTSP protocol

Next, let’s go through the actual packet capture to illustrate it in detail.

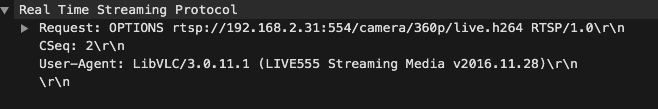

OPTIONS request

First, the client sends an RTSP request, which looks very similar to HTTP, to port 554 of 192.168.1.100. It is an OPTIONS request, which asks for the list of request methods available for this source. Note the CSeq header: every RTSP request carries one, so that each server response can be matched to the client request it answers (HTTP has no such header).

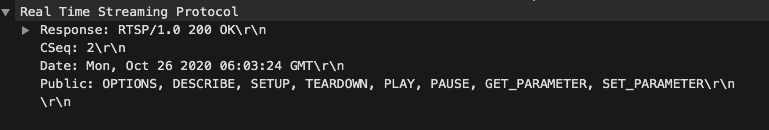

Then the server will respond.

As above, the server tells the client that it can use several requests such as DESCRIBE, SETUP, TEARDOWN, PLAY, PAUSE, etc.
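To make the request/response pairing concrete, here is a minimal Python sketch that formats an RTSP request with a CSeq header and parses the head of a response. The stream path /stream and the sample response text are assumptions made up for illustration, not values taken from the capture, and the helper names are hypothetical.

```python
from typing import Optional


def build_rtsp_request(method: str, url: str, cseq: int,
                       headers: Optional[dict] = None) -> str:
    """Format an RTSP/1.0 request. Lines end with CRLF, as in HTTP."""
    lines = [f"{method} {url} RTSP/1.0", f"CSeq: {cseq}"]
    for name, value in (headers or {}).items():
        lines.append(f"{name}: {value}")
    return "\r\n".join(lines) + "\r\n\r\n"


def parse_rtsp_response(text: str) -> tuple:
    """Return (status code, headers) from the head of an RTSP response."""
    head = text.split("\r\n\r\n", 1)[0]
    status_line, *header_lines = head.split("\r\n")
    status = int(status_line.split(" ", 2)[1])
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return status, headers


# Hypothetical URL; a real camera will use its own path.
request = build_rtsp_request("OPTIONS", "rtsp://192.168.1.100:554/stream", cseq=2)

# Made-up response in the shape a server typically sends back.
sample_response = (
    "RTSP/1.0 200 OK\r\n"
    "CSeq: 2\r\n"
    "Public: OPTIONS, DESCRIBE, SETUP, TEARDOWN, PLAY, PAUSE\r\n"
    "\r\n"
)
status, response_headers = parse_rtsp_response(sample_response)
methods = [m.strip() for m in response_headers["public"].split(",")]
```

A client would compare the CSeq value in the response with the one it sent in order to decide which request the response belongs to.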

DESCRIBE request

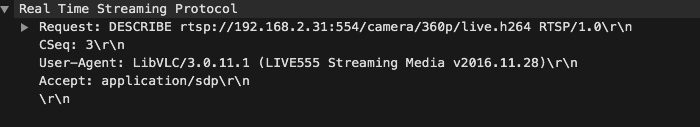

Now, the client sends a DESCRIBE request.

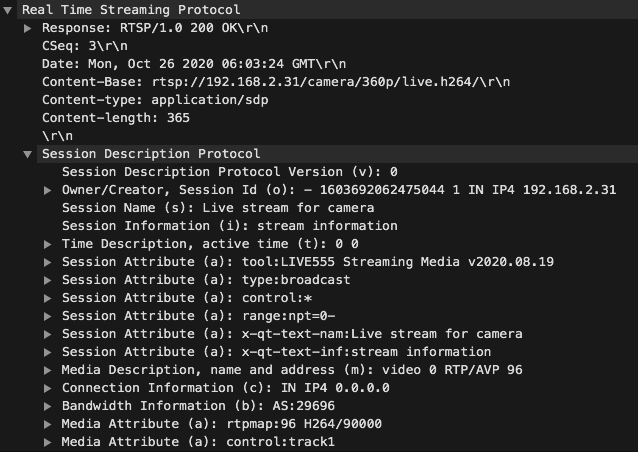

The server will respond with content in SDP format.

A very friendly feature of Wireshark is that it decodes the protocol for you. Can’t read SDP? Wireshark will tell you clearly what each field means. For a detailed explanation of the SDP protocol, see the description on the wiki.

Note the line rtpmap:96 H264/90000, which indicates that payload type 96 of this source is encoded in H264 and that the sampling frequency (clock rate) is 90000 Hz.

As above, the server will show that the actual video content of this source is in MP4 format, with video on channel 0 and audio on channel 1.
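As a rough illustration of how the rtpmap attribute can be read programmatically, the following sketch extracts the payload type, encoding name, and clock rate from an SDP body. The sample SDP text is invented for the example (it is not the camera's actual response), and parse_rtpmap is a hypothetical helper.

```python
def parse_rtpmap(sdp: str) -> dict:
    """Map each payload type to (encoding name, clock rate in Hz)."""
    result = {}
    for line in sdp.splitlines():
        line = line.strip()
        if not line.startswith("a=rtpmap:"):
            continue
        # Form: a=rtpmap:<payload type> <encoding>/<clock rate>[/<params>]
        pt, _, encoding = line[len("a=rtpmap:"):].partition(" ")
        name, rate, *_ = encoding.split("/")
        result[int(pt)] = (name, int(rate))
    return result


# Invented SDP fragment with one video and one audio stream.
sample_sdp = """v=0
m=video 0 RTP/AVP 96
a=rtpmap:96 H264/90000
m=audio 0 RTP/AVP 97
a=rtpmap:97 MPEG4-GENERIC/16000/1
"""
codecs = parse_rtpmap(sample_sdp)
```

The clock rate found here is the value needed later when converting RTP timestamps to seconds.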

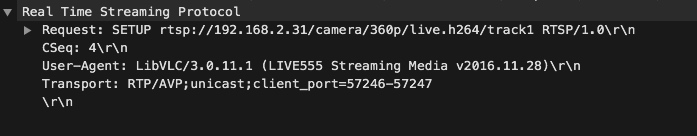

SETUP request

Now we get to the SETUP step, which is equivalent to initialization (if the source has both audio and video, SETUP has to be sent twice, once per stream).

Here is an explanation of the Transport header:

- RTP/AVP: the RTP A/V Profile. The trailing /UDP is omitted because UDP is the default; RTP/AVP/TCP would mean that RTP is transmitted over TCP.

- unicast: one-to-one transmission, as opposed to multicast, which is one-to-many.

- client_port=57246-57247: the client has opened ports 57246 and 57247 for RTP and RTCP data respectively.
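The Transport value can be taken apart with a few lines of Python. This is only a sketch under the assumption that the header has the simple semicolon-separated shape shown above; parse_transport is a hypothetical helper, not part of any library. Note that the second port of the client_port pair is the RTCP port.

```python
def parse_transport(value: str) -> dict:
    """Split a Transport header value into protocol, flags, and ports."""
    parts = [p.strip() for p in value.split(";") if p.strip()]
    info = {"protocol": parts[0], "params": {}}
    for part in parts[1:]:
        key, sep, val = part.partition("=")
        # Flags such as "unicast" have no value; store them as True.
        info["params"][key] = val if sep else True
    # RTP/AVP with no suffix means UDP; RTP/AVP/TCP means TCP.
    is_tcp = parts[0].upper().endswith("/TCP")
    info["lower_transport"] = "TCP" if is_tcp else "UDP"
    ports = info["params"].get("client_port")
    if isinstance(ports, str):
        rtp, _, rtcp = ports.partition("-")
        info["client_rtp_port"] = int(rtp)
        # If only one port is given, assume RTCP is on the next port.
        info["client_rtcp_port"] = int(rtcp) if rtcp else int(rtp) + 1
    return info


transport = parse_transport("RTP/AVP;unicast;client_port=57246-57247")
```

With the value from the capture, the RTP port is the even one (57246) and the RTCP port is the odd one right after it (57247).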

Next is the server side response.

Note the Session header here: the client sends it along with the subsequent playback control requests, and it is used to tell different playback sessions apart.

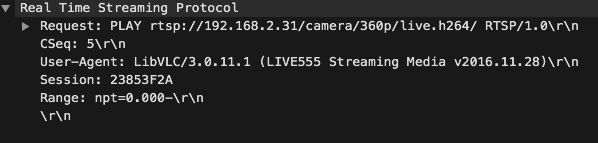

PLAY request

Next comes the actual playback request.

Here Range indicates the range of playback times. The npt=0.000- in the above figure starts at 0 and has no end time, which is what you would expect for a live video feed.

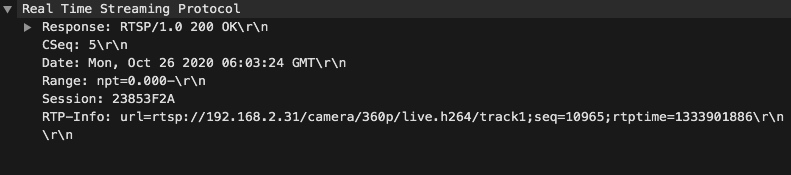

The server responds with an RTP-Info header, whose seq and rtptime values give the sequence number and the RTP timestamp of the first RTP packet of the stream.
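A small sketch of reading that header follows. The header value is invented for illustration (the URLs and numbers are assumptions), and the parser takes the simple route of splitting on commas and semicolons, which would not survive URLs that contain those characters.

```python
def parse_rtp_info(value: str) -> list:
    """Parse an RTP-Info header value into one dict per stream."""
    streams = []
    for entry in value.split(","):
        fields = {}
        for part in entry.strip().split(";"):
            # partition splits on the first "=", so "=" inside the URL is kept.
            key, _, val = part.partition("=")
            fields[key.strip()] = val.strip()
        streams.append({
            "url": fields.get("url"),
            "seq": int(fields["seq"]) if "seq" in fields else None,
            "rtptime": int(fields["rtptime"]) if "rtptime" in fields else None,
        })
    return streams


# Made-up header value with one entry per stream.
sample_rtp_info = (
    "url=rtsp://192.168.1.100/stream/trackID=0;seq=4321;rtptime=123456789,"
    "url=rtsp://192.168.1.100/stream/trackID=1;seq=100;rtptime=987654"
)
streams = parse_rtp_info(sample_rtp_info)
```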

If you look back at the overview above, you’ll see that the server starts sending the RTP and RTCP data for actual playback as soon as it receives the PLAY request.

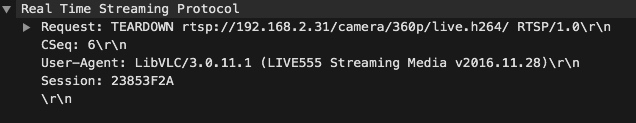

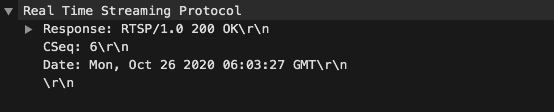

TEARDOWN request

This is the request that stops playback. It is very simple, so I won’t go into details here.

RTCP Protocol

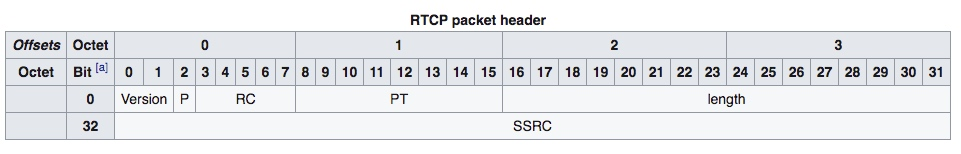

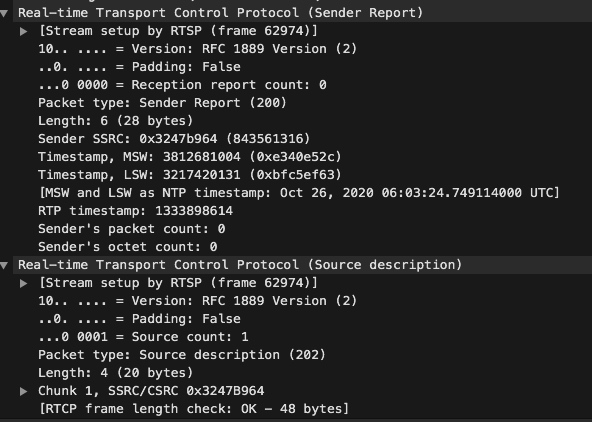

In contrast to the text protocol of RTSP, RTCP is a binary protocol with the following protocol header.

- Version: version number, consistent with the version number in RTP, currently 2.

- P (Padding): indicates whether the packet contains padding bytes at the end (used, for example, when packets are encrypted).

- RC (Reception report count): the number of reception report blocks contained in the packet.

- PT (Packet type): the type of the packet; currently there are sender report (SR), receiver report (RR), source description (SDES), goodbye (BYE), and application-defined (APP).

- Length: the length of the current packet, counted in 32-bit words minus one.

- SSRC: synchronization source identifier.
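The header fields above can be unpacked with Python's struct module. This is a minimal sketch that reads only the first 8 bytes (the common header plus the sender SSRC, as laid out for SR and RR packets); the sample bytes are hand-built for the example rather than taken from the capture.

```python
import struct

RTCP_TYPES = {200: "SR", 201: "RR", 202: "SDES", 203: "BYE", 204: "APP"}


def parse_rtcp_header(data: bytes) -> dict:
    """Decode the first 8 bytes of an RTCP SR/RR packet."""
    first, packet_type, length, ssrc = struct.unpack("!BBHI", data[:8])
    return {
        "version": first >> 6,            # top 2 bits
        "padding": bool(first & 0x20),    # next bit
        "count": first & 0x1F,            # low 5 bits (RC)
        "packet_type": RTCP_TYPES.get(packet_type, packet_type),
        # The length field counts 32-bit words minus one.
        "length_bytes": (length + 1) * 4,
        "ssrc": ssrc,
    }


# Hand-built header: V=2, P=0, RC=0, PT=200 (SR), length=6, made-up SSRC.
sample_rtcp = struct.pack("!BBHI", 0x80, 200, 6, 0x12345678)
rtcp_header = parse_rtcp_header(sample_rtcp)
```

A sender report without reception report blocks is 28 bytes long, which is why the length field in the sample is 6.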

Taking the actual example, we will see that the server accepts the PLAY request and sends an RTCP Sender Report immediately afterwards.

The point to note is that it carries two timestamps. One is the NTP (Network Time Protocol) timestamp, which uses 8 bytes to represent the absolute time. The other is the RTP timestamp, which is a relative time and corresponds to the same instant as the NTP timestamp. Together they can be used to calculate the absolute time of any RTP packet: take the difference between the RTP timestamp of the packet and the RTP timestamp in the Sender Report, divide it by the video sampling frequency, and add the absolute time from the Sender Report. (Don’t know the sampling frequency? Go back and look at the response to the DESCRIBE request for RTSP above.)
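The calculation can be sketched in a few lines of Python. The numbers below are invented to keep the arithmetic easy to follow, and the function names are hypothetical; the only fixed constant is the offset between the NTP epoch (1900) and the Unix epoch (1970).

```python
NTP_UNIX_OFFSET = 2208988800  # seconds between 1900-01-01 and 1970-01-01


def ntp_to_unix(ntp_seconds: int, ntp_fraction: int) -> float:
    """Convert the two 32-bit halves of an NTP timestamp to Unix time."""
    return ntp_seconds - NTP_UNIX_OFFSET + ntp_fraction / 2**32


def rtp_packet_time(packet_rtp_ts: int, sr_rtp_ts: int,
                    sr_unix_time: float, clock_rate: int) -> float:
    """Absolute time of an RTP packet, given the last Sender Report."""
    # RTP timestamps are 32-bit and wrap around, so treat the
    # difference as a signed 32-bit value.
    diff = (packet_rtp_ts - sr_rtp_ts) & 0xFFFFFFFF
    if diff >= 0x80000000:
        diff -= 0x100000000
    return sr_unix_time + diff / clock_rate


# Made-up Sender Report: Unix time 1700000000.5, RTP timestamp 90000.
sr_time = ntp_to_unix(NTP_UNIX_OFFSET + 1_700_000_000, 2**31)
# A packet 45000 ticks later at a 90000 Hz clock is half a second later.
packet_time = rtp_packet_time(135_000, 90_000, sr_time, 90_000)
```

With a 90000 Hz clock, 45000 ticks correspond to 0.5 seconds, so the packet in the example lands half a second after the Sender Report.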

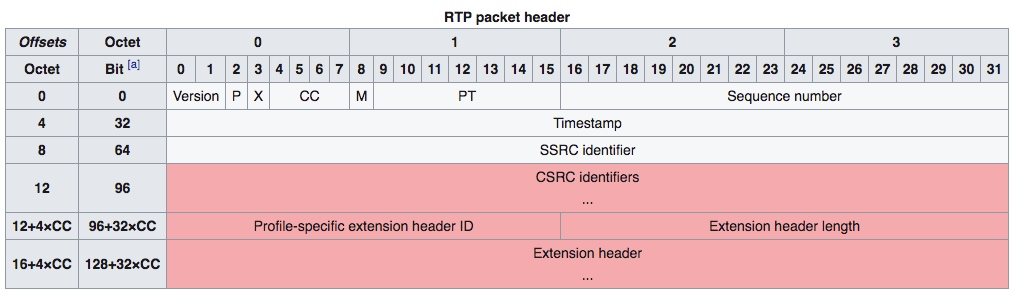

RTP Protocol

RTP is also a binary protocol, and its protocol header is as follows.

- Version: version number, same as RTCP; currently 2.

- P (Padding): same as RTCP.

- X (Extension): indicates whether a header extension is present.

- CC (CSRC count): number of CSRCs.

- M (Marker): marker bit, e.g. to mark the boundary of a video frame.

- PT (Payload type): the type of the packet content; there are many specific types, see RFC 3551 if you want the details.

- Sequence number: used to detect packet loss and out-of-order packets.

- Timestamp: timestamp, related to the RTCP timestamps above.

- SSRC: synchronization source identifier.

- CSRC: contributing source identifier.

- Header extension: optional extension of the packet header.
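As with RTCP, the fixed 12-byte RTP header can be decoded with struct. The sketch below is an illustration under simple assumptions: it reads the fixed header and any CSRC entries but does not interpret a header extension, and the sample packet is hand-built with made-up values.

```python
import struct


def parse_rtp_header(data: bytes) -> dict:
    """Decode the fixed RTP header and the CSRC list."""
    b0, b1, seq, timestamp, ssrc = struct.unpack("!BBHII", data[:12])
    cc = b0 & 0x0F
    csrcs = list(struct.unpack(f"!{cc}I", data[12:12 + 4 * cc]))
    return {
        "version": b0 >> 6,
        "padding": bool(b0 & 0x20),
        "extension": bool(b0 & 0x10),
        "csrc_count": cc,
        "marker": bool(b1 & 0x80),
        "payload_type": b1 & 0x7F,
        "sequence_number": seq,
        "timestamp": timestamp,
        "ssrc": ssrc,
        "csrcs": csrcs,
        # Offset of the payload when no header extension is present.
        "payload_offset": 12 + 4 * cc,
    }


# Hand-built packet: V=2, marker set, payload type 96, made-up values.
sample_rtp = struct.pack("!BBHII", 0x80, 0x80 | 96, 1000, 90_000, 0x12345678)
sample_rtp += b"payload"
rtp_header = parse_rtp_header(sample_rtp)
```

Payload type 96 is in the dynamic range, so its meaning comes from the rtpmap line in the SDP rather than from a fixed table.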

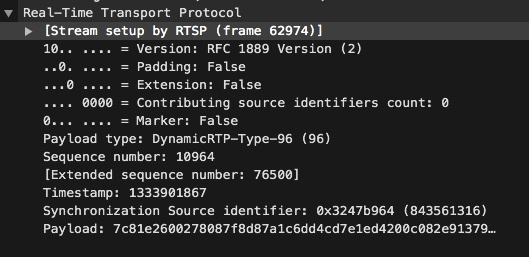

Let’s pick the first RTP packet and look at it. We can see that the payload type is 96, which the rtpmap line in the DESCRIBE response mapped to H264.

Summary

The RTSP protocol itself is not complicated. In the next article, we will talk about RTSP in practice.