1. Chaos Mesh

Chaos Mesh is a cloud-native chaos engineering platform that orchestrates chaos in Kubernetes environments. It lets users simulate real-world anomalies in development, testing, and production environments and helps them identify potential system problems.

Chaos Mesh was open-sourced by PingCAP. It originated as the core testing platform of TiDB, so from its first release it inherited much of TiDB's accumulated testing experience. It is designed mainly for Kubernetes and can be deployed quickly in the cluster under test without modifying the deployment logic of the system under test (SUT).

Chaos Mesh defines a large number of CRDs in Kubernetes; users orchestrate faults by creating, editing, and deleting objects of these types.

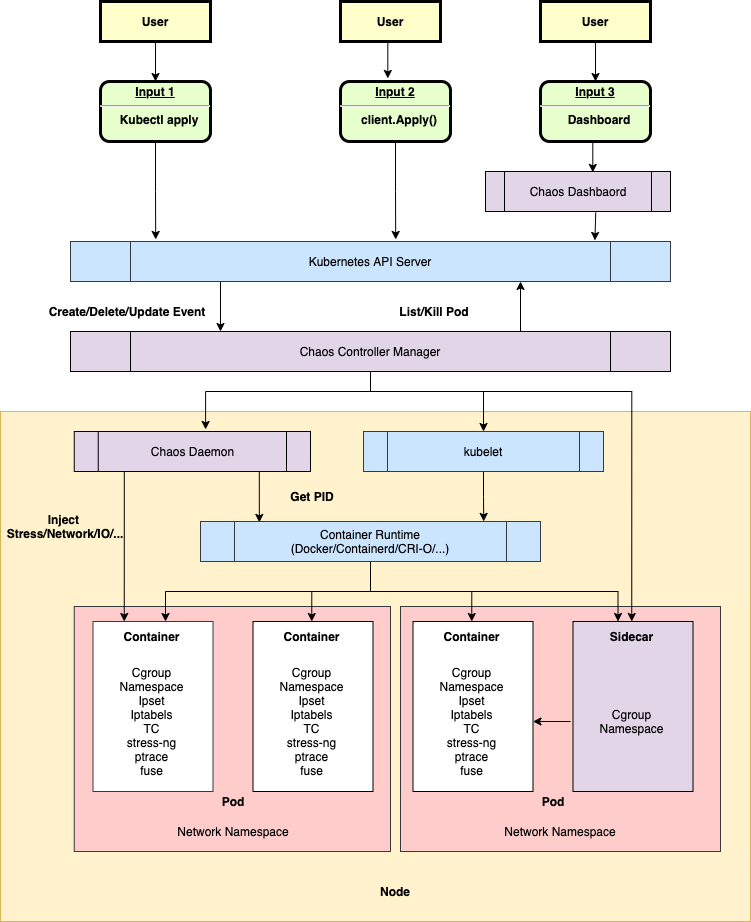

Chaos Mesh consists of the following three main components.

- Chaos Dashboard: The visual component, through which users manipulate and observe chaos experiments. Chaos Dashboard also provides an RBAC permission management mechanism.

- Chaos Controller Manager: The core logic component of Chaos Mesh, mainly responsible for scheduling and managing chaos experiments. It contains several CRD controllers, such as the Workflow Controller, the Scheduler Controller, and the controllers for the various fault types.

- Chaos Daemon: The main execution component of Chaos Mesh. Chaos Daemon runs as a DaemonSet and is privileged by default (this can be turned off). It interferes with specific network devices, file systems, kernels, and so on by entering the namespaces of the target Pod.

2. Quick Start

1. Helm-based Deployment

Chaos Mesh officially provides the Helm package for quick installation on Kubernetes.
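As a rough illustration, the sketch below assembles the Helm commands for a basic installation and prints them as shell lines. The repository URL and chart name are the ones published by the Chaos Mesh project; the release name, the namespace, and the containerd values are assumptions chosen for the example, so adjust them to the cluster at hand.

```python
import shlex

# Chart repository and chart name as published by the Chaos Mesh project.
REPO_NAME = "chaos-mesh"
REPO_URL = "https://charts.chaos-mesh.org"
CHART = "chaos-mesh/chaos-mesh"


def helm_install_commands(release="chaos-mesh", namespace="chaos-mesh", values=None):
    """Return the Helm commands (as argv lists) for a basic installation.

    The release name and namespace defaults are illustrative choices.
    """
    install = [
        "helm", "install", release, CHART,
        "--namespace", namespace, "--create-namespace",
    ]
    # Each chart value becomes one "--set key=value" pair.
    for key, value in (values or {}).items():
        install += ["--set", f"{key}={value}"]
    return [
        ["helm", "repo", "add", REPO_NAME, REPO_URL],
        ["helm", "repo", "update"],
        install,
    ]


commands = helm_install_commands(
    values={
        # Assumed example for a cluster that uses containerd as its runtime.
        "chaosDaemon.runtime": "containerd",
        "chaosDaemon.socketPath": "/run/containerd/containerd.sock",
    }
)
for argv in commands:
    print(shlex.join(argv))
```

Building the commands as argument lists keeps quoting explicit; the printed lines can be reviewed before they are run in a shell.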


Chaos Mesh follows a clear versioning plan: a new version is released every three months, and each version is supported for six months. The current compatibility matrix between Chaos Mesh and Kubernetes versions is available on the official website.

2. Injecting Faults

What most distinguishes Chaos Mesh from other chaos tools is that it describes faults as objects of custom CRDs. The following example defines a NetworkChaos fault that adds a 10ms network delay to a Pod with a specified label.
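A minimal sketch of such a NetworkChaos object, built as a Python dict and printed as JSON (which `kubectl apply -f -` accepts as well as YAML). The object name, the namespace, the `app: web-show` label, and the 30s duration are hypothetical values chosen for illustration.

```python
import json


def network_delay_chaos(name, namespace, labels, latency="10ms", duration="30s"):
    """Build a NetworkChaos manifest that delays traffic of a matching Pod."""
    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "NetworkChaos",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "action": "delay",
            "mode": "one",  # inject into one Pod among those matched
            "selector": {
                "namespaces": [namespace],
                "labelSelectors": labels,
            },
            "delay": {"latency": latency},
            "duration": duration,  # the fault is recovered after this time
        },
    }


# Hypothetical target: Pods labelled app=web-show in the default namespace.
manifest = network_delay_chaos("network-delay", "default", {"app": "web-show"})
print(json.dumps(manifest, indent=2))
```

Deleting the object recovers the fault, so the experiment's lifecycle follows that of the Kubernetes object.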


3. Fault Types

Chaos Mesh supports the injection of different types of faults in Kubernetes Pods and physical machines.

1. Kubernetes Pods

The following types of faults can be injected into Kubernetes Pods.

- PodChaos simulates the failure of a specified Pod or container, including the following scenarios.

  - Pod Failure: Injects a failure into the specified Pod, making it unavailable for a period of time (according to the official documentation, this is achieved by replacing the image).

  - Pod Kill: Kills the specified Pod. To ensure that the Pod is restarted successfully, a ReplicaSet or a similar mechanism needs to be configured.

  - Container Kill: Kills the specified container in the target Pod.

- NetworkChaos simulates network failure scenarios in a cluster, including the following.

  - Partition: Network disconnection and partitioning.

  - Net Emulation: Simulates poor network conditions such as high latency, high packet loss, and packet reordering (relies on the Linux kernel's NET_SCH_NETEM module; on CentOS this requires installing kernel-modules-extra).

  - Bandwidth: Limits the bandwidth of communication between nodes.

- IOChaos simulates file system failure scenarios, including the following.

  - latency: adds a delay to file system calls

  - fault: makes file system calls return an error

  - attrOverride: modifies file attributes

  - mistake: makes file reads or writes produce wrong values

- DNSChaos simulates incorrect DNS responses, such as returning an error or a random IP address when a DNS request is received.

  - random: the DNS service returns a random IP address

  - error: the DNS service returns an error

- TimeChaos simulates time-shifted scenarios. Note that TimeChaos only affects the process with PID 1 in the PID namespace of the container and the child processes of PID 1. For example, processes started by `kubectl exec` are not affected.

- KernelChaos uses BPF to inject I/O- or memory-based faults on the specified kernel path. Although a KernelChaos injection can target one or several Pods, the performance of other Pods on the same host may also be affected to some degree, because all Pods share the same kernel. Enabling KernelChaos requires the following preparations.

  - Linux kernel version >= 4.18

  - The kernel configuration option CONFIG_BPF_KPROBE_OVERRIDE enabled

  - The bpfki.create option turned on during installation

- HTTPChaos simulates a scenario where the HTTP server fails during a request or response. Under the hood, it calls the chaos-tproxy service, which intercepts requests based on iptables-extension; HTTPS is currently not supported. HTTPChaos supports the following scenarios.

  - abort: interrupt the server-side connection

  - delay: inject a delay for the target process

  - replace: replace part of the request or response message

  - patch: add extra content to the request or response message

- JVMChaos simulates JVM application faults via Byteman, mainly supporting the following types of faults.

  - Throwing custom exceptions

  - Triggering garbage collection

  - Adding method delays

  - Specifying method return values

  - Triggering faults through a Byteman configuration file

  - Increasing JVM stress
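To make the fault types above more concrete, here is a minimal sketch that builds PodChaos objects for the three PodChaos scenarios. The field names follow the `chaos-mesh.org/v1alpha1` API as I understand it; the object names, labels, container name, and duration are hypothetical.

```python
import json

VALID_ACTIONS = {"pod-failure", "pod-kill", "container-kill"}


def pod_chaos(name, action, labels, namespace="default",
              duration=None, container_names=None):
    """Build a PodChaos manifest for one of the three PodChaos actions."""
    if action not in VALID_ACTIONS:
        raise ValueError(f"unsupported PodChaos action: {action}")
    if action == "container-kill" and not container_names:
        raise ValueError("container-kill needs at least one container name")

    spec = {
        "action": action,
        "mode": "one",  # act on a single Pod among those matched
        "selector": {"namespaces": [namespace], "labelSelectors": labels},
    }
    if duration:
        spec["duration"] = duration  # e.g. how long a pod-failure lasts
    if container_names:
        spec["containerNames"] = list(container_names)

    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "PodChaos",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


# Hypothetical targets, for illustration only.
failure = pod_chaos("pod-failure-demo", "pod-failure", {"app": "web"}, duration="30s")
kill = pod_chaos("container-kill-demo", "container-kill", {"app": "web"},
                 container_names=["nginx"])
print(json.dumps([failure, kill], indent=2))
```

The other fault types (NetworkChaos, IOChaos, DNSChaos, and so on) follow the same pattern: a `kind`, an `action`, a selector, and action-specific parameters.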

2. Physical Machines

Chaosd is a chaos engineering tool provided by Chaos Mesh (it must be downloaded and deployed separately) for injecting faults into physical machine environments; it also provides fault recovery.

Chaosd offers the following core benefits.

- Ease of use: Chaos experiments are created and managed with simple Chaosd commands.

- Rich fault types: Fault injection is provided at different levels of the physical machine, including process, network, stress, disk, and host, and more features are being added.

- Multiple modes: Chaosd can be used both as a command line tool and as a service, to meet the requirements of different scenarios.

You can use Chaosd to simulate the following types of failures.

- process: Fault injection to a process. Note that when Systemd manages the process, Systemd may automatically restore the service after the process exits.

  - kill: sends a `SIGKILL`, `SIGTERM`, or `SIGSTOP` signal to the specified process

  - stop: sends a `SIGSTOP` signal to the specified process

- network: Fault injection to the physical machine's network, supporting operations such as adding network latency, dropping packets, and corrupting packets. The scenarios are similar to those for containers, but with richer customization, such as specifying the network device.

- stress: Injects stress on the CPU or memory of the physical machine.

  - cpu

  - mem

- disk: Fault injection to the physical machine's disk, supporting operations such as increasing disk read/write load and filling the disk.

- host: Fault injection to the physical machine itself, supporting shutdown and other operations.

  - shutdown: simulates a host shutdown

- JVM: Simulates a JVM application failure via Byteman.

- Time: Simulates time-shifted scenarios.

- File: Simulates file failure scenarios, including adding a file, writing to a file, deleting a file, modifying file permissions, renaming a file, and replacing file data.

1. PhysicalMachine Objects

Users can register any physical machine as a PhysicalMachine object in a Kubernetes cluster via chaosctl, regardless of whether the machine is a node of that cluster.

Users run the Chaosd Server service on the test node. Chaos Mesh communicates with each node's Chaosd Server over HTTP/HTTPS and injects the corresponding type of fault into the specified node, based on the PhysicalMachineChaos object defined by the user.

It is officially recommended that Chaos Mesh and Chaosd Server communicate over HTTPS; users can generate the Chaosd certificates with chaosctl.


Next, start the Chaosd Server service on the test node, and create a PhysicalMachine object that reaches it over HTTP.
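A minimal sketch of what such a PhysicalMachine object might look like, again built as a Python dict and printed as JSON. It assumes that the object's `spec.address` field holds the URL of the Chaosd Server and that the server listens for HTTP on port 31767; the machine name, IP address, and label are hypothetical.

```python
import json


def physical_machine(name, ip, port=31767, scheme="http",
                     namespace="default", labels=None):
    """Build a PhysicalMachine manifest pointing at a Chaosd Server.

    Assumption: spec.address is the URL Chaos Mesh uses to reach Chaosd,
    and 31767 is the HTTP port the Chaosd Server was started with.
    """
    if scheme not in ("http", "https"):
        raise ValueError("scheme must be 'http' or 'https'")
    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "PhysicalMachine",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "labels": dict(labels or {}),
        },
        "spec": {"address": f"{scheme}://{ip}:{port}"},
    }


# Hypothetical machine, for illustration only.
machine = physical_machine("physical-machine-a", "192.0.2.10",
                           labels={"arch": "amd64"})
print(json.dumps(machine, indent=2))
```

For HTTPS, the scheme and port would change to match the certificates and the port configured on the Chaosd Server.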

2. Injecting Physical Machine Faults

Chaos Mesh allows physical machine faults to be injected in three ways.

- Injecting a fault on the current physical machine via the chaosd command line tool

- Running the chaosd service in Server mode, where users inject faults by sending HTTP requests

- Injecting faults into a given node by defining a PhysicalMachineChaos object on Kubernetes
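The third way can be sketched as follows. This is an illustration under assumptions rather than a reference: it assumes that PhysicalMachineChaos takes an `action` such as `network-delay`, a parameter block under the same key, and a `physicalMachines` selector that maps a namespace to PhysicalMachine names. The machine name, network device, and latency are hypothetical.

```python
import json


def physical_network_delay(name, machines, device, latency,
                           namespace="default", duration="10m"):
    """Build a PhysicalMachineChaos manifest that delays a machine's network.

    `machines` maps a namespace to the names of registered PhysicalMachine
    objects (assumed selector layout).
    """
    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "PhysicalMachineChaos",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "action": "network-delay",
            "mode": "one",
            "selector": {"physicalMachines": machines},
            # Action-specific parameters live under a key named after the action.
            "network-delay": {"device": device, "latency": latency},
            "duration": duration,
        },
    }


# Hypothetical target machine and network device.
chaos = physical_network_delay(
    "physical-network-delay",
    machines={"default": ["physical-machine-a"]},
    device="eth0",
    latency="100ms",
)
print(json.dumps(chaos, indent=2))
```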

4. Fault Scheduling

1. Defining Fault Ranges

Users can set selectors in a chaos object to limit the scope in which a fault takes effect. The following types of selectors are currently supported.

- Label Selector: Specify the labels that the target Pod of the experiment must have.

- Expression Selector: Specify a custom set of label rules that the target Pod must satisfy.

- Annotation Selector: Same as Label Selector, but for the annotations of the Pod.

- Field Selector: Specify fields of the target Pod, e.g., directly specify the Pod's name or nodeIP.

- PodPhase Selector: Specify the phase of the target Pod. The supported phases are Pending, Running, Succeeded, Failed, and Unknown.

- Node Selector: Limit the scope of fault injection by the labels of the Node on which the Pod runs.

- Node List Selector: Specify the Nodes on which the target Pod may run.

- Pod List Selector: Specify the namespace and Pod list of the target Pods, i.e., filter by namespace and Pod name.

- Namespace Selector: Specify the namespaces in which the experiment takes effect.

- Physical Machine List Selector: Filter by the names of the registered PhysicalMachine objects.
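The selector types above can be sketched as a small helper that assembles the `selector` block of a chaos object. The field names on the left are the ones I believe the Chaos Mesh selector spec uses for each selector type; the namespaces, labels, and Pod names in the usage example are hypothetical.

```python
import json


def build_selector(namespaces=None, labels=None, expressions=None,
                   annotations=None, fields=None, pod_phases=None,
                   node_labels=None, nodes=None, pods=None):
    """Assemble a selector block, keeping only the selectors that were set."""
    candidates = {
        "namespaces": namespaces,            # Namespace Selector
        "labelSelectors": labels,            # Label Selector
        "expressionSelectors": expressions,  # Expression Selector
        "annotationSelectors": annotations,  # Annotation Selector
        "fieldSelectors": fields,            # Field Selector
        "podPhaseSelectors": pod_phases,     # PodPhase Selector
        "nodeSelectors": node_labels,        # Node Selector
        "nodes": nodes,                      # Node List Selector
        "pods": pods,                        # Pod List Selector
    }
    return {key: value for key, value in candidates.items() if value}


# Hypothetical scope: running "web" Pods in the "shop" namespace whose
# "tier" label is either "frontend" or "cache".
selector = build_selector(
    namespaces=["shop"],
    labels={"app": "web"},
    expressions=[{"key": "tier", "operator": "In", "values": ["frontend", "cache"]}],
    pod_phases=["Running"],
)
print(json.dumps(selector, indent=2))

# A Pod List Selector names Pods directly, grouped by namespace.
by_name = build_selector(pods={"shop": ["web-0", "web-1"]})
print(json.dumps(by_name, indent=2))
```

Combining several selectors narrows the scope, since a Pod has to satisfy all of them to be selected.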

Chaos Mesh supports namespace protection. The feature is based on a “whitelist” mechanism: the user adds the following annotation to each Namespace in which fault injection is allowed.
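As a sketch, the annotation used for this purpose is, to my knowledge, `chaos-mesh.org/inject: enabled`. The snippet below builds a Namespace manifest carrying it; the namespace name is hypothetical.

```python
import json

# Annotation that marks a Namespace as allowed for fault injection
# (stated from memory of the Chaos Mesh documentation).
INJECT_ANNOTATION = "chaos-mesh.org/inject"


def injectable_namespace(name):
    """Build a Namespace manifest that opts in to chaos injection."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "annotations": {INJECT_ANNOTATION: "enabled"},
        },
    }


namespace = injectable_namespace("chaos-testing-target")  # hypothetical name
print(json.dumps(namespace, indent=2))
```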


If a Pod matched by the selectors above runs in a Namespace that is not authorized for fault injection, the injection fails.

The namespace protection mechanism is turned off by default. It is turned on by setting controllerManager.enableFilterNamespace=true in the Chaos Mesh configuration.

2. Chaos Mesh Task Flow

Chaos testing with Chaos Mesh is not limited to injecting a single fault: user-defined Workflow objects orchestrate multiple faults or custom tasks.

The Workflow type is relatively simple, as follows.
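A minimal sketch of a Workflow object, assuming the `entry`/`templates` layout of the `chaos-mesh.org/v1alpha1` API: a Serial entry node runs a NetworkChaos node and then a Suspend node. All names, labels, and deadlines are hypothetical.

```python
import json


def serial_workflow(name, namespace, labels):
    """Build a Workflow that injects a network delay and then pauses."""
    inject_delay = {
        "name": "inject-delay",
        "templateType": "NetworkChaos",
        "deadline": "30s",  # how long this node is allowed to run
        "networkChaos": {
            "action": "delay",
            "mode": "all",
            "selector": {"namespaces": [namespace], "labelSelectors": labels},
            "delay": {"latency": "10ms"},
        },
    }
    pause = {"name": "pause", "templateType": "Suspend", "deadline": "10s"}
    entry = {
        "name": "entry",
        "templateType": "Serial",
        "deadline": "120s",
        "children": ["inject-delay", "pause"],  # run in this order
    }
    templates = [entry, inject_delay, pause]

    # Sanity check: every child must refer to a defined template.
    defined = {template["name"] for template in templates}
    missing = [child for child in entry["children"] if child not in defined]
    if missing:
        raise ValueError(f"undefined templates: {missing}")

    return {
        "apiVersion": "chaos-mesh.org/v1alpha1",
        "kind": "Workflow",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"entry": "entry", "templates": templates},
    }


workflow = serial_workflow("delay-then-pause", "default", {"app": "web"})
print(json.dumps(workflow, indent=2))
```

The `entry` field names the template at which execution starts; every other template is reached through a `children` list.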

Each object in the templates list represents a node in the task flow. These nodes include the following types.

- EmbedChaos: Injects a fault of some type within the specified scope.

- Task: A custom task. Task supports the full corev1.Container definition, so the user can define the container image, start command, volume mounts, probes, working directory, and so on. Chaos Mesh creates a single-container Pod from this definition. When the task container ends, a ConditionalBranch can decide whether to continue with other steps.

- Logical nodes:

  - Serial: Runs the templates specified in the children list serially.

  - Parallel: Runs the templates specified in the children list in parallel.

  - Suspend: Suspends the workflow for a specified time.

- Schedule: Injects faults on a timed schedule; such faults can also be created separately by defining Schedule objects.

- StatusCheck: Sends an HTTP request and, based on the returned status code, decides whether to terminate or continue the task flow.

Task does not support Pod-related definitions, so we cannot select the Node on which the Pod will run or specify a network namespace.

FAQ

- When Docker is used as the container runtime (CRI) for Kubernetes, chaos-daemon uses Docker client API version 1.40. If the Docker version installed on the physical machine is too old, fault injection fails. In this case, you can set the Docker API version used by chaos-daemon when installing with Helm by setting `chaosDaemon.env.DOCKER_API_VERSION="1.40"`.

- If you configure `controllerManager.enableFilterNamespace=true`, you need to restart the Chaos Mesh containers for the configuration to take effect.