1. Container-based Serverless cannot support the shape of next-generation applications

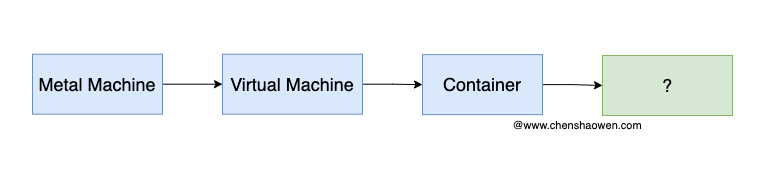

As shown above, the form of the application runtime keeps changing.

From bare-metal machines to virtual machines, applications were freed from the limits of local server counts and server-room stability, and gained better resiliency and availability.

From virtual machines to containers, applications were freed from the limits of the operating system and configuration drift, and gained better portability and scalability.

What is the next runtime? Many articles say Serverless, but Serverless is not itself a runtime form. It is a way of using a runtime, a software architecture. Here is a typical traffic diagram for a Serverless system.

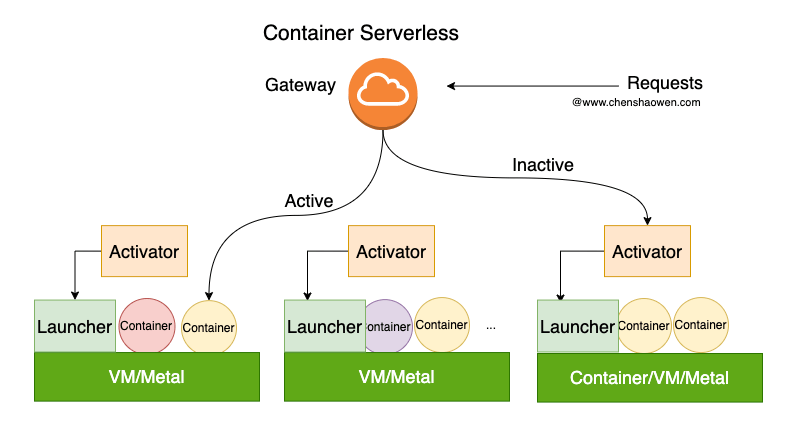

After Container Serverless receives traffic, there are two cases:

- Active: a Ready container already exists, so the traffic is forwarded to it directly.

- Inactive: no Ready container exists, so the traffic is forwarded to the Activator first. The Activator cold starts a container and then forwards the traffic to it.

This matches the way Kubernetes forwards traffic through Pods, Services, and Ingress, except that it adds an Activator to carry cold-start traffic.
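The two cases above can be sketched as a small routing function. This is only an illustration of the decision, not the code of any real platform: the `Service` and `Activator` classes, the `route` function, and the container naming are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    """Hypothetical service record: a name plus its Ready containers."""
    name: str
    ready_containers: list = field(default_factory=list)


class Activator:
    """Hypothetical stand-in for the component that handles cold starts."""

    def cold_start(self, service: Service) -> str:
        # A real platform would schedule a container and wait for readiness.
        # Here we only record a new container name as Ready.
        container = f"{service.name}-container-{len(service.ready_containers)}"
        service.ready_containers.append(container)
        return container


def route(service: Service, request: str, activator: Activator) -> tuple:
    """Return (path taken, target container, request)."""
    if service.ready_containers:
        # Active: forward straight to an existing Ready container.
        return ("direct", service.ready_containers[0], request)
    # Inactive: go through the Activator, which cold starts a container.
    container = activator.cold_start(service)
    return ("via-activator", container, request)
```

The first request to an idle service takes the Activator path and pays the cold-start cost. Later requests find the Ready container and are forwarded directly.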

Elasticity also relies on Kubernetes capabilities, through autoscaling policies such as HPA (Horizontal Pod Autoscaler), KPA (Knative Pod Autoscaler), and KEDA.
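As a rough illustration of how such a policy decides on a replica count, the sketch below follows the scaling rule described in the Kubernetes HPA documentation: desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance of 1.0. The function name, the default bounds, and the sample numbers are assumptions made for this example. KPA and KEDA use their own logic, which is not shown here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Sketch of an HPA-style decision; names and defaults are illustrative."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp the result to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 2 replicas at 200% of the target load: scale out.
    print(desired_replicas(2, current_metric=200, target_metric=100))
    # 4 replicas at 50% of the target load: scale in.
    print(desired_replicas(4, current_metric=50, target_metric=100))
```

With `min_replicas=1` this sketch never reaches zero, which is why scale to zero needs an extra component such as the Activator described above.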

Kubernetes can be used in three ways:

- Fully managed, where both the master and the workers are handed over to the vendor

- Semi-managed, where the master is handed over to the vendor and you maintain the workers yourself

- Self-built, where you build your own Kubernetes cluster on top of IaaS

With a fully managed Kubernetes, such as EKS, it is easy to achieve the elasticity of Serverless.

This form of Serverless is not revolutionary; it is just a change in usage.

The other form is FaaS Serverless, where the unit of focus is the function rather than the container. It usually needs an external BaaS service to store its state.

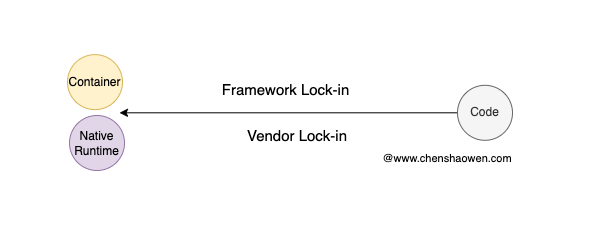

As shown above, there are two forms of FaaS Serverless:

- Container

- Native Runtime

FaaS in container form leverages the power of Kubernetes, while FaaS on a native runtime has limited language support, typically scripting languages such as TypeScript, JavaScript, and Python.

More critically, the FaaS form of Serverless is too customized. You are either bound to a vendor's FaaS service (vendor lock-in) or bound to a platform's application framework, and can only use the specified languages, frameworks, and components. These limitations are severe. There may still be opportunities in some segments, such as offline computing, data processing, and data analytics.

In general, Container Serverless is not disruptive, and FaaS Serverless is not generic enough to support the next application form change.

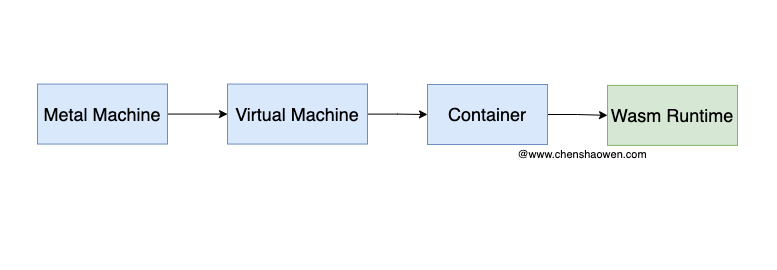

2. The future king is WebAssembly

WebAssembly is a new runtime target: a binary format that runs in browsers, Node.js, Deno, WASI-compatible runtimes, and other environments.

In fact, WebAssembly can run anywhere a WebAssembly runtime and system interface are implemented. If the operating system integrates a WebAssembly runtime, WebAssembly can run directly on the operating system. WebAssembly has the opportunity to become the new delivery format after the container.

WebAssembly Serverless has no Scale To Zero or cold start issues, and offers significant cost and performance benefits.

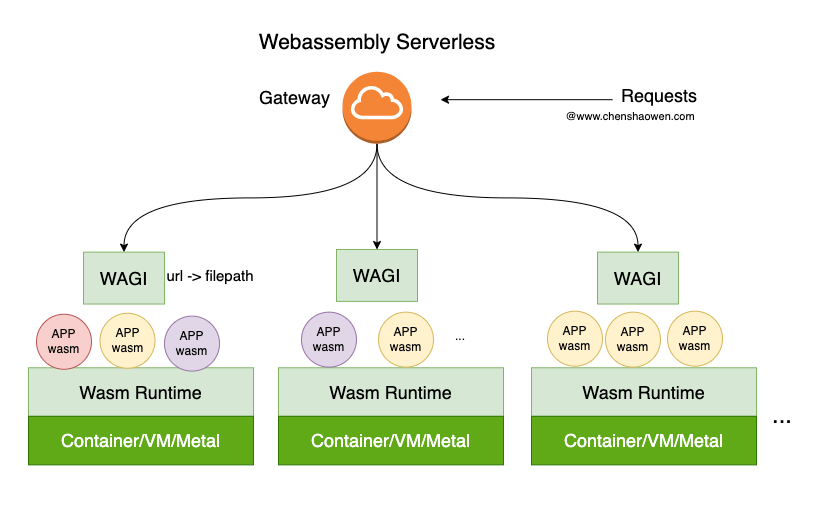

With WAGI, HTTP requests can be converted into WebAssembly calls. The runtime is no longer a container directly, but a WebAssembly runtime. It does not matter whether the underlying layer is a container, a VM, or bare metal. WebAssembly is free from these limitations and can even run on an IoT device. All that is needed is for these devices to form a huge pool of compute and storage.
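To make the conversion more concrete, here is a minimal sketch of the CGI-style mapping that WAGI is modeled on: request data is passed to the module as environment variables and standard input, and the module writes headers, a blank line, and a body to standard output. No WebAssembly runtime is involved here. `fake_module` is a plain Python stand-in for a compiled module, and all function names are assumptions made for this example.

```python
def request_to_cgi_env(method: str, path: str, query: str, headers: dict) -> dict:
    """Map an HTTP request onto CGI-style environment variables."""
    env = {
        "REQUEST_METHOD": method,
        "PATH_INFO": path,
        "QUERY_STRING": query,
    }
    for name, value in headers.items():
        # CGI convention: "User-Agent" becomes "HTTP_USER_AGENT".
        env["HTTP_" + name.upper().replace("-", "_")] = value
    return env


def parse_cgi_output(raw: str) -> tuple:
    """Split CGI-style output into (headers, body) at the first blank line."""
    head, _, body = raw.partition("\n\n")
    headers = {}
    for line in head.splitlines():
        key, _, value = line.partition(":")
        headers[key.strip().lower()] = value.strip()
    return headers, body


def fake_module(env: dict, stdin: str) -> str:
    """Stand-in for a WebAssembly module; a real one would be invoked by a runtime."""
    return "Content-Type: text/plain\n\n" + f"Hello from {env['PATH_INFO']}"


def handle(method: str, path: str, query: str = "", headers=None, body: str = "") -> tuple:
    """Convert the request, call the module, and convert its output back."""
    env = request_to_cgi_env(method, path, query, headers or {})
    raw = fake_module(env, body)
    return parse_cgi_output(raw)
```

Because the module only sees environment variables, standard input, and standard output, the same compiled artifact can be served from a container, a VM, or a small device, as long as a runtime on that host performs this mapping.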

WebAssembly Serverless will not have Vendor Lock-in, and code written in any language that compiles to WebAssembly will run on WebAssembly Serverless.

3. The front-end takes on back-end work, and the back-end becomes lightweight

Today, most computing power is concentrated in the back-end. The front-end is a simple UI layer that uses very little computing power, mostly for simple rendering and event handling.

But there have been some changes in recent years.

- Front-end computing power keeps growing, as the performance of phones and computers keeps improving

- Front-end engineering capabilities are getting stronger and stronger, and can replace the back-end to complete many tasks, such as document rendering (WPS online), image processing (PS, CAD), video processing, etc.

- Back-end computing power is getting more and more costly, and more machines are needed

- Back-end manpower is very expensive, and back-end salaries are generally much higher than front-end

So I drew the following diagram.

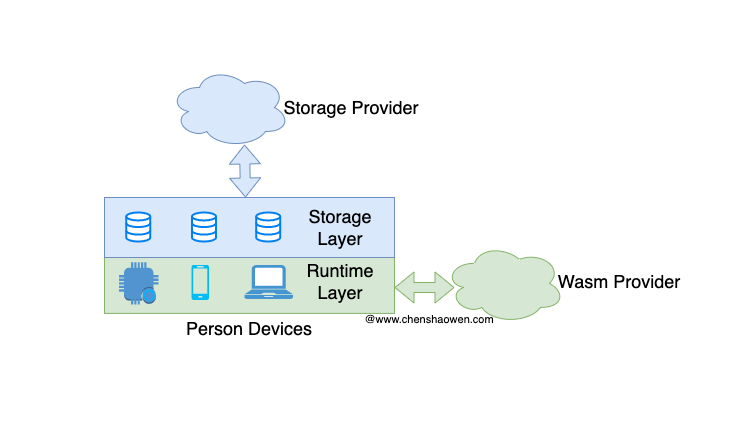

Compute and storage are pushed down to the front-end, where the front-end includes IoT devices, phones, computers, browsers, and other terminals close to the user. The back-end trends toward being lightweight and only needs to provide some basic BaaS services.

This is consistent with the idea of Serverless = FaaS + BaaS, but with FaaS in the front-end and BaaS in the back-end. That is what I mean by the front-end taking on back-end work and the back-end becoming lightweight.

The front-end becomes heavy and provides the computing power. The back-end becomes lightweight and provides only basic services, or even just static file distribution.

As shown above, the back-end only provides an entry point. After the front-end is loaded and the WebAssembly is downloaded, the application no longer depends on the back-end. You do not even need to develop a separate back-end; you can use a CDN directly.

4. Distributed applications will be easier to develop

The previous sections talked about the shape of next-generation applications. So what is that shape? Distributed applications.

There is a good example in the blockchain field: the DApp. A DApp has two characteristics.

- Decentralized

- Distributed

Decentralized means that there is no centralized server, and distributed means that the data of the application is distributed on multiple nodes.

The biggest problem of the current web world is centralization. The traffic, attention, and data on the Internet are concentrated in a few companies, and with that, wealth is concentrated in the hands of a few people. This is the source of many conflicts.

The emergence of distributed applications can solve these problems.

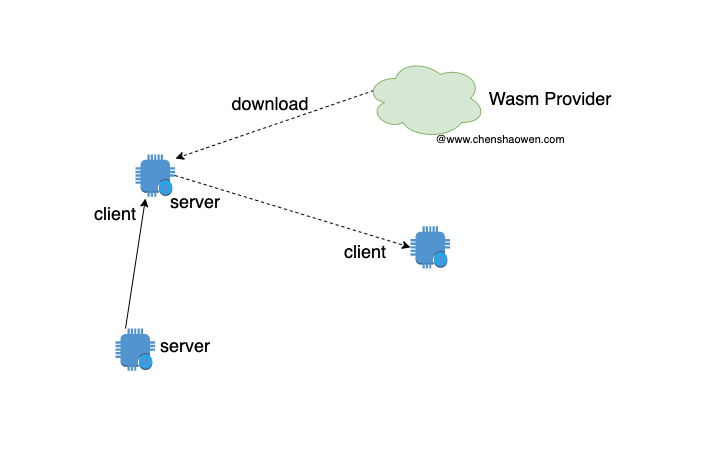

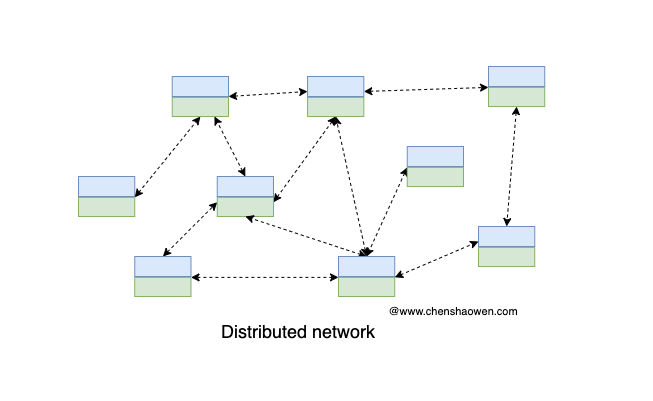

As shown above, each device is both a consumer and a producer. Each device can provide computing power, storage, and network, and can also consume computing power, storage, and network.
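One way to picture devices that both provide and consume storage is the toy network below. It is a sketch under stated assumptions, not a description of any real DApp platform: the `Node` and `Network` classes are hypothetical, and rendezvous (highest-random-weight) hashing is simply one technique chosen here to decide which node holds a key without a central coordinator.

```python
import hashlib


class Node:
    """Hypothetical device that can store data for the network."""

    def __init__(self, name: str):
        self.name = name
        self.store = {}


class Network:
    """Toy network where any caller can write to and read from the peers."""

    def __init__(self, nodes):
        self.nodes = list(nodes)

    def _owner(self, key: str) -> Node:
        # Rendezvous hashing: every node gets a score for the key,
        # and the node with the highest score owns it.
        def weight(node: Node) -> str:
            return hashlib.sha256(f"{node.name}:{key}".encode()).hexdigest()
        return max(self.nodes, key=weight)

    def put(self, key: str, value: bytes) -> str:
        """Store the value on the owning node and return that node's name."""
        owner = self._owner(key)
        owner.store[key] = value
        return owner.name

    def get(self, key: str):
        """Read the value back from whichever node owns the key."""
        return self._owner(key).store.get(key)


if __name__ == "__main__":
    net = Network([Node("phone"), Node("laptop"), Node("sensor")])
    print(net.put("photo", b"raw-bytes"))  # name of the node holding the data
    print(net.get("photo"))
```

A real network would also need replication, handling for nodes that join and leave, and access control. The sketch only shows placement.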

At that point, the data belongs to the users themselves rather than being controlled by a centralized server.

With a large enough number of such nodes, a whole new distributed network can form, and applications built on it are the ones that face the future.