I. WebSocket

WebSocket is a two-way communication protocol that uses the HTTP/1.1 protocol in the handshake phase (HTTP/2 is not supported at this time).

The handshake process is as follows.


First, the client sends a special HTTP request to the server with headers like these:

```http
GET /chat HTTP/1.1                           // request line
Host: server.example.com
Upgrade: websocket                           // required
Connection: Upgrade                          // required
Sec-WebSocket-Key: dGhlIHNhbXBsZSBub25jZQ==  // required; a random 16-byte value, base64-encoded
Origin: http://example.com                   // used to stop unauthorized cross-origin scripts from using the browser WebSocket API to talk to the server
Sec-WebSocket-Protocol: chat, superchat      // optional; subprotocol negotiation
Sec-WebSocket-Version: 13
```

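If the server supports this version of WebSocket, it returns a `101 Switching Protocols` response whose headers include `Upgrade: websocket`, `Connection: Upgrade`, and a `Sec-WebSocket-Accept` value derived from the client's `Sec-WebSocket-Key`.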
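The server does not echo the client's key back. RFC 6455 defines `Sec-WebSocket-Accept` as follows: append the fixed GUID `258EAFA5-E914-47DA-95CA-C5AB0DC85B11` to the key, take the SHA-1 digest, and base64-encode it. Below is a minimal standard-library sketch. The helper names are illustrative, and optional headers such as `Sec-WebSocket-Protocol` are left out.

```python
import base64
import hashlib
import os

# Fixed GUID defined by RFC 6455 for the opening handshake.
WS_GUID = "258EAFA5-E914-47DA-95CA-C5AB0DC85B11"


def make_client_key() -> str:
    """Client side: a random 16-byte nonce, base64-encoded."""
    return base64.b64encode(os.urandom(16)).decode("ascii")


def accept_value(client_key: str) -> str:
    """Server side: base64(SHA-1(key + GUID)) for the Sec-WebSocket-Accept header."""
    digest = hashlib.sha1((client_key + WS_GUID).encode("ascii")).digest()
    return base64.b64encode(digest).decode("ascii")


def build_101_response(client_key: str) -> str:
    """Minimal 101 response that completes the upgrade (optional headers omitted)."""
    return (
        "HTTP/1.1 101 Switching Protocols\r\n"
        "Upgrade: websocket\r\n"
        "Connection: Upgrade\r\n"
        f"Sec-WebSocket-Accept: {accept_value(client_key)}\r\n"
        "\r\n"
    )


print(accept_value("dGhlIHNhbXBsZSBub25jZQ=="))  # s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

For the key in the request above, this prints the value from the RFC's own example. The client recomputes that value and checks it before treating the connection as a WebSocket.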

Once the handshake is complete, all subsequent data on the TCP connection is sent as WebSocket frames.

Note that this handshake is not the TCP handshake. It takes place inside an already established TCP connection and upgrades that connection from HTTP/1.1 to WebSocket.
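The following sketch makes the framing concrete. It shows how a single unfragmented text frame is laid out under RFC 6455:

- a byte with the FIN bit and the opcode;
- a byte with the mask bit and a 7-bit length, extended to 16 or 64 bits for larger payloads;
- an optional 4-byte masking key;
- the payload.

Frames sent by a client must be masked, while server frames are not. This is a simplified illustration with hypothetical helper names. It does not handle fragmentation, control frames, or validation.

```python
import os
import struct
from typing import Optional, Tuple

OPCODE_TEXT = 0x1


def encode_text_frame(text: str, mask_key: Optional[bytes] = None) -> bytes:
    """Encode one unfragmented text frame (FIN=1, opcode=0x1).

    Clients must mask what they send, so they pass a random 4-byte mask_key;
    servers send unmasked frames (mask_key=None).
    """
    payload = text.encode("utf-8")
    header = bytearray([0x80 | OPCODE_TEXT])  # FIN bit + opcode
    mask_bit = 0x80 if mask_key is not None else 0x00
    n = len(payload)
    if n < 126:
        header.append(mask_bit | n)  # 7-bit length
    elif n < 1 << 16:
        header.append(mask_bit | 126)  # 16-bit extended length follows
        header += struct.pack("!H", n)
    else:
        header.append(mask_bit | 127)  # 64-bit extended length follows
        header += struct.pack("!Q", n)
    if mask_key is None:
        return bytes(header) + payload
    masked = bytes(b ^ mask_key[i % 4] for i, b in enumerate(payload))
    return bytes(header) + mask_key + masked


def decode_frame(frame: bytes) -> Tuple[int, bytes]:
    """Return (opcode, payload) for one complete frame, unmasking if needed."""
    opcode = frame[0] & 0x0F
    masked = bool(frame[1] & 0x80)
    n = frame[1] & 0x7F
    pos = 2
    if n == 126:
        (n,) = struct.unpack_from("!H", frame, pos)
        pos += 2
    elif n == 127:
        (n,) = struct.unpack_from("!Q", frame, pos)
        pos += 8
    if not masked:
        return opcode, frame[pos:pos + n]
    key = frame[pos:pos + 4]
    data = frame[pos + 4:pos + 4 + n]
    return opcode, bytes(b ^ key[i % 4] for i, b in enumerate(data))


client_frame = encode_text_frame("Hello", os.urandom(4))  # what a browser would send
server_frame = encode_text_frame("Hello")                  # what a server would send
print(server_frame.hex())          # 810548656c6c6f
print(decode_frame(client_frame))  # (1, b'Hello')
```

The unmasked and masked `Hello` frames produced this way match the examples in section 5.7 of RFC 6455.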

WebSocket has two URI schemes: unencrypted `ws://` and encrypted `wss://`. Because the handshake is done over HTTP, they use the same default ports as HTTP: 80 for ws (as with HTTP) and 443 for wss (as with HTTPS).

In Python, the websockets library can be used to implement asynchronous WebSocket clients and servers, and aiohttp also provides WebSocket support.

Note: if you search for a WebSocket plugin for Flask, the first result is most likely Flask-SocketIO. However, Flask-SocketIO uses its own Socket.IO protocol rather than standard WebSocket; it merely provides similar functionality.

The advantage of Socket.IO is that any web front end using the Socket.IO JavaScript client (socket.io.js) can use the protocol, whereas plain WebSocket is supported only by newer browsers. For non-web clients, Flask-Sockets may be a better choice.

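JS API

In the browser, a connection is opened with `new WebSocket(url)`. The returned object provides `send()` and `close()` methods and fires `open`, `message`, `error`, and `close` events.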

II. HTTP/2

HTTP/2 was standardized in 2015 with the main purpose of optimizing performance. Its features are as follows.

- Binary protocol: HTTP/2 uses a binary framing format instead of a text format, and headers are compressed with HPACK, a purpose-built algorithm.

- Multiplexing: multiple requests and responses can be in flight at the same time over a single TCP connection.

  - By contrast, HTTP/1.1 persistent (keep-alive) connections can also reuse a TCP connection, but only serially, one exchange after another, without multiplexing.

- Server Push: the server can push resources to the client before they are requested, so they are already on the client when it needs them. (The push is invisible to the Web App and is handled by the browser.)

- Stream cancellation: HTTP/2 can cancel a single stream that is still transferring (by sending an RST_STREAM frame) without closing the TCP connection.

  - This is one of the benefits of a binary protocol: frame types can be defined for many different purposes.
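The following sketch shows the binary framing behind these features. It assumes the frame layout from RFC 7540, which RFC 9113 has since superseded without changing the layout. Every frame starts with a 9-byte header containing a 24-bit length, an 8-bit type, 8-bit flags, and a 31-bit stream ID. The stream ID is what makes multiplexing possible. An RST_STREAM frame (type 0x3) with error code CANCEL (0x8) ends one stream while the connection stays open. The helper names are illustrative, and the sketch omits HPACK, flow control, and the connection preface.

```python
import struct
from typing import Dict, List, NamedTuple

# Frame type codes from the HTTP/2 spec (subset used here).
DATA, RST_STREAM = 0x0, 0x3
CANCEL = 0x8  # RST_STREAM error code: the stream is no longer needed


class FrameHeader(NamedTuple):
    length: int
    frame_type: int
    flags: int
    stream_id: int


def pack_frame(frame_type: int, flags: int, stream_id: int, payload: bytes) -> bytes:
    """Prefix the payload with the fixed 9-byte HTTP/2 frame header."""
    length = len(payload)  # 24-bit length: a high byte plus a 16-bit low part
    header = struct.pack("!BHBBI", length >> 16, length & 0xFFFF,
                         frame_type, flags, stream_id & 0x7FFFFFFF)
    return header + payload


def unpack_header(frame: bytes) -> FrameHeader:
    hi, lo, frame_type, flags, stream_id = struct.unpack("!BHBBI", frame[:9])
    # The top bit of the stream ID is reserved and ignored on receipt.
    return FrameHeader((hi << 16) | lo, frame_type, flags, stream_id & 0x7FFFFFFF)


def rst_stream(stream_id: int, error_code: int = CANCEL) -> bytes:
    """Cancel a single stream; the TCP connection and other streams stay open."""
    return pack_frame(RST_STREAM, 0, stream_id, struct.pack("!I", error_code))


def demux(frames: List[bytes]) -> Dict[int, bytes]:
    """Reassemble interleaved DATA frames per stream ID (multiplexing)."""
    streams: Dict[int, bytes] = {}
    for frame in frames:
        header = unpack_header(frame)
        if header.frame_type == DATA:
            chunk = frame[9:9 + header.length]
            streams[header.stream_id] = streams.get(header.stream_id, b"") + chunk
    return streams


# Two responses interleaved on one connection: streams 1 and 3.
wire = [pack_frame(DATA, 0, 1, b"ab"), pack_frame(DATA, 0, 3, b"xy"), pack_frame(DATA, 0, 1, b"cd")]
print(demux(wire))          # {1: b'abcd', 3: b'xy'}
print(rst_stream(3).hex())  # 00000403000000000300000008
```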

This lets the server push resources into the client's cache. When visiting sites such as Taobao, you will often see many requests marked "Provisional headers are shown". This usually means the resource was loaded directly from the cache, so the request was never actually sent. In the Size column of the Chrome DevTools Network panel, such requests usually show "from disk cache" or "from memory cache".

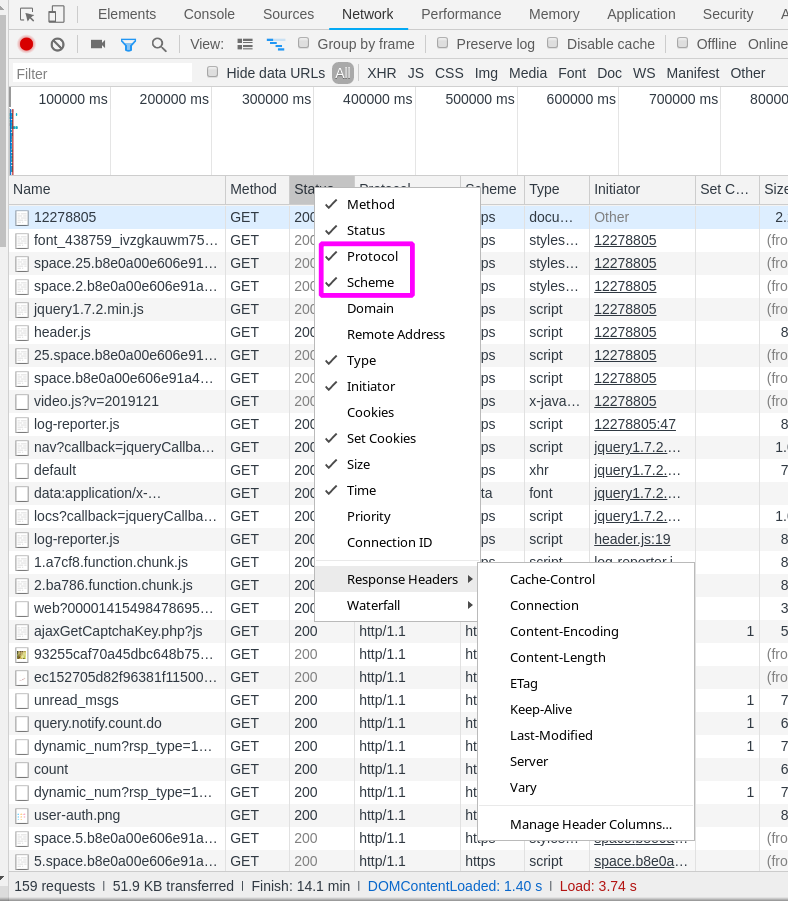

In Chrome DevTools, the Protocol column of the Network panel shows which protocol each request uses.

2019-02-10: In Chrome, most mainstream sites already use HTTP/2 at least partially. Zhihu, bilibili, and GitHub use the `wss` protocol. Many requests also show URLs of the form `data:image/png;base64,<base64 string>`; despite the `data:` prefix, these are inline data URIs, not SSE. Many sites also use HTTP/2 + QUIC, which has since been renamed HTTP/3, a UDP-based version of HTTP.

SSE

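SSE (Server-Sent Events) pushes messages over a long-lived HTTP connection. The browser first opens an HTTP connection to the server, and the server then keeps pushing messages to the browser over it.

On the browser side, the JS API is `EventSource`. Calling `new EventSource(url)` opens the connection. Unnamed messages arrive as `message` events, and messages that carry an `event` field are dispatched as events of that name.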

Specific implementation

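When the server receives an SSE request (an ordinary HTTP request) from the client, it responds with `Content-Type: text/event-stream`, usually together with `Cache-Control: no-cache` and `Connection: keep-alive`.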

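The body is plain text, and SSE defines four fields:

- `data`: the message payload
- `event`: a custom event type
- `id`: the message ID; after a reconnect, the browser sends the last ID it saw in the `Last-Event-ID` header
- `retry`: the reconnection delay in milliseconds, i.e. how long the browser waits before reconnecting after the connection drops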

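In the body, each message is a group of these `field: value` lines and ends with a blank line. For example, a message might consist of `id: 1`, then `data: hello`, then an empty line.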
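Here is a minimal Python sketch of this wire format. One helper serializes an event into the text a server would write after sending `Content-Type: text/event-stream`. A simplified parser then approximates how the browser splits the stream back into events. The function names are hypothetical, and the parser skips some details of the specification, such as the last event ID persisting across events.

```python
from typing import Dict, List, Optional


def format_event(data: str, event: Optional[str] = None,
                 event_id: Optional[str] = None, retry_ms: Optional[int] = None) -> str:
    """Serialize one SSE message; a blank line terminates it."""
    lines = []
    if event_id is not None:
        lines.append(f"id: {event_id}")
    if event is not None:
        lines.append(f"event: {event}")
    if retry_ms is not None:
        lines.append(f"retry: {retry_ms}")
    # A multi-line payload becomes several data: lines; the client rejoins them with "\n".
    lines.extend(f"data: {line}" for line in data.split("\n"))
    return "\n".join(lines) + "\n\n"


def parse_stream(text: str) -> List[Dict[str, str]]:
    """Simplified client-side parser: split the stream into events."""
    events: List[Dict[str, str]] = []
    fields: Dict[str, str] = {}
    data_lines: List[str] = []
    for line in text.split("\n"):
        if line == "":
            # Blank line: dispatch the event if it carried any data, then reset.
            if data_lines:
                fields["data"] = "\n".join(data_lines)
                events.append(fields)
            fields, data_lines = {}, []
        elif line.startswith(":"):
            pass  # comment line, often used as a keep-alive ping
        else:
            name, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # a single space after the colon is dropped
            if name == "data":
                data_lines.append(value)
            elif name in ("event", "id", "retry"):
                fields[name] = value
    return events


body = format_event("hello", event="update", event_id="1") + format_event("line1\nline2")
print(parse_stream(body))
```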

Comparison of WebSocket, HTTP/2 and SSE

- Encryption

  - WebSocket supports both plaintext `ws://` and encrypted `wss://`.

  - The HTTP/2 protocol does not require encryption, but mainstream browsers only support HTTP/2 over TLS.

  - SSE communicates over ordinary HTTP, so it works over both http and https.

- Message push

  - WebSocket is a full-duplex channel that carries messages in both directions, and messages are delivered directly to the Web App.

  - SSE is one-way: the server pushes data to the Web App serially.

  - HTTP/2 also supports Server Push, but the server can only push resources into the client's cache; it cannot push data to the Web App running in the client. Pushed resources are handled entirely by the browser and never reach application code, so the application has no API to be notified of them.

  - To push data to the Web App in near real time, HTTP/2 can be combined with SSE (Server-Sent Events).

WebSocket suits scenarios that need near-real-time two-way communication, while HTTP/2 + SSE suits displaying data pushed in real time.

For non-browser clients, HTTP/2 can also be used without encryption (h2c).

Checking the HTTP protocol version with requests

The HTTP version of a response can be read from `resp.raw.version`, an integer such as 11 for HTTP/1.1.

Note that requests speaks only HTTP/1.1 and does not support HTTP/2. This is not a big problem: HTTP/2 is mainly a performance optimization, so HTTP/1.1 is simply somewhat slower.
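requests sits on top of urllib3, which in turn uses the standard library's http.client. There, the response version is an integer: 10 for HTTP/1.0 and 11 for HTTP/1.1. `resp.raw.version` exposes that same number. The sketch below avoids both network access and a requests install by reading the version with http.client directly against a throwaway local server. The helper names are illustrative.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class OkHandler(BaseHTTPRequestHandler):
    """Answers every GET with a tiny 200 response."""

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # silence the default request logging


def fetch_version(server_protocol: str = "HTTP/1.1") -> int:
    """Start a one-shot local server and return http.client's response.version."""
    handler = type("Handler", (OkHandler,), {"protocol_version": server_protocol})
    server = HTTPServer(("127.0.0.1", 0), handler)
    server.timeout = 5  # don't block forever if no request arrives
    worker = threading.Thread(target=server.handle_request)
    worker.start()
    conn = http.client.HTTPConnection("127.0.0.1", server.server_port, timeout=5)
    try:
        conn.request("GET", "/")
        resp = conn.getresponse()
        resp.read()
        return resp.version  # 10 for HTTP/1.0, 11 for HTTP/1.1
    finally:
        conn.close()  # EOF lets a keep-alive (HTTP/1.1) handler finish
        worker.join()
        server.server_close()


print(fetch_version("HTTP/1.0"), fetch_version("HTTP/1.1"))  # 10 11
```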

III. gRPC Protocol

gRPC is a remote procedure call framework. By default, it uses protobuf3 (Protocol Buffers, proto3) both to define services and to serialize data efficiently, and it uses HTTP/2 for transport. The protocol discussed here is gRPC over HTTP/2.

gRPC is currently used mainly for communication between microservices, but its performance also makes it a good fit for scenarios such as gaming and IoT that need high performance and low latency.

In fact, judged purely as a protocol, gRPC largely surpasses REST:

- It serializes data in a binary format, which uses less bandwidth and is faster to serialize and deserialize than JSON.

- protobuf3 requires the API to be fully and explicitly defined, whereas a REST API is defined however the programmer chooses.

- gRPC officially supports generating code from API definitions, whereas REST APIs need third-party tools such as openapi-codegen.

- It supports four communication modes: unary (one request, one response), client streaming, server streaming, and bidirectional streaming, which makes it more flexible.
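The following sketch illustrates why protobuf's binary encoding is compact. It hand-encodes a single integer field in the protobuf wire format. The field key is `(field_number << 3) | wire_type`, and integers are written as base-128 varints. The sketch handles non-negative integers only and is not a replacement for generated protobuf code. The message `M { int32 id = 1; }` is a hypothetical example.

```python
import json


def encode_varint(value: int) -> bytes:
    """Base-128 varint: 7 bits per byte, least significant group first,
    high bit set on every byte except the last."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_uint_field(field_number: int, value: int) -> bytes:
    """Encode one integer field: key = (field_number << 3) | wire_type, wire type 0 = VARINT."""
    key = (field_number << 3) | 0
    return encode_varint(key) + encode_varint(value)


# Hypothetical message: message M { int32 id = 1; } with id = 150.
binary = encode_uint_field(1, 150)
text = json.dumps({"id": 150}).encode("utf-8")
print(binary.hex(), len(binary), len(text))  # 089601 3 11
```

The same value takes 3 bytes on the wire versus 11 bytes as JSON, because the field name is replaced by a field number and the integer is not spelled out as text.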

The main drawback is that gRPC's browser support is still weak. If the API must be called from a browser, gRPC may not be the right fit. If your product only targets clients you control, such as mobile apps, consider gRPC.

For applications that must serve both browsers and apps, the app can use gRPC directly. The browser then calls an HTTP interface exposed by an API gateway, which converts between HTTP and gRPC.

gRPC over HTTP/2 Definition

See the official document gRPC over HTTP/2 for a detailed definition.

Here are a few brief points.

- gRPC completely hides HTTP/2's own semantics, such as the method, headers, and path; none of this is visible to the user.

- Requests always use POST, and responses always have status 200. As long as a response is in the standard gRPC format, its HTTP status code is ignored.

- gRPC defines its own status codes (carried in the `grpc-status` trailer), a fixed path format (`/{service}/{method}`), and its own headers.
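The sketch below covers a few of the pieces the spec fixes, using illustrative helper names:

- Every message inside the HTTP/2 DATA frames is length-prefixed with a 1-byte compressed flag and a 4-byte big-endian length.
- The `:path` is built from the fully qualified service name and the method name.
- Requests carry `content-type: application/grpc` and `te: trailers`.

The `helloworld.Greeter/SayHello` names are hypothetical. The call's result travels separately in the `grpc-status` trailer, where 0 means OK.

```python
import struct
from typing import Dict, Tuple


def grpc_path(package: str, service: str, method: str) -> str:
    """:path pseudo-header: /{package}.{Service}/{Method}."""
    return f"/{package}.{service}/{method}"


def request_headers(path: str) -> Dict[str, str]:
    """Simplified gRPC request headers; the method is always POST."""
    return {
        ":method": "POST",
        ":scheme": "http",
        ":path": path,
        "content-type": "application/grpc",
        "te": "trailers",
    }


def frame_message(message: bytes, compressed: bool = False) -> bytes:
    """Length-Prefixed-Message: 1-byte compressed flag + 4-byte big-endian length + bytes."""
    return struct.pack("!BI", 1 if compressed else 0, len(message)) + message


def unframe_message(data: bytes) -> Tuple[bool, bytes]:
    """Inverse of frame_message for a single complete message."""
    flag, length = struct.unpack_from("!BI", data)
    return bool(flag), data[5:5 + length]


path = grpc_path("helloworld", "Greeter", "SayHello")  # hypothetical service
body = frame_message(b"\x08\x96\x01")                   # a tiny serialized protobuf message
print(path, body.hex())  # /helloworld.Greeter/SayHello 0000000003089601
```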