The SSL protocol is an important part of modern network communication, providing data security for the transport layer. To make it easier to follow, this article starts with the basics of cryptography, then explains the principles, processes and important features of the SSL protocol in detail, and finally touches on the differences in the Chinese national-standard (SM-series) SSL protocol, on security, and on the key new features of TLS 1.3.

Because of limited space and the author’s limited knowledge, this article does not go into much detail. In particular, it covers neither the inner workings of the algorithms nor actual code implementations. Instead, it tries to convey the basic principles and processes visually, mostly with diagrams.

Foundations of cryptography

Classical Ciphers

Cryptography has a long history. As early as the Roman Republic, Julius Caesar is said to have used the Caesar cipher to communicate with his generals.

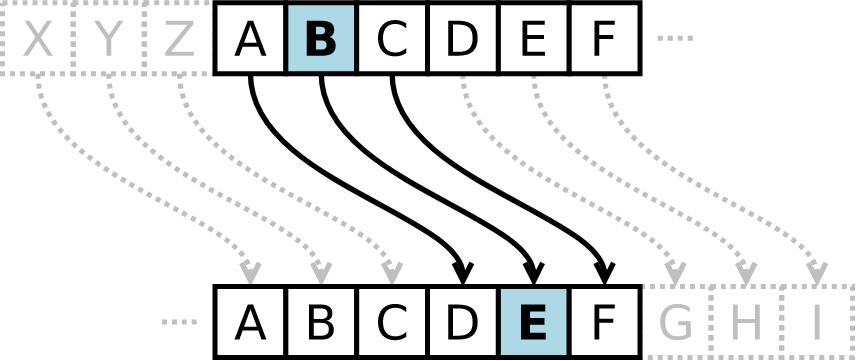

Caesar’s cipher

The Caesar cipher is a simple shift operation. It is very easy to crack: a brute-force attack only has to try the keys from 0 to 25.
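The shift operation and the brute-force attack can be sketched in a few lines. This is a minimal illustration; the helper names `caesar_shift` and `brute_force` and the sample message are assumptions made for the example.

```python
import string

ALPHABET = string.ascii_lowercase


def caesar_shift(text, key):
    """Shift every lowercase letter forward by `key` positions (mod 26)."""
    shifted = []
    for ch in text:
        if ch in ALPHABET:
            shifted.append(ALPHABET[(ALPHABET.index(ch) + key) % 26])
        else:
            shifted.append(ch)  # leave spaces and punctuation untouched
    return "".join(shifted)


def brute_force(ciphertext):
    """Return the candidate plaintext for every possible key 0..25."""
    return [caesar_shift(ciphertext, -key) for key in range(26)]


ciphertext = caesar_shift("attack at dawn", 3)   # "dwwdfn dw gdzq"
candidates = brute_force(ciphertext)             # candidates[3] is the plaintext
```

With only 26 candidates, a human (or a dictionary check) can pick out the readable one immediately.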

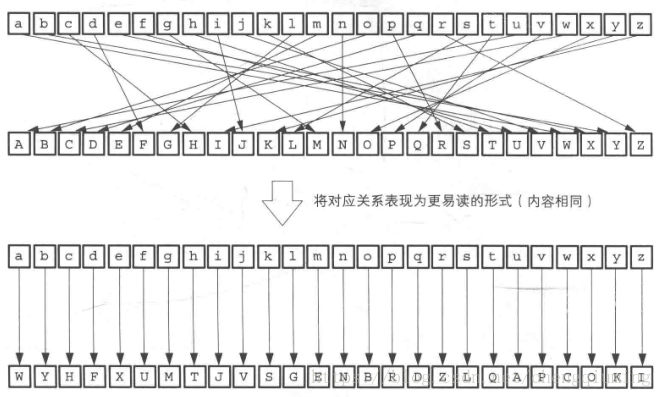

Simple substitution cipher

A simple substitution cipher uses a random mapping between the plaintext alphabet and the ciphertext alphabet. The key space is then 26! ≈ 4 × 10^26, so finding the correct key by brute force is no longer practical. The cipher can still be broken with frequency analysis, however.
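The following sketch checks the size of the key space and shows why frequency analysis works: substitution only renames letters, so the frequency profile of the plaintext survives in the ciphertext. The sample sentence and the fixed seed (used only to make the run repeatable) are assumptions for the example.

```python
import math
import random
import string
from collections import Counter

ALPHABET = string.ascii_lowercase

# Key space: every permutation of the 26 letters is a valid key.
key_space = math.factorial(26)  # about 4.03e26

# Build one random key; the fixed seed only makes the sketch repeatable.
rng = random.Random(2024)
shuffled = list(ALPHABET)
rng.shuffle(shuffled)
encrypt_table = str.maketrans(ALPHABET, "".join(shuffled))

plaintext = "meet me at the secret tree near the eastern gate"
ciphertext = plaintext.translate(encrypt_table)

# Compare the sorted letter counts of plaintext and ciphertext.
plain_counts = sorted(Counter(plaintext.replace(" ", "")).values(), reverse=True)
cipher_counts = sorted(Counter(ciphertext.replace(" ", "")).values(), reverse=True)

# The most frequent ciphertext letter is simply the image of 'e'.
top_cipher_letter = Counter(ciphertext.replace(" ", "")).most_common(1)[0][0]
```

An attacker who knows that `e` is the most common letter in English can guess that `top_cipher_letter` stands for `e` and continue from there.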

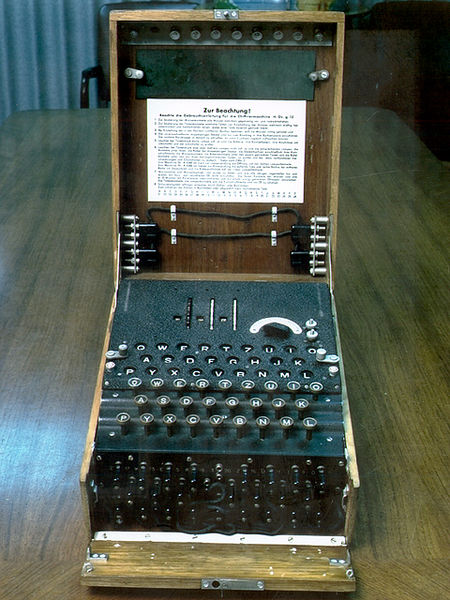

Enigma cipher machine

Enigma was a family of rotor-based electromechanical cipher machines used by Germany during World War II. Although the machine was considered highly secure, Allied cryptographers succeeded in deciphering a large number of messages encrypted with it.

Main weaknesses:

- The message key was typed twice in succession and then encrypted

- Message keys were chosen by human operators

- The Wehrmacht codebooks had to be physically distributed

Symmetric ciphers

Block ciphers and stream ciphers

The ciphers above all belong to the category of symmetric ciphers. Symmetric encryption algorithms can be divided into two types: block ciphers and stream ciphers.

- Block cipher: processes only one block of a fixed length at a time. The length of a block is called the block length.

- Stream cipher: A class of cryptographic algorithms that process data streams continuously. It generally encrypts and decrypts in units of 1 bit, 8 bits, or 32 bits.

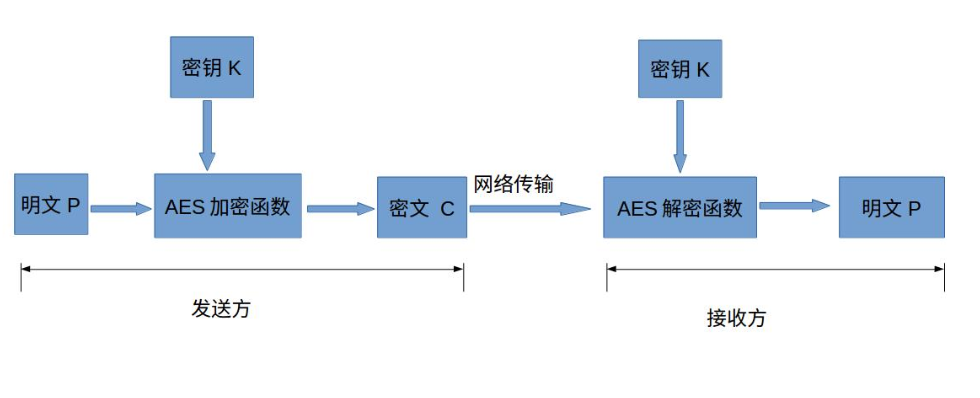

AES (Advanced Encryption Standard)

AES is one of the most commonly used symmetric algorithms today. A symmetric encryption algorithm, as the name implies, encrypts and decrypts with the same key. The sender uses the key K to encrypt the plaintext P into the ciphertext C and sends C to the receiver, who uses the same key K to decrypt C and recover P.

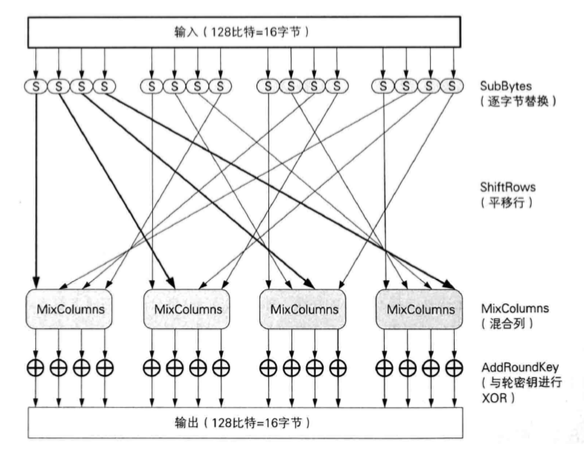

AES uses the Rijndael algorithm, and the following diagram shows the operations of one round of Rijndael encryption.

Each round performs byte substitution (SubBytes), row shifting (ShiftRows), column mixing (MixColumns) and an XOR with the round key (AddRoundKey), so that the input bits are thoroughly mixed. A block goes through many such rounds before the final ciphertext is produced.

Since all four operations are reversible, decryption simply applies the inverse operations in the opposite order.

Block cipher modes

Mode

A block cipher algorithm can only encrypt one block of fixed length, but the plaintext we need to encrypt may be longer than the block length. We therefore have to apply the block cipher repeatedly to encrypt a long plaintext. The way the cipher is iterated across blocks is called the mode of the block cipher.

Commonly used modes are: ECB, CBC, OFB, CFB, CTR, etc. For the sake of space, we will only introduce ECB, CBC and CTR.

ECB mode

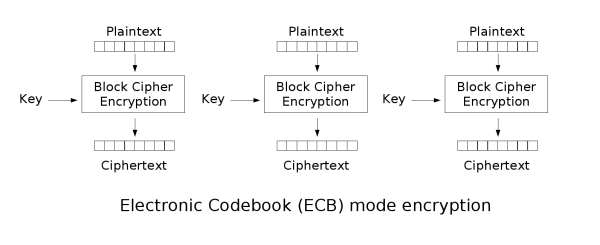

ECB is the simplest mode: each block is encrypted independently, and the result of encrypting a plaintext block directly becomes the corresponding ciphertext block. When the last plaintext block is shorter than the block length, it has to be filled with specific data (padding).

ECB mode has a very significant disadvantage: identical plaintext blocks are encrypted into identical ciphertext blocks, so it does not hide data patterns well. In some situations it therefore cannot provide strict data confidentiality.

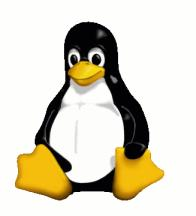

For example, when the penguin picture below is encrypted in ECB mode (the middle picture), the outline of the penguin is still clearly visible, so confidentiality is poorly protected. After encryption with other modes we get the result shown in the third picture, where no obvious features remain.

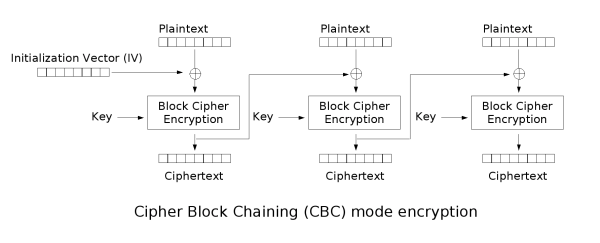

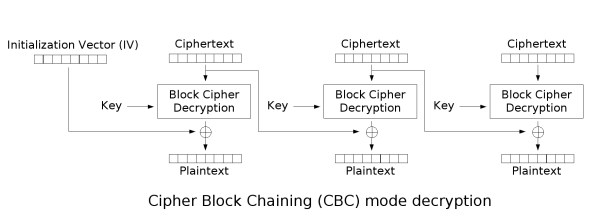

CBC mode

In CBC mode, each plaintext block is first XORed with the previous ciphertext block and then encrypted, so each ciphertext block depends on all the plaintext blocks before it. To ensure that each message is unique, an initialization vector (IV) is used for the first block.

CBC was the most common mode in the TLS 1.2 era. It does not share ECB’s problem of encrypting identical plaintext into identical ciphertext, but the chaining means encryption cannot be parallelized the way ECB can.

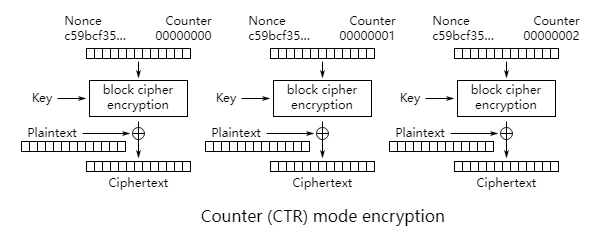
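The difference between ECB and CBC can be observed with a small experiment. The standard library has no AES, so the sketch below substitutes a toy 4-round Feistel cipher built from HMAC-SHA256 as the block cipher. That cipher, every function name, and the unpadded input are assumptions for illustration only and are not secure.

```python
import hashlib
import hmac

BLOCK = 16          # block length in bytes
HALF = BLOCK // 2


def _xor(a, b):
    return bytes(x ^ y for x, y in zip(a, b))


def _round(key, i, half):
    """Keyed round function of the toy Feistel network."""
    return hmac.new(key, bytes([i]) + half, hashlib.sha256).digest()[:HALF]


def toy_encrypt_block(key, block):
    left, right = block[:HALF], block[HALF:]
    for i in range(4):
        left, right = right, _xor(left, _round(key, i, right))
    return left + right


def toy_decrypt_block(key, block):
    left, right = block[:HALF], block[HALF:]
    for i in reversed(range(4)):
        left, right = _xor(right, _round(key, i, left)), left
    return left + right


def split_blocks(data):
    return [data[i:i + BLOCK] for i in range(0, len(data), BLOCK)]


def ecb_encrypt(key, plaintext):
    # Every block is encrypted on its own.
    return b"".join(toy_encrypt_block(key, b) for b in split_blocks(plaintext))


def cbc_encrypt(key, iv, plaintext):
    previous, out = iv, []
    for block in split_blocks(plaintext):
        # XOR with the previous ciphertext block (or the IV), then encrypt.
        previous = toy_encrypt_block(key, _xor(block, previous))
        out.append(previous)
    return b"".join(out)


def cbc_decrypt(key, iv, ciphertext):
    previous, out = iv, []
    for block in split_blocks(ciphertext):
        out.append(_xor(toy_decrypt_block(key, block), previous))
        previous = block
    return b"".join(out)


key = b"demo key"
iv = bytes(range(BLOCK))
plaintext = b"A" * BLOCK * 3                   # three identical blocks, no padding needed

ecb_blocks = split_blocks(ecb_encrypt(key, plaintext))
cbc_blocks = split_blocks(cbc_encrypt(key, iv, plaintext))
```

In `ecb_blocks` all three ciphertext blocks are identical, which is exactly the pattern leak visible in the penguin picture; in `cbc_blocks` they all differ because each block is chained to the one before it.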

CTR mode

Counter mode effectively turns a block cipher into a stream cipher: it encrypts a counter that is incremented for each block, and then XORs the resulting bit sequence with the plaintext to get the ciphertext.

The counter plays a role similar to the IV in CBC mode, ensuring that the same plaintext is encrypted into different ciphertexts. Compared with CBC and other modes, CTR has the following advantages: it is very well suited to parallel computation, and a corrupted bit in the ciphertext only affects the corresponding bit in the plaintext.

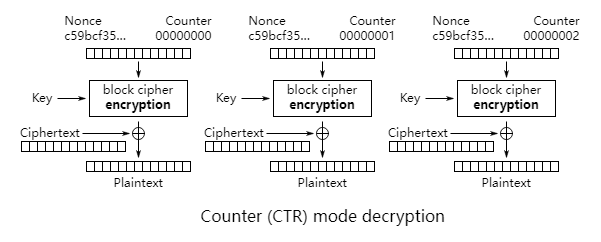
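A minimal sketch of counter mode follows. CTR only ever uses the forward direction of the block cipher, so the example stands in HMAC-SHA256 (truncated to one block) for the keyed block encryption; that substitution, the nonce layout and the function names are assumptions made for the illustration.

```python
import hashlib
import hmac

BLOCK = 16


def keystream_block(key, nonce, counter):
    """Stand-in for E_K(nonce || counter): HMAC-SHA256 truncated to one block."""
    data = nonce + counter.to_bytes(8, "big")
    return hmac.new(key, data, hashlib.sha256).digest()[:BLOCK]


def ctr_xor(key, nonce, data):
    """Encrypt or decrypt: XOR the data with the keystream, block by block."""
    out = bytearray()
    for offset in range(0, len(data), BLOCK):
        # Each keystream block depends only on the counter value,
        # so the blocks could be computed in parallel.
        ks = keystream_block(key, nonce, offset // BLOCK)
        chunk = data[offset:offset + BLOCK]
        out.extend(c ^ k for c, k in zip(chunk, ks))
    return bytes(out)


key, nonce = b"demo key", b"\x00" * 8
plaintext = b"counter mode turns a block cipher into a stream cipher"
ciphertext = ctr_xor(key, nonce, plaintext)

# Flip one bit of the ciphertext and decrypt again.
damaged = bytearray(ciphertext)
damaged[5] ^= 0x01
recovered = ctr_xor(key, nonce, bytes(damaged))
changed_positions = [i for i in range(len(plaintext)) if recovered[i] != plaintext[i]]
```

Only position 5 of the recovered plaintext differs, and only in the flipped bit. The same property means that an attacker can flip chosen plaintext bits, which is one reason CTR needs a separate integrity check.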

AEAD (Authenticated Encryption with Associated Data)

All of the previous modes have been found to have various drawbacks or problems in practice. In the TLS 1.3 era, only AEAD encryption modes are retained. AEAD adds authentication alongside encryption; commonly used constructions are GCM, CCM and ChaCha20-Poly1305.

GCM (Galois/Counter Mode)

The G in GCM (Galois) refers to GMAC (we will talk about MACs later), and the C refers to the CTR counter mode described earlier. In the figure below, the upper right part is the counter mode encryption described above and the remaining part is the GMAC. The final result contains the initial counter value, the encrypted ciphertext and the MAC value.

Public key ciphers (asymmetric ciphers)

When using symmetric encryption, you are bound to run into the problem of key distribution (key exchange). Pre-shared keys have their limitations, so a secure way of delivering the key to the other party is needed. This leads to public key ciphers.

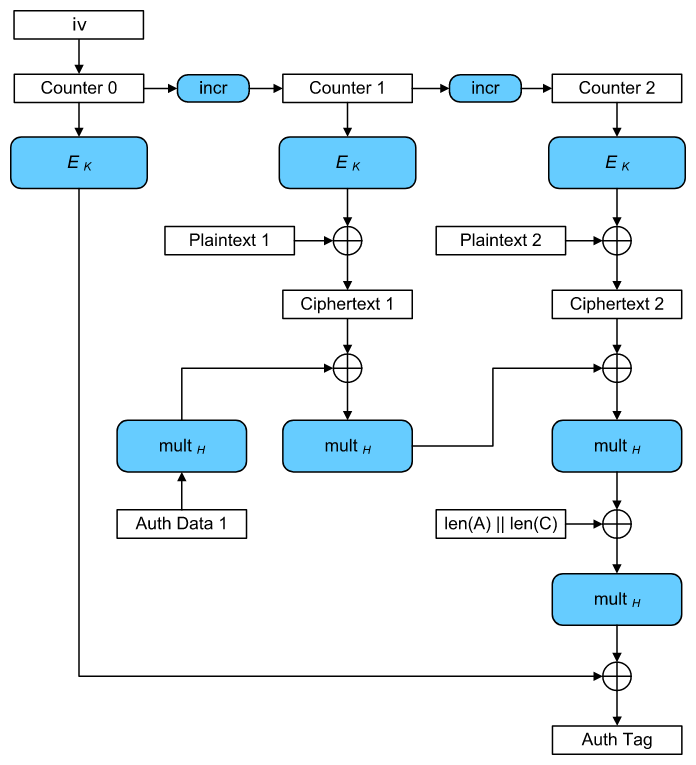

Public key cryptographic algorithms (RSA, Chinese national standard SM2)

The most commonly used public key algorithm is the famous RSA, while the Chinese national standards use the SM2 algorithm. A public key cipher has two keys: the public key, which can be distributed freely, and the private key, which must be kept strictly secret by its owner. For example, if Bob wants to send a message to Alice, Bob encrypts the message with Alice’s public key and sends it to Alice, who then decrypts it with her private key.

Diffie-Hellman key exchange

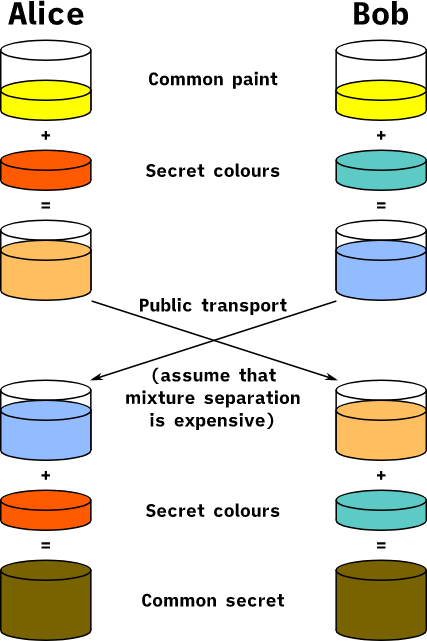

Another commonly used public key technique is the DH family of algorithms, which the diagram explains with colors.

First both parties agree on a common base color (the algorithm parameters). Each then generates its own private color (equivalent to the private key) and obtains the corresponding public color (equivalent to the public key) by mixing. The two parties exchange their public colors and mix them with their own private colors, finally arriving at an identical color (the exchanged key). An eavesdropper cannot generate the same key even with all the information exchanged between the two parties: the difficulty of the discrete logarithm problem ensures the security of the DH algorithm.
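The color-mixing analogy maps directly onto modular exponentiation. The sketch below uses deliberately tiny textbook numbers (p = 23, g = 5) and fixed private values so the arithmetic can be checked by hand; real deployments use groups of 2048 bits or more and random private keys.

```python
# Public parameters agreed in advance (the "base color").
p = 23   # toy prime modulus
g = 5    # generator

# Private keys (the "private colors"); fixed here for illustration only.
alice_private = 6
bob_private = 15

# Public keys (the "public colors"), sent over the open network.
alice_public = pow(g, alice_private, p)   # 5^6  mod 23 = 8
bob_public = pow(g, bob_private, p)       # 5^15 mod 23 = 19

# Each side mixes the other's public key with its own private key.
alice_shared = pow(bob_public, alice_private, p)   # 19^6 mod 23 = 2
bob_shared = pow(alice_public, bob_private, p)     # 8^15 mod 23 = 2
```

An eavesdropper sees `p`, `g`, `alice_public` and `bob_public`, but recovering either private exponent from them is the discrete logarithm problem.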

ECDH and ECDHE

ECDH is an elliptic-curve-based DH algorithm. In principle it is basically the same as DH; it mainly replaces modular exponentiation over a finite field with point multiplication on an elliptic curve. Compared with the DH algorithm, it is faster and harder to invert.

Static DH and ECDH use a fixed key pair; once that key is leaked, all previously recorded encrypted messages can be decrypted. ECDHE provides forward secrecy: it uses a temporary (ephemeral) key pair for each key exchange and derives the session key from it. Even if this temporary key is compromised, only the messages of the current SSL session are affected.

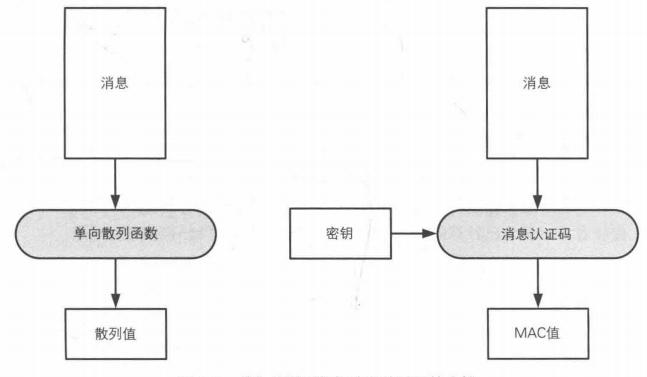

One-way hash function (Hash)

The symmetric and public key ciphers above solve the problem of confidentiality, so that the messages we transmit cannot be read by eavesdroppers. The problem of integrity, however, is still unsolved: a message may be tampered with in transit. This is where one-way hash functions come into play.

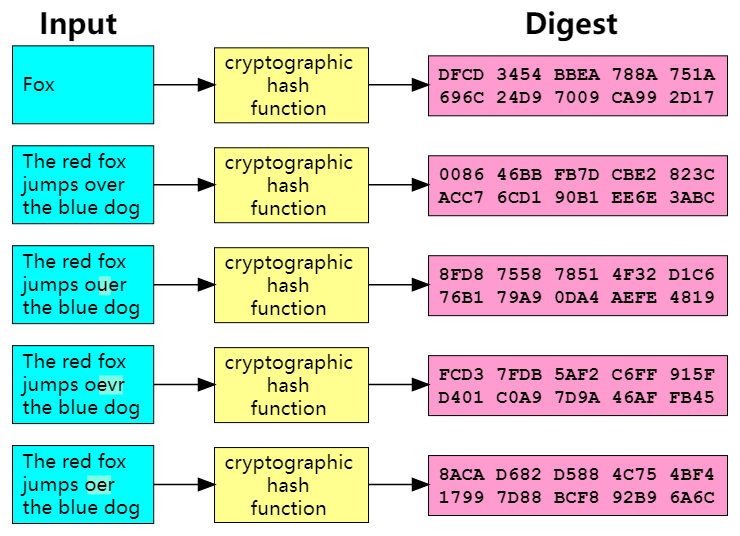

A one-way hash function calculates a fixed-length hash value (digest) from an input message. This hash value can be used as a fingerprint of the message to check its integrity. Changing even a single bit of the original message produces a completely different hash value.

An ideal hash function has the following properties:

- Deterministic: the same message always produces the same hash value

- Fast: the hash value of any given message can be computed quickly

- One-way: the message cannot be recovered from the hash value

- Weak collision resistance: given a message, it is difficult to find a different message with the same hash value

- Strong collision resistance: it is difficult to find any two different messages with the same hash value

- Avalanche effect: a small change in a message causes a large change in the hash value
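Several of these properties can be observed directly with SHA-256 from Python’s `hashlib`. The sample message and the helper `differing_bits` are assumptions for the example; the sketch flips a single input bit and counts how many of the 256 output bits change.

```python
import hashlib


def differing_bits(a, b):
    """Count the bit positions in which two equal-length byte strings differ."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


original = b"transfer 100 to alice"
# Flip the lowest bit of the first byte: a one-bit change to the message.
flipped = bytes([original[0] ^ 0x01]) + original[1:]

digest_a = hashlib.sha256(original).digest()
digest_b = hashlib.sha256(flipped).digest()

same_again = hashlib.sha256(original).digest()   # deterministic: equals digest_a
changed = differing_bits(digest_a, digest_b)     # typically close to half of 256
```

The digest is always 32 bytes regardless of the input length, and for a good hash function roughly half of the output bits are expected to change after a one-bit input change.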

Message Authentication Code (MAC)

While a one-way hash function can detect changes to a message, a clever attacker can tamper with the message and replace its hash value at the same time, and the receiver will not notice. The message therefore also needs to be authenticated. Traditional authentication methods include handwritten signatures, stamps, handprints, IDs and passphrases (which are in effect a shared key). In cryptography, authentication can be performed with a message authentication code. Compared with a one-way hash function, a MAC takes an additional shared key as input. The attacker cannot forge the MAC value because he does not have this key.
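A minimal sketch with HMAC-SHA256 from the standard library shows the idea. The key, the messages and the helper names `make_tag` and `verify_tag` are assumptions for the example.

```python
import hashlib
import hmac


def make_tag(key, message):
    """Compute the MAC value of a message under a shared key."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify_tag(key, message, tag):
    """Recompute the MAC and compare in constant time."""
    return hmac.compare_digest(make_tag(key, message), tag)


shared_key = b"key shared by Alice and Bob"
message = b"pay Bob 10 coins"
tag = make_tag(shared_key, message)

# The attacker changes the message but does not know the shared key,
# so the best he can do is compute a tag under a guessed key.
forged_message = b"pay Eve 10 coins"
forged_tag = make_tag(b"attacker guess", forged_message)

ok = verify_tag(shared_key, message, tag)                        # True
reused = verify_tag(shared_key, forged_message, tag)             # False
forged = verify_tag(shared_key, forged_message, forged_tag)      # False
```

Unlike a bare hash, the tag cannot be recomputed after tampering without the key, so both the old tag and the attacker’s own tag are rejected.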

Problems with MAC

- A MAC requires a shared key just like a symmetric cipher, so it has the same key distribution problem.

- It cannot prove anything to a third party

- It cannot prevent repudiation

Digital signatures (RSA, ECDSA)

To solve these problems with MACs, digital signatures were introduced.

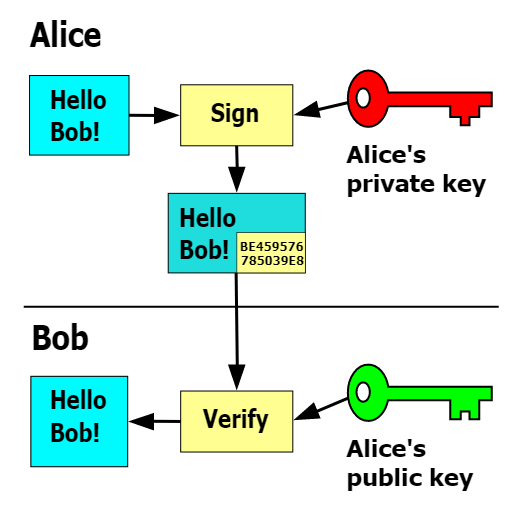

Digital signatures also belong to the category of public key cryptography. The difference from before is that the private key is used for encryption (the operation is called signing) and anyone can decrypt with the public key (the operation is called signature verification). Since the public key is public, a third party can verify the signature. And because only the owner holds the private key, nobody else can generate this ciphertext, so the signer cannot later deny having signed. All three problems of MACs are thus solved by digital signatures.

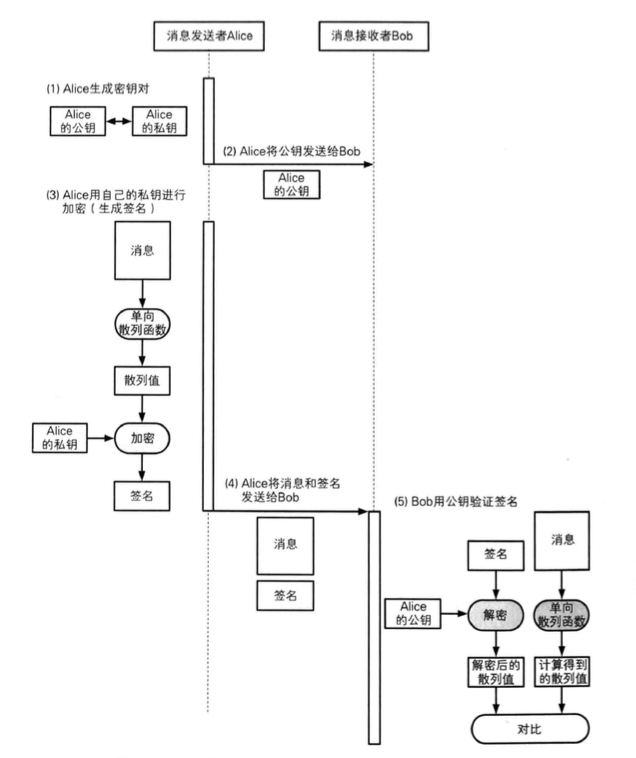

Signatures are usually combined with a hash function to ensure integrity and speed up the process. As shown in the figure, Alice first gives Bob her public key. To send a message, she computes a hash of the message and encrypts the hash with her private key to get the signature. She then sends the original message together with the signature to Bob. Bob performs the same hash calculation on the received message, decrypts the signature with Alice’s public key to get the signed hash value, and compares the two hashes to verify the signature.
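The hash-then-sign flow can be sketched with textbook RSA and tiny primes. The numbers (p = 61, q = 53, e = 17, d = 2753), the absence of padding and the helper names are assumptions for illustration only; real signatures use keys of 2048 bits or more and a padding scheme such as PSS.

```python
import hashlib

# Toy RSA key pair, small enough to check by hand.
p, q = 61, 53
n = p * q      # 3233, public modulus
e = 17         # public exponent
d = 2753       # private exponent: (e * d) % ((p - 1) * (q - 1)) == 1


def digest_as_int(message):
    """Hash the message and reduce it into the range of the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % n


def sign(message):
    """Alice: 'encrypt' the hash with the private key."""
    return pow(digest_as_int(message), d, n)


def verify(message, signature):
    """Bob: 'decrypt' the signature with the public key and compare hashes."""
    return pow(signature, e, n) == digest_as_int(message)


message = b"Alice owes Bob 10 coins"
signature = sign(message)
valid = verify(message, signature)                    # True
altered = verify(message, (signature + 1) % n)        # False
```

Only the short hash goes through the slow private key operation, which is why hashing first speeds up signing. With such a small modulus different messages can easily share a reduced hash value, so this toy offers no real security.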

Digital Certificates, CA

So far, public key ciphers and digital signatures have given us confidentiality, integrity, authentication and non-repudiation. But all of this rests on one premise: the public key really belongs to the claimed sender. If the public key is forged, all of it is lost. A digital signature can only guarantee that the other party holds the private key corresponding to the public key; it cannot authenticate the identity of the public key’s owner.

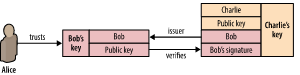

Digital certificates were created to solve this problem. The solution is to have a trusted third party sign the public key. This trusted third party is generally called a certificate authority (CA).

Let’s see what an actual certificate looks like. Subject is the information of the certificate owner, Issuer is the information of its issuer, Subject Public Key Info is the actual public key information (an RSA 2048-bit key is used here), and Validity is the validity period of the certificate. The certificate ends with the CA’s signature, made with the CA’s own private key. Anyone who has the CA’s certificate (which includes its public key) can verify this certificate and thus the identity of the public key owner.


In practice, multi-level certificate chains are usually used: each certificate is issued by the CA one level above and is verified with that CA’s certificate. The root CA certificate at the top can only be self-signed, i.e. the root CA signs its own public key with its own private key; otherwise the chain would recurse forever.

A digital certificate thus forms a chain of trust that transfers the authentication of many individuals to a small number of CAs, which reduces the risk of man-in-the-middle attacks. Public key ciphers and certificates lead to the Public Key Infrastructure (PKI): the general term for the set of specifications and standards developed to make the use of public keys more effective.

Hybrid Cryptosystems

So with public key ciphers, are symmetric ciphers no longer needed? Although public key ciphers solve the key distribution problem of symmetric ciphers, they are several orders of magnitude slower. In a test of RSA1024 and AES128 with openssl speed, AES128 (at the same block size) was roughly 1200 times faster than an RSA1024 signature and 70 times faster than an RSA1024 verification.

Because symmetric and asymmetric ciphers each have their own advantages and disadvantages, they are usually combined in practice: an asymmetric cipher performs the key exchange, the exchanged secret is used to generate session keys, and a symmetric cipher encrypts and decrypts the messages with those session keys.

Random Number Generator

Up to now we have solved many problems, including confidentiality, integrity, authentication and non-repudiation, but one big problem remains: how do we generate the session key? The security of a cryptographic algorithm should rest entirely on the secrecy of its key; if the key can easily be cracked or predicted, everything we have built falls apart. The random number generator therefore plays a crucial role.

Random numbers also play an important role in defending against replay attacks, in which the attacker saves eavesdropped data and later sends it to the receiver unchanged to achieve his goal. Common defenses against replay are sequence numbers, timestamps and random numbers (nonces).

Here is a question for you to think about: since confidentiality and integrity protection prevent the attacker from decrypting or tampering with the message, what can he gain from replaying it?

An ideal random number generator has the following properties:

- Randomness: no statistical bias, a completely jumbled sequence of numbers

- Unpredictability: the next number cannot be inferred from the numbers seen so far

- Irreproducibility: the same sequence cannot be reproduced unless the sequence itself is saved
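The difference between an ordinary pseudorandom generator and one suitable for keys can be sketched with Python’s standard library. The seed value and key length below are arbitrary choices for the example.

```python
import random
import secrets

# A seeded general-purpose PRNG is reproducible: anyone who learns
# (or guesses) the seed can replay the whole sequence.
first = random.Random(1234)
second = random.Random(1234)
sequence = [first.getrandbits(32) for _ in range(4)]
replayed = [second.getrandbits(32) for _ in range(4)]   # identical to `sequence`

# The `secrets` module draws from the operating system's
# cryptographically secure generator and is meant for keys and nonces.
session_key = secrets.token_bytes(32)
another_key = secrets.token_bytes(32)
```

The `random` module is fine for simulations but fails the unpredictability and irreproducibility requirements above, so key material should come from a source such as `secrets` or `os.urandom`.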

Key derivation function (KDF)

A KDF can be used to expand key material into longer keys or to obtain keys in the desired format. TLS 1.2 uses the PRF algorithm for this purpose, and TLS 1.3 uses HKDF.
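As an illustration of what a KDF does, here is a minimal sketch of HKDF (extract-then-expand, as specified in RFC 5869) built on HMAC-SHA256. The input secret, salt and the `info` labels are made-up values for the example; TLS 1.3 additionally wraps the expand step in its own label format, which is not shown here.

```python
import hashlib
import hmac

HASH = hashlib.sha256
HASH_LEN = HASH().digest_size   # 32 bytes for SHA-256


def hkdf_extract(salt, ikm):
    """Condense the input key material into a fixed-length pseudorandom key."""
    if not salt:
        salt = bytes(HASH_LEN)
    return hmac.new(salt, ikm, HASH).digest()


def hkdf_expand(prk, info, length):
    """Stretch the pseudorandom key into `length` bytes of output."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output is too long")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        # T(i) = HMAC(PRK, T(i-1) || info || i)
        block = hmac.new(prk, block + info + bytes([counter]), HASH).digest()
        okm += block
        counter += 1
    return okm[:length]


shared_secret = bytes.fromhex("0b" * 22)       # e.g. the output of a key exchange
prk = hkdf_extract(b"example salt", shared_secret)
enc_key = hkdf_expand(prk, b"encryption key", 16)
mac_key = hkdf_expand(prk, b"mac key", 32)
```

Different `info` labels yield independent keys from the same secret, which is how one exchanged secret can feed several purposes.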

SSL/TLS protocol in detail

What is SSL/TLS protocol

With this cryptographic foundation in place, we can formally introduce the TLS protocol. The items above are basically independent algorithms, or components that combine several algorithms; the SSL/TLS protocol is a complete cryptographic protocol assembled from these underlying primitives and components.

SSL stands for Secure Sockets Layer. It was designed by Netscape as a secure transport protocol, primarily for the Web, to provide confidentiality, authentication and data integrity protection for network communications. Today SSL/TLS is the industry standard for secure Internet communications.

The initial versions of SSL (SSL 1.0, SSL 2.0, SSL 3.0) were designed and maintained by Netscape. From version 3.1 onwards, the protocol was officially taken over by the Internet Engineering Task Force (IETF) and renamed TLS (Transport Layer Security), which has since evolved through TLS 1.0, TLS 1.1, TLS 1.2 and TLS 1.3. At present TLS 1.2 is still the mainstream, but TLS 1.3 is quickly becoming the trend.

| Protocol | Published | Status |
|---|---|---|
| SSL 1.0 | Unpublished | Unpublished |
| SSL 2.0 | 1995 | Deprecated in 2011 (RFC 6176) |
| SSL 3.0 | 1996 | Deprecated in 2015 (RFC 7568) |
| TLS 1.0 | 1999 | Deprecated in 2021 (RFC 8996) |
| TLS 1.1 | 2006 | Deprecated in 2021 (RFC 8996) |
| TLS 1.2 | 2008 | |
| TLS 1.3 | 2018 | |

The main security objectives that the SSL/TLS protocol provides are the following:

- Confidentiality: prevents third-party eavesdropping with the help of encryption

- Authentication: authenticates the server and the client with the help of digital certificates to prevent identity forgery

- Integrity: safeguards data integrity and prevents message tampering with the help of a Message Authentication Code (MAC)

- Anti-replay: prevents replay attacks

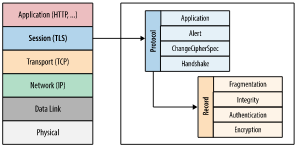

Protocol layering

You are probably already familiar with the five-layer TCP/IP model. The TLS protocol, as its name (Transport Layer Security) suggests, secures the transport layer. It sits above the transport layer and below the application layer.

The SSL/TLS protocol has a highly modular architecture and is internally divided into several sub-protocols: the Handshake protocol, the Alert protocol, the ChangeCipherSpec protocol and the Application protocol. They all run on top of the Record protocol, which is responsible for identifying the different upper-layer message types and for fragmenting, encrypting and authenticating messages.

- Handshake protocol: negotiates the security parameters and the algorithm suite, authenticates the server (client authentication is optional) and performs the key exchange

- Application protocol: transmits application layer data

- ChangeCipherSpec protocol: a single message indicating that the negotiation is complete and that subsequent records will be protected with the newly negotiated keys

- Alert protocol: reports errors and exceptions at two levels, fatal and warning. A fatal alert terminates the SSL connection immediately, while after a warning alert the connection generally continues

The SSL/TLS protocol is designed as a two-phase protocol, divided into a handshake phase and an application phase.

Handshake phase: also known as the negotiation phase. Its main goals are the ones already mentioned: negotiating the security parameters and algorithm suite, authentication (based on digital certificates), and key exchange to generate the keys for the subsequent encrypted communication.

Application phase: both parties use the keys negotiated in the handshake phase to communicate securely.

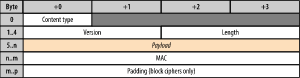

SSL record

The SSL record layer wraps data in records, much as the IP and TCP layers below it wrap data in packets and segments. All data exchanged over an SSL session is encapsulated into records in the following format. The record layer protocol is responsible for identifying the different message types, as well as for fragmentation, compression, message authentication and integrity protection, and encryption.

A typical record layer workflow (for a block cipher suite) is as follows:

- The record layer receives data from the application layer

- The received data is split into fragments

- The MAC (HMAC) is computed with the negotiated MAC key and appended to the fragment

- The record data is encrypted with the negotiated cipher key

When the encrypted data reaches the receiving end, the peer does the opposite: it decrypts the data, verifies the MAC, reassembles the data and hands it to the application layer.

All this work is done by the SSL layer and is completely transparent to the upper-layer application.

For messages in the handshake phase, before the negotiated keys take effect, the payload is plaintext, so there is no MAC or padding. All other messages have a ciphertext payload.

For stream ciphers, there is no padding after the payload. For block ciphers, there is an optional IV field before the payload, depending on the algorithm used.

For AEAD algorithms, there are no separate MAC and padding fields after the payload, because authentication is already part of the algorithm. The payload is preceded by an explicit nonce field.

Algorithm suites (cipher suites)

Before we dive into the handshake process, let’s look at the concept of an algorithm suite. In the cryptography basics section we covered various kinds of algorithms: authentication algorithms, key exchange algorithms, symmetric encryption algorithms and algorithms for integrity protection.

TLS 1.2 and earlier

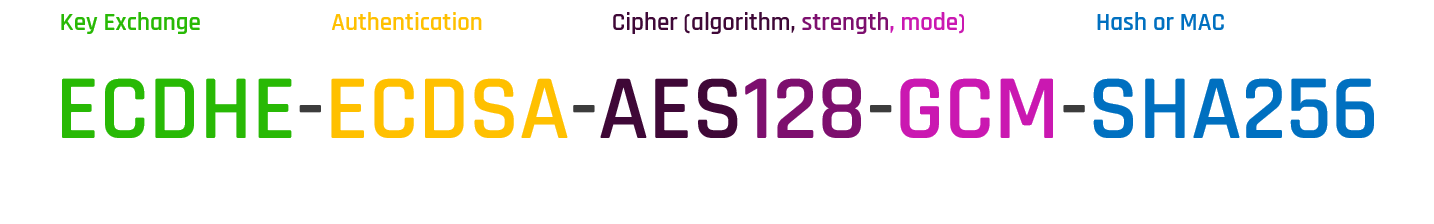

An algorithm suite is the combination of these algorithm types used in an SSL connection. It contains the following components:

- Key Exchange (Kx)

- Authentication (Au)

- Encryption (Enc)

- Message Authentication Code (Mac)

Common algorithm suites include TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256, TLS_RSA_WITH_AES_256_GCM_SHA384, TLS_ECDH_ECDSA_WITH_AES_256_CBC_SHA384, ECC_SM2_WITH_SM4_SM3 (Chinese national standard) and ECDHE_SM2_WITH_SM4_SM3 (Chinese national standard).

Taking ECDHE-ECDSA-AES128-GCM-SHA256 as an example: ECDHE denotes the key exchange algorithm, ECDSA the authentication algorithm, and AES128-GCM the symmetric encryption algorithm, where 128 is the key length in bits and GCM is the mode. SHA256 denotes the hash algorithm. For AEAD suites, message authentication and encryption are merged, so the trailing SHA256 only identifies the hash used by the key derivation function; for traditional suites, where encryption and authentication are separate, it also identifies the MAC algorithm.

The details of each algorithm suite can be viewed with the following command: openssl ciphers -V | column -t | less.
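To make the naming scheme concrete, the sketch below splits an IANA-style TLS 1.2 suite name into its four components. `parse_tls12_suite` is a hypothetical helper written for this article; it only handles the common `TLS_<kx>_<au>_WITH_<enc>_<hash>` pattern and is not part of OpenSSL or any library.

```python
def parse_tls12_suite(name):
    """Split a TLS 1.2 suite name into key exchange, authentication,
    encryption and hash components."""
    if not name.startswith("TLS_") or "_WITH_" not in name:
        raise ValueError("not an IANA-style TLS 1.2 suite name: " + name)
    exchange, cipher = name[len("TLS_"):].split("_WITH_", 1)
    # "ECDHE_RSA" means key exchange ECDHE and authentication RSA;
    # a plain "RSA" means RSA is used for both.
    kx, _, au = exchange.partition("_")
    # The last component is the hash (MAC hash, or PRF hash for AEAD suites).
    enc, _, digest = cipher.rpartition("_")
    return {"kx": kx, "au": au or kx, "enc": enc, "hash": digest}


cbc_suite = parse_tls12_suite("TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256")
gcm_suite = parse_tls12_suite("TLS_RSA_WITH_AES_256_GCM_SHA384")
```

Here `cbc_suite` comes out as ECDHE / RSA / AES_128_CBC / SHA256, while `gcm_suite` reports RSA for both key exchange and authentication.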

TLS 1.3

In TLS 1.3 the key exchange and authentication algorithms have been separated from the algorithm suite, so the suite only specifies the encryption algorithm and the hash of the key derivation function. The reason for the separation is that the number of supported algorithms had grown, and multiplying them together produced a very large number of suites.

You can check which TLS 1.3 algorithm suites are currently supported with the following command: openssl ciphers -V | column -t | grep 'TLSv1.3'.

SSL handshake

Finally we get to the core: the handshake protocol. As mentioned earlier, the SSL handshake does several things: the two sides first agree on an algorithm suite, then authenticate each other as needed (based on digital certificates), and finally exchange keys according to the chosen suite to generate the keys for the subsequent encrypted communication. (This section describes TLS 1.2 and earlier versions.)

The following diagram shows a complete SSL handshake:

First, the TCP three-way handshake establishes the TCP connection; then the client initiates the SSL handshake. A complete SSL handshake consists of two round trips: the first selects the algorithm suite, and the second completes authentication and key exchange. Once these are done, the SSL secure channel is established, and subsequent application data is encrypted and transmitted over it.

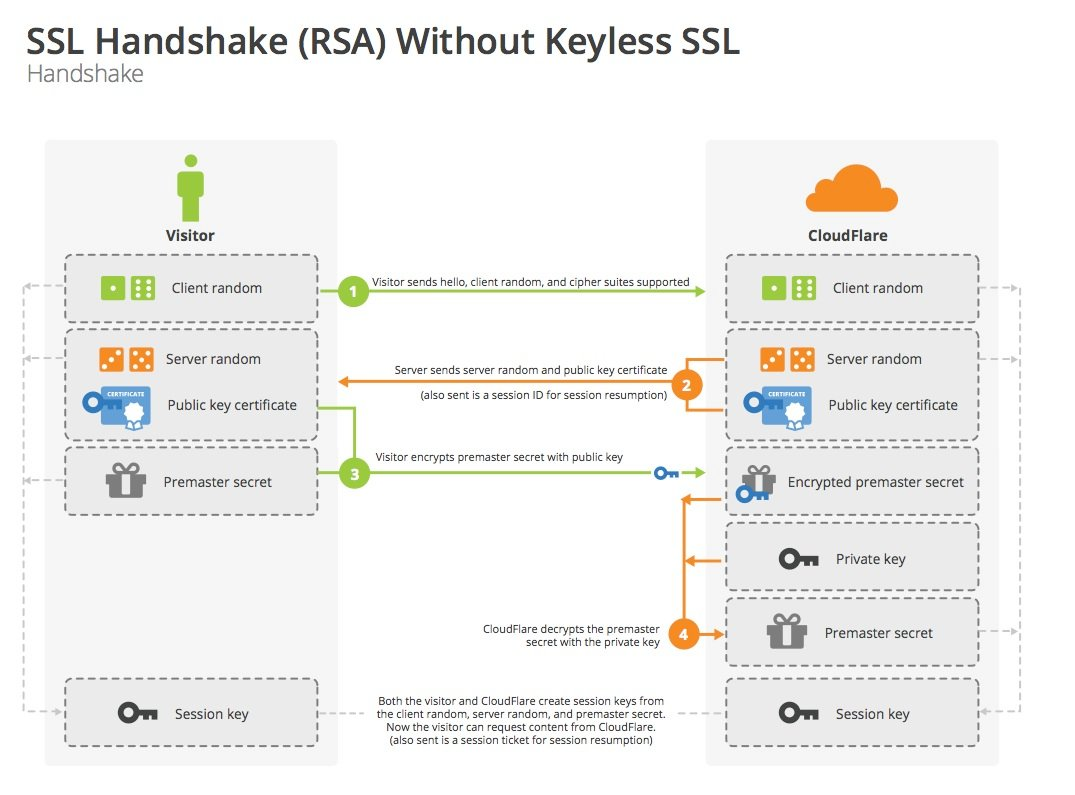

Key Exchange Process - RSA

The key exchange process based on RSA is as follows.

Let’s simulate the negotiation process.

- Client: Hi, server, I support these algorithms on my side, and here’s my random number for this time.

- Server side: Okay, let’s see, let’s go with this algorithm suite. This is my random number for this time, and this is my certificate; use the public key in this certificate to encrypt the pre-master key.

- Client: Wait a minute, let me check the certificate. Well, it’s indeed the server’s certificate. This is the pre-master key encrypted with the public key in the certificate. (Use the two random numbers + the pre-master key to calculate the master key, and then generate the session key.) Okay, OK on my end.

- Server side: Received. (Decrypt the pre-master key with the private key, calculate the master key using the two random numbers + the pre-master key, and then generate the session key.) Okay, OK on my side too.

- Client: This is the encrypted application data….

- Server side: This is the encrypted application data….

Note: the process above is one-way authentication (the server does not verify the client’s identity). If the server also needs to verify the client’s identity, it sends a CertificateRequest message in the first round trip, and the client accordingly sends its own Certificate and CertificateVerify messages to the server in the second round trip.

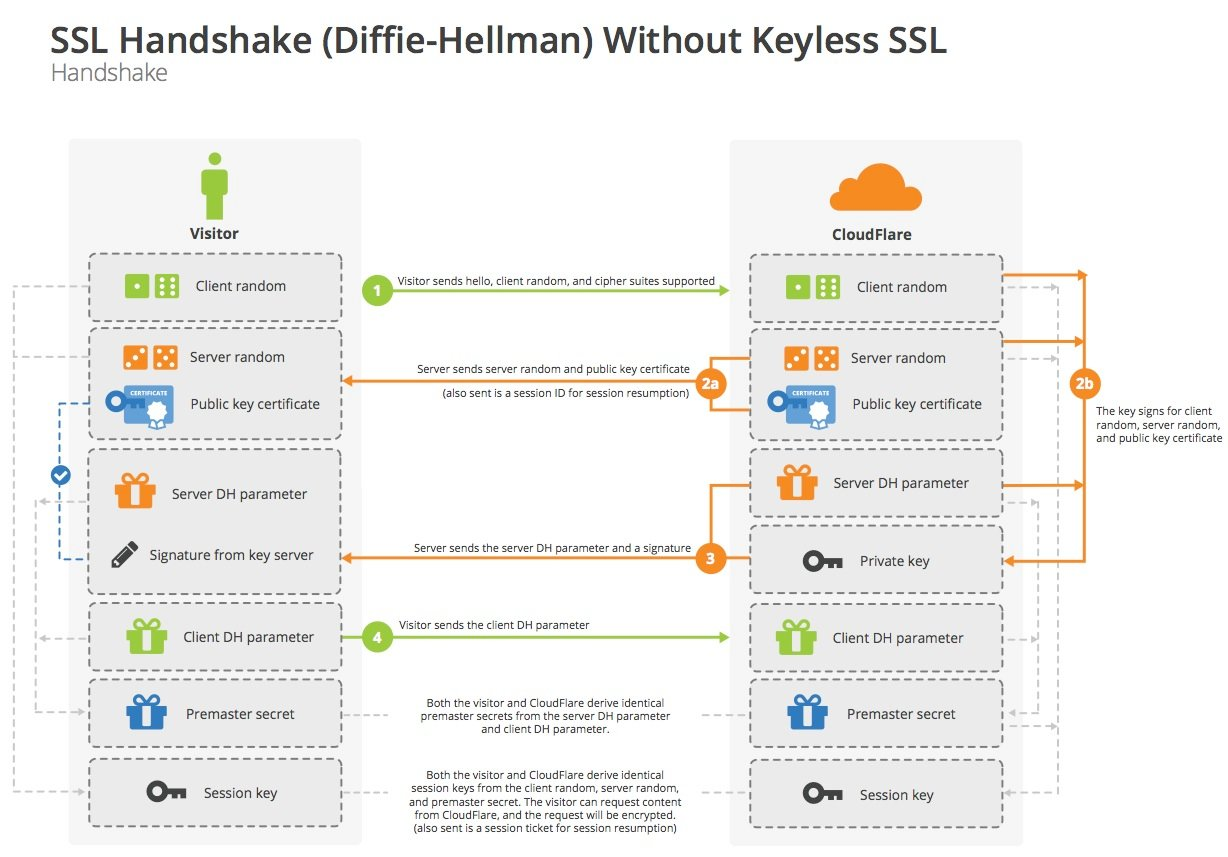

Key exchange process - DH

The DH-based key exchange process is as follows.

Let’s simulate the negotiation process as well.

- Client: Hi, server, I support these algorithms on my side, here’s my random number for this time.

- Server side: Okay, let’s see, let’s go with this algorithm suite. This is my random number for this time, and here is my certificate. I’ll use this DH parameter on my side, and this is the corresponding signature.

- Client: Wait a minute, I’ll check the certificate and signature. Well, it’s indeed the server’s certificate, and the signature is fine. This is the DH parameter on my side. (Use the DH parameters to derive the pre-master key, then use the two random numbers + the pre-master key to calculate the master key, and then generate the session key.) Okay, OK on my side.

- Server side: Received. (Use the DH parameters to derive the pre-master key, then use the two random numbers + the pre-master key to calculate the master key, and then generate the session key.) Okay, OK on my side too.

- Client: This is the encrypted application data….

- Server side: This is the encrypted application data….

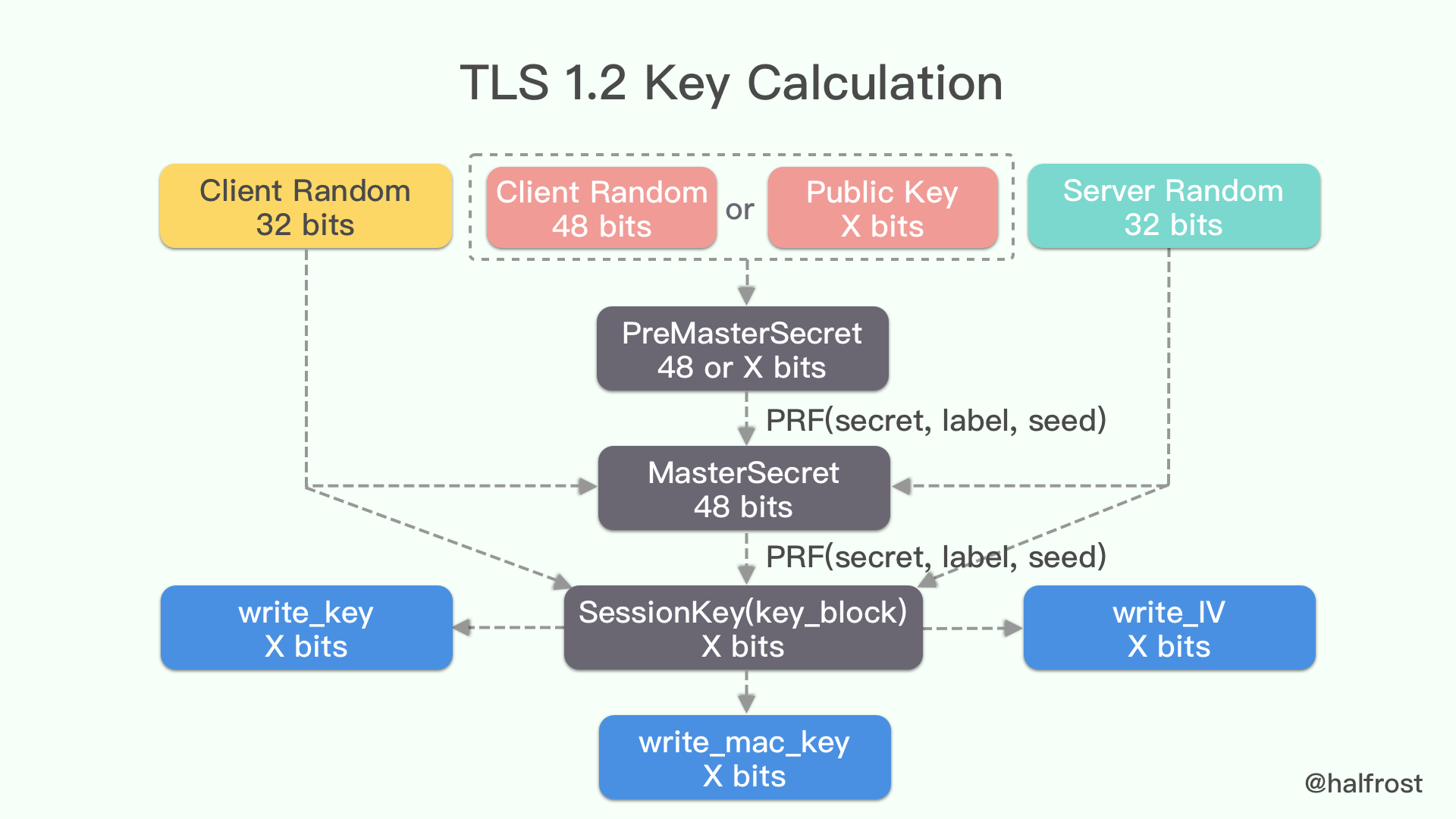

Key generation

Through the ClientHello and ServerHello exchange and the key exchange process, both the client and the server obtain the client random number, the server random number and the pre-master key. The next step is to use these three values to calculate the master key.

Once the master key is obtained, it is expanded into a sufficiently long sequence of pseudorandom bytes (the key block).

The key block is then sliced into the MAC keys, the symmetric encryption keys and the IVs.
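The three steps above can be sketched with the TLS 1.2 PRF from RFC 5246, which is built on HMAC-SHA256. The random inputs are generated locally instead of coming from a real handshake, and the key lengths (32-byte MAC keys, 16-byte encryption keys, 16-byte IVs) are illustrative assumptions; the real lengths depend on the negotiated suite.

```python
import hashlib
import hmac
import os


def p_sha256(secret, seed, length):
    """P_hash expansion from RFC 5246, section 5, with HMAC-SHA256."""
    out, a = b"", seed                                    # A(0) = seed
    while len(out) < length:
        a = hmac.new(secret, a, hashlib.sha256).digest()  # A(i) = HMAC(secret, A(i-1))
        out += hmac.new(secret, a + seed, hashlib.sha256).digest()
    return out[:length]


def prf(secret, label, seed, length):
    return p_sha256(secret, label + seed, length)


def take(buf, sizes):
    """Slice `buf` into consecutive pieces of the given sizes."""
    parts, pos = [], 0
    for size in sizes:
        parts.append(buf[pos:pos + size])
        pos += size
    return parts


# Stand-ins for the values both sides hold after the key exchange.
client_random = os.urandom(32)
server_random = os.urandom(32)
pre_master_secret = os.urandom(48)

# Step 1: master key from the pre-master key and both random numbers.
master_secret = prf(pre_master_secret, b"master secret",
                    client_random + server_random, 48)

# Step 2: expand the master key into the key block.
MAC_LEN, KEY_LEN, IV_LEN = 32, 16, 16                     # assumed sizes
sizes = [MAC_LEN, MAC_LEN, KEY_LEN, KEY_LEN, IV_LEN, IV_LEN]
key_block = prf(master_secret, b"key expansion",
                server_random + client_random, sum(sizes))

# Step 3: slice the key block, client-side key first in each pair.
(client_mac_key, server_mac_key,
 client_enc_key, server_enc_key,
 client_iv, server_iv) = take(key_block, sizes)
```

Because both sides feed the same three inputs into the same deterministic function, they arrive at identical keys without ever sending those keys over the network.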


If you draw a diagram, it looks like the following.

Session Reuse

The SSL handshake adds two extra round trips and CPU-intensive cryptographic operations to every connection. Is there any way to reduce this cost? Improving hardware and software performance obviously helps, but SSL also addresses the problem at the protocol level with a “session reuse” feature. After an SSL connection has been established, both parties can save the SSL session. Reusing a session requires only one round trip of the SSL handshake and no authentication or key exchange, which significantly reduces latency and computational overhead.

In fact, a browser that opens multiple connections to the same site typically waits for the first SSL handshake to complete before initiating the others, so that the other connections can reuse the session.

There are two mechanisms for session reuse: Session IDs and Session Tickets.

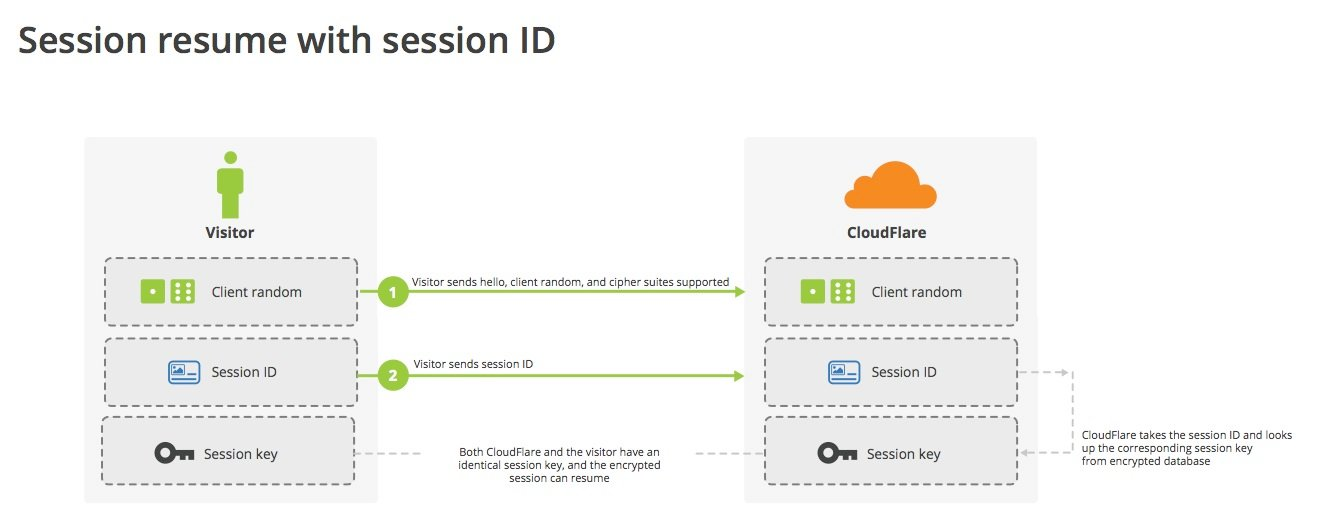

Session IDs

Let’s look at session reuse with Session IDs. As the flowcharts of the key exchange above show, the server sends the Session ID of the session to the client in the ServerHello message. After the handshake completes, the server saves the session.

The client can later restore the session when establishing an SSL connection by including the Session ID in its ClientHello message. The server looks up the session by Session ID and, if everything is in order, restores the session key and other information from the saved session. If the server does not support session reuse, the Session ID is not found, or the session has expired, the handshake falls back to a full SSL handshake.
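The server-side lookup can be sketched as a small in-memory cache. The class `SessionCache`, the five-minute lifetime and the explicit `now` parameter (there to make the behavior easy to follow) are assumptions for illustration, not how any particular TLS library implements it.

```python
import time

SESSION_LIFETIME = 300.0   # seconds; assumed value


class SessionCache:
    """Server-side store that maps a Session ID to the saved session state."""

    def __init__(self):
        self._sessions = {}

    def store(self, session_id, master_secret, now=None):
        now = time.time() if now is None else now
        self._sessions[session_id] = (master_secret, now + SESSION_LIFETIME)

    def resume(self, session_id, now=None):
        """Return the saved master secret, or None to signal a full handshake."""
        now = time.time() if now is None else now
        entry = self._sessions.get(session_id)
        if entry is None:
            return None                      # unknown Session ID
        master_secret, expires_at = entry
        if now >= expires_at:
            del self._sessions[session_id]   # expired: evict and fall back
            return None
        return master_secret


cache = SessionCache()
cache.store(b"session-1", b"saved master secret", now=0.0)

resumed = cache.resume(b"session-1", now=10.0)     # found: abbreviated handshake
unknown = cache.resume(b"session-2", now=10.0)     # not found: full handshake
expired = cache.resume(b"session-1", now=400.0)    # too old: full handshake
```

The dictionary held by the server is exactly the per-client state that makes this approach costly on busy or multi-server sites.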

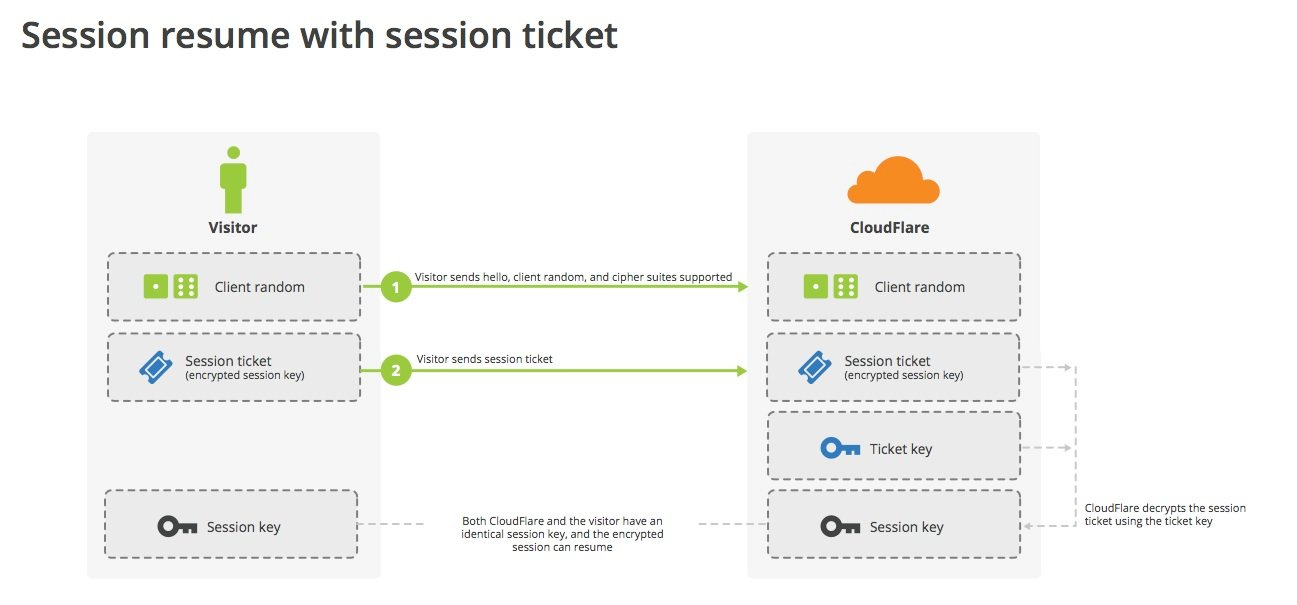

Session Ticket

The Session ID mechanism requires the server to keep a session cache for every client, which causes several problems on the server side: additional memory overhead, the need for session retention and eviction policies, and, for sites with multiple servers, the challenge of sharing the session cache efficiently.

The Session Ticket mechanism was proposed to solve these problems. If the client indicates that it supports session tickets, the server sends it a NewSessionTicket message containing the session data, encrypted with a key known only to the server.

The client saves the session ticket and, when it needs to resume the session, includes the ticket in the SessionTicket extension of the ClientHello message. The server decrypts the session data and resumes the previous session.

For the server, the session ticket mechanism is therefore a stateless form of reuse: the server does not need to keep a session cache, and the problem of synchronizing sessions across servers disappears.

Certificate revocation (blacklisting)

When we introduced certificates, we saw that a certificate has a validity period and fails verification outside of it. But what if we need to invalidate a certificate before it expires, for example when an employee leaves the company or a private key is leaked? A certificate that has been issued cannot be taken back.

This is why certificate revocation was introduced: the CA can revoke a certificate, and verifiers perform an additional blacklist check when validating a certificate. The mechanisms for this check are as follows.

Certificate Revocation List (CRL)

A CRL, or Certificate Revocation List, is a list maintained by the CA that contains information about revoked certificates. The verifying party needs to download this list to perform the blacklist check.

The drawback of CRLs is also obvious: the verifier must download the list, and the downloaded copy may be out of sync with the CA's current list. If a certificate has been revoked but is not yet in the local copy, this poses a security risk.

Online Certificate Status Protocol (OCSP)

OCSP, the Online Certificate Status Protocol, performs the blacklist check through an online query: instead of downloading the whole list, the verifier sends only the certificate's serial number to the CA's OCSP server. Deploying OCSP places demands on the CA, which must run a high-performance server to answer these queries. If that server is down, verifiers typically treat the check as passed, which is a security risk.
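The difference between the two checks, and the "treated as passed when the server is down" behavior, can be sketched as below. This is a simplified model under stated assumptions: `query` is a hypothetical stand-in for the real OCSP network request, and the `hard_fail` switch is an illustrative policy flag rather than an option of any specific library.

```python
from typing import Callable, Set


def revoked_by_crl(serial: int, crl_serials: Set[int]) -> bool:
    """CRL check: look the serial number up in a locally downloaded list."""
    return serial in crl_serials


def accept_with_ocsp(serial: int, query: Callable[[int], str],
                     hard_fail: bool = False) -> bool:
    """OCSP check: ask the responder about one serial number.

    `query` is a hypothetical stand-in for the network request; it returns
    "good" or "revoked", or raises TimeoutError if the responder is down.
    """
    try:
        status = query(serial)
    except TimeoutError:
        # Soft-fail accepts the certificate when no answer arrives;
        # hard-fail rejects it instead.
        return not hard_fail
    return status == "good"
```

With the default soft-fail policy, an attacker who can block traffic to the OCSP server also blocks the revocation check, which is the risk noted above.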

OCSP Stapling

OCSP Stapling is an extension of the OCSP standard whose main goals are better performance and security. The certificate owner itself queries the OCSP server periodically and sends the response along with its certificate during the handshake. The OCSP response is time-stamped and signed directly by the CA, so the certificate owner cannot forge it.

OCSP Stapling improves overall performance: the certificate verifier no longer has to query the CA's server directly, and the load on the CA's OCSP server is reduced.

Server Name Indication (SNI)

When multiple servers are deployed on one site (that is, multiple domains map to one IP address), different servers may need different certificates. The problem is how to know, during the SSL handshake, which host the client wants to reach, so that the matching certificate can be chosen; the HTTP phase has not started yet, so the Host request header is not available.

SNI solves this problem: the client adds the host name to an extension of the ClientHello, so the server knows which host is being accessed and can choose the appropriate certificate.
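To make this concrete, here is a small sketch that encodes and decodes the server_name extension as laid out in RFC 6066 and then picks a certificate by host name. The host names, the file names in `CERTIFICATES`, and the function names are hypothetical; a real server would do this inside its TLS library rather than by hand.

```python
import struct


def build_sni_extension(hostname: str) -> bytes:
    """Encode a server_name extension carrying a single host_name entry."""
    name = hostname.encode("ascii")
    # ServerName: name_type (1 byte, 0 = host_name) + length-prefixed name
    server_name = struct.pack("!BH", 0, len(name)) + name
    # ServerNameList: 2-byte length prefix over all entries
    server_name_list = struct.pack("!H", len(server_name)) + server_name
    # Extension header: type 0 (server_name) + 2-byte body length
    return struct.pack("!HH", 0, len(server_name_list)) + server_name_list


def parse_sni_extension(data: bytes) -> str:
    """Extract the first host_name from a server_name extension."""
    ext_type, ext_len = struct.unpack_from("!HH", data, 0)
    if ext_type != 0 or ext_len != len(data) - 4:
        raise ValueError("not a well-formed server_name extension")
    (list_len,) = struct.unpack_from("!H", data, 4)
    if list_len != ext_len - 2:
        raise ValueError("bad server_name_list length")
    name_type, name_len = struct.unpack_from("!BH", data, 6)
    if name_type != 0 or 9 + name_len > len(data):
        raise ValueError("unsupported or truncated server name")
    return data[9:9 + name_len].decode("ascii")


# Hypothetical mapping from host name to certificate file.
CERTIFICATES = {"a.example": "a.pem", "b.example": "b.pem"}


def select_certificate(extension: bytes, default: str = "default.pem") -> str:
    """Choose the certificate for the host named in the extension."""
    return CERTIFICATES.get(parse_sni_extension(extension), default)
```

Note that in TLS 1.2 and earlier the host name travels in the clear inside the ClientHello, since no keys exist yet at that point.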

GMSSL Protocol Differences

GMSSL is derived from TLS 1.1 and, in general, differs little from the TLS protocol. See GM/T 0024-2014, SSL VPN Technical Specification, for details.

Protocol Number

The protocol numbers of TLSv1.0, TLSv1.1, TLSv1.2, and TLSv1.3 are 0x0301, 0x0302, 0x0303, and 0x0304 respectively.

The version number of GMSSL is 0x0101.
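As a small illustration, the version numbers listed above can be mapped from the 2-byte field found in record and handshake headers. The helper name `version_name` is an assumption made for this sketch.

```python
# Version numbers as listed above; each is a (major, minor) byte pair on the wire.
PROTOCOL_VERSIONS = {
    0x0301: "TLSv1.0",
    0x0302: "TLSv1.1",
    0x0303: "TLSv1.2",
    0x0304: "TLSv1.3",
    0x0101: "GMSSL",
}


def version_name(wire: bytes) -> str:
    """Map a 2-byte version field to a readable name."""
    if len(wire) != 2:
        raise ValueError("version field must be exactly 2 bytes")
    code = int.from_bytes(wire, "big")
    return PROTOCOL_VERSIONS.get(code, f"unknown (0x{code:04x})")
```

For example, `version_name(b"\x01\x01")` identifies a GMSSL peer, while a standard TLS stack that does not know this value would treat it as an unsupported version.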

Algorithm suite

Several algorithm suites are defined, such as ECC_SM4_SM3 and ECDHE_SM4_SM3. ECDHE_SM4_SM3 requires mutual authentication.

The key exchange for ECC_SM4_SM3 resembles RSA key exchange: the client encrypts the pre-master key with the server’s public key and sends it to the server. The key exchange for ECDHE_SM4_SM3 resembles ECDHE key exchange in standard TLS: the pre-master key is derived by both the client and the server. In both suites, authentication is done by SM2 signing and signature verification.

Dual certificate system

Certificate Message

GMSSL uses a dual certificate system: one signing certificate and one encryption certificate. The signing certificate is used for authentication and the encryption certificate for key exchange. A Certificate message must carry both certificates at once. Its format is the same as the standard TLS message format; the first certificate is the signing certificate and the second is the encryption certificate.

ECC_SM4_SM3 key exchange

Because of the dual certificate system, the SSL state machine differs slightly. The key exchange for ECC_SM4_SM3 proceeds as follows: the server sends a Certificate message followed by a ServerKeyExchange message (unlike RSA key exchange, which sends none). The ServerKeyExchange contains a signature computed with the private key of the server’s signing certificate (the signing private key); the signed content covers the random numbers in ClientHello and ServerHello as well as the encryption certificate.

After verifying the certificate and signature, the client encrypts the pre-master key with the public key of the server’s encryption certificate and sends it to the server, which decrypts it with its own encryption private key.

ECDHE_SM4_SM3 key exchange

The key exchange for ECDHE_SM4_SM3 proceeds as follows: after the Certificate message, the server also sends a ServerKeyExchange message containing a signature computed with the signing private key of the server’s signing certificate. The signed content differs from that of ECC_SM4_SM3: it covers the random numbers in ClientHello and ServerHello and the server’s ECDH parameters (curve and public key). The ECDHE key derivation also differs from TLS. TLS needs only the peer’s ephemeral public key and one’s own ephemeral private key, whereas GMSSL needs the peer’s ephemeral public key and fixed public key (the public key in the encryption certificate) together with one’s own ephemeral private key and fixed private key (the encryption private key). GMSSL ECDHE must therefore use mutual authentication, because the server needs the client’s encryption certificate for the key derivation.

After verifying the certificate and signature, the client sends its own certificates to the server. It then generates its own ephemeral key pair from the ECDH parameters in ServerKeyExchange and derives the pre-master key from its ephemeral private key, its encryption private key, and the server’s ephemeral and encryption public keys. The server likewise derives the pre-master key from its own ephemeral private key and encryption private key and the client’s ephemeral and encryption public keys.

Because authentication is mutual, the client must send a CertificateVerify message after the ClientKeyExchange message. It carries a signature over all handshake messages exchanged so far, starting from the ClientHello.

Security

Common Attacks

Some attacks target vulnerabilities in the protocol design, others target implementation bugs, and they are often combined with downgrade attacks. For reasons of space, only two representative attacks, the renegotiation attack and Heartbleed, are covered in detail here; for the rest, see RFC 7457, Summarizing Known Attacks on Transport Layer Security (TLS) and Datagram TLS (DTLS).

renegotiation attacks

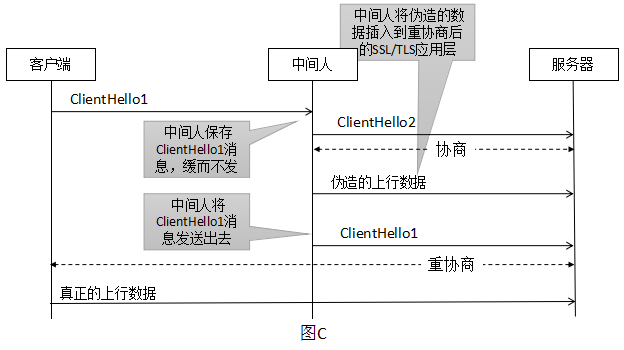

The man-in-the-middle successfully inserts forged data ahead of the user’s real data without hijacking or decrypting the SSL/TLS connection. If he understands the application protocol (e.g. HTTPS), he carefully constructs incomplete data so that the server application believes the request is not yet complete, sets it aside, and waits for the rest to arrive. For example, the attacker first sends a “half” request whose final header line is left unterminated.

Later, the client sends its real request.

The server application splices the two together: the real request line is absorbed into the attacker’s unfinished header and thus neutralized, but the user’s cookie is kept, so the attacker’s request is executed with the user’s cookie. The server believes that the data sent first also came from the real client.
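The splicing can be reproduced with plain strings. The sketch below is purely illustrative: the paths, the `X-Ignore` header name, and the cookie value are invented, and `parse_request` is a toy parser rather than a real HTTP implementation.

```python
# All paths, header names, and cookie values below are made up for illustration.
attacker_prefix = (
    "GET /transfer?to=attacker&amount=1000 HTTP/1.1\r\n"
    "X-Ignore: "            # no trailing CRLF: the header line is left open
)

victim_request = (
    "GET /index.html HTTP/1.1\r\n"
    "Cookie: session=victim-session-id\r\n"
    "\r\n"
)

# The server application sees one continuous byte stream.
spliced = attacker_prefix + victim_request


def parse_request(raw: str):
    """Tiny HTTP/1.1 parser: returns (request_line, headers)."""
    head = raw.split("\r\n\r\n", 1)[0]
    lines = head.split("\r\n")
    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(": ")
        headers[name] = value
    return lines[0], headers


request_line, headers = parse_request(spliced)
```

After parsing, `request_line` is the attacker's request, the victim's own request line has become the value of the throwaway `X-Ignore` header, and the victim's `Cookie` header is attached to the attacker's request.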

This vulnerability arises because what the client considers its first negotiation is treated by the server as a renegotiation, and nothing ties the first negotiation to the renegotiation. The fix is to disable renegotiation or to use secure renegotiation. Secure renegotiation adds a secure renegotiation indication and a check that binds the renegotiation to the first negotiation, so that the man-in-the-middle attack can be detected and rejected.

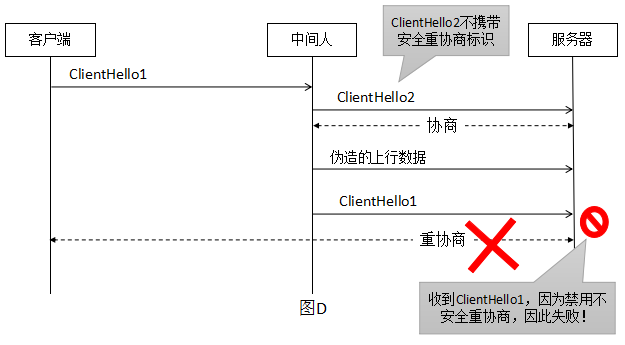

Let’s see how secure renegotiation protects against the earlier attack, first for the case where ClientHello2 carries no secure renegotiation indication.

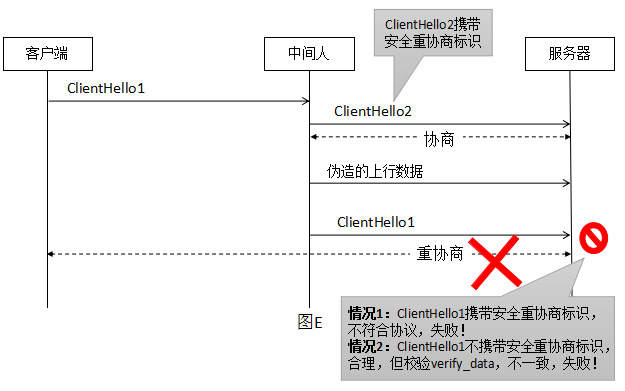

Next, the case where ClientHello2 does carry a secure renegotiation indication.

In either case, the man-in-the-middle cannot get through. The hallmark of secure renegotiation is a new extension, renegotiation_info. Since SSLv3/TLS 1.0 implementations may not support extensions, an alternative is provided: adding TLS_EMPTY_RENEGOTIATION_INFO_SCSV (0x00FF) to the list of algorithm suites. It is not a real algorithm suite and serves only as a signal.

The process of secure renegotiation is as follows.

- During the first SSL handshake when the connection is established, both parties notify each other of their support for secure renegotiation via the renegotiation_info extension or the SCSV suite

- Then after the handshake, both client and server record client_verify_data and server_verify_data in the Finished message respectively

- When renegotiating, client includes client_verify_data in ClientHello and server includes client_verify_data and server_verify_data in ServerHello

For victims, if these data are not carried in the negotiation, the connection cannot be established. And since the Finished message is encrypted, the attacker cannot get the values of client_verify_data and server_verify_data.
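The binding check in these steps can be sketched as follows, loosely following RFC 5746. The names `ConnectionState`, `server_check_client_hello`, and `client_check_server_hello` are assumptions made for this illustration, and the 12-byte values in the usage note are placeholders for real verify_data.

```python
import hmac
from dataclasses import dataclass


@dataclass
class ConnectionState:
    """verify_data values saved from the Finished messages of the last handshake."""
    client_verify_data: bytes = b""
    server_verify_data: bytes = b""

    @property
    def is_initial(self) -> bool:
        return not self.client_verify_data


def server_check_client_hello(state: ConnectionState,
                              renegotiated_connection: bytes) -> bool:
    """Server side: validate renegotiation_info from a ClientHello."""
    if state.is_initial:
        # First handshake on this connection: the field must be empty.
        return renegotiated_connection == b""
    # Renegotiation: must equal the client_verify_data we recorded.
    return hmac.compare_digest(renegotiated_connection,
                               state.client_verify_data)


def client_check_server_hello(state: ConnectionState,
                              renegotiated_connection: bytes) -> bool:
    """Client side: validate renegotiation_info from a ServerHello."""
    if state.is_initial:
        return renegotiated_connection == b""
    expected = state.client_verify_data + state.server_verify_data
    return hmac.compare_digest(renegotiated_connection, expected)
```

In the attack scenario, the victim's ClientHello carries an empty value (it believes this is its first handshake) while the server already holds state from the attacker's handshake, so `server_check_client_hello(ConnectionState(b"C" * 12, b"S" * 12), b"")` returns `False` and the handshake is aborted.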

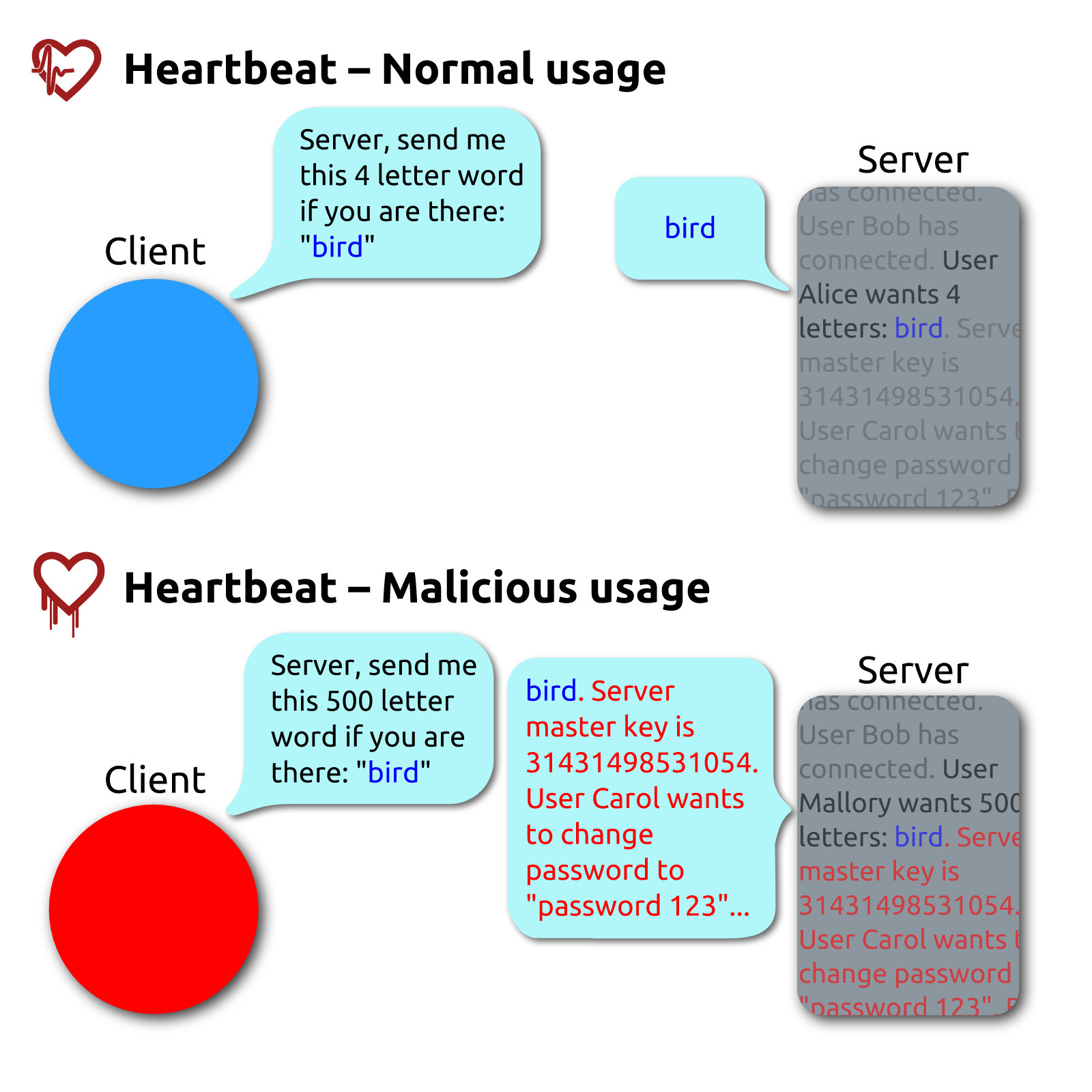

Heartbleed

Heartbleed is a bug in the implementation of the OpenSSL library, not in the TLS protocol itself. OpenSSL’s implementation of the TLS heartbeat extension, from which the bug takes its name, did not validate its input properly: lacking a bounds check, it read back more data than it should have been allowed to.
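The missing bounds check can be imitated with a byte string standing in for process memory. This is only a model of the idea, not OpenSSL's code; `MEMORY`, its contents, and both function names are invented for the example.

```python
# Simulated process memory: the 4-byte heartbeat payload followed by
# unrelated data that happens to sit next to it (values are made up).
MEMORY = b"ping" + b"user=alice;password=hunter2;"


def heartbeat_vulnerable(claimed_length: int) -> bytes:
    """Echo back `claimed_length` bytes without checking the real payload size."""
    return MEMORY[:claimed_length]


def heartbeat_fixed(claimed_length: int, actual_payload_length: int) -> bytes:
    """Discard the request if the claimed length exceeds the received payload."""
    if claimed_length > actual_payload_length:
        return b""          # bounds check failed: silently drop
    return MEMORY[:claimed_length]
```

A request that sends 4 bytes but claims 32 makes `heartbeat_vulnerable(32)` return the neighboring data as well, while `heartbeat_fixed(32, 4)` returns nothing.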

The bug has had a very widespread impact, with surveys showing that many sites remained exposed years after the vulnerability was announced. It is a warning that even if the protocol is secure, the implementation can still introduce security problems. Security is like a barrel: the overall level depends on the shortest stave.

CRIME and BREACH attacks

Both attacks exploit compression: by varying the request content and comparing the length of the compressed ciphertext, the attacker can recover certain secret information.

CRIME recovers session cookies by running JavaScript code in the victim’s browser while observing the HTTPS traffic; it mainly targets TLS compression.

The JavaScript code tries to guess the cookie value one character at a time. The man-in-the-middle component observes the ciphertext of each guessing request and response and looks for length differences; once it finds one, it notifies the JavaScript, which moves on to the next character.

BREACH is an upgraded version of CRIME. The attack method is the same, except that BREACH exploits HTTP compression rather than SSL/TLS compression. The direct defense against BREACH is therefore to disable HTTP compression.
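The length side channel behind both attacks can be observed with `zlib` alone. The sketch below is deliberately simplified: it compares one fully correct guess with one wrong guess instead of recovering the secret character by character, and the cookie value and request layout are made up.

```python
import zlib

# Made-up secret that the victim's browser attaches to every request.
SECRET_COOKIE = "Cookie: token=s3cr3tv4lu3"


def observed_length(attacker_controlled: str) -> int:
    """Length of the compressed request, which an eavesdropper can still
    estimate from the size of the ciphertext."""
    request = f"GET /?q={attacker_controlled} HTTP/1.1\r\n{SECRET_COOKIE}\r\n\r\n"
    return len(zlib.compress(request.encode("ascii")))


# Two guesses of equal length: one matches the secret, one does not.
right = observed_length("token=s3cr3tv4lu3")
wrong = observed_length("token=QWXZJKPLMNB")
```

Because the correct guess duplicates the cookie text, the compressor replaces the second copy with a back-reference and `right` comes out smaller than `wrong`; encryption hides the content but not this difference in size.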

BEAST attack

Prior to TLS 1.1, the IV of each CBC record was taken directly from the ciphertext of the previous record. The BEAST attack exploits this: the attacker makes the victim send a large number of requests and uses the predictable IVs to guess secret information. The solution is to deploy TLS 1.1 or higher.

RC4 attack

This attack targets weaknesses in the RC4 algorithm itself. RC4 is now considered insecure and should be disabled.

POODLE Attack

POODLE is an SSL 3.0 design vulnerability: SSL 3.0 uses nondeterministic CBC padding, which makes it easier for a man-in-the-middle attacker to obtain plaintext data via a padding-oracle attack.

Downgrade attack (version fallback attack)

The attacker tricks the server into using an older, insecure version of the TLS protocol; this is often combined with other attacks. Removing backward compatibility is usually the only way to prevent downgrade attacks.

Forward security

Without forward security, a leaked private key affects not only future sessions but all past sessions as well. A patient attacker can save intercepted traffic now and, once the private key is leaked or cracked, decrypt all of the earlier ciphertexts. This is called “intercept today, crack tomorrow”.

TLS achieves forward security by generating session keys through an ephemeral DH key exchange. With a fresh key for every session, even an attacker who goes to great lengths to crack one session key compromises only that session; earlier traffic is unaffected. We already discussed this in part 1.

But even with ephemeral DH key exchange, the server’s session management affects forward security. As discussed in the section on session reuse, the protection of a session ticket depends entirely on symmetric encryption, so a long-lived session ticket key undermines forward security.

In practice, algorithm suites based on ephemeral DH key exchange should be preferred, session lifetimes should not be set too long, and the session ticket key should be rotated frequently.
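As one possible way to apply these recommendations, together with the earlier advice on downgrade and compression attacks, the sketch below configures a client context with Python's standard `ssl` module. The function name and the exact cipher string are illustrative choices, not the only reasonable ones, and session lifetime and ticket key rotation are server-side settings that this client example does not cover.

```python
import ssl


def make_hardened_client_context() -> ssl.SSLContext:
    """Client context reflecting the recommendations above (one possible choice)."""
    ctx = ssl.create_default_context()
    # Refuse SSL 3.0, TLS 1.0 and TLS 1.1 outright, so a downgrade has nowhere to land.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Disable TLS-level compression (the CRIME vector).
    ctx.options |= ssl.OP_NO_COMPRESSION
    # For TLS 1.2, allow only ephemeral ECDHE key exchange with AEAD ciphers.
    # TLS 1.3 suites are configured separately by OpenSSL and stay enabled.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx
```

Calling `make_hardened_client_context().get_ciphers()` lists the suites that remain enabled, which is a quick way to confirm that no static RSA key exchange is left.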

TLS 1.3 New Features

TLS 1.3 is a huge change from TLS 1.2, with the main goals being maximum compatibility, enhanced security, and improved performance.

The following are the major differences compared to TLS 1.2.

- Symmetric encryption algorithm retains only the AEAD class of algorithms, separating the key exchange and authentication algorithms from the concept of algorithm suites

- 0-RTT mode has been added

- Removed static RSA and DH key negotiation (all public key-based key exchanges now provide forward security)

- All handshake messages after ServerHello are now encrypted

- Key derivation function redesigned, KDF replaced with standard HKDF

- The handshake state machine has been significantly refactored, cutting out redundant messages such as ChangeCipherSpec

- Use a unified PSK model, replacing the previous Session Resumption (including Session ID and Session Ticket) and the earlier TLS version of the PSK-based (rfc4279) algorithm suite

A few key features are introduced here

Key Exchange Modes

TLS 1.3 proposes 3 modes of key exchange.

- (EC)DHE

- PSK-only (pre-shared symmetric key)

- PSK with (EC)DHE: a combination of the first two, with forward security

1-RTT handshake

As mentioned earlier, the full TLS 1.2 handshake takes 2 RTTs: the first is ClientHello/ServerHello and the second is ServerKeyExchange/ClientKeyExchange. Two RTTs are needed because TLS 1.2 supports a variety of key exchange algorithms with different parameters, all of which must be negotiated in the first RTT. TLS 1.3 leaves only a few key exchange options, such as ECDH with P-256 or X25519. So the client simply guesses which one the server will use (for example, by caching what the server used last time) and merges its key exchange data directly into the first RTT. If the server finds that the client guessed wrong, it tells the client the correct choice and lets it retry (this is what the HelloRetryRequest message is for). This has essentially no side effects, and the handshake is down to 1-RTT.
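The guess-and-retry logic can be sketched from the server's point of view. This is a simplified model: `SERVER_GROUPS`, the function name, and the string return values are assumptions for illustration, and real servers may apply more nuanced preference rules.

```python
from typing import Dict, List, Tuple

# Groups this hypothetical server supports, in preference order.
SERVER_GROUPS = ["x25519", "secp256r1"]


def server_respond(client_supported_groups: List[str],
                   client_key_shares: Dict[str, bytes]) -> Tuple[str, str]:
    """Return (message, group) for a ClientHello.

    `client_key_shares` maps each group the client guessed to its public key.
    """
    for group in SERVER_GROUPS:
        if group in client_key_shares:
            # The guess was usable: finish the key exchange in one round trip.
            return "ServerHello", group
    for group in SERVER_GROUPS:
        if group in client_supported_groups:
            # A mutually supported group exists but no share was sent for it:
            # name the group and ask the client to retry.
            return "HelloRetryRequest", group
    return "alert: handshake_failure", ""
```

A client that guessed `x25519` gets a `ServerHello` immediately; one that only sent a share for an unsupported group but lists `secp256r1` as supported gets a `HelloRetryRequest` and pays one extra round trip.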

The full handshake flow for TLS 1.3 is as follows.

[Figure: full TLS 1.3 handshake message flow]

The handshake process can be divided into three phases.

- Key exchange: Shared key material is established and encryption parameters are selected. All messages after this phase are encrypted.

- Server-side parameters: Establish other handshake parameters, such as whether the client must authenticate and which application-layer protocols are supported

- Authentication: Authenticate the server (and optionally the client), and provide key confirmation and handshake integrity

Reuse and PSK

PSKs for TLS can be established directly out-of-band or through the session of a previous connection. Once a handshake completes, the server can send the client a PSK identity corresponding to a key derived from the initial handshake. (This corresponds to the Session ID and Session Ticket of TLS 1.2 and earlier, both of which are obsoleted in TLS 1.3.)

PSK can be used alone or in combination with (EC)DHE key exchange to provide forward security.

The reuse and PSK handshake flow is as follows.

[Figure: TLS 1.3 session reuse and PSK handshake message flow]

In this case the server’s identity is authenticated by the PSK, so the server does not send Certificate and CertificateVerify messages. When the client proposes reuse via PSK, it should also provide the key_share extension so that the server can fall back to a full handshake if it rejects the reuse.

0-RTT handshake with side effects

When the client and server share a PSK (either obtained externally or through the preceding handshake), TLS 1.3 allows the client to send data (early data) on the first flight. The client uses the PSK to authenticate the server and encrypt the early data.

The 0-RTT handshake flow is as follows. Compared with the 1-RTT handshake with PSK reuse, it adds the early_data extension and 0-RTT application data to the first flight. After receiving the server’s Finished message, the client sends an EndOfEarlyData message to indicate that the encryption key changes from that point on.

[Figure: TLS 1.3 0-RTT handshake message flow]

The data security of 0-RTT is weak.

- 0-RTT data has no forward security because its encryption key is purely derived from PSK

- Application data in 0-RTT can be replayed across connections (regular TLS 1.3 1-RTT data is replay-proof via random numbers on the server side)

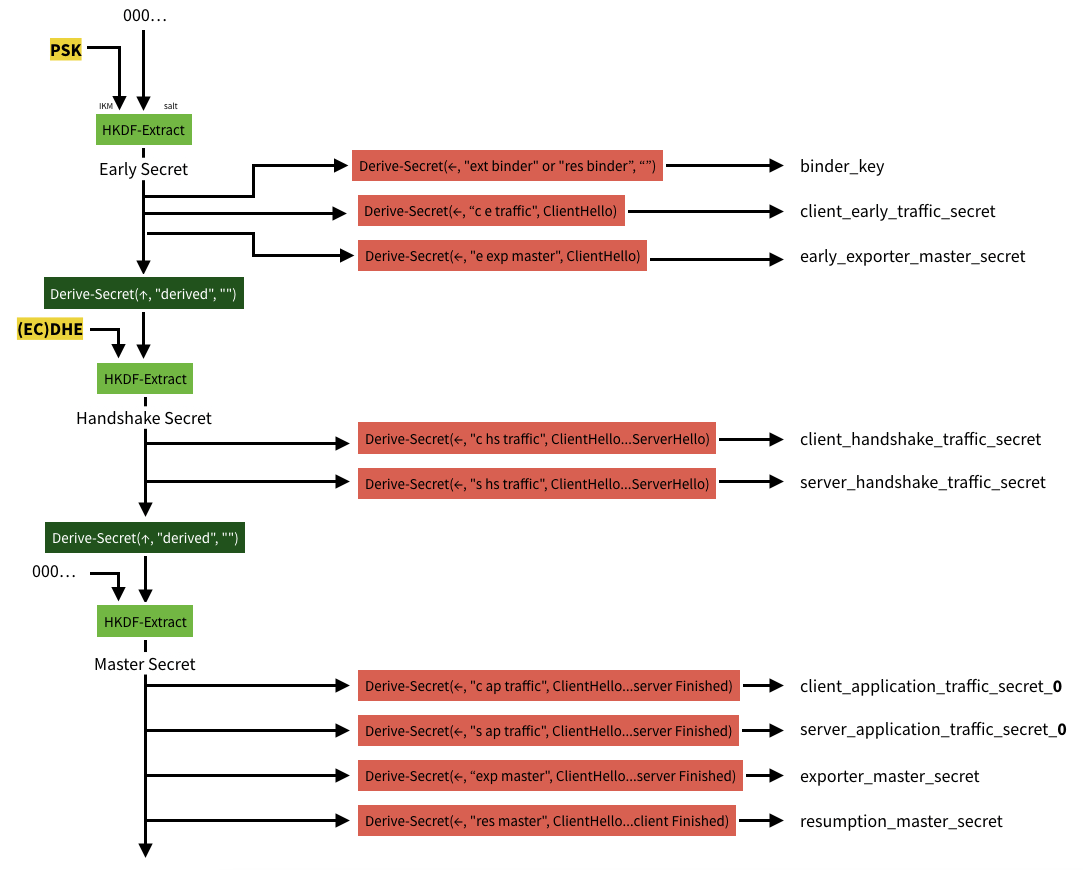

Key derivation process

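The key derivation process uses the HKDF-Extract and HKDF-Expand functions, together with two helper functions built on them, HKDF-Expand-Label and Derive-Secret. HkdfLabel, the info input that HKDF-Expand-Label passes to HKDF-Expand, is a structure made up of the output length, the label prefixed with "tls13 ", and a context value.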

Whichever key exchange mode is used, the whole derivation process is carried out; when an input keying material (IKM) is absent, its place is taken by a string of zeros of hash length. For example, if there is no PSK, Early Secret is HKDF-Extract(0, 0).
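The functions involved can be sketched with the standard library alone, following the definitions in RFC 5869 and RFC 8446 section 7.1. The sketch assumes SHA-256 as the negotiated hash; the Python function names are this example's own, and only the first step of the key schedule is shown.

```python
import hashlib
import hmac

HASH = hashlib.sha256
HASH_LEN = HASH().digest_size


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869): PRK = HMAC-Hash(salt, IKM)."""
    return hmac.new(salt, ikm, HASH).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand (RFC 5869)."""
    output, block, counter = b"", b"", 1
    while len(output) < length:
        block = hmac.new(prk, block + info + bytes([counter]), HASH).digest()
        output += block
        counter += 1
    return output[:length]


def hkdf_label(label: str, context: bytes, length: int) -> bytes:
    """Serialize the HkdfLabel structure from RFC 8446, section 7.1."""
    full_label = b"tls13 " + label.encode("ascii")
    return (length.to_bytes(2, "big")
            + bytes([len(full_label)]) + full_label
            + bytes([len(context)]) + context)


def hkdf_expand_label(secret: bytes, label: str, context: bytes, length: int) -> bytes:
    """HKDF-Expand-Label: HKDF-Expand with HkdfLabel as the info input."""
    return hkdf_expand(secret, hkdf_label(label, context, length), length)


def derive_secret(secret: bytes, label: str, messages: bytes) -> bytes:
    """Derive-Secret: the context is the transcript hash of the messages so far."""
    return hkdf_expand_label(secret, label, HASH(messages).digest(), HASH_LEN)


ZEROS = bytes(HASH_LEN)
# No PSK: both inputs are zero strings of hash length.
early_secret = hkdf_extract(ZEROS, ZEROS)
# Salt for the next Extract step, which mixes in the (EC)DHE shared secret.
derived = derive_secret(early_secret, "derived", b"")
```

The remaining stages repeat the same pattern: extract with the previous `derived` value as salt and the next input (the (EC)DHE secret, then zeros) as IKM, then call `derive_secret` with the label of each traffic secret.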

Here exporter_secret is the export key for other user-defined purposes; resumption_master_secret is used to generate tickets; client_early_traffic_secret is used to derive the early-data key for 0-RTT; *_handshake_traffic_secret is used to derive the encryption keys for handshake messages; and *_application_traffic_secret_N is used to derive the encryption keys for application messages.

Common Implementations

OpenSSL: very popular open source implementation, largest amount of code, worst written?

LibreSSL: a fork of OpenSSL, maintained by the OpenBSD project

BoringSSL: also a fork of OpenSSL, mainly used in Google’s Chrome/Chromium, Android and other applications

JSSE (Java Secure Socket Extension): Java implementation

NSS: a library originally developed by Netscape, now mainly used by browsers and client software, for example Firefox uses the NSS library (developed by Mozilla).

crypto/tls: Go language implementation, part of the Go standard library