Why Federation v1 was deprecated

Federation v1 entered Beta in Kubernetes v1.6, but by around Kubernetes v1.11 it had been deprecated. The development team found cluster federation harder in practice than expected, and the v1 architecture did not account for a number of issues, such as:

- Problems in the control plane components could degrade the efficiency of the whole federation.

- New Kubernetes API resources were not supported.

- Permissions could not be managed effectively across multiple clusters; for example, RBAC was not supported.

- Federation-level settings and policies relied on annotations on API resources, which made them brittle.

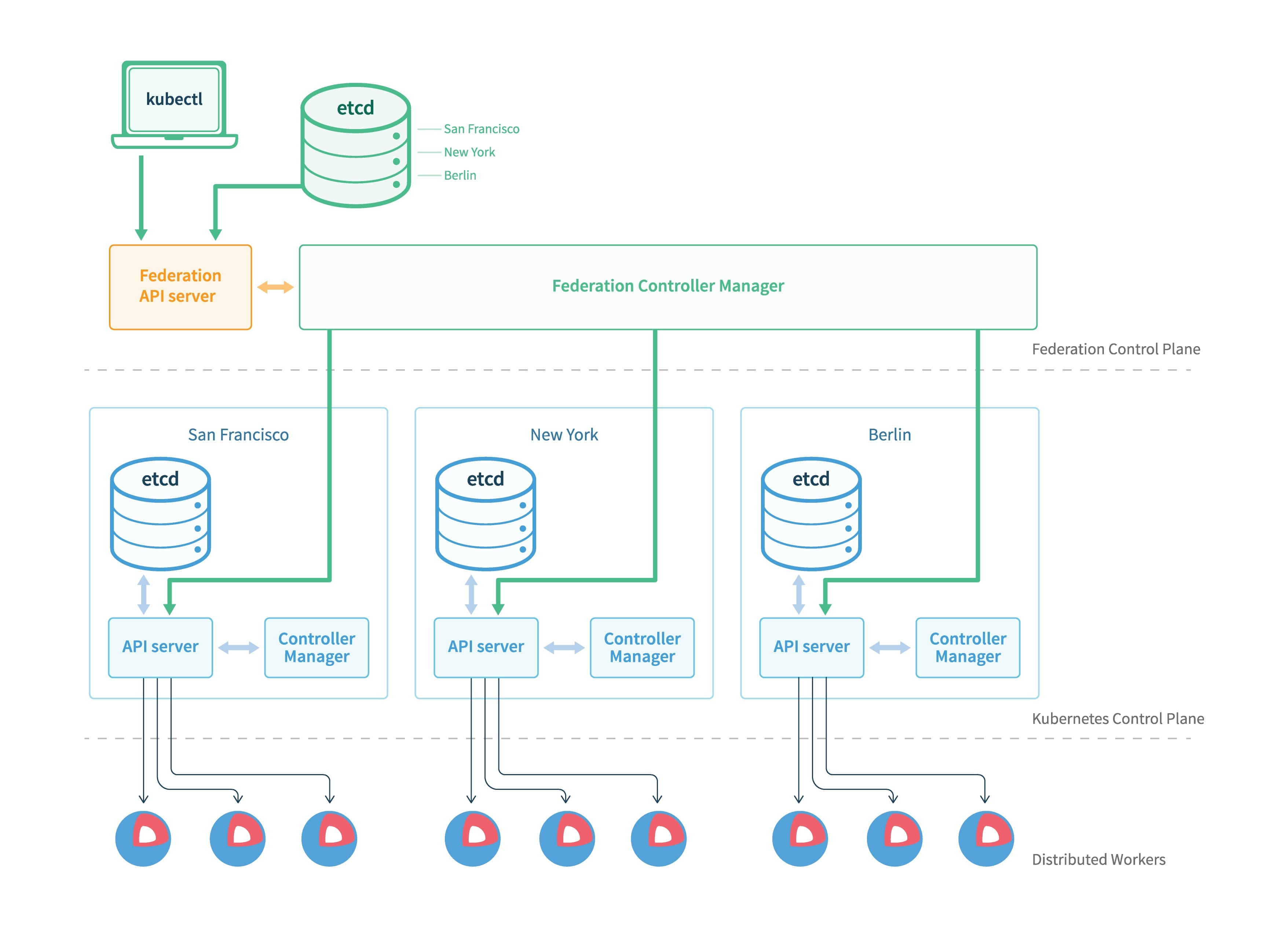

In terms of architecture, Federation v1 consists mainly of an API Server, a Controller Manager, and etcd as external storage.

The Federation API Server largely replicates kube-apiserver and provides a single entry point for resource management, but the set of supported Kubernetes resources can only be extended through Adapters.

The Controller Manager coordinates state across clusters and manages all member clusters by communicating with each member cluster's API Server.

The overall architecture of Federation v1 resembles that of Kubernetes itself, with member clusters managed as a resource. However, v1 was not designed to accommodate new Kubernetes resources and CRDs flexibly, so a new Adapter had to be written for every new resource type.
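To make the Adapter limitation concrete, here is a minimal Python sketch of a per-type adapter registry. It is not Federation v1 source code: the class names, the `federate` function, and the `targetCluster` field are all hypothetical and exist only to show why an unregistered resource type cannot be handled until someone writes a new adapter.

```python
# Illustrative only: every name here is hypothetical, not Federation v1 code.

class Adapter:
    """Base adapter: prepares one resource kind for a member cluster."""
    kind = None

    def to_cluster_object(self, obj, cluster):
        out = dict(obj)                 # shallow copy of the resource
        out["targetCluster"] = cluster  # hypothetical marker field
        return out


class DeploymentAdapter(Adapter):
    kind = "Deployment"


class ConfigMapAdapter(Adapter):
    kind = "ConfigMap"


# Only kinds with a hand-written adapter are known to the control plane.
ADAPTERS = {a.kind: a for a in (DeploymentAdapter(), ConfigMapAdapter())}


def federate(obj, clusters):
    """Return one per-cluster object for each cluster, if the kind is supported."""
    adapter = ADAPTERS.get(obj["kind"])
    if adapter is None:
        # A new resource type or CRD ends up here until an adapter is added.
        raise NotImplementedError(f"no adapter registered for kind {obj['kind']!r}")
    return [adapter.to_cluster_object(obj, c) for c in clusters]
```

In this sketch a `Deployment` is accepted, while any custom kind raises `NotImplementedError`, which mirrors the maintenance burden described above.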

The original resource design was very inflexible, and the lack of RBAC support made it impossible to manage permissions for resources across multiple clusters. As a result, v1 was abandoned, but it left valuable lessons for v2.

Federation v2 Design

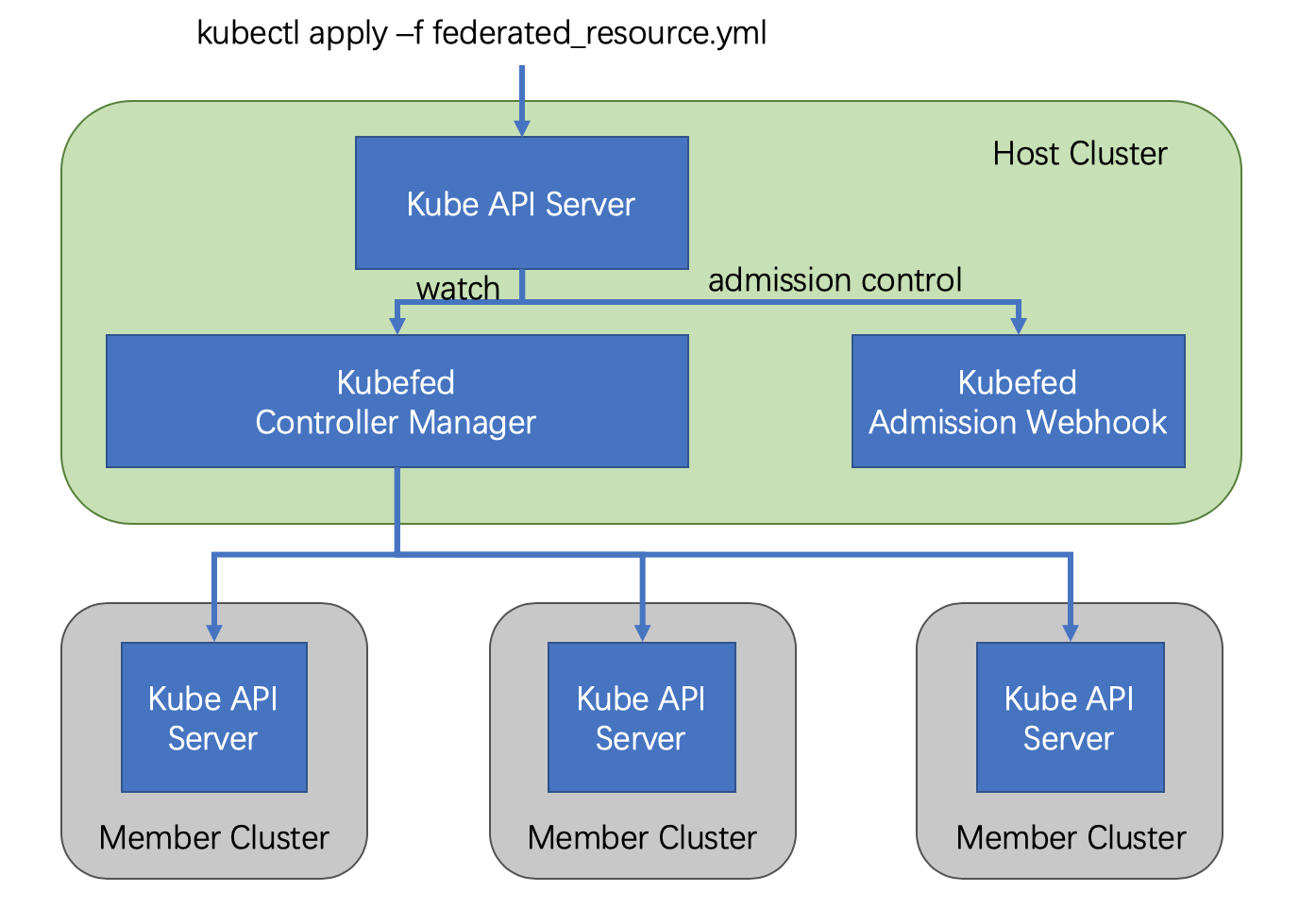

Federation v2 implements its functionality with CRDs, defining multiple custom resources (CRs). This removes the need for the v1 API Server, but introduces the concept of a Host Cluster.

Basic concepts

- Federate: Federating a group of Kubernetes clusters links them together and provides a common interface for cross-cluster deployment and access.

- KubeFed: Kubernetes Cluster Federation, which provides users with cross-cluster resource distribution, service discovery, and high availability.

- Host Cluster: The cluster that exposes the KubeFed API and runs the KubeFed control plane.

- Cluster Registration: Enables member clusters to join the host cluster via kubefedctl join.

- Member Cluster: A cluster registered as a member through the KubeFed API and managed by KubeFed. A Host Cluster can also be a Member Cluster.

- ServiceDNSRecord: Records Kubernetes Service information and makes it accessible across clusters via DNS

- IngressDNSRecord: Records Kubernetes Ingress information and makes it accessible across clusters via DNS

- DNSEndpoint: A custom resource that records Endpoint information (of ServiceDNSRecord/IngressDNSRecord)

Architecture

Although the design of Federation v2 changed significantly and the API Server was removed, the overall architecture did not change much. Once deployed, the Federation Control Plane consists of two components:

admission-webhook provides access control, and controller-manager handles custom resources and coordinates state across clusters.

How it works

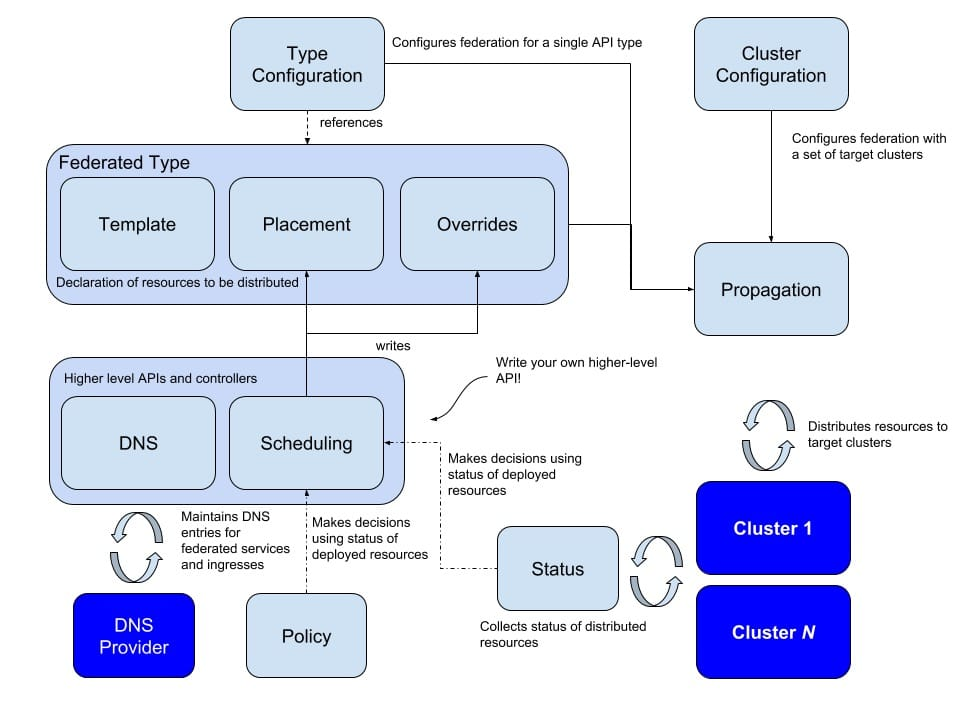

In terms of logic, Federation v2 is divided into two main parts: configuration and propagation.

The design of configuration clearly learns from v1: much of what is likely to change is now expressed as configuration. It contains two main kinds of configuration:

- Type configuration: describes the resource types that the federation will manage.

- Cluster configuration: stores the API authentication information of the federated clusters.
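As a rough illustration, the two kinds of configuration can be written as Python dictionaries shaped like KubeFed custom resources. The kinds `FederatedTypeConfig` and `KubeFedCluster` and their field names are given here as recalled from the KubeFed project and should be checked against the CRDs of the installed version. The cluster name, endpoint URL, secret name, and the `propagated_kinds` helper are invented for this example.

```python
# Sketch only: field names are assumed to follow the KubeFed CRDs;
# concrete values (names, URL, secret) are made up for illustration.

# Type configuration: tells the federation which resource type to manage.
federated_type_config = {
    "apiVersion": "core.kubefed.io/v1beta1",
    "kind": "FederatedTypeConfig",
    "metadata": {"name": "deployments.apps", "namespace": "kube-federation-system"},
    "spec": {
        "propagation": "Enabled",
        "targetType": {
            "group": "apps", "version": "v1", "kind": "Deployment",
            "pluralName": "deployments", "scope": "Namespaced",
        },
        "federatedType": {
            "group": "types.kubefed.io", "version": "v1beta1",
            "kind": "FederatedDeployment",
            "pluralName": "federateddeployments", "scope": "Namespaced",
        },
    },
}

# Cluster configuration: where a member cluster's API lives and which
# secret holds the credentials used to reach it.
kubefed_cluster = {
    "apiVersion": "core.kubefed.io/v1beta1",
    "kind": "KubeFedCluster",
    "metadata": {"name": "cluster1", "namespace": "kube-federation-system"},
    "spec": {
        "apiEndpoint": "https://cluster1.example.com:6443",
        "secretRef": {"name": "cluster1-credentials"},
    },
}


def propagated_kinds(type_configs):
    """Map each enabled target kind to the federated kind that wraps it."""
    return {
        cfg["spec"]["targetType"]["kind"]: cfg["spec"]["federatedType"]["kind"]
        for cfg in type_configs
        if cfg["spec"].get("propagation") == "Enabled"
    }
```

Because resource types are declared as data rather than compiled in, supporting a new type becomes a matter of adding another type configuration object instead of writing a new Adapter.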

For the Type configuration, Federation v2 defines three key sections:

- Templates: describe the resources to be federated

- Placement: describes the clusters the resources will be deployed to

- Overrides: allow some fields of a resource to be overridden for specific clusters

The above basically completes the definition of resources and provides a resource description for propagation. In addition, Federation v2 also supports defining deployment policies and scheduling rules for more granular management.
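The interplay of template, placement, and overrides can be sketched in Python as follows. This is a simplified model under stated assumptions, not the KubeFed controller logic: the `FederatedDeployment` layout follows the KubeFed examples as recalled, placement is limited to an explicit cluster list, and overrides only set a value at a slash-separated path. The helpers `set_path` and `render_per_cluster` are hypothetical.

```python
import copy

# Sketch of a federated resource; names and values are illustrative.
federated_deployment = {
    "apiVersion": "types.kubefed.io/v1beta1",
    "kind": "FederatedDeployment",
    "metadata": {"name": "web", "namespace": "demo"},
    "spec": {
        # Template: the resource to be federated.
        "template": {
            "metadata": {"labels": {"app": "web"}},
            "spec": {"replicas": 3},
        },
        # Placement: the clusters the resource is deployed to.
        "placement": {"clusters": [{"name": "cluster1"}, {"name": "cluster2"}]},
        # Overrides: per-cluster changes to fields of the template.
        "overrides": [
            {
                "clusterName": "cluster2",
                "clusterOverrides": [{"path": "/spec/replicas", "value": 5}],
            },
        ],
    },
}


def set_path(obj, path, value):
    """Set a value at a slash-separated path, creating dicts as needed."""
    keys = path.strip("/").split("/")
    for key in keys[:-1]:
        obj = obj.setdefault(key, {})
    obj[keys[-1]] = value


def render_per_cluster(fed):
    """Return the object each placed cluster would receive."""
    spec = fed["spec"]
    overrides = {
        o["clusterName"]: o.get("clusterOverrides", [])
        for o in spec.get("overrides", [])
    }
    rendered = {}
    for cluster in spec["placement"]["clusters"]:
        name = cluster["name"]
        obj = copy.deepcopy(spec["template"])  # never mutate the template
        for override in overrides.get(name, []):
            set_path(obj, override["path"], override["value"])
        rendered[name] = obj
    return rendered


rendered = render_per_cluster(federated_deployment)
```

Here `rendered` holds one object per placed cluster: `cluster1` keeps the template's three replicas, while `cluster2` receives five because of its override.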