Today I received a work order from an R&D colleague: they needed to copy files to a server and asked me to install the scp program there.

I tried running scp on that server and got `-bash: scp: command not found`, even though I could ssh into it without any trouble. The openssh-clients package, which provides scp, had been removed from it.

The scp command is based on the ssh protocol, so why bother with scp when you can use ssh?

You can use ssh to transfer files directly. Once you learn how, it turns out to be handier than scp, whose remote path syntax is more cumbersome to write.

The first fact many people overlook is that ssh can run a command directly on the remote host. For example, `ssh root@myserver.com "cat access.log"` prints the contents of the remote log file. ssh connects the remote command's stdout to your local stdout, so you can even follow the log live with `ssh root@myserver.com "tail -f access.log"`. Because each remote operation is a single local command, it is easy to script and it is recorded in your local shell history.
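The remote command's stdout arrives locally, so ordinary shell redirection can also copy a file from the server to your machine. Below is a minimal sketch. `fetch_remote_file` is a hypothetical helper name, and the host and paths are placeholders.

```shell
# Hypothetical helper: copy a remote file to the local machine.
# The remote `cat` writes to its stdout, ssh relays that to our local
# stdout, and the local shell redirects it into LOCAL_PATH.
# Assumes REMOTE_PATH contains no single quotes.
# Usage: fetch_remote_file HOST REMOTE_PATH LOCAL_PATH
fetch_remote_file() {
  ssh "$1" "cat '$2'" > "$3"
}
```

For example, `fetch_remote_file root@myserver.com access.log ./access.log` saves the remote log locally.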

Since ssh connects stdout, it's only natural that it connects stdin too! For example, you can copy your public ssh key to the server this way.
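Here is a sketch of the key copy, assuming the key lives at the default `~/.ssh/id_rsa.pub`. The helper name `push_key` is hypothetical. The local key file becomes ssh's stdin, and ssh passes it on as the stdin of the remote `cat >>`.

```shell
# Hypothetical helper: append a local public key to the remote user's
# ~/.ssh/authorized_keys. The key file is fed to ssh's stdin, which becomes
# the stdin of the remote `cat >>`. `umask 077` makes a newly created
# ~/.ssh directory 700 and a newly created authorized_keys file 600.
# Usage: push_key HOST [PUBKEY_FILE]   (defaults to ~/.ssh/id_rsa.pub)
push_key() {
  local key=${2:-$HOME/.ssh/id_rsa.pub}
  ssh "$1" 'umask 077 && mkdir -p ~/.ssh && cat >> ~/.ssh/authorized_keys' < "$key"
}
```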

Transferring a file to the server.

The principle is simple. `cat` writes the file's contents to stdout, and a pipe connects that stdout to ssh. ssh then forwards the stream so that it becomes the stdin of the remote command, such as `cat > file`.
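Below is a minimal sketch of that pipeline. The helper name `push_file` is hypothetical, and the remote path is assumed to contain no single quotes.

```shell
# Hypothetical helper: copy a local file to the remote host.
# cat writes the file to stdout, the pipe hands it to ssh, and ssh feeds it
# to the remote `cat > ...` as stdin.
# Usage: push_file HOST LOCAL_FILE REMOTE_FILE
push_file() {
  cat "$2" | ssh "$1" "cat > '$3'"
}
```

For example, `push_file root@myserver.com ./app.conf /tmp/app.conf` copies `app.conf` to the server.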

What about copying an entire directory? No problem. Pack it with tar on the local side and unpack it from stdin on the remote side.
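Here is a sketch of the directory copy. `push_dir` is a hypothetical name. `-f -` makes tar write the archive to stdout on one end and read it from stdin on the other.

```shell
# Hypothetical helper: copy a directory tree. The local tar writes an
# archive to stdout (-f -); the remote tar reads it from stdin and unpacks
# it relative to the remote login directory.
# Usage: push_dir HOST LOCAL_DIR
push_dir() {
  tar cf - "$2" | ssh "$1" 'tar xf -'
}
```

Pass a relative directory name (for example `push_dir root@myserver.com logs`), so the tree is recreated under the same name on the remote side.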

For highly compressible files such as logs, consider compressing the data before transfer and decompressing it from stdin on the remote side. The pipe connects the two ends directly, so the compressed archive is never written to disk and takes up no space at all!
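The compressed variant adds tar's `z` flag (gzip) on both ends. This is a sketch, and `push_dir_gz` is a hypothetical name.

```shell
# Hypothetical helper: like a plain tar-over-ssh copy, but the stream is
# gzip-compressed locally (czf) and decompressed remotely (xzf).
# No intermediate .tar.gz file is created on either machine.
# Usage: push_dir_gz HOST LOCAL_DIR
push_dir_gz() {
  tar czf - "$2" | ssh "$1" 'tar xzf -'
}
```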

To choose the remote destination directory, use tar's `-C` option. For example, you can unpack the archive under /tmp on the remote host.
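Here is a sketch with a destination directory. `push_dir_to` is a hypothetical name. The remote tar changes into `-C DIR` before extracting, and that directory must already exist.

```shell
# Hypothetical helper: copy a directory and unpack it under REMOTE_DIR
# on the remote host (tar's -C changes directory before extracting).
# Assumes REMOTE_DIR exists and contains no single quotes.
# Usage: push_dir_to HOST LOCAL_DIR REMOTE_DIR
push_dir_to() {
  tar czf - "$2" | ssh "$1" "tar xzf - -C '$3'"
}
```

For example, `push_dir_to root@myserver.com logs /tmp` ends up as `/tmp/logs` on the server.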

Note that binary files such as images and videos are usually already compressed. Compressing them again with tar's `z` option saves little transfer size and only wastes CPU.

Useful tip: suppose you back up MySQL to another machine every day and don't want the dump to take up space on the local machine. You can schedule a crontab job that streams the dump over ssh.
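Below is a minimal sketch of such a job. Several details are assumptions: mysqldump reads its credentials from `~/.my.cnf`, and the database name, backup host, and backup directory are placeholders. `backup_db` is a hypothetical helper name. `--single-transaction` is a real mysqldump option that takes a consistent snapshot of InnoDB tables.

```shell
# Hypothetical nightly backup: dump one MySQL database, gzip it, and stream
# it over ssh so no dump file is written to the local disk.
# Assumes mysqldump finds credentials in ~/.my.cnf; all names are placeholders.
# Usage: backup_db DB_NAME BACKUP_HOST REMOTE_DIR
backup_db() {
  # $(date +%F) expands locally to YYYY-MM-DD before ssh sends the command.
  mysqldump --single-transaction "$1" | gzip | ssh "$2" "cat > '$3/$1-$(date +%F).sql.gz'"
}
```

To schedule it, put the function in a script that ends with a call such as `backup_db mydb backup@backuphost /backup`. Then add a crontab line like `30 2 * * * /usr/local/bin/mysql_backup.sh`, where the script path is hypothetical. If you write the pipeline directly in the crontab instead, escape every `%` as `\%`, because cron turns an unescaped `%` into a newline.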

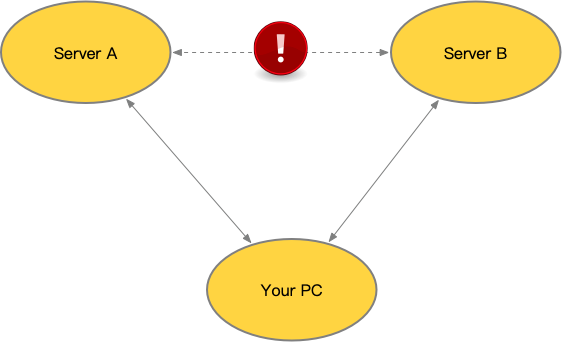

Suppose machine A and machine B are in different networks and cannot reach each other, but your computer (or a bastion host) can log in to both with ssh. How do you copy files from server A to server B?

Use two ssh commands!
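Here is a sketch of the two-ssh relay. `relay_dir` is a hypothetical name, and the paths are assumed to contain no single quotes. The first ssh's stdout carries a tar stream from A, and the pipe turns it into the second ssh's stdin, where tar on B unpacks it.

```shell
# Hypothetical helper: copy a directory from host A to host B through the
# machine running this command. The tar stream from A flows through the
# local pipe straight into tar on B, so nothing is written to local disk.
# Usage: relay_dir SRC_HOST SRC_DIR DST_HOST DST_DIR
relay_dir() {
  ssh "$1" "tar czf - '$2'" | ssh "$3" "tar xzf - -C '$4'"
}
```

Every byte passes through your machine, so the transfer speed is limited by your own connection to A and to B.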

Once you understand that ssh connects stdin and stdout, the possibilities are endless! You can also keep all your scripts on your local machine, instead of storing some locally and some on the remote machine just so ssh can run them there.
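One common way to run a locally stored script on a remote host is to pipe it into a remote shell. This is a sketch, and `run_local_script` is a hypothetical name. `sh -s` tells the remote shell to read its commands from stdin.

```shell
# Hypothetical helper: execute a script that lives on the local machine on
# a remote host. The script file becomes ssh's stdin, and the remote
# `sh -s` reads and runs it from there.
# Usage: run_local_script HOST SCRIPT_FILE
run_local_script() {
  ssh "$1" 'sh -s' < "$2"
}
```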

Oh yeah, ssh is an encrypted protocol, so you will see CPU usage rise during the transfer: the local side encrypts the data and the remote server decrypts it. Take this into account when using it. scp runs over ssh, so it has the same cost.

nc sends data over plain TCP without encryption. If you don't need encryption and have a lot of data to move, consider using it instead.

On the server side, run nc to listen on a port and redirect whatever it receives into a file.
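Here is a sketch of the receiving side. `nc_receive` is a hypothetical name. The listen syntax varies by netcat variant: OpenBSD nc and ncat accept `nc -l PORT`, while traditional netcat needs `nc -l -p PORT`.

```shell
# Hypothetical helper: receive one plain-TCP transfer on the server.
# nc listens on PORT and writes everything it reads from the connection to
# its stdout, which is redirected into OUT_FILE.
# OpenBSD-style syntax; traditional netcat needs `nc -l -p "$1"` instead.
# Usage: nc_receive PORT OUT_FILE
nc_receive() {
  nc -l "$1" > "$2"
}
```

For example, `nc_receive 12345 backup.tar.gz` waits for a single connection on port 12345.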

Then, on the client side, feed the file you want to send into nc connected to that port on the server.
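Here is a sketch of the sending side. `nc_send` is a hypothetical name, and the server and port are placeholders. Some netcat builds keep the connection open after the input ends. OpenBSD nc closes it with `-N`, and traditional netcat does so with `-q 0`.

```shell
# Hypothetical helper: send FILE to the nc listening on SERVER:PORT.
# The file becomes nc's stdin and is written to the TCP connection.
# Depending on the variant, add -N (OpenBSD) or -q 0 (traditional) so nc
# exits once the whole file has been sent.
# Usage: nc_send SERVER PORT FILE
nc_send() {
  nc "$1" "$2" < "$3"
}
```

The data crosses the network unencrypted, so use this only on networks you trust.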
