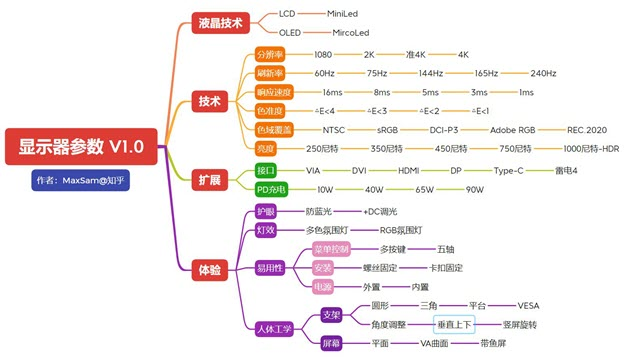

When buying a laptop, most people focus on the CPU, memory, SSD, and appearance, and pay much less attention to the display. Marketing material for displays is also full of unfamiliar terms, and it is hard to tell whether the claims are true. So I took the time to collect and organize some information.

The size of the monitor

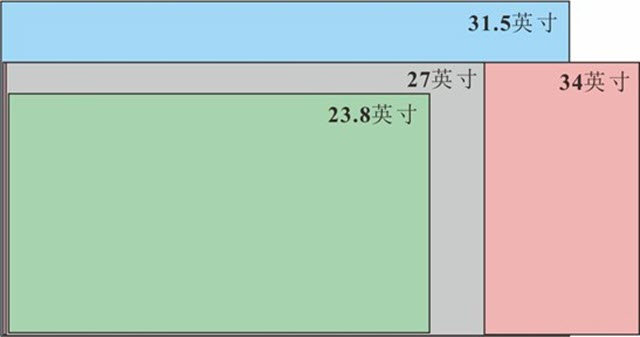

Laptop screens come in a few mainstream sizes, generally 13.3 inches, 14 inches, and 15.6 inches (1 inch = 2.54 cm). The size refers to the length of the screen diagonal.

Because screen size is specified by the diagonal, a larger size does not necessarily mean a larger area; the aspect ratio also has to be taken into account. For example, an ultra-wide screen with an aspect ratio of 21:9 or more has a relatively small area for its diagonal.
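To make the relationship between diagonal, aspect ratio, and area concrete, here is a minimal sketch. The helper name `screen_dimensions_cm` and the 27-inch example diagonal are illustrative assumptions, and the calculation assumes a flat rectangular panel.

```python
import math

CM_PER_INCH = 2.54  # 1 inch = 2.54 cm


def screen_dimensions_cm(diagonal_in, ratio_w, ratio_h):
    """Return (width_cm, height_cm, area_cm2) for a diagonal and an aspect ratio.

    Hypothetical helper for illustration only.
    """
    diagonal_cm = diagonal_in * CM_PER_INCH
    # Length of one "ratio unit" along the diagonal (Pythagoras).
    unit = diagonal_cm / math.hypot(ratio_w, ratio_h)
    width = ratio_w * unit
    height = ratio_h * unit
    return width, height, width * height


# Same 27-inch diagonal, different aspect ratios.
for ratio_w, ratio_h in [(4, 3), (16, 10), (16, 9), (21, 9)]:
    w, h, area = screen_dimensions_cm(27, ratio_w, ratio_h)
    print(f"27-inch {ratio_w}:{ratio_h}: {w:.1f} cm x {h:.1f} cm, area {area:.0f} cm^2")
```

With the diagonal held constant, the printed area shrinks as the ratio gets wider, which is the point made above.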

Early 4:3 screen

Monitors descend from the television. A TV displays content from a broadcast signal, the signal content comes from image recording equipment, and image recording equipment depends on a lens. A lens is circular, so the optical information it can record is also circular. A circular picture obviously does not match the viewing habits of the general public, so recording equipment needed a frame ratio that uses as much of the image circle as possible. Anyone familiar with lenses also knows that sharpness at the edge of the image is usually worse than at the center. Image recording devices, namely cameras and their derivatives, existed before television was invented, and they recorded onto film. Film frame sizes vary, for example: common 8mm film 4.5:3.3, Super 8 5.79:4.01, 135 film (35mm) 3:2, 120 format 1:1, 17.5mm 1:1, and so on. Once the format of the source is defined, the presentation system has to follow it. So the original TV screens, and the other screens derived from them, had to conform to the aspect ratio of the source material.

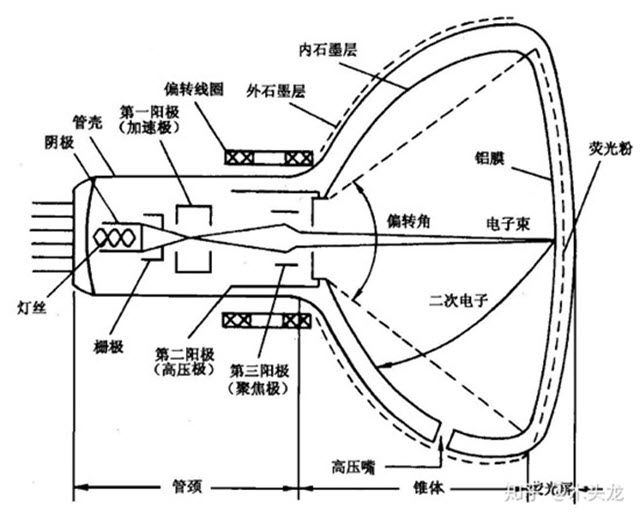

Early displays were CRTs (cathode ray tubes). According to Wikipedia, a cathode electron gun emits electrons which, accelerated by the high anode voltage, strike the phosphor screen and make the phosphor glow, while a deflecting magnetic field moves the electron beam up and down and left and right to scan the screen. Early cathode ray tubes could only show light intensity, producing a black-and-white picture. Color cathode ray tubes have three electron guns for red, green, and blue, which fire at the phosphors on the screen glass simultaneously to produce color.

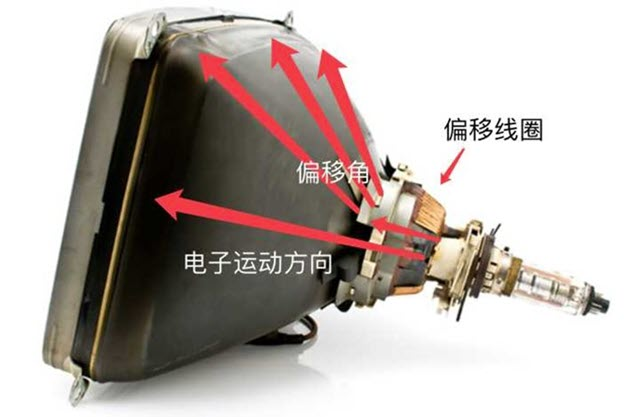

A cathode ray tube forms the picture by striking the phosphor with electrons, and each frame has to be scanned out by the electron beam from left to right and top to bottom. The electron gun itself cannot move mechanically to scan the screen: moving it dozens of times per second is impractical, and the back of the tube would have to be larger than the visible area of the screen, which would be ugly. Instead, a deflection coil in front of the gun steers the electrons so that they hit the right point on the phosphor. The closer the beam gets to the edge, the more precise the deflection coil has to be. One purpose of the spherical screen on early CRTs was to compensate for this, so that the beam angle toward the corners could be controlled better and the image in the corners focused more sharply.

Since the image is generated by deflecting the electron beam, for a given tube neck the maximum deflection occurs at the four corners of the phosphor screen. This is why monitor sizes are measured by the diagonal: for a 14" monitor, the maximum deflection distance of the beam from the center is 14/2 = 7". It also follows that, for a given back end, the largest display area of the cone-shaped tube is a circle, but a circle does not fit our reading habits. For reading as well as for production, transport, and mounting, a rectangle (including a square) is the most practical shape, and for a fixed diagonal the rectangle with the largest area is the square. In short, once the diagonal is fixed, 4:3, being closer to a square, can display more content.

Widescreen era 16:9

There are two reasons why LCD monitors moved from 4:3 to 16:9 and why 16:9 became popular.

- Price competition: at the same diagonal size, a 16:9 LCD panel has about 10.98% less area than a 4:3 panel, which translates into a corresponding reduction in panel cost.

- Multimedia use: as computers entered the multimedia era, more users watched video and played games on them. Widescreen is closer to the proportions of human vision: the “global field of view” is much wider horizontally than vertically and can be pictured as a flattened oval. So widescreen improves immersion.
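The area figure in the first bullet can be checked with a few lines. This is a minimal sketch; the function names are illustrative assumptions, and it compares ideal rectangles with the same diagonal, ignoring bezels and manufacturing details.

```python
def relative_area(ratio_w, ratio_h):
    """Area of a rectangle with the given aspect ratio and a diagonal of length 1."""
    return (ratio_w * ratio_h) / (ratio_w ** 2 + ratio_h ** 2)


def area_reduction_percent(old_ratio, new_ratio):
    """How much smaller (in percent) the new ratio's area is at the same diagonal."""
    old_area = relative_area(*old_ratio)
    new_area = relative_area(*new_ratio)
    return (1 - new_area / old_area) * 100


print(f"{area_reduction_percent((4, 3), (16, 9)):.2f}%")  # prints 10.98%
```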

16:10 and 3:2 in the post-widescreen era

The return to 16:10 and 3:2 is driven by manufacturers competing on differentiation, and by the computer returning to its traditional role as an office tool.

- For games, movies, and other entertainment, use widescreen.

- For office work, use a 3:2, 4:3, or 16:10 screen; in general, the closer to square the better.

The reason: the human “global field of view” is much wider horizontally than vertically and can be pictured as a flattened oval, so a wide screen improves immersion. The “attention field of view”, however, is only a small, nearly circular patch in the middle.

A desktop monitor is large, so even a widescreen model fully covers the field of attention; just pick a large widescreen monitor. That does not work for notebooks. Their screen size is limited, so the only variable is the aspect ratio: either favor entertainment and immersion with a widescreen, or favor focus and productivity with a squarer screen.

That said, the difference in width and height is less than 2 cm, which for many users may not make much difference in practice. For manufacturers, it is mostly a matter of differentiated competition.

Monitors with an aspect ratio greater than 21:9

These are claimed to match the widest span the field of view can take in. They remain niche products because of their relatively high price.

How to choose the screen size?

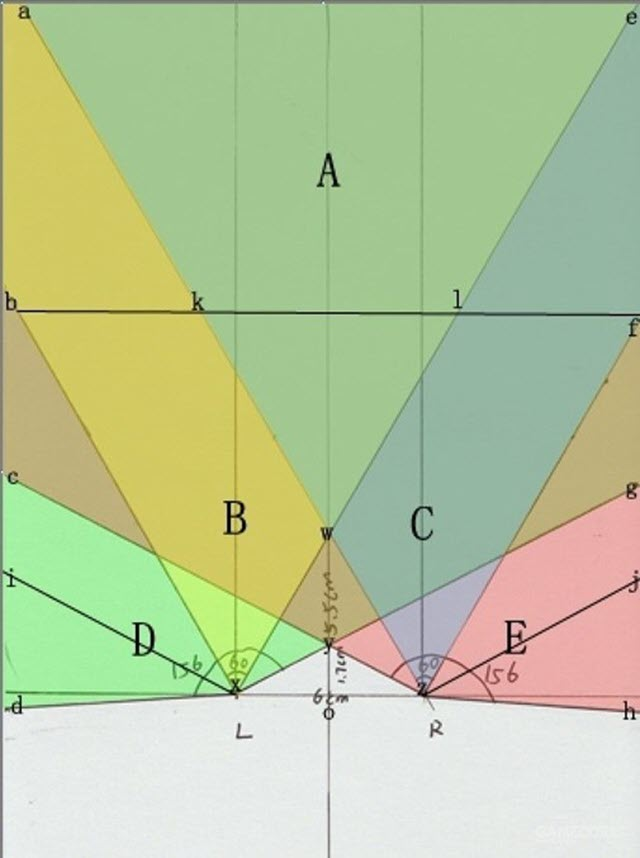

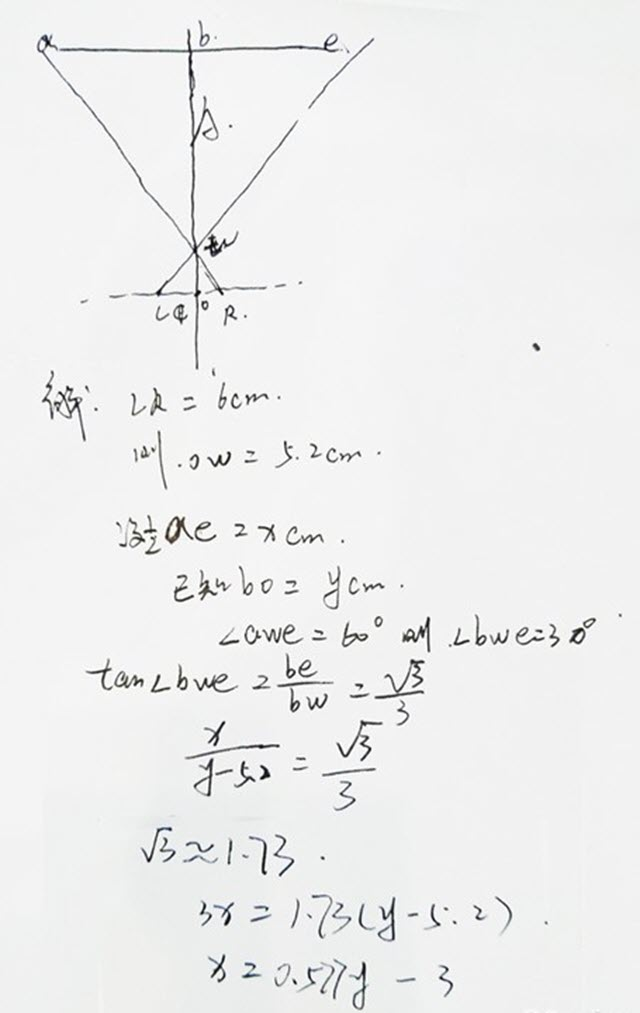

To choose a size, first understand the comfort zone of the human eye. The visual angle of the naked eye is usually about 120 degrees, and about one-fifth of that, roughly 25 degrees, when concentrating. The maximum horizontal viewing angle of a single eye is 156 degrees, and that of both eyes together is 188 degrees. The fields of the two eyes overlap over 124 degrees, and the comfortable field of view of one eye is 60 degrees. The distance between the two pupils is about 6 to 7 cm. In the chart above, area A is the more comfortable region for our eyes, that is, the screen size we can watch without much eye movement. The calculation follows.

Half the screen width is X = Y × 0.577 - 3, where Y is the distance from the eye to the screen. Converted directly: screen width = distance between the eye and the screen × 1.154 - 6, with all values in centimeters (cm).

If you would rather not calculate, take a 27" 16:9 monitor as an example: the width including the bezel is 62.1 cm and the panel itself measures 59.773 cm × 33.622 cm, so if you sit about 55 cm from the screen, a 27" monitor is a suitable choice.
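The formula is easy to turn into a small calculator. The sketch below uses the article's constants as-is. The function names are illustrative assumptions, and the result is a rule of thumb rather than an ergonomic standard. The factor 0.577 is approximately tan(30°), which appears to correspond to half of the 60-degree comfortable field of view.

```python
def comfortable_screen_width_cm(distance_cm):
    """Screen width (cm) suggested by: width = distance * 1.154 - 6."""
    return distance_cm * 1.154 - 6.0


def distance_for_width_cm(width_cm):
    """Inverse of the formula above: viewing distance (cm) for a given panel width."""
    return (width_cm + 6.0) / 1.154


# Suggested width at a 55 cm viewing distance.
print(f"{comfortable_screen_width_cm(55):.1f} cm")

# Viewing distance that matches the 59.773 cm wide panel of a 27-inch 16:9 monitor.
print(f"{distance_for_width_cm(59.773):.1f} cm")
```

For the 27" panel the formula gives a distance of roughly 57 cm, close to the 55 cm quoted in the example.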

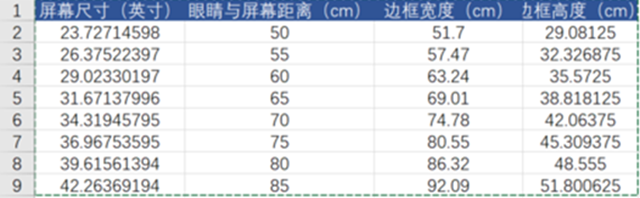

So how do you choose a specific monitor size? I put the formula into an Excel sheet to calculate the theoretically suitable screen size for eye-to-screen distances in the 50 cm to 85 cm range.

Recommended monitor screen sizes:

| Distance from monitor (cm) | Display size (inch) |
|---|---|
| 40 - 55 | 20 - 24 |
| 55 - 70 | 24 - 27 |
| 70 - 80 | 27 - 32 |
| 80 - 100 | 34 |
| 100 - 150 | 38 |

In actual use, viewing a monitor is a dynamic process, and the viewing angle narrows when we concentrate on reading, so we can only focus on a very small part of the screen. In games especially, we need to take in changes across the whole screen quickly, which is why many e-sports players choose 24-inch gaming monitors. Treat the recommended sizes above as a reference only.

Resolution of the monitor

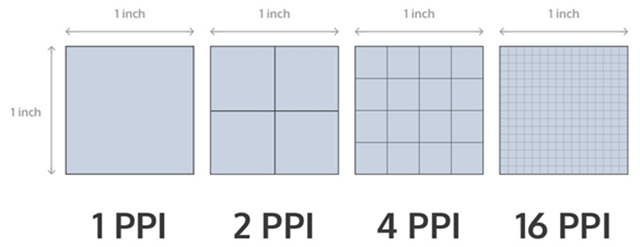

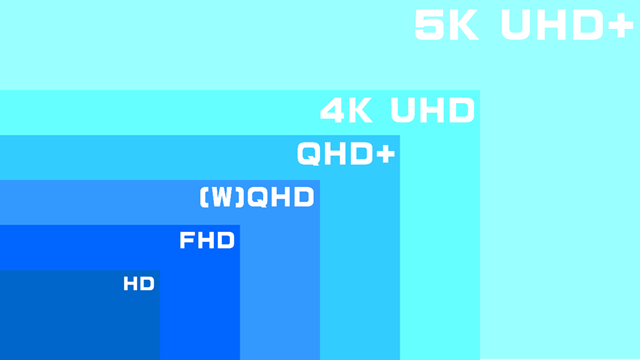

As you probably know, mainstream screens display a complete image by assembling small blocks of color; these blocks are the pixels. The resolution is the number of pixels. A higher-end “2K” screen actually has a resolution of 2560×1440, which simply means 2560 pixels horizontally and 1440 pixels vertically. 1080p means 1920×1080, and 720p means 1280×720. The more pixels per unit area, the clearer and more detailed the display.

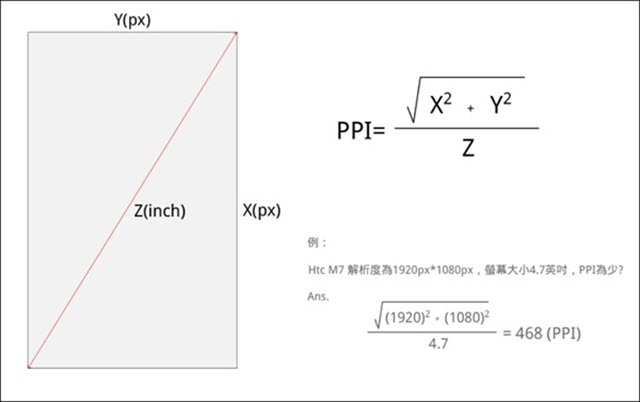

Now the question: does a higher resolution mean a clearer and more detailed display? Not necessarily. In “the more pixels per unit area, the clearer and more detailed the screen display”, the key words are “unit area”. For example, a 1080p picture on a 40-inch TV and on a 5.8-inch phone will clearly differ in sharpness. To quantify this “unit area”, we use PPI (pixels per inch), that is, pixel density: the higher the PPI, the sharper and finer the screen. Retina is a high pixel density standard: at a normal viewing distance the human eye cannot distinguish individual pixels, and any display that meets this can be called a Retina display. To calculate the pixels-per-inch value of a display, first determine the screen size (diagonal) and resolution (physical pixels).
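Once the diagonal and resolution are known, PPI is the diagonal length in pixels divided by the diagonal length in inches. The sketch below applies this to the TV and phone example; the function name is an illustrative assumption.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal length in pixels divided by diagonal length in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# The same 1920x1080 resolution on two very different screen sizes.
print(f"40-inch TV:     {pixels_per_inch(1920, 1080, 40):.0f} PPI")
print(f"5.8-inch phone: {pixels_per_inch(1920, 1080, 5.8):.0f} PPI")
```

The phone comes out at roughly seven times the pixel density of the TV, which is why the same resolution looks so much sharper on it at the same viewing distance.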

Resolution Name

Display resolution is the width and height of an electronic visual display device, such as a computer monitor, in pixels. Some combinations of width and height have been standardized (e.g., through VESA), and names and abbreviations are often used to describe them.

On displays of the same size, a higher resolution makes photo or video content look sharper, while individual pixels look smaller.

| Computer standard | Resolution | Ratio |
|---|---|---|
| CGA | 320×200 | 16:10 |
| QVGA | 320×240 | 4:3 |
| WQVGA | 480×272 | ≈16:9 |
| B&W Macintosh/Macintosh LC | 512×384 | 4:3 |
| HVGA | 480×320 | 3:2 |
| EGA | 640×350 | 64:35 |
| nHD | 640×360 | 16:9 |
| VGA and MCGA | 640×480 | 4:3 |
| HGC | 720×348 | 60:29 |
| MDA | 720×350 | 72:35 |
| Apple Lisa | 720×360 | 2:1 |
| SVGA | 800×600 | 4:3 |
| WVGA | 800×480 | 5:3 |
| FWVGA | 854×480 | ≈16:9 |
| qHD | 960×540 | 16:9 |
| DVGA | 960×640 | 3:2 |
| WSVGA | 1024×600 | 128:75 |
| XGA | 1024×768 | 4:3 |
| XGA+ | 1152×864 | 4:3 |
| HD | 1280×720 | 16:9 |
| WXGA | 1280×768 | 15:9 |
| WXGA | 1280×800 | 16:10 |
| SXGA | 1280×1024 | 5:4 |
| FWXGA | 1366×768 | 683:384 (≈16:9) |
| WXGA+ | 1440×900 | 16:10 |
| SXGA+ | 1400×1050 | 4:3 |
| WSXGA | 1600×1024 | 25:16 |
| WSXGA+ | 1680×1050 | 16:10 |
| UXGA | 1600×1200 | 4:3 |
| WUXGA | 1920×1200 | 16:10 |
| Full HD | 1920×1080 | 16:9 |
| 2K Resolution | 2048×1080 | ≈17:9 |
| QXGA | 2048×1536 | 4:3 |
| UWFHD | 2560×1080 | ≈21:9 |
| WQHD | 2560×1440 | 16:9 |
| WQXGA | 2560×1600 | 16:10 |
| QSXGA | 2560×2048 | 5:4 |
| WQSXGA | 3200×2048 | ≈15.6:10 |
| QUXGA | 3200×2400 | 4:3 |
| Ultra-Wide QHD | 3440×1440 | ≈21:9 |
| 4K UHD | 3840×2160 | 16:9 |
| DCI 4K | 4096×2160 | ≈17:9 |
| WQUXGA | 3840×2400 | 16:10 |
| HSXGA | 5120×4096 | 5:4 |
| WHSXGA | 6400×4096 | 25:16 |
| HUXGA | 6400×4800 | 4:3 |
| 8K Ultra HD | 7680×4320 | 16:9 |
| WHUXGA | 7680×4800 | 16:10 |
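The ratio column can be derived from any resolution by dividing width and height by their greatest common divisor. A minimal sketch follows; the function name is an illustrative assumption.

```python
from math import gcd


def aspect_ratio(width_px, height_px):
    """Reduce a resolution to its exact aspect ratio in lowest terms."""
    divisor = gcd(width_px, height_px)
    return width_px // divisor, height_px // divisor


for width, height in [(1920, 1080), (1366, 768), (640, 350), (1280, 800)]:
    w, h = aspect_ratio(width, height)
    print(f"{width}x{height} -> {w}:{h}")
```

Note that 1280×800 reduces to 8:5, which is conventionally written as 16:10 so that it compares directly with 16:9. Likewise, 1366×768 reduces to the awkward 683:384 and is usually quoted as approximately 16:9.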

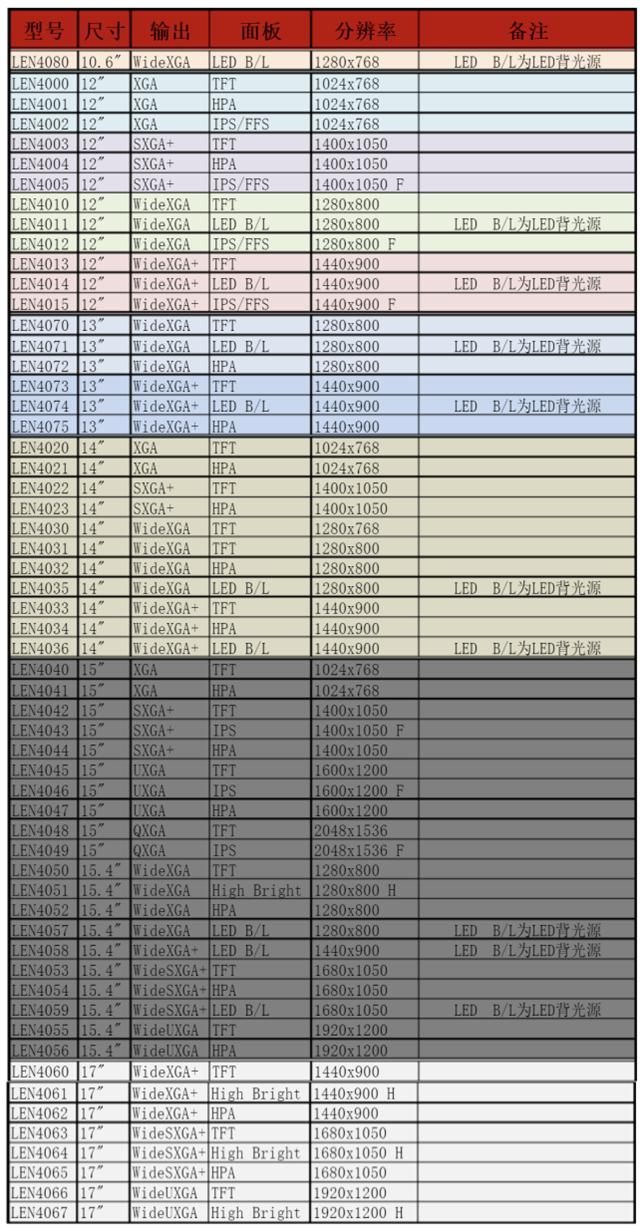

Manufacturers also encode the resolution in their model numbers; the chart here shows the resolutions that correspond to the monitor model codes used by Lenovo.

Monitor Panel

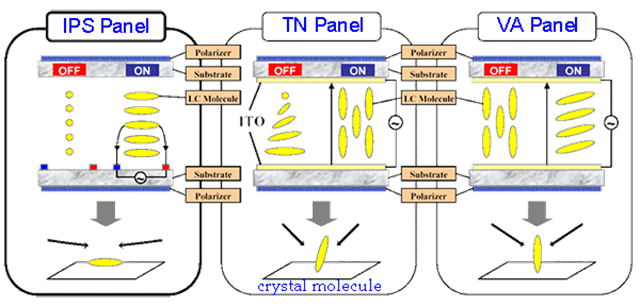

The panel is the heart of the monitor and accounts for about 80% of its cost. There are currently three mainstream panel types on the market: IPS, VA, and TN.

IPS Panel

An IPS panel is a so-called “hard screen” and is the mainstream panel type today. Pressing it by hand does not produce obvious ripples, and it offers wide viewing angles, good response speed, and good color reproduction. However, backlight bleed is very common on IPS panels and cannot be avoided; it is generally considered normal as long as it is not severe enough to be obvious to the naked eye.

IPS technology is developing quickly: there is Fast IPS, which focuses on gaming, and Nano IPS, which balances gaming and image quality.

VA Panel

VA panels mainly comprise MVA panels, led by Fujitsu, and PVA panels, developed by Samsung as an improved version; PVA has the higher market share.

VA panels have a high contrast ratio and good viewing angles, though slightly worse than IPS. They are prone to uneven color, and how well this is handled depends on each brand's software optimization. They are currently used mostly in curved monitors.

TN Panel

A TN panel is a “soft screen”: pressing it by hand produces “water ripples”. Viewing angles are poor, and because of the relatively low cost it is generally used in entry-level monitors. Its advantage is that response speed is easy to improve, so many gaming laptops use it.

In color performance, TN panels are clearly inferior to IPS and VA, and although the technology has improved considerably, the picture still falls short.

Selection principle: if you have no special needs, make an IPS panel your first choice.

Display Brightness

Brightness here means luminance: the luminous intensity per unit area of a light-emitting (or reflecting) surface, measured in nits, where 1 nit = 1 cd/m² (candela per square meter). More precisely, when the eye observes a light source from a given direction, the luminance is the ratio of the luminous intensity in that direction to the area of the source as “seen” by the eye, that is, the luminous intensity per unit projected area. Brightness in the everyday sense is the human perception of this light intensity.

Mainstream and higher-end displays today generally reach 300-350 nits. When choosing, higher is better: a bright panel can always be turned down, but a dim panel cannot be turned up beyond its maximum.

Display Contrast Ratio

Contrast ratio is the ratio between the brightest and the darkest luminance the screen can display. The higher the value, the richer and finer the gradation from darkest to brightest, and the better shadows and dark scenes are rendered (rather than collapsing into a patch of black or a patch of white).

As shown in the figure below, the contrast ratio of the left screen is significantly lower than that of the two screens on the right (and the upper right is slightly higher than the lower right).

The higher the contrast ratio, the better. IPS and TN screens on the market are generally around 1000:1, and VA screens around 3000:1. A difference of about 500 is usually clearly perceptible; a good IPS panel can reach 1300:1, while a poor one may only manage a little over 700:1.

Note in particular that a monitor's contrast ratio normally means static contrast ratio, but some vendors quote dynamic contrast ratio instead, such as 20000000:1. Dynamic contrast adds automatic brightness adjustment on top of the static figure and often reaches tens of millions to one. It is a numbers game played by vendors and has little value for comparison.

Conclusion: the higher the contrast ratio, the better. 700-900:1 is passable, 900-1100:1 is medium, 1100-1300:1 is good, and above 1500:1 is very good.
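Because contrast ratio is simply white luminance divided by black luminance, it also tells you how dark “black” is at a given brightness. This is a minimal sketch under that definition. The function names and the 300-nit white level are illustrative assumptions, not measurements of any particular monitor.

```python
def contrast_ratio(white_nits, black_nits):
    """Static contrast ratio: brightest luminance divided by darkest luminance."""
    return white_nits / black_nits


def black_level_nits(white_nits, ratio):
    """Luminance of 'black' implied by a white level and a contrast ratio."""
    return white_nits / ratio


# Assumed 300-nit white level with typical ratios quoted for each panel type.
for panel, ratio in [("IPS/TN", 1000), ("VA", 3000)]:
    print(f"{panel} at {ratio}:1 -> black is about {black_level_nits(300, ratio):.2f} nits")
```

At the same white level, the VA figure implies a black that is about three times darker, which is why dark scenes look deeper on it.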

Monitor Refresh Rate

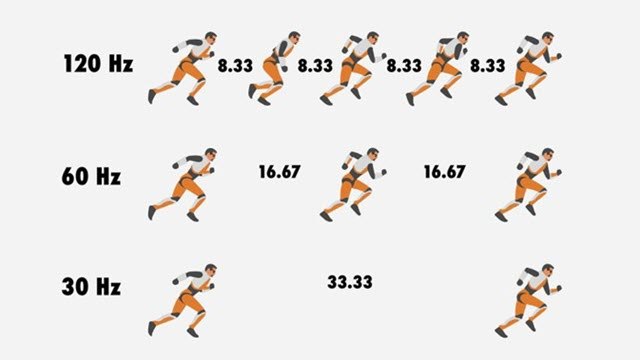

On a CRT, the refresh rate is the number of times per second the electron beam rescans the image on the screen; more generally, it is how many times per second the display redraws the picture. The higher the refresh rate, the more stable the displayed image. A high refresh rate directly affects price, and because refresh rate and resolution trade off against each other, only a monitor that offers a high refresh rate at a high resolution can be said to have excellent performance.

A high refresh rate makes a noticeable difference in games, especially fast-paced competitive games where every frame counts. However, simply buying a 144 Hz or 240 Hz monitor is not enough: your system must be able to deliver the necessary frame rates to take advantage of the higher refresh rate.

As mentioned above, the refresh rate describes how often the monitor updates the image on screen. The interval between updates is measured in milliseconds (ms), while the refresh rate itself is measured in hertz (Hz).

The refresh rate of a monitor is the number of times per second that it draws a new image. For example, a refresh rate of 144 Hz means that the monitor refreshes the image 144 times per second. Combined with the high frame rates produced by the GPU and CPU, a high refresh rate gives a smoother experience.

Three components matter for taking advantage of a higher refresh rate:
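The link between hertz and milliseconds is a simple reciprocal: the time between two refreshes is 1000 ms divided by the refresh rate. A minimal sketch, with an illustrative function name:

```python
def refresh_interval_ms(refresh_hz):
    """Time between two consecutive screen refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


for hz in (60, 144, 240):
    print(f"{hz} Hz -> a new image every {refresh_interval_ms(hz):.2f} ms")
```

Going from 60 Hz to 144 Hz cuts the interval from about 16.7 ms to about 6.9 ms.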

- A display with a high refresh rate.

- A high-speed CPU capable of delivering critical game instructions such as AI, physics, game logic, and rendering data.

- A high-speed GPU that can quickly execute instructions and create display images on the screen.

The display can only show images that the system has already generated, so it is critical that your CPU and GPU generate frames quickly. If they cannot supply enough frames, the display cannot show a high-refresh-rate picture no matter how capable it is.

If your monitor has a 144 Hz refresh rate but your GPU only delivers 30 frames per second, the high refresh rate is wasted.
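The bottleneck can be expressed in one line: the number of distinct frames you see per second is capped by whichever is lower, the rendered frame rate or the refresh rate. The sketch below is a deliberate simplification with an illustrative function name. It ignores tearing, frame pacing, and variable refresh rate technologies.

```python
def displayed_fps(rendered_fps, refresh_hz):
    """Simplified model: distinct frames shown per second is the lower of the two rates."""
    return min(rendered_fps, refresh_hz)


print(displayed_fps(30, 144))   # GPU-limited: 30
print(displayed_fps(200, 144))  # display-limited: 144
```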

Display Response Time

What is response time? The response time of a monitor is the time it takes a pixel to change from one color to another, usually expressed in milliseconds (ms). All monitors have a response time, even CRTs, which are known for their lack of trailing; a CRT's response time is simply so short that it is not worth worrying about. In the LCD monitors we commonly use, whether IPS, VA, or TN, light transmission is controlled by applying a voltage to the liquid crystal molecules so that they rotate or shift, and the time the molecules take to move is part of the response time.

The shorter the response time, the faster the monitor switches colors, and the less likely gamers are to notice the transition. If the switch is not fast enough, it produces the “ghosting” that people often mention, which we will come back to later.

The response time of a monitor generally refers to gray-to-gray (GTG) response time. IPS and VA screens can generally manage 4-5 ms at best, while TN screens are typically rated at 1 ms or even faster.

Many IPS and VA gaming monitors on the market are nonetheless labeled 1ms. Note that this figure is usually followed by brackets or small print reading MPRT (Moving Picture Response Time) or VRB (Visual Response Boost). With MPRT or VRB turned on, picture quality suffers badly (lower brightness, sacrificed color, faster eye fatigue, and even crosstalk), to the point of being unplayable, so this 1ms is purely a marketing gimmick. In actual use, a 4-5 ms response time already counts as quite good performance.

Of course, with newer panel materials (Nano IPS, Fast IPS), IPS screens with a genuine 1 ms gray-to-gray response time do exist, but they cost noticeably more, and the product page will specifically state GTG 1ms. The 1 ms advertised by the vendor is the fastest value among many measurements, whereas the average is what matters to the user (the fastest real-world average is only 2-3 ms), and setting the response time to its fastest option still costs some picture quality. Even so, a GTG 1ms IPS panel is worth buying: its response time and color performance are still much better than those of an ordinary IPS panel.

Summary: the shorter the response time, the better. Within 3 ms is top tier, within 5 ms excellent, within 10 ms medium, and above 10 ms not suitable for games.
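The summary rule can be written as a small lookup. The thresholds below are the article's own rule of thumb rather than an industry standard. The function name is an illustrative assumption, and the boundaries are treated as inclusive.

```python
def rate_response_time(gtg_ms):
    """Classify a gray-to-gray response time using the article's thresholds."""
    if gtg_ms <= 3:
        return "top"
    if gtg_ms <= 5:
        return "excellent"
    if gtg_ms <= 10:
        return "medium"
    return "not suitable for games"


for ms in (1, 4, 8, 14):
    print(f"{ms} ms -> {rate_response_time(ms)}")
```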

Display Color Gamut

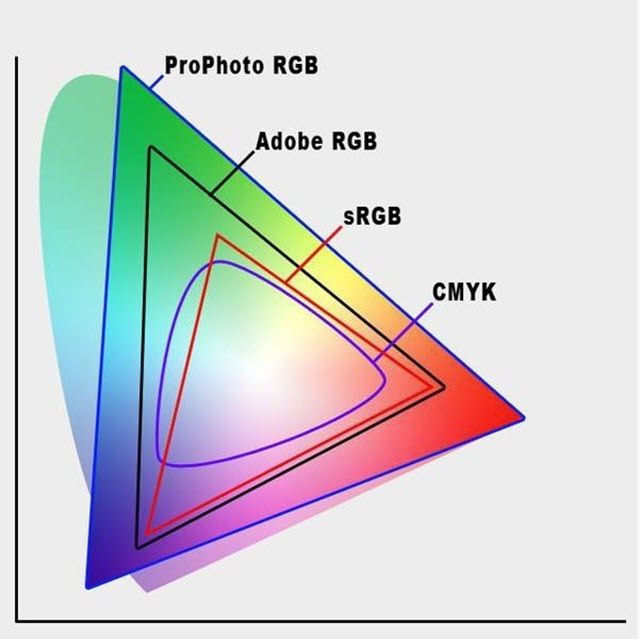

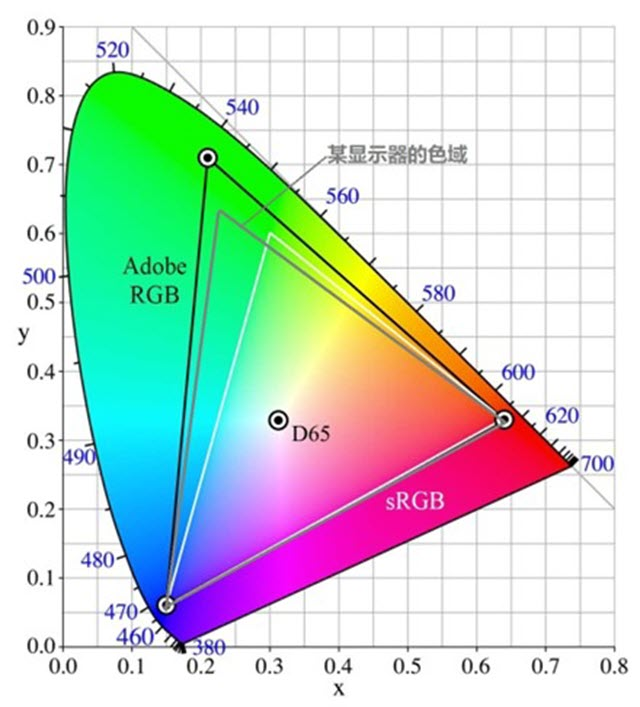

Color gamut is the range of colors a device can reproduce (a gamut is three-dimensional, but is often projected onto a two-dimensional plane for convenience). Common gamut standards include sRGB, DCI-P3, and Adobe RGB. In the CIE chromaticity diagram, the Adobe RGB gamut is broader than sRGB and can show more, and more vivid, colors.

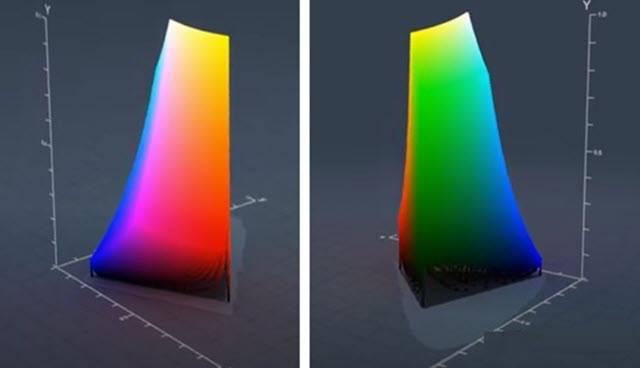

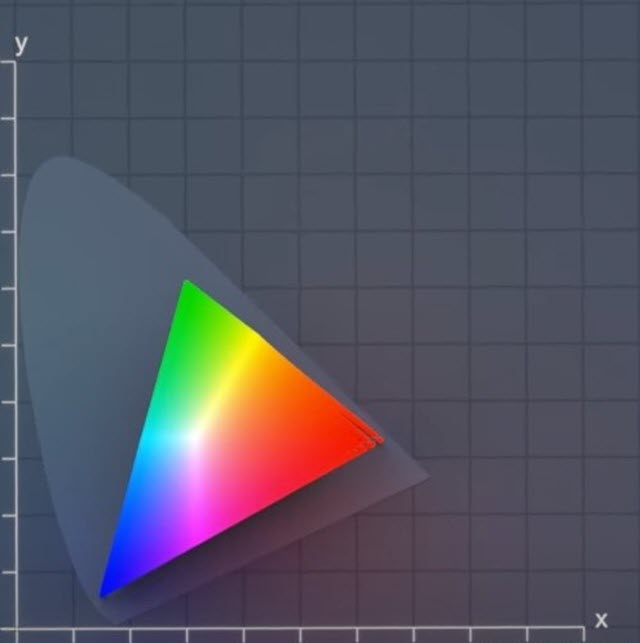

Since all colors are composed of red (R), green (G), and blue (B), the set of these colors forms a three-dimensional figure. The sRGB color gamut looks like this.

The chart above may be unfamiliar to most people, but looking at it from overhead gives the color gamut chart we know best.

Clearly, the triangle in Figure 2 is just the projection of the three-dimensional figure in Figure 1 onto a plane, and the gray “pie” is the full color gamut, the set of all colors the human eye can perceive. It is striking how much smaller the sRGB gamut is than the full gamut.

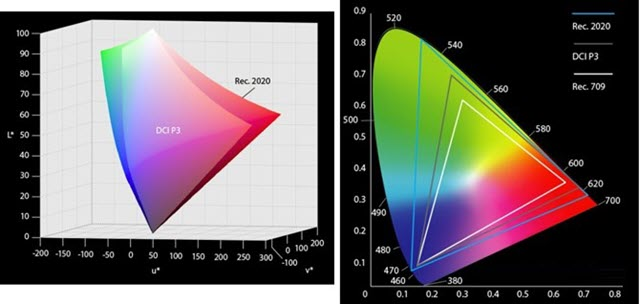

The same is true for wide color gamut, as shown in Figure 3. The pattern on the right side of Figure 3 is the projection of the left stereogram in the plane.

In the figure below, the color gamut of a monitor (gray triangle) covers most of the sRGB gamut (white triangle). If the covered share is 98%, we say that the monitor's [color gamut coverage] is 98%. Because the best case is complete coverage, [gamut coverage] can be at most 100%.

However, the area of the gray triangle is much larger than that of the white sRGB triangle. If the gray triangle's area is 120% of the white triangle's, we say that the monitor has 120% sRGB [color gamut volume]. [Color gamut volume] and [color gamut coverage] are clearly different concepts: even if the [color gamut volume] reaches 120% or even 150%, the sRGB [color gamut coverage] may still be below 100%. If a monitor's sRGB coverage is below 100%, there must be some sRGB colors that it cannot display, that is, colors are missing. When a monitor labels its gamut as “120% sRGB”, the 120% can only refer to gamut volume and says nothing about coverage. If a monitor is labeled “99% sRGB” without saying whether this is coverage or volume, it is hard to tell which is meant; if it is only 99% sRGB volume, the sRGB coverage may be much lower. Generally speaking, a monitor that really achieves 99% sRGB coverage will be clearly marked “99% sRGB color gamut coverage”; there is no reason to hide the word “coverage”.
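The difference between the two numbers can be sketched with triangle geometry in the CIE xy chromaticity plane. “Volume” in the sense used here is the ratio of triangle areas, and “coverage” is the share of the sRGB triangle that overlaps the display's triangle. The sRGB and DCI-P3 primaries below are the published xy coordinates, while `HYPOTHETICAL_PANEL` is an invented gamut for illustration only. The helper names are assumptions, the overlap is computed with Sutherland-Hodgman clipping, and results depend on the chromaticity diagram used.

```python
def signed_area(poly):
    """Shoelace formula; positive when the vertices run counter-clockwise."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(poly, poly[1:] + poly[:1]):
        total += x1 * y2 - x2 * y1
    return total / 2.0


def area(poly):
    return abs(signed_area(poly))


def clip_convex(subject, clipper):
    """Sutherland-Hodgman clipping: the part of `subject` inside convex `clipper`."""
    if signed_area(clipper) < 0:
        clipper = clipper[::-1]  # make the clip polygon counter-clockwise
    result = list(subject)
    for a, b in zip(clipper, clipper[1:] + clipper[:1]):
        if not result:
            break

        def side(p):
            # >= 0 means p lies on the inner (left) side of the edge a -> b.
            return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

        previous, result = result, []
        for i, cur in enumerate(previous):
            prev = previous[i - 1]
            s_cur, s_prev = side(cur), side(prev)
            if (s_cur >= 0) != (s_prev >= 0):
                # The segment prev -> cur crosses the clip line: keep the crossing point.
                t = s_prev / (s_prev - s_cur)
                result.append((prev[0] + t * (cur[0] - prev[0]),
                               prev[1] + t * (cur[1] - prev[1])))
            if s_cur >= 0:
                result.append(cur)
    return result


def coverage_and_volume(display, reference):
    """Return (coverage, volume) of `display` relative to `reference`, as fractions."""
    overlap = area(clip_convex(reference, display))
    return overlap / area(reference), area(display) / area(reference)


# Red, green, blue primaries as CIE xy chromaticity coordinates.
SRGB = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
DCI_P3 = [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)]
HYPOTHETICAL_PANEL = [(0.680, 0.300), (0.250, 0.680), (0.160, 0.090)]  # invented values

for name, gamut in [("DCI-P3", DCI_P3), ("hypothetical panel", HYPOTHETICAL_PANEL)]:
    coverage, volume = coverage_and_volume(gamut, SRGB)
    print(f"{name}: sRGB coverage {coverage:.1%}, sRGB volume {volume:.1%}")
```

The invented panel has a triangle well over 120% the size of sRGB yet still misses part of the sRGB blue corner, so its coverage stays below 100%.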

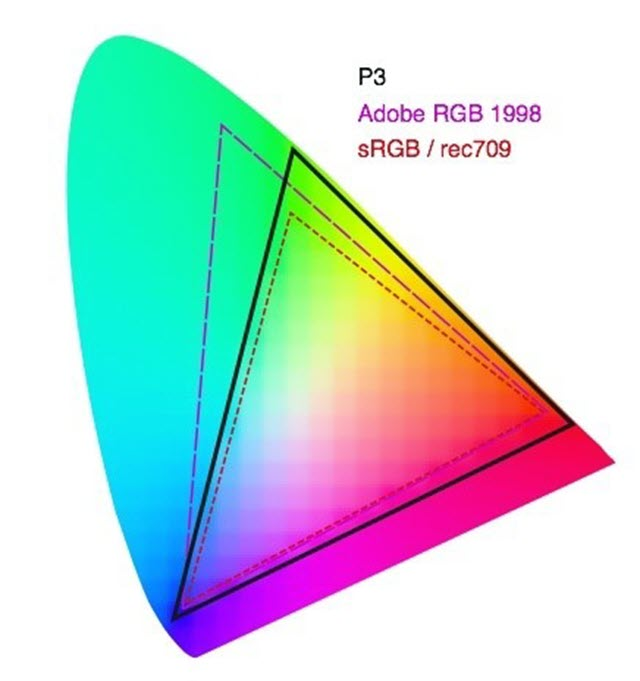

What is wide color gamut? Wide color gamut has its own strict criteria, but in short it is a gamut with larger coverage/volume than sRGB. The most common ones today are Adobe RGB and DCI-P3, both projected onto the plane below. The smallest triangle in the diagram is sRGB, and the other two gamuts are clearly much larger.

The birth of the Adobe RGB color gamut has quite a history. The traditional printing industry strongly opposed and questioned the sRGB standard formulated by Microsoft, HP, and other companies, because sRGB does not fully encompass the CMYK color space, so the greens common in landscape photography could not be reproduced in print. Facing a joint boycott and protest from the printing giants, Microsoft did not concede, and the dispute lasted for three years. Finally, with Adobe's mediation, the Adobe RGB color gamut was formulated, and this broader gamut contains all the colors required for printing. This is why many professional photographers use Adobe RGB display devices. The Adobe RGB gamut includes more cyan and is closer to printed output, so it suits design and print work.

DCI-P3 is a standard proposed by the American film industry; it covers more greens and reds for richer, more vivid color. The DCI-P3 gamut is also popular in the gaming industry, as large single-player games increasingly emphasize the “cinematic impact” of their graphics.

As for NTSC: it is a television-industry standard, and monitors generally do not use it. NTSC is an obsolete television color standard that today survives almost exclusively as a yardstick for comparing gamuts; no current content is mastered to it.

Display color depth

Color depth can be understood simply as the number of colors: the larger the value, the finer the colors and the smoother and more natural the transitions, as shown in the figure below (exaggerated; the actual difference is not this obvious).

A color depth of 8 bits means that each of the three primaries, red (R), green (G), and blue (B), has 2 to the eighth power, that is 256, levels (256 shades of red, 256 of green, and 256 of blue, each numbered 0-255). Combining the three primaries gives 256 × 256 × 256 ≈ 16.7 million colors.

Similarly, 10-bit gives about 1.07 billion colors and 6-bit about 0.26 million. 6-bit has relatively few colors, so its color rendering is relatively poor, and there are almost no 6-bit displays on the market now. The 16.7 million colors of 8-bit exceed what most people can distinguish and are sufficient for general use.
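These totals follow directly from the bit depth: each channel has 2^bits levels, and the three channels multiply. A minimal sketch, with an illustrative function name:

```python
def color_count(bits_per_channel):
    """Total displayable colors for three channels at the given bit depth."""
    levels = 2 ** bits_per_channel
    return levels ** 3


for bits in (6, 8, 10):
    print(f"{bits}-bit: {2 ** bits} levels per channel, {color_count(bits):,} colors")
```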

Note that color depth can be native or dithered. The two are close, but there is still a difference: native is relatively better and more expensive. Pixel dithering (FRC, Frame Rate Control) works as follows: an algorithm switches a pixel rapidly between different colors and relies on the persistence of vision of the human eye to blend them into the illusion of a new intermediate color. A simple example: with only black and white available, a dithering panel switches quickly between the two to blend a gray that the panel cannot show directly, and by controlling the proportion of time spent on black and on white it can blend different shades of gray. With this technique, a 6-bit panel can display 8-bit color and an 8-bit panel can display 10-bit color. Dithered color depth may produce slight noise and flicker (especially in shadows or dark scenes), and transitions are somewhat less smooth and natural than on a native panel. It is generally hard to notice on a dithered panel viewed alone, but side by side with a native panel, a close look still reveals subtle differences.
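The time-averaging idea behind FRC can be illustrated with a toy model of a single channel on a 6-bit panel approximating an 8-bit value over a four-frame cycle. This is a simplified sketch with illustrative function names. Real FRC implementations are vendor-specific and typically combine temporal and spatial patterns.

```python
def frc_frames(target_8bit):
    """6-bit levels shown over a 4-frame cycle to approximate an 8-bit target (toy model)."""
    if not 0 <= target_8bit <= 255:
        raise ValueError("target must be in 0..255")
    low = target_8bit >> 2       # 6-bit level at or just below the target
    high = min(low + 1, 63)      # next 6-bit level, clamped at the panel maximum
    extra = target_8bit & 0b11   # how many of the 4 frames use the higher level
    return [high] * extra + [low] * (4 - extra)


def perceived_8bit(frames):
    """Time-averaged level, converted back to the 8-bit scale."""
    return sum(frames) * 4 / len(frames)


frames = frc_frames(130)
print(frames)                  # [33, 33, 32, 32]
print(perceived_8bit(frames))  # 130.0
```

In this model the very top of the range cannot be reached, because the panel has no level above 63 to alternate with.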

Display HDR

HDR stands for high dynamic range; its counterpart is SDR (standard dynamic range). HDR is closer to what the human eye sees and can present richer detail in both bright and dark areas, without highlights being blown out or shadows crushed.

HDR has been heavily hyped in recent years, and the term is widely abused in marketing: many fake-HDR products that meet neither HDR10 nor HDR400 are still promoted loudly (for example, self-styled HDR-Effect or HDR-Ready technology).

The HDR10 and HDR400 levels most common on the market do not produce a very obvious effect, though they are better than nothing. Unless you are an HDR enthusiast or an HDR content creator, you can ignore this feature when shopping for a monitor.

HDR10 is one of the most common and widely used HDR formats. It is the protocol and standard for transferring HDR content between graphics card and monitor, and it is an open standard with no licensing or certification fees. Support for HDR10 only means that the monitor can accept an HDR input signal; it says nothing about how well the monitor displays HDR.

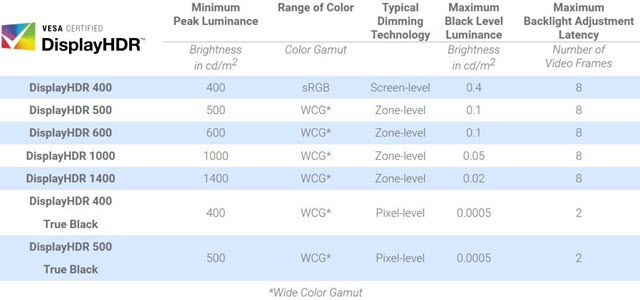

VESA’s DisplayHDR certification is the real measure of the monitor’s HDR effect, which is based on support for HDR10, and then divided into levels according to the monitor’s brightness, color gamut, color depth, dimming type, black level (black level), etc., each level is named by the peak brightness.

The lowest end of DisplayHDR is HDR400, which only requires a display with a peak brightness of no less than 400nit, native 8bit color depth, 95% sRGB (Rec. 709) color gamut, and global dimming. Note: A typical brightness value of 350nit can also be HDR400, as only a peak of 400nit is required at some point!

- Global dimming: the screen has only one backlight partition, only the whole screen can be adjusted uniformly, either the whole screen is brighter, or the whole screen is darker.

- Regional dimming: the screen backlight is divided into multiple regions, each region can independently adjust the brightness.

HDR400 certification requirements are actually not high, and slightly better ordinary monitor is not much different, but also because of the low threshold, so the market labeled VESA certification logo of the monitor is basically HDR400!

HDR500 and above, the certification requirements have been qualitatively improved: Local dimming, 10bit color depth, 90% DCI-P3 color gamut, etc. The most important of these is the area dimming, which can greatly improve the contrast and dynamic range to achieve a true HDR experience, while 10bit color depth and P3 wide color gamut can also bring better color perception. But none of these are required for HDR400 certification!

In summary, any HDR without VESA certification is basically a hooligan! And VESA certification of HDR400 can also be said to be a very misleading standard for consumers!

In addition, HDR also needs support from the processor, graphics card, operating system, cable and source content; if any link in the chain fails, you will not see HDR content at all. In fact, the vast majority of people who buy an HDR10 or HDR400 monitor almost never turn HDR on.

Display interface

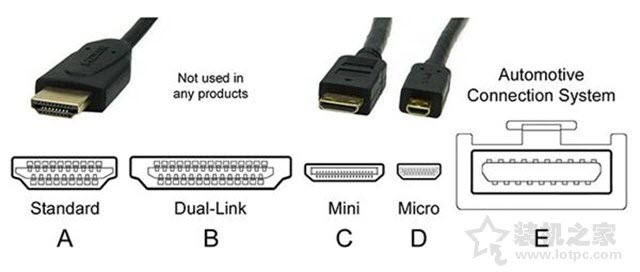

There are many types of display interfaces, and the interface type is a factor to consider when buying a host computer and a monitor. Current monitors offer DP, HDMI, VGA and DVI interfaces, each with a different connector shape, so when buying a host we generally have to check whether the graphics card's outputs match the monitor's inputs. For example, if your graphics card has HDMI + DP and your monitor only has VGA, you are stuck.

Reference-design graphics cards currently come with HDMI and DP outputs, and graphics card manufacturers often add a DVI output as well. A new, fully equipped monitor will usually offer these three interfaces too. VGA is being phased out because it transmits an analog signal. Apart from their shapes, what are the major differences between these interfaces?

In terms of performance, the display interfaces rank DP > HDMI > DVI > VGA.
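The matching problem and the ranking can be combined into a small helper. This is a minimal sketch, assuming ports are described by the plain strings "DP", "HDMI", "DVI" and "VGA"; the function name `best_common_interface` is made up for illustration, and adapters or converters are ignored.

```python
# Ranking taken from the article: DP > HDMI > DVI > VGA.
INTERFACE_RANK = ["DP", "HDMI", "DVI", "VGA"]


def best_common_interface(host_ports, monitor_ports):
    """Return the highest-ranked interface both sides share, or None."""
    common = set(host_ports) & set(monitor_ports)
    for name in INTERFACE_RANK:
        if name in common:
            return name
    return None  # no direct match: the "embarrassing" case


# Graphics card with HDMI + DP, monitor with VGA only: nothing in common.
print(best_common_interface(["HDMI", "DP"], ["VGA"]))

# Card with HDMI + DP + DVI, monitor with HDMI + DVI + VGA: HDMI ranks highest.
print(best_common_interface(["HDMI", "DP", "DVI"], ["HDMI", "DVI", "VGA"]))
```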

VGA interface

The VGA interface is also known as the D-Sub interface. In the era of CRT monitors, VGA was a must: CRTs are analog devices and VGA uses an analog protocol, so the two were a natural match. The VGA connector uses a 15-pin plug that carries the color component and sync signals, and it is found on many older graphics cards, laptops and projectors. When LCD monitors appeared later, they also came with a VGA interface; the monitor has a built-in A/D converter that turns the analog signal into a digital signal for display on the LCD panel.

Another disadvantage of the VGA interface is that in practice it tops out at around 1080p, and text tends to look soft at high resolutions. VGA is gradually leaving the stage, and newer monitors mostly no longer include it.

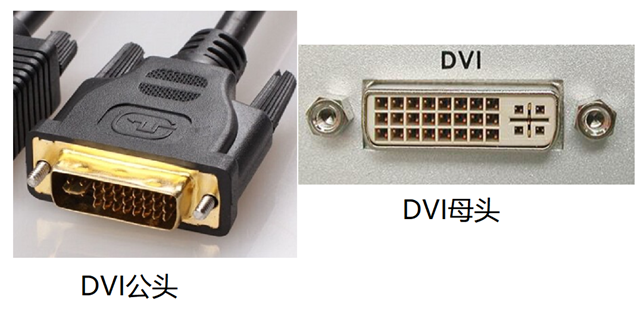

DVI interface

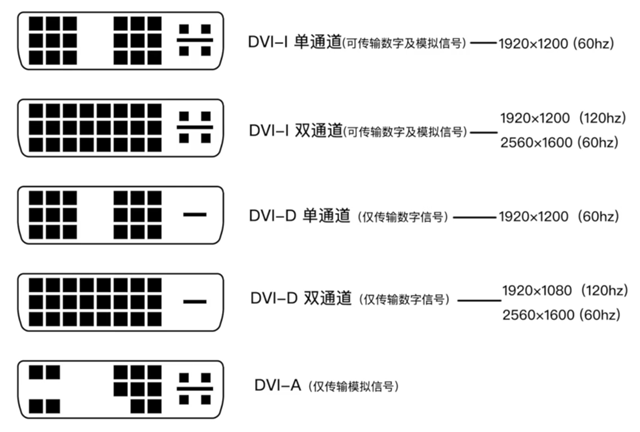

To make up for the shortcomings of VGA, the DVI interface was introduced with support for both analog and digital signal transmission. It is really a transitional product, and its most obvious disadvantage is that it does not carry audio, which limited its popularity and development.

A digital signal needs no conversion and can be sent directly to the display device, so transmission is faster and picture quality is better. However, DVI only supports 8-bit RGB signals, and for compatibility a number of pins are reserved for analog devices, which makes the connector larger. The better DVI interfaces today can drive a 2K screen, but that is basically the limit.

If you need a 1080p 144Hz display, DVI can still do the job, but for higher resolutions you need HDMI or DisplayPort.

HDMI interface

HDMI carries both high-definition video and audio signals, is resistant to interference, and is the interface typically used to connect a TV at home. The current highest standard, HDMI 2.1, supports 8K at 60Hz and 4K at 120Hz, with resolutions up to 10K. It also supports High Dynamic Range (HDR) and raises the bandwidth to 48Gbps.

HDMI is a digital video and audio interface technology that was designed to replace analog connections. It is widely used in set-top boxes, televisions, monitors, projectors and other devices. Because it is so widely used, it comes in three connector formats: standard HDMI, Mini HDMI and Micro HDMI.

HDMI 1.4 is the most common interface on displays today. It supports multi-channel audio, provides realistic color, and can handle 4K resolution, though only at up to 30Hz. It also supports 2560×1600 at 75Hz and 1920×1080 at 144Hz, which suits competitive gaming.

In addition, HDMI 1.4 does not support 21:9 video, and its 3D stereoscopic format support is limited.

HDMI 2.0 further extends the color depth and supports 4K at a 60Hz refresh rate. It also adds support for the 21:9 aspect ratio and broader 3D stereoscopic format support. In addition, HDMI 2.0 allows 1440p at 144Hz and 1080p at 240Hz. Both HDMI 1.4 and 2.0 support adaptive sync in the form of AMD's FreeSync technology.

HDMI 2.0a adds support for HDR (High Dynamic Range), while HDMI 2.0b supports the Advanced HDR10 format and the HLG standard.

HDMI 2.1 adds support for Dynamic HDR, 4K at 120Hz and 8K at 60Hz.

DP interface

The DP (DisplayPort) interface is also a high-definition digital display interface standard. It can connect a computer to a monitor or to a home theater, is now mainstream, and is found on many high-end displays. DP can be thought of as an upgraded counterpart to HDMI: its internal transmission works completely differently from DVI and HDMI, and it offers higher bandwidth. In everyday use there is not much difference between DP and HDMI, but at 3840×2160 (4K) an older HDMI 1.4 port lacks the bandwidth and can only deliver 30 fps, while DP handles 4K at 60 fps without trouble.

DisplayPort 1.2 is a must for gaming monitors with Nvidia G-Sync. In HBR2 (High Bit Rate 2) mode, DisplayPort 1.2 has an effective bandwidth of 17.28 Gbit/s, which allows wide color gamut support and high resolution/refresh rate combinations of up to 4K at 75Hz. Multiple displays can be daisy-chained through a DisplayPort-Out port, and DisplayPort 1.2 comfortably handles 3840×2160 (4K) at 60Hz or 1080p at 144Hz.
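A quick way to sanity-check these mode claims is to compare the raw pixel data rate (width × height × refresh rate × bits per pixel) with the link's effective bandwidth. The sketch below is a rough lower-bound estimate, not a timing calculator: it assumes 24 bits per pixel and ignores blanking intervals, so real requirements are somewhat higher. The 17.28 Gbit/s figure comes from the text; the 8.16 Gbit/s effective rate for HDMI 1.4 is an assumption added for comparison, and the function names are illustrative.

```python
DP12_GBPS = 17.28    # effective DisplayPort 1.2 (HBR2) bandwidth, from the text
HDMI14_GBPS = 8.16   # assumed effective HDMI 1.4 data rate, for comparison


def required_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Raw pixel data rate in Gbit/s, ignoring blanking overhead."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def fits(width, height, refresh_hz, link_gbps, bits_per_pixel=24):
    """True if the active-pixel data rate is within the link bandwidth."""
    return required_gbps(width, height, refresh_hz, bits_per_pixel) <= link_gbps


for name, (w, h, hz) in {
    "4K @ 30Hz": (3840, 2160, 30),
    "4K @ 60Hz": (3840, 2160, 60),
    "4K @ 75Hz": (3840, 2160, 75),
    "1080p @ 144Hz": (1920, 1080, 144),
}.items():
    print(f"{name}: {required_gbps(w, h, hz):.2f} Gbit/s, "
          f"DP 1.2 ok={fits(w, h, hz, DP12_GBPS)}, "
          f"HDMI 1.4 ok={fits(w, h, hz, HDMI14_GBPS)}")
```

Under these assumptions 4K at 60Hz needs roughly 11.94 Gbit/s, which fits within DisplayPort 1.2 but not within the assumed HDMI 1.4 rate, consistent with HDMI 1.4 being limited to 4K at 30Hz.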

The newer DisplayPort 1.4 interface adds support for HDR10 and the Rec. 2020 color gamut, as well as 8K HDR at 60Hz and 4K HDR at 120Hz, by using DSC (Display Stream Compression) encoding with a 3:1 compression ratio.
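To see why compression matters here, the sketch below estimates the uncompressed data rate of the two HDR modes and divides it by the 3:1 DSC ratio. It is a back-of-the-envelope illustration only: it assumes 10 bits per color channel for HDR, full RGB with no chroma subsampling, and no blanking overhead, and it assumes an effective DisplayPort 1.4 (HBR3) bandwidth of 25.92 Gbit/s, a figure not stated in this article. The function names are hypothetical.

```python
DP14_GBPS = 25.92   # assumed effective DisplayPort 1.4 (HBR3) bandwidth
DSC_RATIO = 3.0     # 3:1 compression ratio, from the text


def raw_gbps(width, height, refresh_hz, bits_per_channel=10):
    """Uncompressed RGB data rate in Gbit/s, ignoring blanking."""
    return width * height * refresh_hz * bits_per_channel * 3 / 1e9


def dsc_gbps(width, height, refresh_hz, bits_per_channel=10):
    """Data rate after applying the 3:1 DSC ratio."""
    return raw_gbps(width, height, refresh_hz, bits_per_channel) / DSC_RATIO


for name, (w, h, hz) in {
    "8K HDR @ 60Hz": (7680, 4320, 60),
    "4K HDR @ 120Hz": (3840, 2160, 120),
}.items():
    raw = raw_gbps(w, h, hz)
    compressed = dsc_gbps(w, h, hz)
    print(f"{name}: raw {raw:.2f} Gbit/s (fits={raw <= DP14_GBPS}), "
          f"with DSC {compressed:.2f} Gbit/s (fits={compressed <= DP14_GBPS})")
```

Under these assumptions neither mode fits uncompressed, while both fit comfortably once the 3:1 ratio is applied.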

There is also a derivative of the DP interface: Mini DP, a smaller connector introduced by Apple.

Other interfaces

Type-C interface: it actually carries a DP-standard signal, and its advantage is that a single cable handles both the video signal and the monitor's power supply.

USB interface: many monitors include USB ports, such as USB 3.0. The monitor connects to the host computer through its upstream USB port, and users can then reach the host through the monitor's downstream USB ports.

Monitor eye protection basics

As digital information reaches ever deeper into daily life, people rely on computers for work, study and entertainment. Looking at a screen is unavoidable, and long sessions of watching videos, browsing the Internet or writing articles easily lead to eye strain, blurred vision, and sore, dry eyes. If we have to face a computer screen every day, then to protect our eye health we need an eye protection monitor.

However, when shopping for an eye protection monitor, people run into the following questions.

- Why should I choose an eye protection monitor, and which groups of people are suitable for eye protection monitors?

- What features of the monitor should I look for when choosing an eye protection monitor? What are the benefits of using an eye protection monitor?

- What cost-effective eye protection monitors are available for purchase?

Below we introduce what you need to know about eye protection monitors in detail.

1. Anti-blue light

When buying a computer, many users choose a monitor according to their needs: gamers choose a gaming monitor with a high refresh rate, and designers choose a color-accurate monitor with a wide color gamut. However, few people think about eye protection when buying a monitor, even though it deserves more attention. According to some research, a major cause of eye damage is the large amount of blue light in the LED backlight. Short-wavelength blue light from the screen shining into the eyes for long periods can kill retinal pigment epithelial cells and eventually lead to macular degeneration, which is an irreversible eye disease.

Since there is no medical cure for this disease, awareness of blue light protection is essential, for example by buying a display that supports blue light filtering. Certain screens have been certified by TÜV Rheinland of Germany, whose 2PfG display quality test standard measures the intensity and wavelength of blue light and the flicker as the screen brightness changes. A screen approved by TÜV Rheinland is an eye-friendly, flicker-free screen with blue light filtering.

2. Flicker-free screen

Screen flicker is another problem. The backlight of a typical LCD screen continuously alternates between bright and dark, producing brightness pulses. When the eye is exposed to sudden changes in brightness, the pupil widens or narrows and the ciliary muscle repeatedly contracts and relaxes to adjust the curvature of the lens. For the average user the change is too small to notice, but over long viewing sessions it can cause eye fatigue and, in severe cases, worsen myopia.

When buying a monitor, be sure to look for both main features: low blue light and a flicker-free screen. Flicker-free means the screen does not flicker at any brightness setting, which minimizes stress and fatigue on the eyes. How do you detect a flickering monitor? It is actually simple: point your phone's camera at the screen. On an ordinary monitor, flicker shows up clearly as rippling black stripes, while a flicker-free screen shows none at any brightness setting, and the picture stays clear and smooth.
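Why a phone camera reveals the stripes can be illustrated with a toy model. The sketch below assumes the camera reads its sensor row by row (a rolling shutter) and that a flickering backlight switches fully on and off in a fixed cycle; every number in it (1000 rows, 10 µs per row, a 4000 µs flicker period) is an invented illustration value, and exposure time is treated as instantaneous. It is a simplification, not a model of any particular phone or monitor.

```python
def row_samples(rows, row_time_us, period_us, on_time_us):
    """Backlight state (1 = lit, 0 = dark) seen by each sensor row.

    period_us == 0 models a flicker-free backlight that is always lit.
    """
    samples = []
    for r in range(rows):
        if period_us == 0:
            samples.append(1)
        else:
            # Each row is captured slightly later than the one above it.
            phase = (r * row_time_us) % period_us
            samples.append(1 if phase < on_time_us else 0)
    return samples


def count_dark_bands(samples):
    """Count runs of consecutive dark rows, i.e. visible black stripes."""
    bands = 0
    prev = 1
    for s in samples:
        if s == 0 and prev == 1:
            bands += 1
        prev = s
    return bands


flickering = row_samples(rows=1000, row_time_us=10, period_us=4000, on_time_us=2000)
flicker_free = row_samples(rows=1000, row_time_us=10, period_us=0, on_time_us=0)

print(count_dark_bands(flickering))    # stripes appear in the captured frame
print(count_dark_bands(flicker_free))  # no stripes
```

With these illustration values the flickering backlight produces two dark bands in one frame, while the always-lit backlight produces none.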