Discussions of asynchronous programming always involve a handful of concepts: processes, threads, parallelism, concurrency, and coroutines. When I first started programming I could not tell these concepts apart and could only write demos by following the documentation. After studying operating systems, they have gradually become clearer to me, so I am writing this post to record what I have learned.

1. Concurrent

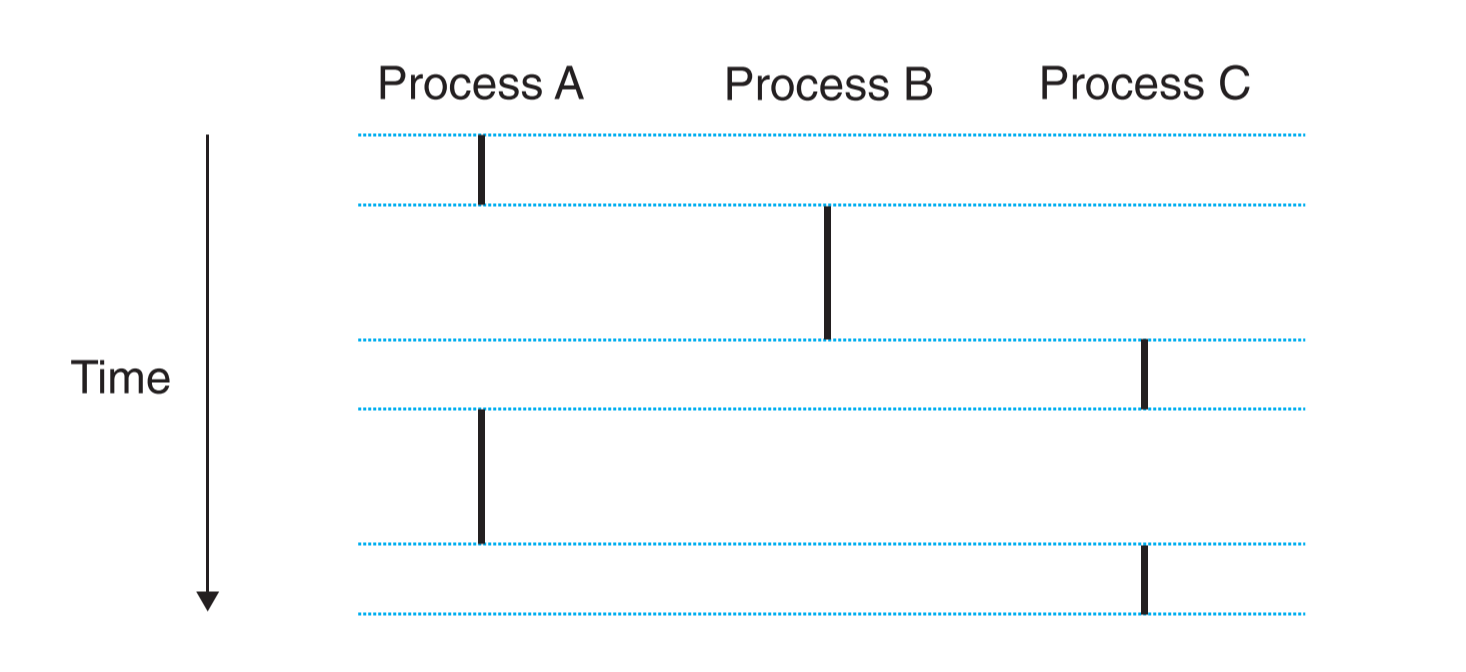

A logical flow whose execution overlaps in time with another flow is called a concurrent flow, and the two flows are said to run concurrently. More precisely, flows X and Y are concurrent with respect to each other if and only if X begins after Y begins and before Y finishes, or Y begins after X begins and before X finishes.

The paragraph above is the definition of concurrency from CSAPP (Computer Systems: A Programmer's Perspective). As long as two logical flows overlap in time, they are concurrent. For example, if you listen to music and read articles in the browser on the same computer, the music player and the browser are running concurrently. Note that concurrency does not mean that two programs execute at the same instant; it means that several programs are in progress during the same period of time, as the figure illustrates.

At any single point on the timeline only one program is executing, yet three programs are in progress over the period shown, so we say that these three programs are concurrent. A few years ago, when home computers had single-core CPUs, only one program could execute at any instant. The OS kernel scheduled the programs and let each one run for a short time slice in turn; the switching was so fast that they all appeared to run at the same time.
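To make the time-slicing idea concrete, here is a minimal simulation sketch. It is not how a real kernel scheduler works; the program names, the tick-based timeline, and the helper functions `round_robin`, `lifetime`, and `are_concurrent` are all assumptions made for illustration. It models one core that runs exactly one program per tick, then checks the quoted CSAPP definition against the resulting lifetimes.

```python
from collections import deque


def round_robin(programs, time_slice=1):
    """Simulate one CPU core: entry i of the result is the program run during tick i.

    `programs` maps a (hypothetical) program name to the ticks of work it needs.
    """
    remaining = dict(programs)
    ready = deque(programs)              # run queue, in arrival order
    timeline = []
    while ready:
        name = ready.popleft()
        run = min(time_slice, remaining[name])
        timeline.extend([name] * run)    # only this program runs during these ticks
        remaining[name] -= run
        if remaining[name] > 0:
            ready.append(name)           # not finished: go to the back of the queue
    return timeline


def lifetime(timeline, name):
    """Return (first tick, tick after the last one) in which `name` ran."""
    ticks = [i for i, running in enumerate(timeline) if running == name]
    return ticks[0], ticks[-1] + 1


def are_concurrent(x, y):
    """Quoted definition: one flow begins after the other begins and before it finishes."""
    (x_start, x_end), (y_start, y_end) = x, y
    return y_start < x_start < y_end or x_start < y_start < x_end


timeline = round_robin({"player": 3, "browser": 2, "editor": 3})
print(timeline)
print(are_concurrent(lifetime(timeline, "player"), lifetime(timeline, "browser")))  # True
```

Each tick holds a single program name, yet the lifetimes of all three programs overlap, which is exactly the single-core situation described above.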

2. Parallel

Parallelism is the execution of multiple instructions at the same instant, so it requires hardware that can do this, such as a multi-core CPU. Because multiple instructions can execute at the same time, multiple programs can naturally run at the same time, so anything that runs in parallel also runs concurrently; the converse does not hold. In other words, concurrency is dealing with many things over the same period, while parallelism is doing many things at the same instant.

3. Process

A process is a running instance of a program. The operating system kernel creates a process when a program is launched. A process consists of the program's code and data together with the stack and heap it uses at run time, and it is scheduled directly by the kernel.
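The "instance of a program" idea can be sketched with Python's standard `subprocess` module: launching the same program twice yields two separate processes, each with its own process ID. The one-line `PROGRAM` and the helper `launch_twice` are assumptions made for this illustration.

```python
import os
import subprocess
import sys

# A tiny "program": it prints the ID of the process that is running it.
PROGRAM = "import os; print(os.getpid())"


def launch_twice():
    """Start the same program twice and return the process IDs the children report."""
    children = [
        subprocess.Popen([sys.executable, "-c", PROGRAM],
                         stdout=subprocess.PIPE, text=True)
        for _ in range(2)
    ]
    pids = []
    for child in children:
        out, _ = child.communicate()     # wait for the child and read its output
        pids.append(int(out))
    return pids


pids = launch_twice()
print("parent:", os.getpid(), "children:", pids)
```

The two children run identical code, but the kernel treats them as two distinct processes, and both are distinct from the parent that launched them.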

4. Threads

A thread is a logical flow that runs inside a process; the threads of a process share its memory space. A process has at least one thread and can have many, and like processes, threads are scheduled by the kernel. The main difference between threads and processes lies in the resources each one owns. The following table, taken from Stack Overflow, summarizes which resources belong to the process and which belong to each thread.

| Per process items | Per thread items |
|---|---|
| Address space | Program counter |
| Global variables | Registers |
| Open files | Stack |
| Child processes | State |
| Pending alarms | |
| Signals and signal handlers | |
| Accounting information | |

Why do we need threads when we have processes?

- Threads of the same process share one memory space, which saves resources.

- Because a thread owns fewer resources than a process, switching between threads is faster than switching between processes.

- Because threads share memory and other resources (such as open files), it is easier for them to communicate and cooperate with each other.
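The split between shared and per-thread resources can be sketched with Python's `threading` module. In this minimal example (the names `shared_log`, `worker`, and `run_workers` are made up for illustration), every thread writes into one list that lives in the process, while each thread's `local_total` is a local variable on its own stack.

```python
import threading

shared_log = []                  # lives in the process; visible to every thread
log_lock = threading.Lock()      # serializes updates to the shared list


def worker(worker_id, steps):
    local_total = 0              # local variable: lives on this thread's own stack
    for step in range(steps):
        local_total += step
    with log_lock:
        shared_log.append((worker_id, local_total))   # write to shared memory


def run_workers(count=4, steps=1000):
    shared_log.clear()
    threads = [threading.Thread(target=worker, args=(i, steps)) for i in range(count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()                 # wait until every thread has finished
    return sorted(shared_log)


print(run_workers())
```

No copying or message passing is needed for the threads to report their results, which is the ease of communication the list above refers to. The price is that shared data must be protected, here with a lock.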

5. Coroutine

With coroutines, the programmer and programming language determine when to switch coroutines; in other words, tasks are cooperatively multitasked by pausing and resuming functions at set points, typically (but not necessarily) within a single thread.

Coroutines have become a very popular concept recently. Just as a single-core computer achieved concurrency through the kernel's process scheduling, coroutines achieve concurrency among multiple logical flows within a single thread, with the scheduling done by the program itself.
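As a small illustration, here is a sketch using Python's `asyncio`, which is one of several coroutine implementations and is chosen here only as an example. The coroutine name `task` and the labels "A" and "B" are assumptions. Two coroutines take turns at each `await`, and `asyncio.run` executes both on the thread that calls it.

```python
import asyncio


async def task(name, steps, log):
    for step in range(steps):
        log.append((name, step))
        await asyncio.sleep(0)       # switch point: hand control back to the event loop


async def main():
    log = []
    await asyncio.gather(task("A", 3, log), task("B", 3, log))
    return log


order = asyncio.run(main())
print(order)
# [('A', 0), ('B', 0), ('A', 1), ('B', 1), ('A', 2), ('B', 2)]
```

The two flows overlap in time, so they are concurrent, even though no second thread or process is involved.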

The advantage of coroutines over threads is that they use fewer resources. Threads are lighter than processes, but they are not free: as noted above, each thread has its own stack, so a large number of threads consumes a lot of memory, and the operating system limits how many threads can be created. Coroutines, on the other hand, are managed by the programming language runtime rather than the kernel, so a program can create far more of them (limited mainly by available memory), and many coroutines can share a single thread, which makes better use of resources.
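A rough sketch of this point, again assuming Python's `asyncio` as the coroutine implementation: the snippet below starts 10,000 coroutines and records which OS thread each one runs on. The names `tiny_task` and `run_many` and the figure of 10,000 are arbitrary choices for illustration.

```python
import asyncio
import threading


async def tiny_task(i, thread_ids):
    thread_ids.add(threading.get_ident())    # record the OS thread running this coroutine
    await asyncio.sleep(0)                   # yield once so the coroutines interleave
    return i


async def run_many(count):
    thread_ids = set()
    results = await asyncio.gather(*(tiny_task(i, thread_ids) for i in range(count)))
    return results, thread_ids


results, thread_ids = asyncio.run(run_many(10_000))
print(len(results), "coroutines ran on", len(thread_ids), "thread")
```

Creating 10,000 OS threads for the same job would be far more expensive, because each thread needs its own stack.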

Another difference is that threads are scheduled automatically and preemptively by the OS kernel, while coroutines are scheduled by the program or the language runtime, so the program has more control over when each coroutine runs.
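To show what "scheduled by the program" can look like, here is a toy scheduler built on Python generators. It is a sketch only, not how any particular runtime works, and `job`, `run`, `first_in_line`, and `last_in_line` are hypothetical names. Each `yield` marks a point where a job can be paused, and the `pick` function passed to `run` is the scheduling policy, which the program is free to change.

```python
def job(name, steps, log):
    """A generator-based coroutine: each `yield` is a point where it can be paused."""
    for step in range(steps):
        log.append(f"{name}{step}")
        yield


def run(jobs, pick):
    """Resume jobs one step at a time; `pick` decides which job goes next."""
    ready = list(jobs)
    while ready:
        current = pick(ready)
        ready.remove(current)
        try:
            next(current)             # resume the job until its next yield
            ready.append(current)     # still has work: back into the ready list
        except StopIteration:
            pass                      # finished: drop it


def first_in_line(ready):
    return ready[0]                   # round-robin: take turns


def last_in_line(ready):
    return ready[-1]                  # keep resuming the job that ran most recently


rr_log = []
run([job("A", 2, rr_log), job("B", 2, rr_log)], first_in_line)
print(rr_log)      # ['A0', 'B0', 'A1', 'B1']

lifo_log = []
run([job("A", 2, lifo_log), job("B", 2, lifo_log)], last_in_line)
print(lifo_log)    # ['B0', 'B1', 'A0', 'A1']
```

Swapping one small function changes the execution order completely, a degree of control that a program does not have over the kernel's thread scheduler.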