Packet reception process

For simplicity, we describe how Linux receives and sends network packets using the example of a UDP packet handled on a physical NIC, and we ignore details that are not relevant to that path.

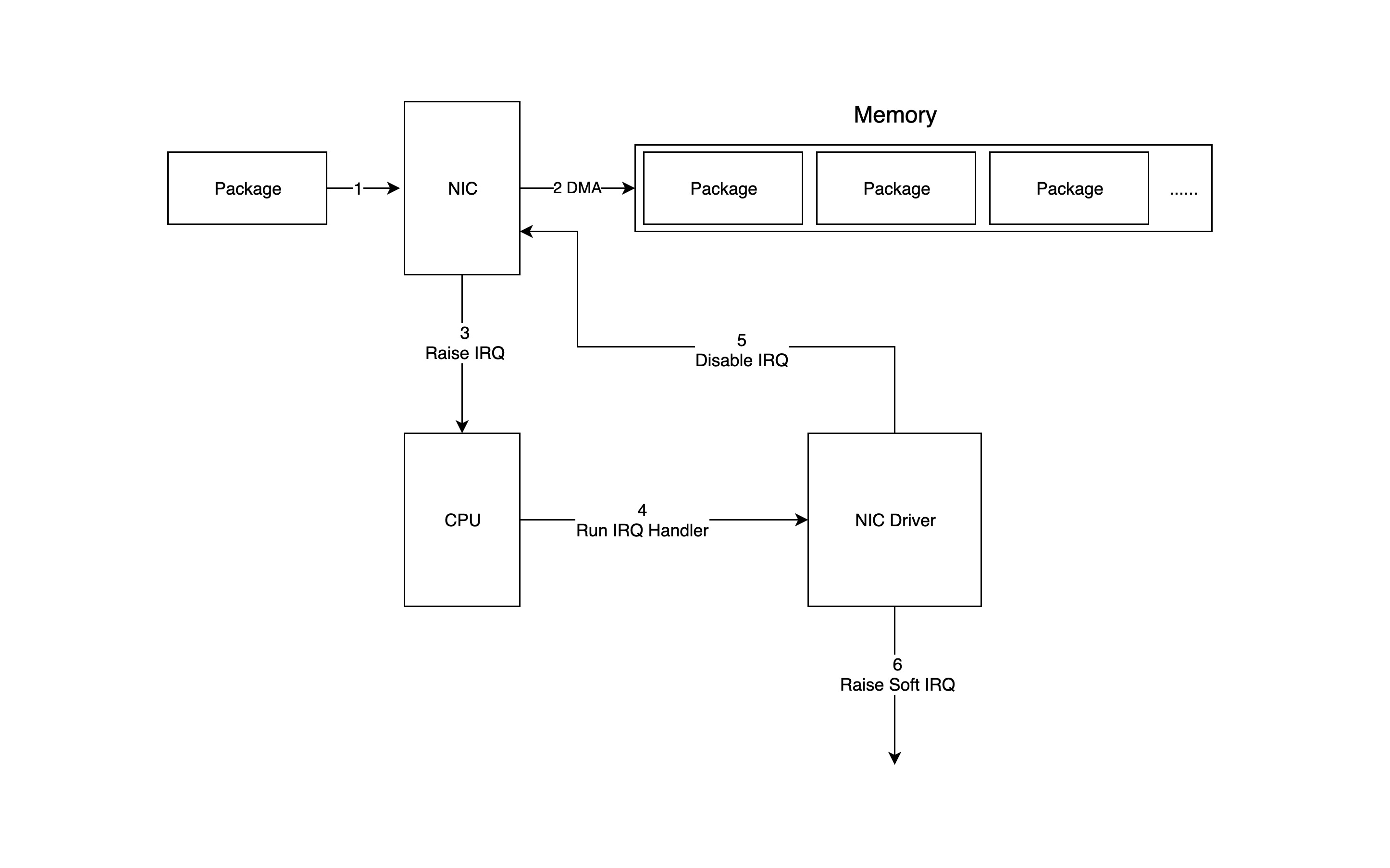

From NIC to memory

As we know, every network device (NIC) needs a driver to work, and the driver must be loaded into the kernel, either at boot time or later as a module. Logically, the driver is the intermediate module that bridges the network device and the kernel network stack. Whenever the network device receives a new packet, it triggers an interrupt, and the corresponding interrupt handler is provided by the driver that is loaded into the kernel.

The following diagram shows in detail how packets enter memory from the network device and are processed by the driver and network stack in the kernel.

- The packet arrives at the physical NIC. If the destination MAC address is not addressed to that NIC and promiscuous mode is not enabled on it, the packet is discarded.

- The physical NIC writes the packet via DMA to a memory region that the NIC driver has allocated and initialized.

- The physical NIC raises a hardware interrupt (IRQ) to notify the CPU that a new packet has arrived and needs to be processed.

- The CPU looks up the registered handler in the interrupt table and calls it; this handler in turn calls the corresponding function in the NIC driver.

- The driver first disables the NIC’s interrupts. This indicates that the driver already knows there is data in memory, so the NIC should write subsequent packets directly to memory without notifying the CPU. This improves efficiency and prevents the CPU from being interrupted constantly.

- The driver raises a soft interrupt (softirq) to continue processing the packets. A hard interrupt handler cannot be interrupted while it runs, so if it takes too long, the CPU cannot respond to other hardware interrupts. The kernel therefore provides soft interrupts, so that the time-consuming part of the work can be moved out of the hard interrupt handler and handled later in the soft interrupt handler.

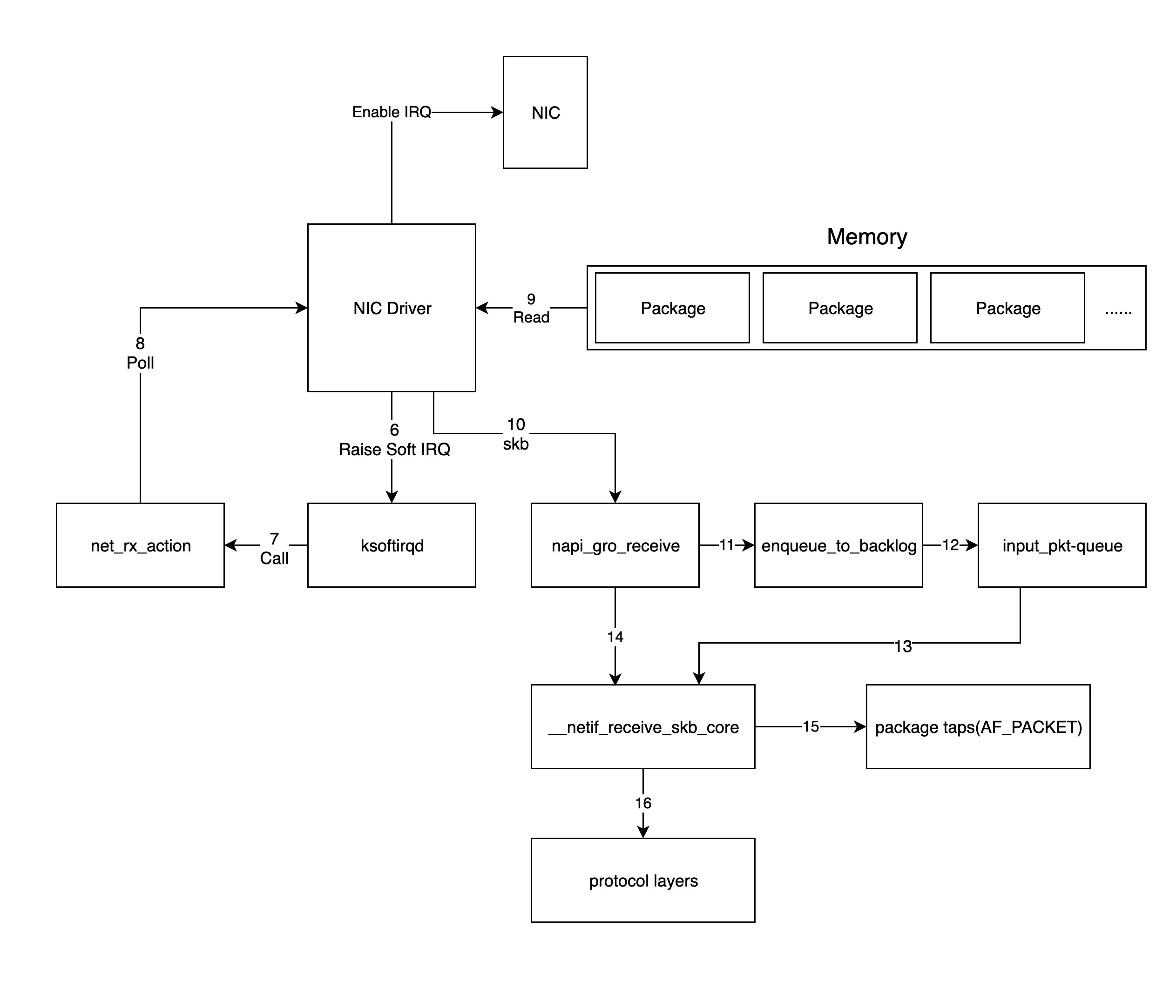
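The interplay between the hard interrupt, the disabled NIC interrupt, and the deferred polling can be sketched with a small user-space model. This is only an illustration under simplifying assumptions: `ToyNic` and its methods are hypothetical names, the "ring" is a plain deque, and nothing here corresponds to a real driver API.

```python
from collections import deque


class ToyNic:
    """Toy model of a NIC RX ring with interrupt mitigation (not a real driver)."""

    def __init__(self):
        self.rx_ring = deque()        # stands in for the DMA memory region
        self.irq_enabled = True       # whether the NIC may raise an RX interrupt
        self.poll_scheduled = False   # stands in for a raised soft interrupt
        self.hard_irqs = 0            # how many hard interrupts were taken

    def receive(self, packet):
        """NIC side: write the packet to memory, interrupt only if allowed."""
        self.rx_ring.append(packet)
        if self.irq_enabled:
            self.hard_irq()

    def hard_irq(self):
        """Driver hard-IRQ handler: do the minimum and defer the rest."""
        self.hard_irqs += 1
        self.irq_enabled = False      # the NIC keeps writing but stays silent
        self.poll_scheduled = True    # real work happens later in the softirq

    def poll(self, budget):
        """Softirq side: drain up to `budget` packets from the ring."""
        done = []
        while self.rx_ring and len(done) < budget:
            done.append(self.rx_ring.popleft())
        if not self.rx_ring:
            self.poll_scheduled = False
            self.irq_enabled = True   # ring is empty: re-enable the interrupt
        return done
```

In this model, a burst of five packets costs a single hard interrupt; the remaining packets are picked up by `poll`, and the interrupt is only re-enabled once the ring is empty.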

Kernel packet processing

The soft interrupt raised by the network device driver in the previous step triggers the soft interrupt handler in the kernel network module. The kernel then processes the packet as shown in the following diagram.

- For the soft interrupt raised by the driver in the previous step, the ksoftirqd process in the kernel calls the corresponding soft interrupt handler of the network module; to be precise, the `net_rx_action` function is called here.

- `net_rx_action` then calls the `poll` function in the NIC driver to process the packets one by one.

- The `poll` function lets the driver read the packets that the NIC wrote to memory; in fact, the format of the packets in memory is known only to the driver.

- The driver converts the packets in memory into the skb (socket buffer) format recognized by the kernel network module and then calls the `napi_gro_receive` function.

- The `napi_gro_receive` function handles GRO, that is, it merges the packets that can be merged so that only one call into the protocol stack is required, and then determines whether RPS is enabled; if it is, the `enqueue_to_backlog` function is called.

- The `enqueue_to_backlog` function puts the packet into the `input_pkt_queue` structure and returns.

  Note: If `input_pkt_queue` is full, the packet is dropped. The size of this queue can be configured with `net.core.netdev_max_backlog`.

- The CPU then processes the network data in its own `input_pkt_queue` in soft interrupt context, calling the `__netif_receive_skb_core` function to do so.

- If RPS is not enabled, the `napi_gro_receive` function calls the `__netif_receive_skb_core` function directly to process the packets.

- Immediately afterwards, the CPU copies the data to the socket of type AF_PACKET (raw socket), if there is one (this copy is what tcpdump captures).

- The packet is passed to the kernel TCP/IP stack for processing.

- When all packets in memory have been processed (the `poll` function has finished), the NIC’s hard interrupts are re-enabled so that the next time the NIC receives data it notifies the CPU again.

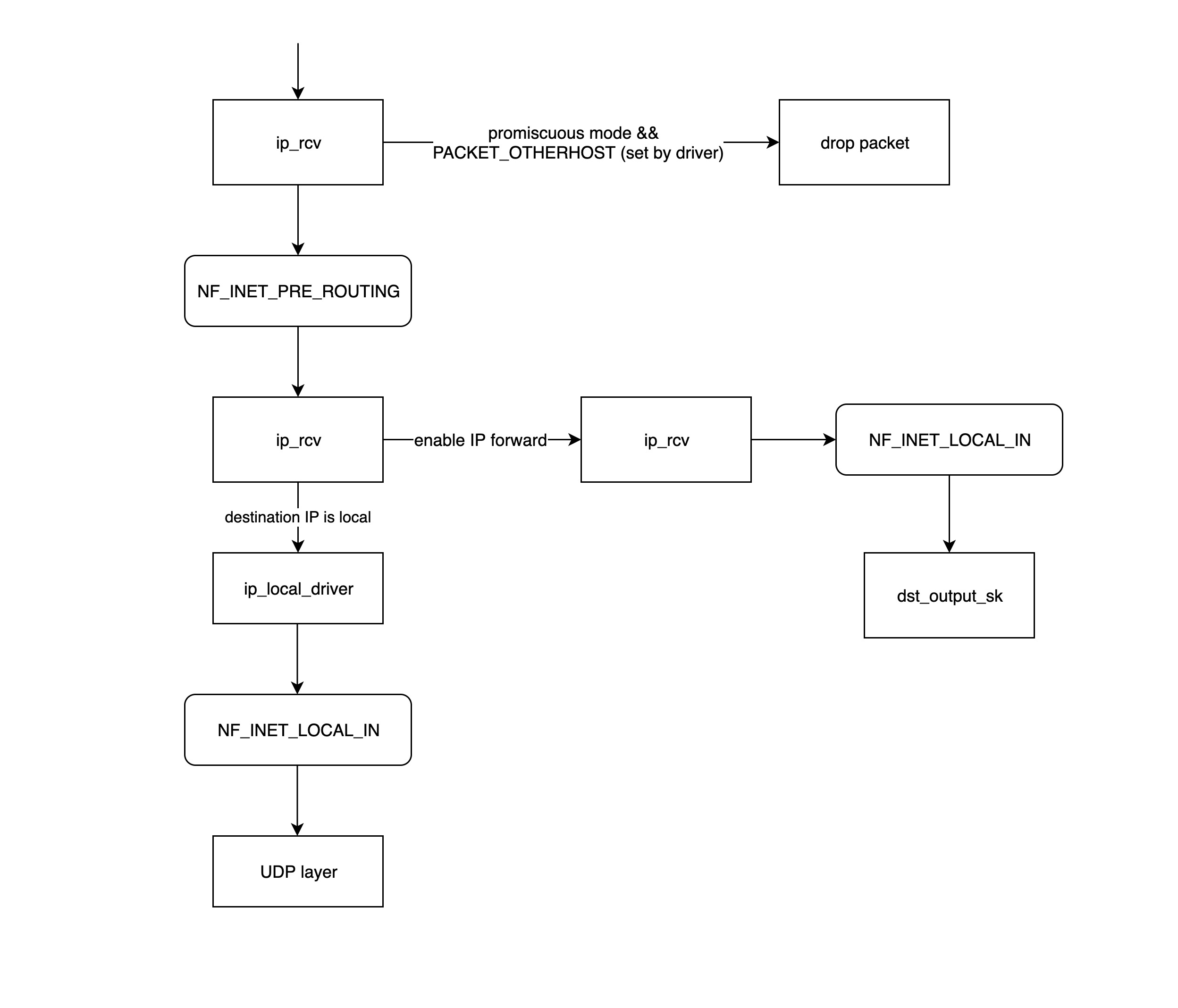
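Drops caused by a full `input_pkt_queue` can be observed from user space. The sketch below parses the contents of `/proc/net/softnet_stat`, where each line holds hexadecimal per-CPU counters; it relies only on the first three columns (packets processed, packets dropped because the backlog was full, and the number of times the softirq ran out of budget). The helper names and the sample text are illustrative assumptions, not taken from the original article.

```python
def parse_softnet_stat(text):
    """Parse /proc/net/softnet_stat content into one dict per line."""
    stats = []
    for row, line in enumerate(text.splitlines()):
        fields = line.split()
        if len(fields) < 3:
            continue  # skip blank or unexpected lines
        processed, dropped, time_squeeze = (int(f, 16) for f in fields[:3])
        stats.append({
            "row": row,                    # line index, one line per online CPU
            "processed": processed,        # packets handled in softirq context
            "dropped": dropped,            # backlog queue was full
            "time_squeeze": time_squeeze,  # softirq budget or time ran out
        })
    return stats


def total_dropped(stats):
    """Sum the backlog drops over all lines."""
    return sum(entry["dropped"] for entry in stats)


def read_softnet_stat(path="/proc/net/softnet_stat"):
    """Read and parse the live counters (Linux only)."""
    with open(path) as handle:
        return parse_softnet_stat(handle.read())


# Made-up sample with two CPUs, only for demonstration.
SAMPLE = "0000272d 00000000 00000001 00000000\n0001a2b3 0000000f 00000000 00000000\n"
```

If the `dropped` column keeps growing on a busy host, raising `net.core.netdev_max_backlog` is one of the knobs worth looking at.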

Kernel Network Protocol Stack

The packets that reach the kernel TCP/IP stack at this point are Layer 3 (network layer) packets, so they first go to the IP network layer and then to the transport layer for processing.

IP Network Layer

- `ip_rcv` is the entry function of the IP network layer processing module. It first determines whether the packet needs to be discarded (the destination MAC address is not that of the current NIC, and the packet was only received because the NIC is in promiscuous mode). If further processing is required, it calls the processing functions registered on the `NF_INET_PRE_ROUTING` chain in netfilter.

- `NF_INET_PRE_ROUTING` is a hook placed in the protocol stack by netfilter. Packet processing functions can be injected at this hook through iptables to modify or drop packets; if the packet is not dropped, it continues through the stack.

  The processing logic in netfilter chains such as `NF_INET_PRE_ROUTING` can be set via `iptables`.

- Routing: if the destination IP is not a local IP, the packet is dropped when IP forwarding is not enabled; when forwarding is enabled, it goes to the `ip_forward` function for processing.

- The `ip_forward` function first calls the processing functions registered by netfilter on the NF_INET_FORWARD chain; if the packet is not dropped, it goes on to call the `dst_output_sk` function.

- The `dst_output_sk` function calls the appropriate function at the IP network layer to send the packet out; the details of this step are described in the next section on sending packets.

- `ip_local_deliver`: if the route processing above finds that the destination IP is a local IP, the `ip_local_deliver` function is called. It first calls the relevant processing functions on the NF_INET_LOCAL_IN chain, and if the packet passes, it is handed up to the transport layer.

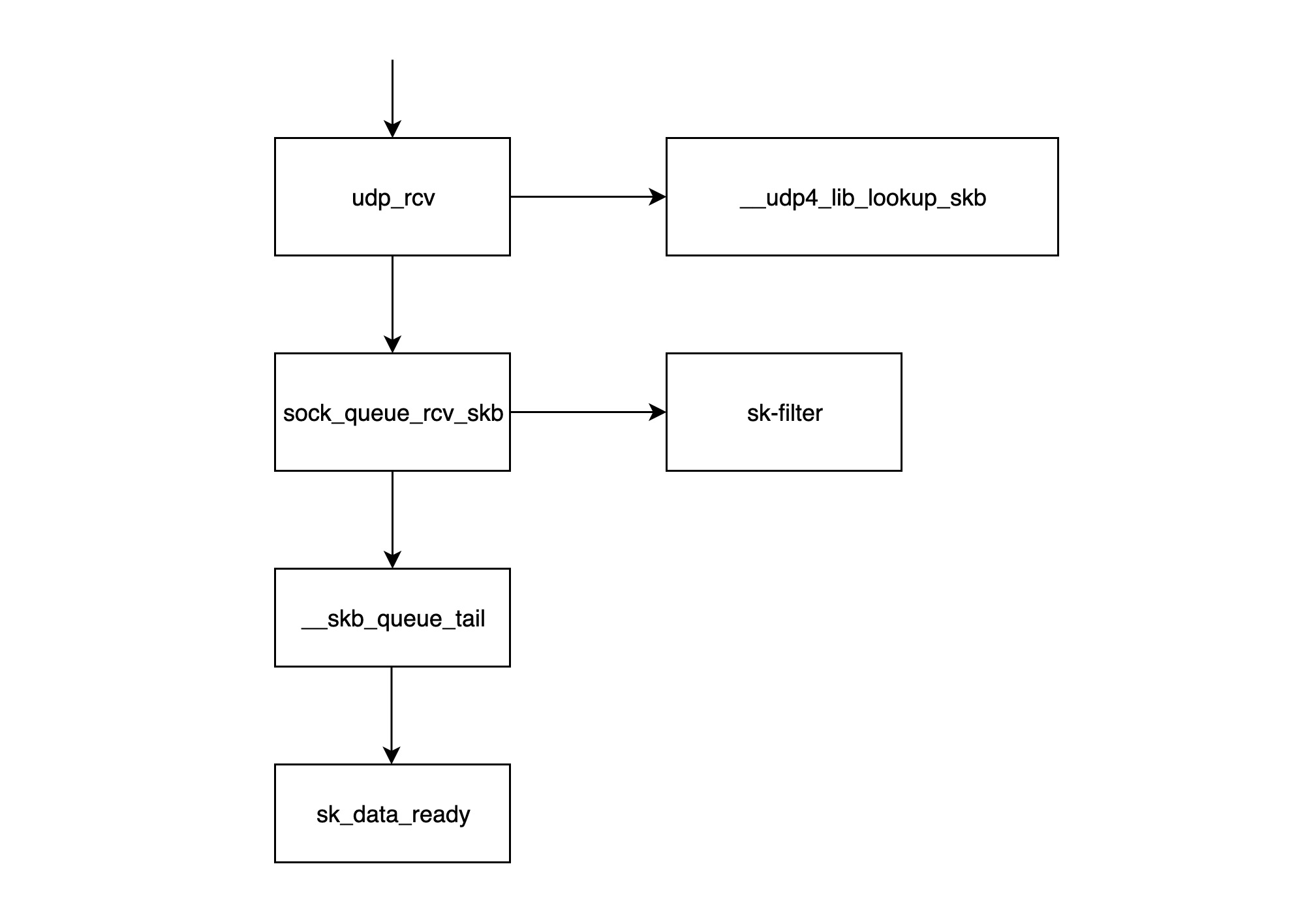
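The branching between local delivery, forwarding, and dropping can be summarized in a few lines. The function below is a hypothetical toy model: it only returns the names of the stages a packet would pass through, and the `prerouting_accepts` flag stands in for whatever rules are installed on the PRE_ROUTING chain.

```python
import ipaddress


def ip_receive_path(dst_ip, local_ips, forwarding_enabled, prerouting_accepts=True):
    """Return the stages a received IP packet traverses in this toy model."""
    path = ["ip_rcv", "NF_INET_PRE_ROUTING"]
    if not prerouting_accepts:
        return path + ["DROP"]          # a netfilter rule dropped the packet

    path.append("routing")
    dst = ipaddress.ip_address(dst_ip)
    local = {ipaddress.ip_address(ip) for ip in local_ips}

    if dst in local:
        # Destination is this host: deliver upwards.
        return path + ["ip_local_deliver", "NF_INET_LOCAL_IN", "transport layer"]
    if not forwarding_enabled:
        # Not for us and we are not a router.
        return path + ["DROP"]
    # Not for us, but forwarding is on: hand over to the send path.
    return path + ["ip_forward", "NF_INET_FORWARD", "dst_output_sk"]
```

For example, with `local_ips=["192.168.1.10"]` a packet to `192.168.1.10` ends at the transport layer, while a packet to any other address is either forwarded or dropped depending on `forwarding_enabled`.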

Transport layer

- The `udp_rcv` function is the entry function of the UDP processing layer module. It first calls the `__udp4_lib_lookup_skb` function to find the corresponding socket based on the destination IP and port (a socket here is basically a structure identified by IP + port). If no matching socket is found, the packet is discarded; otherwise processing continues.

- `sock_queue_rcv_skb`: this function first checks whether the socket’s receive buffer is full and discards the packet if it is. It then calls `sk_filter` to check whether the packet meets the filter conditions; if a filter is set on the socket and the packet does not meet its conditions, the packet is also discarded.

- The `__skb_queue_tail` function puts the packet at the end of the socket’s receive queue.

- `sk_data_ready` notifies the socket that data is ready.

- After `sk_data_ready` is called, the packet has been fully processed and waits to be read by the application layer.
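The lookup, buffer check, filter check, and enqueue steps above can be sketched as a toy model. All class and attribute names are hypothetical, and the accounting is simplified: the model counts payload bytes, whereas the kernel charges the full size of the skb against the receive buffer.

```python
from collections import deque


class ToyUdpSocket:
    """Toy receive side of a UDP socket."""

    def __init__(self, rcvbuf):
        self.rcvbuf = rcvbuf      # receive buffer limit in bytes
        self.queued_bytes = 0
        self.queue = deque()      # receive queue read by the application
        self.filter = None        # optional predicate, stands in for sk_filter
        self.ready_events = 0     # how often "data ready" was signalled


class ToyUdpLayer:
    """Toy UDP receive path keyed by (ip, port)."""

    def __init__(self):
        self.sockets = {}

    def bind(self, ip, port, sock):
        self.sockets[(ip, port)] = sock

    def lookup(self, dst_ip, dst_port):
        """Exact match first, then a wildcard bind on 0.0.0.0."""
        sock = self.sockets.get((dst_ip, dst_port))
        if sock is None:
            sock = self.sockets.get(("0.0.0.0", dst_port))
        return sock

    def udp_rcv(self, dst_ip, dst_port, payload):
        sock = self.lookup(dst_ip, dst_port)
        if sock is None:
            return "no socket"                     # packet is discarded
        if sock.queued_bytes + len(payload) > sock.rcvbuf:
            return "rcvbuf full"                   # packet is discarded
        if sock.filter is not None and not sock.filter(payload):
            return "filtered"                      # packet is discarded
        sock.queue.append(payload)                 # tail of the receive queue
        sock.queued_bytes += len(payload)
        sock.ready_events += 1                     # wake up the reader
        return "queued"
```

Each return value other than `"queued"` corresponds to one of the places in the list where a packet can be lost before the application ever sees it.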

Note: All of the processing described above runs in soft interrupt context.

The packet sending process

Logically, sending a Linux network packet is the reverse of receiving one. We again use the example of a UDP packet sent through a physical NIC.

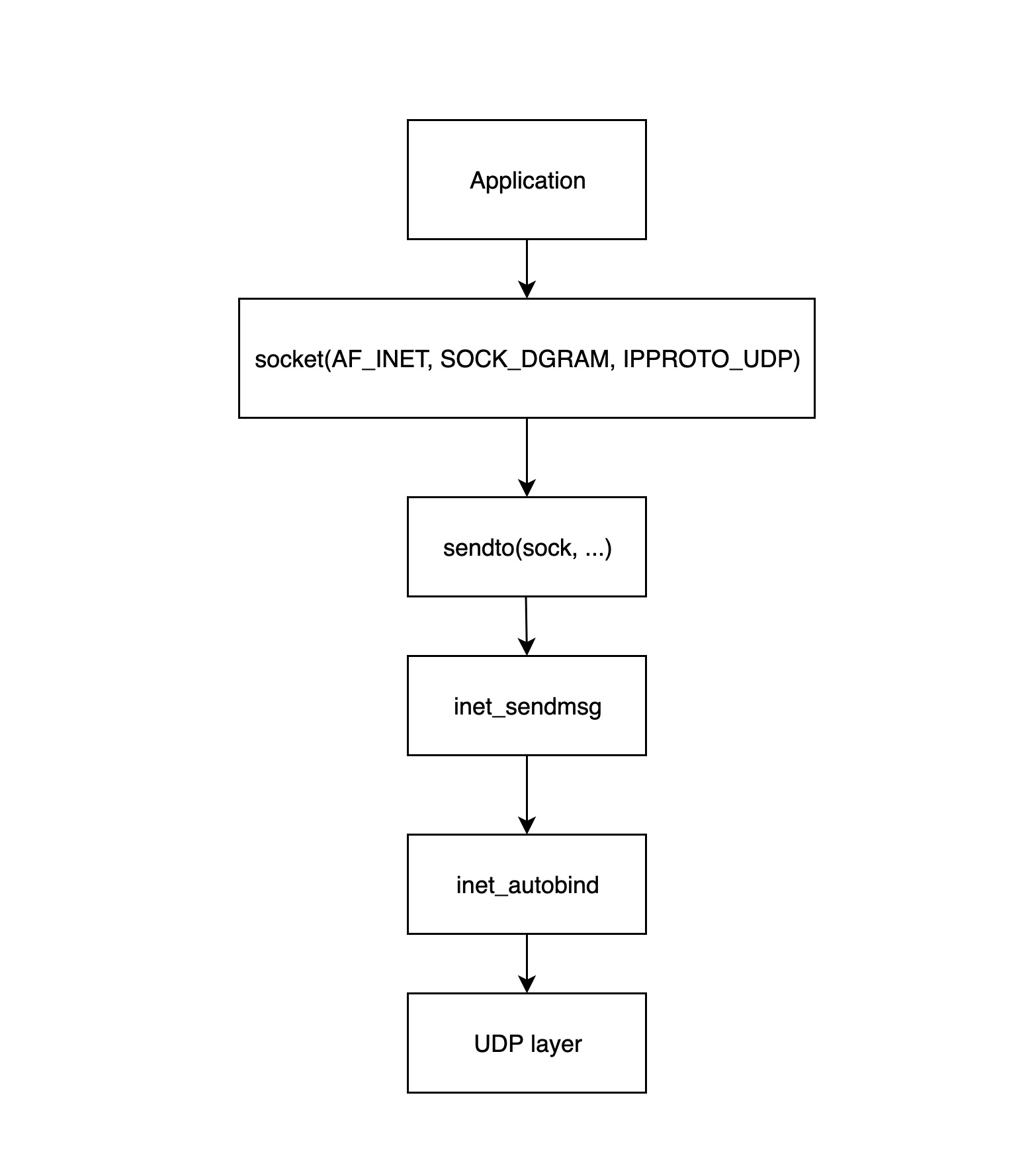

Application layer

The application layer process starts with the application calling the Linux network interface to create a socket. The diagram below shows in detail how the application layer creates the socket and hands data to the transport layer.

- `socket(...)`: called to create a socket structure and initialize the corresponding operation functions.

- `sendto(sock, ...)`: called by the application layer program to start sending packets; this function calls the `inet_sendmsg` function described next.

- `inet_sendmsg`: this function mainly checks whether the current socket has a bound source port; if not, it calls the `inet_autobind` function to assign one, and then calls the UDP layer function to transmit the data.

- The `inet_autobind` function calls the `get_port` function to get an available port.

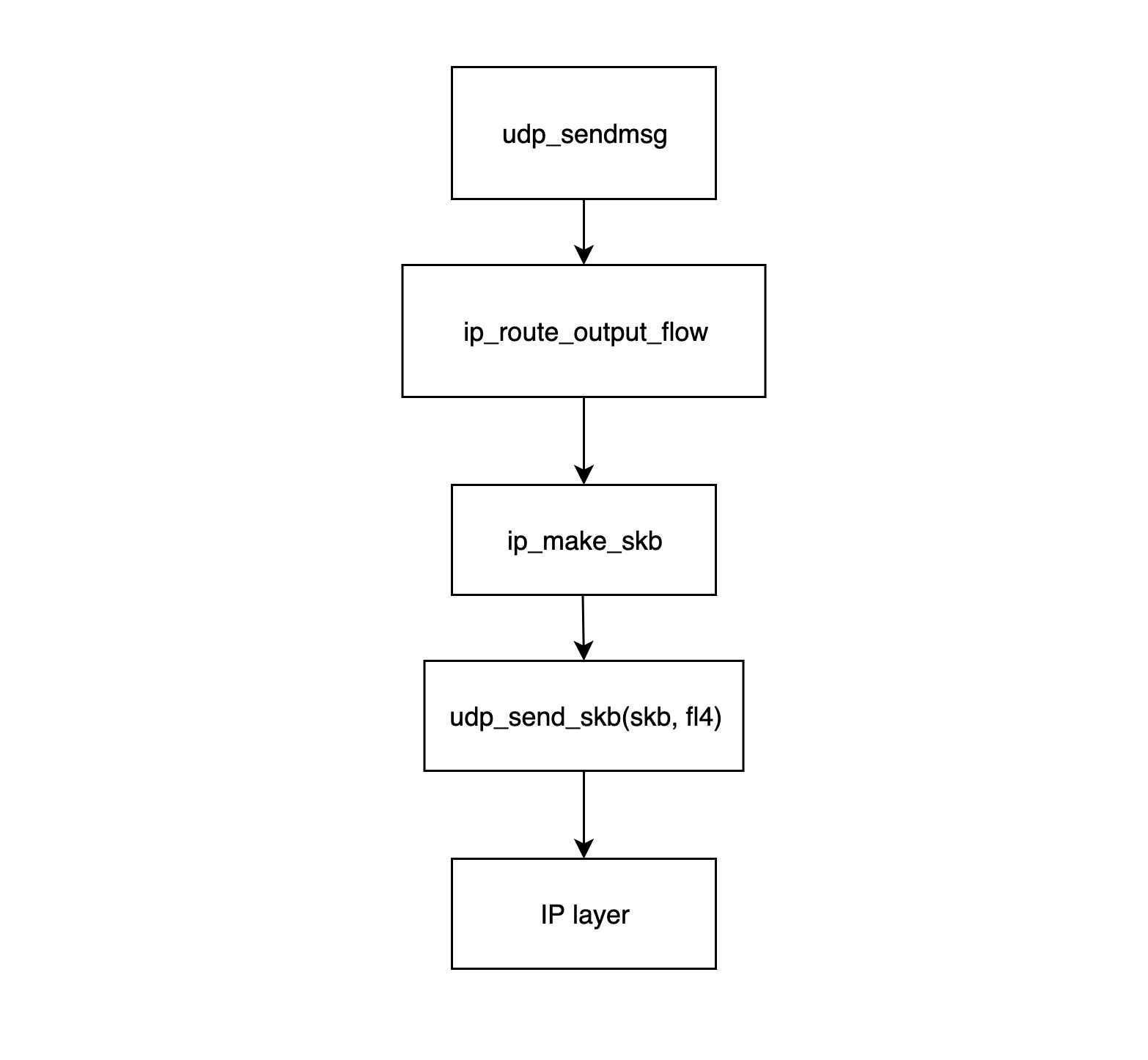
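The automatic source port assignment is visible from user space. The sketch below uses only the standard `socket` module and sends one datagram over the loopback interface; it assumes a Linux host, where an unbound UDP socket reports port 0 until the first `sendto`. The function name `demo_autobind` is made up for this example.

```python
import socket


def demo_autobind():
    """Show that sendto() on an unbound UDP socket assigns a source port."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))       # let the kernel pick a free port
    receiver.settimeout(2.0)              # do not hang if something goes wrong
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        port_before = sender.getsockname()[1]       # not bound yet
        sender.sendto(b"ping", receiver.getsockname())
        port_after = sender.getsockname()[1]        # assigned during sendto()
        data, peer = receiver.recvfrom(1024)
    finally:
        sender.close()
        receiver.close()
    return port_before, port_after, data, peer[1]
```

The source port seen by the receiver is the same port that `getsockname()` reports on the sender after the first send, which is the port that was picked on the sender's behalf.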

Transport layer

- The `udp_sendmsg` function is the entry point of the UDP transport layer module for sending packets. This function first calls the `ip_route_output_flow` function to get the routing information (mainly the source IP and the NIC), then calls `ip_make_skb` to construct the skb structure, and finally associates the NIC information with the skb.

- The `ip_route_output_flow` function mainly deals with routing information. Based on the routing table and the destination IP, it determines from which network device the packet should be sent. If the socket is not bound to a source IP, the function also selects the most appropriate source IP based on the routing table. If the socket is bound to a source IP but the NIC corresponding to that source IP cannot reach the destination according to the routing table, the packet is discarded and an error is returned to indicate that sending failed. Finally, the function stores the selected network device and source IP in the `flowi4` structure and returns it to the `udp_sendmsg` function.

- The `ip_make_skb` function constructs the skb and assigns the IP header (including the source IP information) to it. It calls the `__ip_append_data` function to fragment the data where necessary and to check whether the socket’s send buffer has been exhausted; if it has, an `ENOBUFS` error is returned.

- The `udp_send_skb(skb, fl4)` function fills in the UDP header in the skb and handles the checksum, and then passes the skb to the corresponding function in the IP network layer.

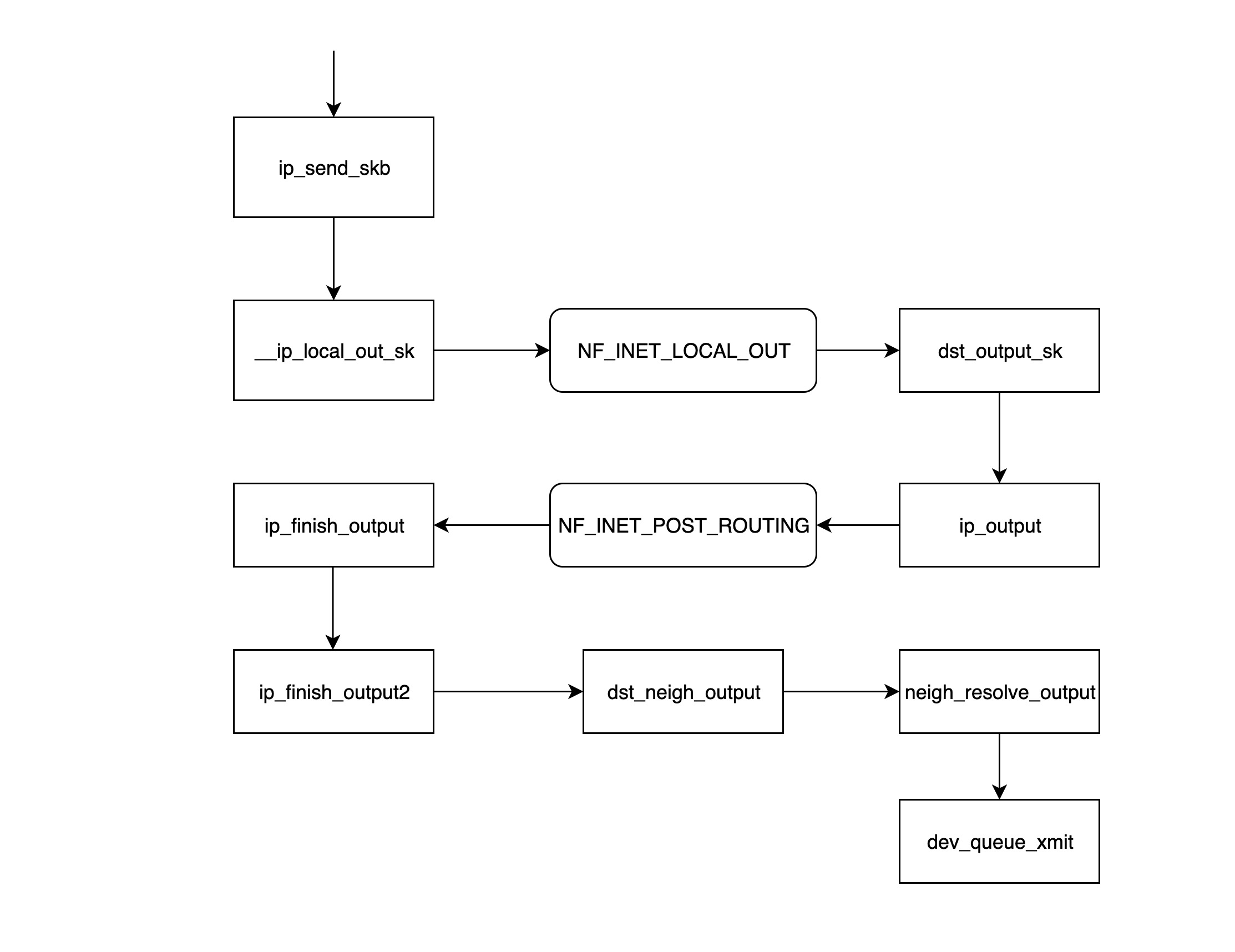
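To make the header and checksum step concrete, here is a minimal user-space sketch that builds a UDP segment and computes its checksum over the IPv4 pseudo-header, as defined for UDP over IPv4. It is not the kernel code path (which often offloads the checksum to the NIC); the function names and the example addresses are assumptions chosen for illustration.

```python
import socket
import struct


def ones_complement_sum(data: bytes) -> int:
    """16-bit one's complement sum of `data`, padded to an even length."""
    if len(data) % 2:
        data += b"\x00"
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
    while total >> 16:                       # fold the carries back in
        total = (total & 0xFFFF) + (total >> 16)
    return total


def pseudo_header(src_ip: str, dst_ip: str, udp_length: int) -> bytes:
    """IPv4 pseudo-header that is covered by the UDP checksum."""
    return (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
            + struct.pack("!BBH", 0, socket.IPPROTO_UDP, udp_length))


def build_udp_segment(src_ip, dst_ip, src_port, dst_port, payload: bytes) -> bytes:
    """Return UDP header + payload with the checksum filled in."""
    length = 8 + len(payload)                # the UDP header is 8 bytes
    header = struct.pack("!HHHH", src_port, dst_port, length, 0)
    pseudo = pseudo_header(src_ip, dst_ip, length)
    checksum = ~ones_complement_sum(pseudo + header + payload) & 0xFFFF
    if checksum == 0:
        checksum = 0xFFFF                    # 0 means "no checksum" in UDP
    header = struct.pack("!HHHH", src_port, dst_port, length, checksum)
    return header + payload
```

A receiver can verify the segment by summing the pseudo-header and the full segment, checksum included: the folded result is `0xFFFF` when the data is intact.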

IP Network Layer

- `ip_send_skb` is the entry function of the IP network layer module for sending packets; it essentially calls the series of functions that follow to send network layer packets.

- The `__ip_local_out_sk` function sets the length and checksum of the IP header and then calls the processing functions registered on the netfilter hook chain NF_INET_LOCAL_OUT.

- NF_INET_LOCAL_OUT is a netfilter hook point; the processing functions on this chain can be configured via iptables. If the packet is not discarded, it continues along the path.

- `dst_output_sk`: this function calls the corresponding output function, `ip_output`, based on the information inside the skb.

- The `ip_output` function writes the NIC information obtained earlier in `udp_sendmsg` into the skb and then calls the processing functions registered on the netfilter hook chain NF_INET_POST_ROUTING.

- NF_INET_POST_ROUTING is a netfilter hook point; the processing functions on this chain can be configured via iptables. Source address translation (SNAT) is mainly configured at this step, which can change the routing information of the skb.

- The `ip_finish_output` function determines whether the routing information has changed since the previous step. If so, the `dst_output_sk` function needs to be called again (when it is called again, it may not take the branch that calls `ip_output`, but instead the output function specified by netfilter, possibly xfrm4_transport_output); otherwise processing continues.

- The `ip_finish_output2` function finds the next hop address in the routing table based on the destination IP, then calls the `__ipv4_neigh_lookup_noref` function to find the next hop’s neigh information in the ARP table, and calls the `__neigh_create` function to construct an empty neigh structure if none is found.

- The `dst_neigh_output` function calls the `neigh_resolve_output` function to obtain the neigh information and fill the MAC address from it into the skb, and then calls the `dev_queue_xmit` function to send the packet.

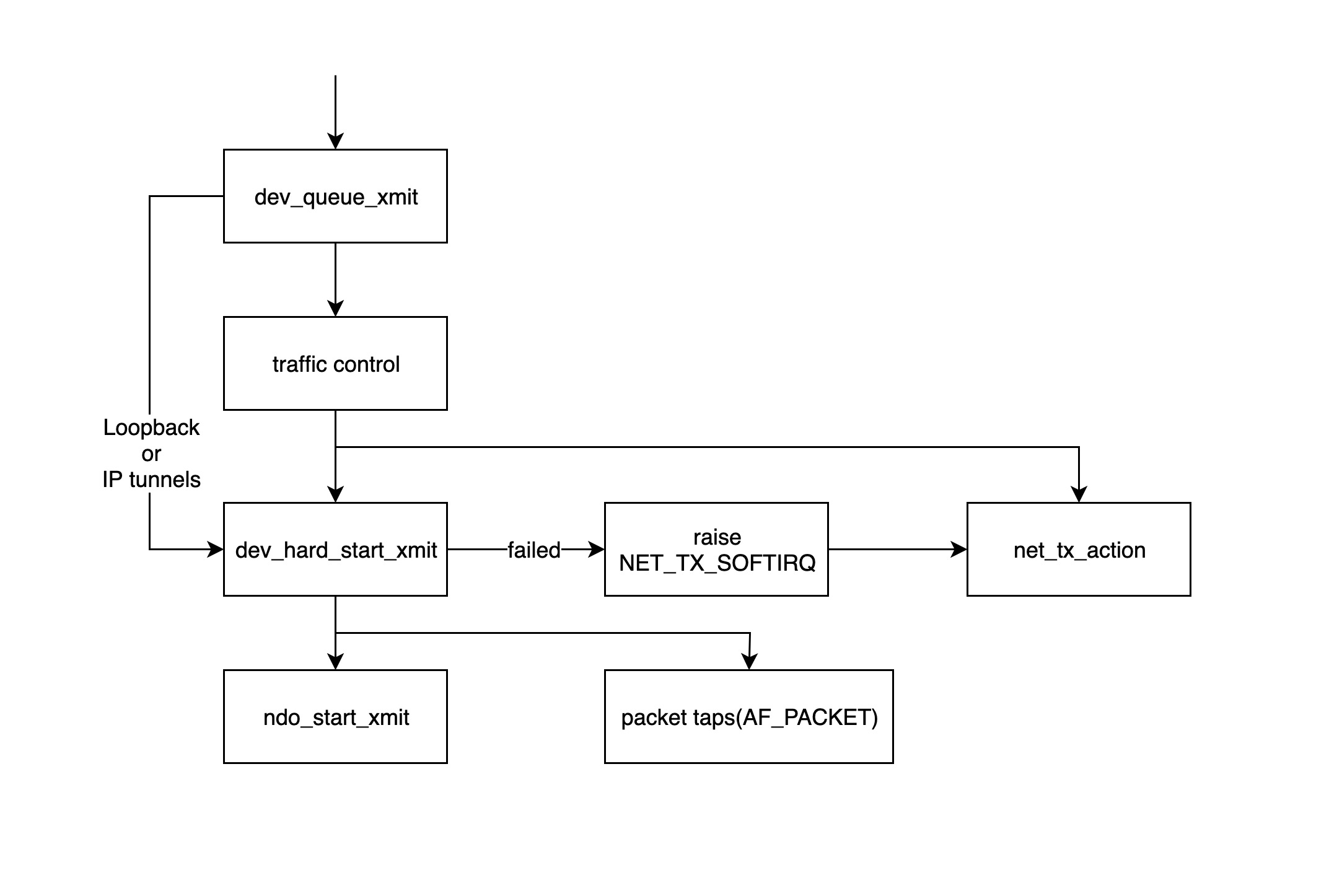
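The next-hop and neighbor lookup at the end of this list can be illustrated with a toy model. It performs a longest-prefix match over a small route list and then consults a dictionary that stands in for the ARP table, creating an unresolved entry when nothing is found. The function names, the route tuple layout, and the `"INCOMPLETE"` label are assumptions made for this sketch, not kernel data structures.

```python
import ipaddress


def find_next_hop(dst_ip, routes):
    """Longest-prefix match over (prefix, gateway_or_None, device) tuples."""
    dst = ipaddress.ip_address(dst_ip)
    best = None
    for prefix, gateway, device in routes:
        network = ipaddress.ip_network(prefix)
        if dst in network and (best is None or network.prefixlen > best[0].prefixlen):
            best = (network, gateway, device)
    if best is None:
        raise LookupError(f"no route to {dst_ip}")
    _, gateway, device = best
    # On-link destinations have no gateway: the next hop is the destination.
    return (gateway or dst_ip), device


def lookup_neigh(neigh_table, next_hop):
    """Return the neighbor entry, creating an unresolved one if missing."""
    entry = neigh_table.get(next_hop)
    if entry is None:
        entry = {"mac": None, "state": "INCOMPLETE"}   # to be resolved via ARP
        neigh_table[next_hop] = entry
    return entry
```

With a default route via `192.168.1.1` and an on-link route for `192.168.1.0/24`, a packet to `8.8.8.8` needs the gateway's MAC address, while a packet to `192.168.1.20` needs the MAC address of the destination itself.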

Kernel packet processing

- The `dev_queue_xmit` function is the entry point where the kernel starts processing outgoing packets. It first gets the qdisc of the device. If there is none (e.g. loopback or IP tunnels), the `dev_hard_start_xmit` function is called directly; otherwise the packet goes through the traffic control module.

- The traffic control module mainly filters and prioritizes packets; if the queue is full, packets are dropped. For details, see: http://tldp.org/HOWTO/Traffic-Control-HOWTO/intro.html

- The `dev_hard_start_xmit` function first copies the skb to the “packet taps” (from which the tcpdump command gets its data), then calls the `ndo_start_xmit` function to send the packet. If `dev_hard_start_xmit` returns an error, its caller stores the skb and raises the soft interrupt NET_TX_SOFTIRQ, so that the soft interrupt handler `net_tx_action` can retry the transmission later.

- The `ndo_start_xmit` function pointer is bound to the transmit function of the specific driver.
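The two branches of `dev_queue_xmit` (direct transmit versus going through a queue that can overflow) can be sketched as follows. This is a toy model under simplifying assumptions: `ToyTxPath` is a hypothetical name, the qdisc is a plain FIFO with a fixed limit, and `driver_xmit` is any callable that returns `True` when the driver accepted the packet.

```python
from collections import deque


class ToyTxPath:
    """Toy model of the device transmit path with an optional FIFO qdisc."""

    def __init__(self, driver_xmit, qdisc_limit=None):
        self.driver_xmit = driver_xmit      # stands in for the driver transmit hook
        self.qdisc = None if qdisc_limit is None else deque()
        self.qdisc_limit = qdisc_limit
        self.dropped = 0

    def dev_queue_xmit(self, skb):
        if self.qdisc is None:
            # No queueing discipline (e.g. loopback): call the driver directly.
            return "sent" if self.driver_xmit(skb) else "busy"
        if len(self.qdisc) >= self.qdisc_limit:
            self.dropped += 1               # queue is full: tail drop
            return "dropped"
        self.qdisc.append(skb)
        self.run_queue()
        return "accepted"

    def run_queue(self):
        """Hand queued packets to the driver until it reports busy."""
        while self.qdisc and self.driver_xmit(self.qdisc[0]):
            self.qdisc.popleft()
```

Calling `run_queue` again later plays the role of the retry from the soft interrupt: packets that the driver could not take stay at the head of the queue until it has room.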

Note: `ndo_start_xmit` points to the transmit function of the specific NIC driver. From this step on, sending the packet is the responsibility of the network device driver. Different drivers handle this differently, but the general process is basically the same:

- The driver puts the skb into the NIC’s own transmit queue.

- The driver notifies the NIC to send the packet.

- The NIC sends an interrupt to the CPU after it finishes sending.

- The driver cleans up the skb after receiving the interrupt.
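These four driver steps can be modelled with a small ring structure. The sketch is purely illustrative: `ToyTxRing` and its method names are hypothetical, and a real driver works with DMA descriptors rather than Python lists.

```python
from collections import deque


class ToyTxRing:
    """Toy model of a NIC transmit ring and its completion handling."""

    def __init__(self, size):
        self.size = size
        self.pending = deque()   # handed to the NIC, not yet on the wire
        self.sent = deque()      # transmitted, skb not yet cleaned up
        self.freed = []          # skbs released by the driver

    def start_xmit(self, skb):
        """Driver: queue the skb if a ring slot is free."""
        if len(self.pending) + len(self.sent) >= self.size:
            return False         # ring full, the caller has to retry later
        self.pending.append(skb)
        return True

    def nic_send_all(self):
        """NIC: transmit all pending frames; True means 'raise an interrupt'."""
        if not self.pending:
            return False
        self.sent.extend(self.pending)
        self.pending.clear()
        return True

    def tx_complete_irq(self):
        """Driver: on the completion interrupt, free the transmitted skbs."""
        freed_now = list(self.sent)
        self.freed.extend(freed_now)
        self.sent.clear()
        return freed_now
```

Note that in this model a slot only becomes reusable after the completion interrupt has been handled, which is why cleaning up promptly matters for throughput.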

Summary

Once we understand how Linux receives and sends network packets, we know where packets can be monitored and modified, and in which cases they may be dropped. In particular, knowing where the netfilter hooks sit in this path helps us understand how iptables is used, and it also helps us better understand the virtual network devices available under Linux.