We all know that Linux manages memory in pages. Whether it is loading data from disk into memory or writing data from memory back to disk, the operating system works in units of pages. Even if we change only one byte of data, the entire page containing that byte has to be written back to disk.

Linux supports both normal-sized memory pages and large memory pages (Huge Page). The default page size on most processors is 4KB. Some processors use 8KB, 16KB, or 64KB as the default, but 4KB is still the mainstream default page size in operating systems. In addition to the normal page size, different processors also support large pages of different sizes.
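To see which sizes a particular machine actually uses, something like the following sketch can help. It relies on the standard `os.sysconf` and `mmap.PAGESIZE` interfaces, and it assumes a Linux system that reports a `Hugepagesize:` line in `/proc/meminfo`; the helper names `base_page_size` and `default_huge_page_size` are made up for this illustration.

```python
import mmap
import os


def base_page_size() -> int:
    """Return the base (normal) page size in bytes for the running system."""
    if hasattr(os, "sysconf") and "SC_PAGE_SIZE" in os.sysconf_names:
        return os.sysconf("SC_PAGE_SIZE")
    return mmap.PAGESIZE  # portable fallback


def default_huge_page_size():
    """Return the default huge page size in bytes, or None if not reported.

    Assumption: a Linux kernel that exposes "Hugepagesize:" in /proc/meminfo.
    """
    try:
        with open("/proc/meminfo") as f:
            for line in f:
                if line.startswith("Hugepagesize:"):
                    # The value is reported in kB, e.g. "Hugepagesize: 2048 kB"
                    return int(line.split()[1]) * 1024
    except OSError:
        pass
    return None


if __name__ == "__main__":
    print("base page size:", base_page_size(), "bytes")
    print("default huge page size:", default_huge_page_size())
```

On most x86-64 machines the first value is likely to be 4096, but the point of the sketch is that the number comes from the system rather than being hard-coded.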

The 4KB memory page is actually a historical legacy: it was settled on in the 1980s and has been retained until today. Although today's hardware is far more capable than it was then, we still use the page size that prevailed at that time. Those who have built a machine should be familiar with the memory sticks shown in the following figure.

In today's world, a 4KB memory page size may not be the best choice, and 8KB or 16KB may be better, but 4KB was a tradeoff made in the past for specific scenarios. Instead of getting too hung up on the 4KB number, we should pay more attention to the factors that determined this outcome, so that when we encounter similar scenarios we can weigh the same aspects and make the best choice for the moment. This article covers the following two factors that affect memory page size:

- A page size that is too small produces more page table entries, increasing lookup time and the additional overhead around the TLB (Translation Lookaside Buffer) during addressing.

- Excessive page size wastes memory space, causes memory fragmentation, and reduces memory utilization.

Both factors were fully considered when memory page sizes were designed in the last century, and a 4KB memory page was finally chosen as the most common page size for operating systems. The following sections detail how each factor affects operating system performance.

Page table entries

In Linux, each process sees its own separate virtual memory space. That virtual memory space is only a logical concept: the process still needs to access the physical memory behind it, and the translation from virtual memory to physical memory relies on a page table held by each process.

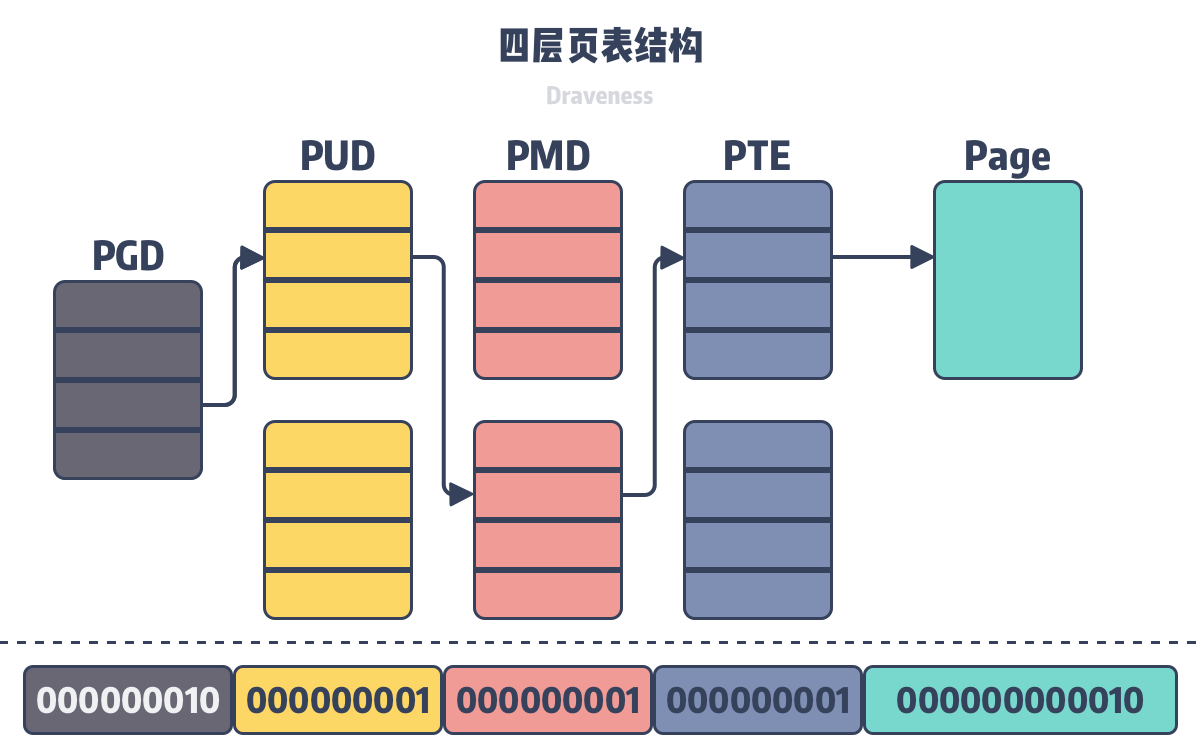

To hold the mappings for the 128 TiB of virtual memory available on 64-bit operating systems, Linux introduced a four-level page table in 2.6.11 to assist in virtual address translation, and a five-level page table structure in 4.11. More levels may be introduced in the future to support full 64-bit virtual addresses.

In the four-level page table structure shown above, the operating system uses the lowest 12 bits of a 48-bit virtual address as the page offset. The remaining 36 bits are divided into four groups of 9 bits, and each group is the index into the table at one level, which yields the location of the table at the next level. Every virtual address can be translated to its physical address by walking this multi-level page table.
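The bit layout can be made concrete with a small sketch. It assumes the common x86-64 arrangement of a 48-bit virtual address, a 12-bit offset, and four 9-bit indices; the function name `split_virtual_address` and the constants are illustrative, not kernel identifiers.

```python
OFFSET_BITS = 12   # 4096-byte pages
INDEX_BITS = 9     # 512 entries per table (assumed x86-64 layout)
LEVELS = 4
VA_BITS = OFFSET_BITS + INDEX_BITS * LEVELS  # 48


def split_virtual_address(addr: int):
    """Split a virtual address into ([top-level index, ..., leaf index], offset).

    Only the low 48 bits are interpreted; higher bits are ignored here.
    """
    addr &= (1 << VA_BITS) - 1
    offset = addr & ((1 << OFFSET_BITS) - 1)
    rest = addr >> OFFSET_BITS
    indices = []
    for _ in range(LEVELS):
        # Peel off the lowest 9 bits: this is the index for the lowest
        # level not yet extracted.
        indices.append(rest & ((1 << INDEX_BITS) - 1))
        rest >>= INDEX_BITS
    indices.reverse()  # put the top-level index first
    return indices, offset


if __name__ == "__main__":
    indices, offset = split_virtual_address(0x7FFF_DEAD_BEEF)
    print("indices (top level first):", indices, "offset:", offset)
```

A real page table walk would use each index to read an entry that points at the next table; the sketch only shows how the address bits are carved up.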

Because the virtual address space has a fixed size and is evenly divided into N memory pages of the same size, the page size ultimately determines the depth of the page table hierarchy and the number of page table entries in each process. The smaller the virtual page, the more virtual pages and page table entries a single process has.
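As a rough illustration of how quickly the number of pages grows, the sketch below counts the pages needed to cover a 128 TiB address space at several page sizes. The function name `pages_to_cover` is hypothetical, and the figures are an upper bound: a real process maps only a small part of its address space.

```python
TIB = 1 << 40


def pages_to_cover(space_bytes: int, page_size: int) -> int:
    """Number of pages (and therefore leaf page table entries) needed to
    map every byte of an address space of the given size."""
    return -(-space_bytes // page_size)  # ceiling division


if __name__ == "__main__":
    space = 128 * TIB
    for page_size in (512, 4096, 16 * 1024, 64 * 1024):
        print(f"{page_size:>6} B pages -> {pages_to_cover(space, page_size):,} pages")
```

Shrinking the page from 4096 to 512 bytes multiplies the page count by eight, which is the growth the paragraph above describes.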

Since the current virtual page size is 4096 bytes, the lowest 12 bits of a virtual address give the offset within the page. If the virtual page size dropped to 512 bytes, the offset would need only 9 bits, and, assuming each level still indexes 9 bits, the four-level or five-level page table would grow to five or six levels. This would not only add overhead to every memory access, but also increase the memory occupied by page table entries in each process.
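The level count can be checked with a little arithmetic. This is a sketch under stated assumptions: the address width is 48 bits (four-level) or 57 bits (five-level), and each level keeps indexing 9 bits by default; `levels_needed` is a made-up helper name.

```python
def levels_needed(va_bits: int, page_size: int, index_bits: int = 9) -> int:
    """Page table levels required to translate a va_bits-wide address.

    page_size must be a power of two; index_bits is the number of address
    bits consumed per level (assumed to be 9 unless stated otherwise).
    """
    if page_size <= 0 or page_size & (page_size - 1):
        raise ValueError("page_size must be a power of two")
    offset_bits = page_size.bit_length() - 1
    remaining = va_bits - offset_bits
    return -(-remaining // index_bits)  # ceiling division


if __name__ == "__main__":
    for va_bits in (48, 57):
        for page_size in (4096, 512):
            print(va_bits, page_size, levels_needed(va_bits, page_size))
```

If a table itself had to fit in a 512-byte page with 8-byte entries, each level could index only 6 bits, and `levels_needed(48, 512, index_bits=6)` rises to 7, so the 9-bit assumption is the optimistic case.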

Fragmentation

Because memory mapping hardware works at the level of memory pages, the OS treats a virtual page as the smallest unit of memory allocation. Even if a user program requests just 1 byte of memory, the OS will allocate a whole virtual page for it, as shown below. If the size of a memory page is 16KB, then requesting 1 byte of memory will waste ~99.9939% of the space.
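The waste from rounding a request up to whole pages can be computed directly. The sketch below is plain arithmetic, with `wasted_fraction` as an illustrative name; it ignores the fact that user-space allocators usually carve many small requests out of one page.

```python
def wasted_fraction(request_bytes: int, page_size: int) -> float:
    """Fraction of the allocated memory left unused when a request is
    rounded up to a whole number of pages."""
    if request_bytes <= 0 or page_size <= 0:
        raise ValueError("sizes must be positive")
    pages = -(-request_bytes // page_size)  # ceiling division
    allocated = pages * page_size
    return (allocated - request_bytes) / allocated


if __name__ == "__main__":
    for page_size in (4 * 1024, 16 * 1024, 64 * 1024):
        pct = wasted_fraction(1, page_size) * 100
        print(f"{page_size // 1024:>2} KiB page, 1-byte request: {pct:.4f}% wasted")
```

For a 1-byte request, a 4 KiB page wastes about 99.9756% of the page and a 16 KiB page about 99.9939%.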

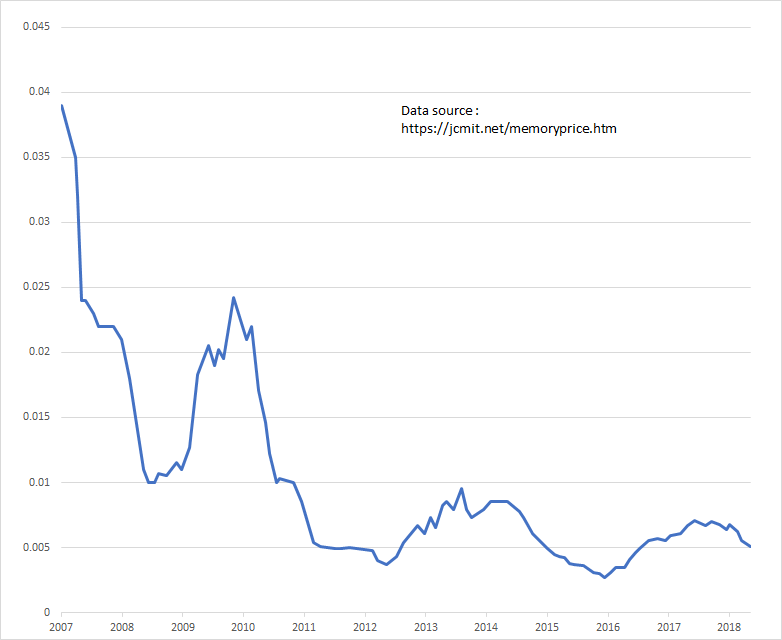

As the size of memory pages increases, memory fragmentation becomes more severe. Smaller memory pages reduce fragmentation and improve memory utilization. Today memory is far more abundant than it was in the last century: in most cases it is not the resource that limits a program, and most online services need more CPU rather than more memory. In the last century, however, memory was a scarce resource, so improving its utilization was something that had to be considered.

Memory sticks in the 1980s and 1990s held only 512KB or 2MB and were ridiculously expensive, whereas several gigabytes of memory are very common today. Memory utilization is still important, but now that memory prices have dropped dramatically, fragmentation is no longer a critical issue.

In addition to memory utilization, larger memory pages also add overhead to memory copying because of the copy-on-write mechanism on Linux. When multiple processes share the same block of memory, a copy of the affected memory page is triggered as soon as one of the processes modifies the shared virtual memory. The smaller the OS memory page, the smaller the additional overhead from copy-on-write.
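A simplified model makes the copy-on-write cost visible. It assumes every page touched by a write is still shared and is therefore copied in full exactly once; the function `cow_bytes_copied` is hypothetical and does not call into the kernel.

```python
def cow_bytes_copied(offset: int, length: int, page_size: int) -> int:
    """Bytes copied when `length` bytes starting at `offset` are written
    to a shared region, under the simplified model that each touched page
    is shared and gets copied as a whole."""
    if length <= 0:
        return 0
    first_page = offset // page_size
    last_page = (offset + length - 1) // page_size
    return (last_page - first_page + 1) * page_size


if __name__ == "__main__":
    for page_size in (4 * 1024, 64 * 1024, 2 * 1024 * 1024):
        copied = cow_bytes_copied(0, 1, page_size)
        print(f"1-byte write with {page_size} B pages copies {copied} bytes")
```

Under this model a single-byte write costs one full page copy, so the copy grows in direct proportion to the page size.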

Summary

As we mentioned above, a 4KB memory page was the default setting decided in the last century. From today's perspective, this is probably already the wrong choice, as architectures such as arm64 and ia64 can already support memory pages of 8KB, 16KB, and so on. As memory becomes cheaper and systems carry more of it, larger memory pages may be a better choice for the OS. Let's revisit the two factors that determine memory page size:

- A page size that is too small produces more page table entries, increasing lookup time and the additional overhead around the TLB (Translation Lookaside Buffer) during addressing, but it also reduces memory fragmentation in the program and improves memory utilization.

- Excessive page size wastes memory space, causes memory fragmentation and reduces memory utilization, but reduces page table entries in the process and the addressing time of the TLB.

Similar tradeoffs are common in system design. To take an imperfect analogy, when we deploy services on a cluster, the resources on each node are limited, and the resources occupied by a single service instance affect both the resource utilization of the cluster and the additional overhead of the system. If we deploy 32 services with 1 CPU each, we can fully utilize the resources in the cluster, but such a large number of instances results in a large additional overhead. If we deploy 4 services with 8 CPUs each, the additional overhead is small, but the instances may leave a lot of gaps on the nodes.

Finally, here are some more open-ended related questions that interested readers can think about.

- What are the differences and connections between sectors, blocks, and pages in Linux?

- How is block size determined in Linux? What are the common sizes?