1. Background

1.1 Problems currently encountered with Jenkins

- Unstable orchestration engine

Jenkins is an orchestration engine written in Java, and a Full GC in the JVM triggers a stop-the-world (STW) pause. During large builds, these pauses can leave Jenkins unable to process new requests.

- Large builds stall

Jenkins stores its data in files on disk: every pipeline and every build occupies its own directory and generates a large number of files. The number of pipelines is usually limited, but once builds reach the 10,000+ level, disk IO noticeably slows Jenkins down.

- High cost of developing plugins

Although Jenkins already has many plugins, a CICD system still needs custom plugins to integrate with the wide variety of internal systems. Developing a Jenkins plugin requires mastering Java and learning the Jenkins plugin mechanism. A plugin uses the Jenkins runtime as its entry point and extends it: first, find the class to extend in the Jenkins package documentation, based on the functionality you need; then extend that class in the plugin's main class to implement your own business logic.

- Poor Concurrency Performance

Because of Jenkins' inherent architectural limitations, you cannot run multiple replicas of it on Kubernetes. Kubernetes-based Jenkins hits a clear concurrency bottleneck at around 400 concurrent builds, and going further would require a major upgrade at the architectural level.

1.2 Requirements for CICD

- Cross-network

Services are moving to the cloud, but code cannot leave the company. We need to orchestrate pipelines on the cloud while building container images on the intranet.

- Large-scale pipeline execution

CICD provides a run-once runtime. Because CICD is an automation system, every additional execution saves more manual labor. In the future, CICD will host more and more scenarios, such as cluster installation and certificate inspection…

- Zero-downtime operation and maintenance

Previously, maintenance of the orchestration engine had to be scheduled in the early hours of the morning, because each Jenkins restart took several minutes, during which the CICD system could not provide service.

- Short delivery time and continuous iteration

Our goal was not to design a large, perfect system up front; we wanted to validate the idea quickly, put it into use, and then keep iterating to optimize and improve the system.

2. Comparison of Options

2.1 What are the characteristics of a good CICD tool

A good CICD tool should have the following characteristics:

- User (U): the outer DSL is simple and easy to master

- Developer (D): the inner DSL is efficient and easy to maintain

- Ecosystem (E): a rich ecosystem with many reusable atoms

These three dimensions, U, D, and E, can be used to rate a CICD tool. Here is a comparison of some common CI tools:

- Jenkins

The outer DSL is a Jenkinsfile written in Groovy, and the inner engine is Jenkins itself, written in Java. U and D are weak, and Jenkins is hard to maintain, but its huge plugin ecosystem makes E a big plus.

- GitLab CI

The outer DSL is a .gitlab-ci.yml file written in YAML, and the inner engine is a parsing engine written in Ruby that works with a Runner written in Go. U is good: it is quick to get started with, and we had already written some GitLab CI documentation. D is weak: Ruby is only average for this purpose, and fewer and fewer people know it. E is worse: although templates offer atom-level reuse similar to Jenkins shared libraries, the reuse rate across teams is very low, which does not help build a community ecosystem.

- Tekton

The outer DSL is a PipelineRun description written in YAML, and the inner engine is a controller written in Go that continuously runs orchestration processes on Kubernetes Pods. In terms of plugins, the Tekton community currently offers over a hundred reusable plugins.

2.2 Tekton vs Jenkins

In recent orchestration engine market share surveys, Jenkins has held more than half of the market for years in a row, the result of nearly 20 years of accumulation and a winner-takes-most effect. But after entering the cloud-native era, the infrastructure changed and Jenkins did not keep up well, so Jenkins X abandoned Jenkins and switched to Tekton as its default orchestration engine. Since the cost of developing our own orchestration engine is too high, here is a comparison of Jenkins and Tekton.

| Feature | Jenkins | Tekton |
|---|---|---|
| Programming language | Java | Go |
| Plugin development language | Java | Shell, YAML |
| Pipeline description language | Groovy, Shell | YAML, Shell |
| Plugin ecosystem | Many plugins, e.g. LDAP, GitLab | Not yet sufficient |
| Number of plugins | 1500+ | 100+ |
| Compatibility between plugins | May conflict; plugins cannot be upgraded freely | Fully compatible |
| Secondary development | Wrap the Java API | Compose Tasks |
| High availability | Integration with Gearman, master-slave model | Relies on Kubernetes |
| Concurrent builds per instance | Several hundred | Depends on Kubernetes' Pod management capacity; can be very large |
| Data storage | Local disk | etcd |
| Automatic triggering | Supported | Supported |
| Commercial support | No | No |

3. Tekton-based solutions

3.1 What components are included in Tekton

- Pipeline

The base module for CI/CD workflows, used to create Tasks and Pipelines.

- Triggers

Event triggers for CI/CD workflows that can be used to automatically trigger pipelines based on events.

- CLI

Command line tool for managing CICD workflows.

- Dashboard

A general-purpose web UI for managing pipelines.

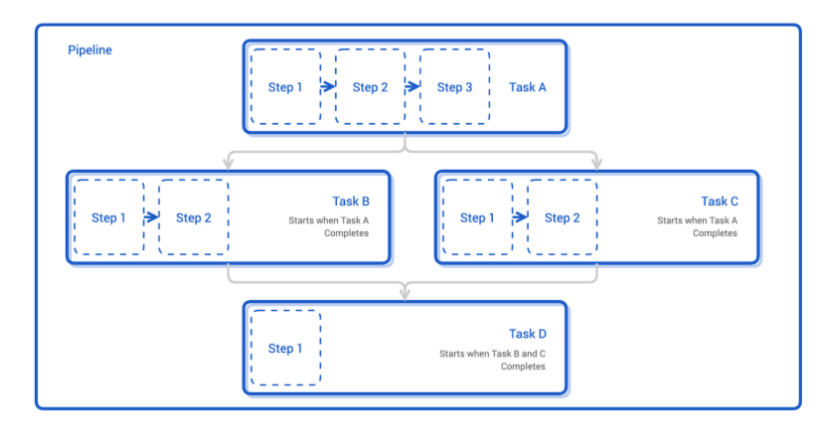

3.2 What does the Tekton pipeline consist of

The diagram above shows a Pipeline. A Pipeline is typically composed of multiple Tasks, each running in its own Pod. Tasks can be executed serially or in parallel. Each Task contains several Steps, which are executed serially, and each Step runs in its own container. Here is an example of a pipeline executing a simple script.

3.3 Support for Multi-Cluster Builds

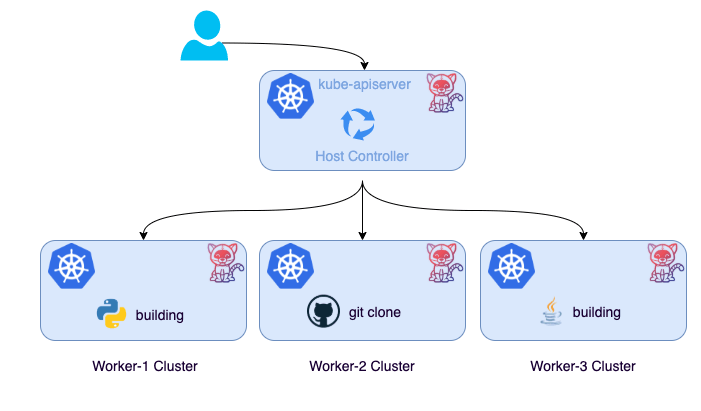

Tekton defines all of its resources as CRDs, which means we can easily distribute pipeline resources across multiple clusters with open source components such as KubeFed v2, Karmada, and Open Cluster Management.

As shown above, we create all pipeline resources in the host cluster for metadata management. The resources are then distributed across the different clusters using an open source multi-cluster management scheme. Each cluster is a separate build environment, which effectively spreads the load of the CICD pipelines.

Under the current resource plan, server resources on the company's intranet are very limited, so we need to run orchestration on cloud resources as much as possible. The host cluster and some worker clusters are on the public network, while the clusters performing CI builds must be on the intranet.

This setup would require tunneling the network between the host cluster and the worker clusters. Opening such a tunnel jeopardizes the security of the intranet, so using open source multi-cluster components to achieve this is not feasible. We did not stop there, though: the design inspired our own approach.

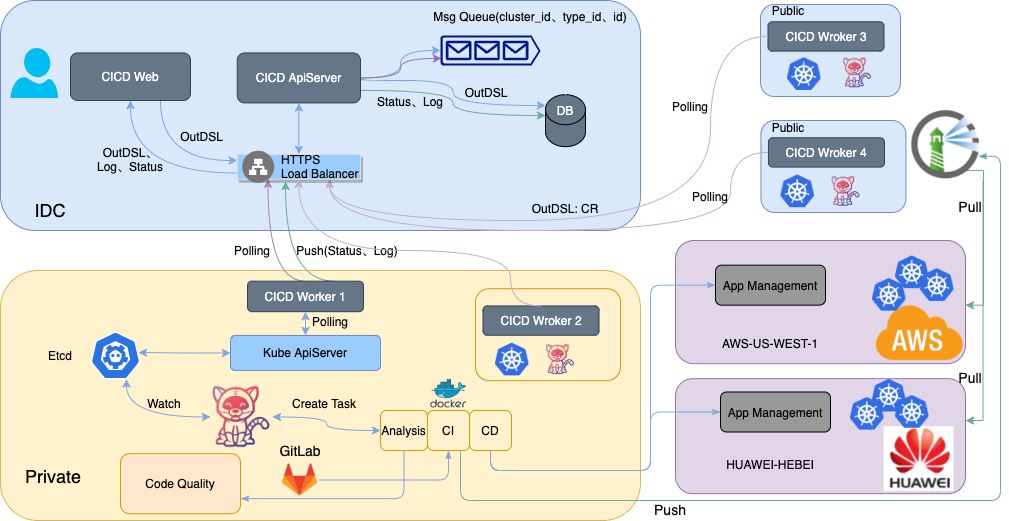

3.4 Architecture of the implementation

The diagram above shows the architecture we have implemented so far, which we intend to keep optimizing. It consists of three main parts:

- web

Provides the user interface for editing and describing pipelines graphically.

- apiserver

Provides the web API and the interface from which workers pull tasks.

- worker

Pulls pipeline tasks for the cluster it runs in, executes them there, and pushes back the results.

The user creates a pipeline in the web UI; it is saved to the DB through the apiserver, which also generates a synchronization event. The worker polls the apiserver to pull synchronization tasks from the message queue and then executes them in its own cluster. When execution completes, the worker pushes the results and related logs to the apiserver, which saves them in the DB. Finally, the user views the execution results, read from the DB, on the web page.

Note that multiple worker services can run in one cluster, and the only requirement on a cluster is that it can connect to the apiserver. Because the workers initiate every connection, worker clusters on both the public network and the intranet can be used for builds without tunneling into the intranet.
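The pull model above can be sketched in Go. Everything here is a hypothetical in-process stand-in: `APIServer` replaces the real apiserver, message queue, and DB; `Pull`, `Report`, `Count`, and `RunWorkers` are invented names; and actual pipeline execution is elided. What the sketch does show is the property the design relies on: workers only ever call the apiserver, several workers in the same cluster can share its queue, and each job is handed to exactly one worker.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// Job is a hypothetical synchronization task that targets one cluster.
type Job struct {
	ID      int
	Cluster string
}

// APIServer is an in-memory stand-in for the apiserver, its message queue,
// and the DB where results are saved.
type APIServer struct {
	mu      sync.Mutex
	queue   []Job
	results map[int]string // job ID -> name of the worker that ran it
}

func NewAPIServer(jobs []Job) *APIServer {
	return &APIServer{queue: append([]Job(nil), jobs...), results: map[int]string{}}
}

// Pull removes and returns the first queued job for the caller's cluster.
// Doing this under the lock guarantees each job goes to exactly one worker.
func (s *APIServer) Pull(cluster string) (Job, bool) {
	s.mu.Lock()
	defer s.mu.Unlock()
	for i, j := range s.queue {
		if j.Cluster == cluster {
			s.queue = append(s.queue[:i], s.queue[i+1:]...)
			return j, true
		}
	}
	return Job{}, false
}

// Report records a result, as the apiserver would persist it to the DB.
func (s *APIServer) Report(jobID int, worker string) {
	s.mu.Lock()
	defer s.mu.Unlock()
	s.results[jobID] = worker
}

// Count returns how many results have been stored.
func (s *APIServer) Count() int {
	s.mu.Lock()
	defer s.mu.Unlock()
	return len(s.results)
}

// worker polls the apiserver and checks for stop whenever nothing is queued.
// Every call goes from the worker to the apiserver, never the other way.
func worker(name, cluster string, s *APIServer, interval time.Duration, stop <-chan struct{}, wg *sync.WaitGroup) {
	defer wg.Done()
	for {
		if job, ok := s.Pull(cluster); ok {
			// Executing the pipeline in the local cluster is elided in this sketch.
			s.Report(job.ID, name)
			continue
		}
		select {
		case <-stop:
			return
		case <-time.After(interval): // nothing queued for this cluster; poll again
		}
	}
}

// RunWorkers starts the given (name, cluster) workers, waits for `total`
// results, then stops them. It never returns if a job's cluster has no worker.
func RunWorkers(s *APIServer, workers [][2]string, total int) {
	stop := make(chan struct{})
	var wg sync.WaitGroup
	for _, w := range workers {
		wg.Add(1)
		go worker(w[0], w[1], s, 5*time.Millisecond, stop, &wg)
	}
	for s.Count() < total {
		time.Sleep(time.Millisecond)
	}
	close(stop)
	wg.Wait()
}

func main() {
	s := NewAPIServer([]Job{{1, "intranet"}, {2, "public"}, {3, "intranet"}, {4, "intranet"}})
	// Two workers share the intranet cluster; one runs in a public cloud cluster.
	RunWorkers(s, [][2]string{{"w1", "intranet"}, {"w2", "intranet"}, {"w3", "public"}}, 4)
	for id := 1; id <= 4; id++ {
		fmt.Printf("job %d ran on %s\n", id, s.results[id])
	}
}
```

Job 2 always runs on `w3`, while the intranet jobs are split between `w1` and `w2` depending on which one polls first. In a real deployment these calls would cross the network from worker to apiserver, which is why an intranet cluster only needs outbound access.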

4. Summary and outlook

4.1 Feature-rich and performance-optimized

A good product is one that is constantly polished in response to demand. We will continue to collect your requirements for CICD and improve the CICD system.

- Approval Functionality

Process control is one of the essential features of CICD. With runAfter, you can control the execution order and dependencies between Tasks, but the Tekton community does not provide a solution for approvals. We have two approval solutions, which are currently being designed and implemented.

- Builds on physical machines

Because we currently serve image builds for web and backend projects, we do not yet support building on physical machines. We have already worked out a solution, however, and are only waiting for user demand.

- Sub-pipeline

Sub-pipelines allow a pipeline to be split into multiple sub-pipelines, which can be executed in different worker clusters while giving better control over the process.

- Pipeline Cluster Management

The pipeline backend already supports multiple clusters, but the frontend does not yet provide a way to configure them. Support for multi-cluster builds is one of the highlights of this design, and we hope to let users onboard, manage, and use clusters on a self-service basis as soon as possible.

4.2 A CICD system that does more

In addition to this specific design and implementation, I'd like to share my understanding of CICD systems. We usually think of CICD systems as being used only for building and releasing. In fact, CICD provides a runtime comparable to serverless. This runtime can host many application scenarios and can even replace some SaaS offerings. Here are two scenarios.

- Delivery

With a Kubernetes cluster, we can use Helm to deliver applications. But how do you deliver Kubernetes itself? How do you deliver services to VMs or bare-metal servers? The answer is pipelines.

Service providers encapsulate their services as Task plugins and make them available to integrators. Integrators orchestrate the various services with a pipeline and deliver the resulting solution to customers.

- Automated Operations and Maintenance

  - Fault handling

  - Adding and removing nodes

  - Resource requests

  - Service changes …