After the HTTP/2 standard was published in 2015, most major browsers supported it by the end of that year. Since then, thanks to multiplexing, header compression, and server push, HTTP/2 has been adopted by more and more developers.

Before we knew it, HTTP had reached its third generation. Tencent is following the trend as well, and many of its projects are gradually adopting HTTP/3.

In this article, we look at how HTTP/3 works and how a business service can adopt it.

1. HTTP/3 Principle

1.1 History of HTTP

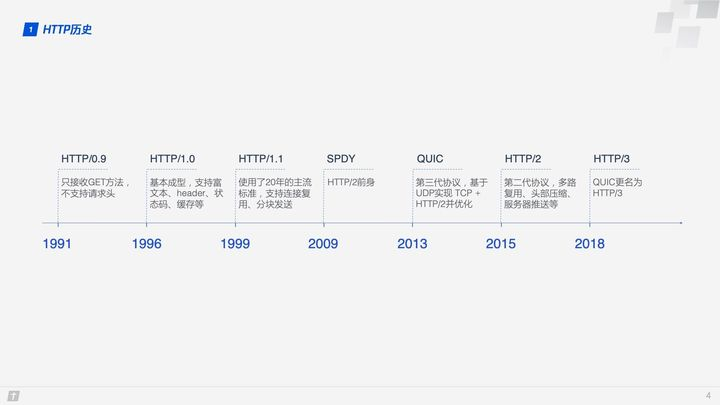

Before we introduce HTTP/3, let’s take a brief look at the history of HTTP and understand the background of how HTTP/3 came to be.

As network technology developed, HTTP/1.1, designed in 1999, could no longer meet demand, so in 2009 Google designed the TCP-based SPDY. The SPDY team later pushed for SPDY to become an official standard, but it was not adopted. However, the SPDY team took part in the entire HTTP/2 standardization process, and HTTP/2 borrowed many of SPDY’s designs, so SPDY is generally regarded as the predecessor of HTTP/2. Both SPDY and HTTP/2 run over TCP, which is at a natural efficiency disadvantage compared with UDP. So in 2013 Google developed QUIC (Quick UDP Internet Connections), a UDP-based transport layer protocol, hoping it could replace TCP and make web transfers more efficient. Later, following a proposal, the Internet Engineering Task Force (IETF) officially renamed HTTP over QUIC to HTTP/3.

1.2 QUIC Protocol Overview

TCP has always been the dominant transport layer protocol, while UDP has stayed in the background, long perceived as fast but unreliable.

But sometimes, from a different perspective, the disadvantages can also be advantages.

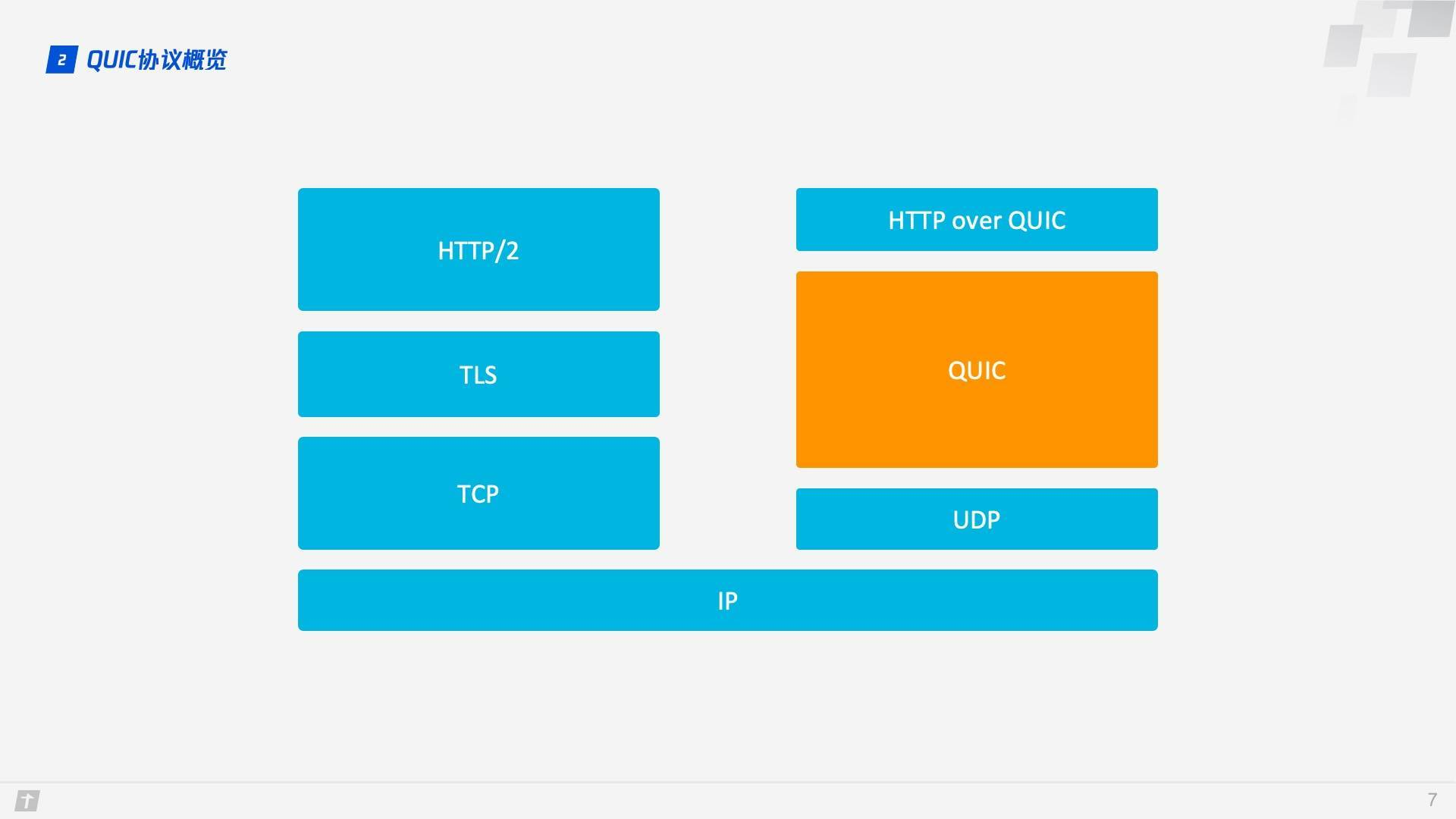

QUIC (Quick UDP Internet Connections) is built on UDP and inherits its speed and efficiency. QUIC also integrates and optimizes the strengths of TCP, TLS and HTTP/2. The diagram shows how they relate.

QUIC is a transport layer protocol intended to replace TCP and SSL/TLS. Above the transport layer sits the application layer, with familiar protocols such as HTTP, FTP, and IMAP. In theory all of these can run over QUIC; HTTP running over QUIC is called HTTP/3, which is what “HTTP over QUIC, i.e. HTTP/3” means.

So if you want to understand HTTP/3, you can’t get around QUIC. Here are a few important features that will give you a deeper understanding of QUIC.

1.3 Zero RTT connection establishment

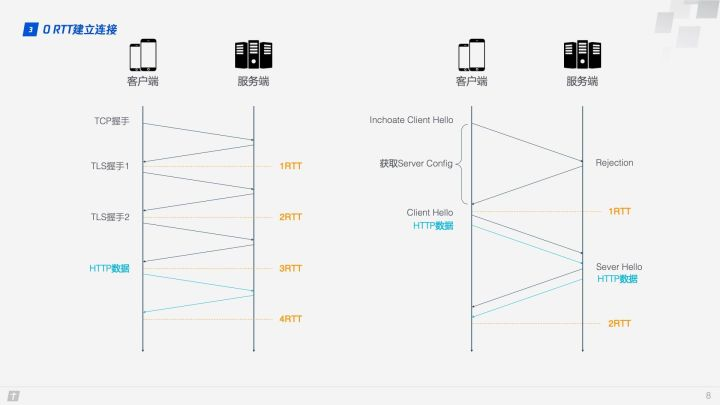

The difference between HTTP/2 and HTTP/3 connection establishment can be visualized in a diagram.

An HTTP/2 connection requires 3 RTTs, or 2 RTTs with session reuse, i.e., caching the symmetric key computed during the first handshake. If TLS is upgraded to 1.3, an HTTP/2 connection requires 2 RTTs, or 1 RTT with session reuse. Some may argue that HTTP/2 does not strictly require HTTPS, so the handshake could be simplified. That is true of the standard, but virtually all browser implementations only support HTTP/2 over HTTPS, so in practice the encrypted connection is unavoidable. HTTP/3 requires only 1 RTT for the first connection and 0 RTT for subsequent connections, meaning the first packet the client sends to the server already carries request data, which HTTP/2 cannot match. So how does this work? Let’s look at the QUIC connection process in detail.

- On the first connection, the client sends an Inchoate Client Hello to the server to request a connection.

- The server generates g, p, and a, computes A from g, p, and a, puts g, p, and A into the Server Config, and sends it to the client in a Rejection message.

- After receiving g, p, and A, the client generates b, computes B from g, p, and b, and computes the initial key K from A, p, and b. The client then encrypts the HTTP data with K and sends it to the server together with B.

- After receiving B, the server derives the same key K from a, p, and B and uses it to decrypt the received HTTP data. For forward secrecy, the server then refreshes its random number a and its public key, generates a new key S, and sends the new public key to the client in the Server Hello. The HTTP response data is sent along with the Server Hello.

- After receiving the Server Hello, the client derives the same new key S as the server, and all subsequent traffic is encrypted with S.

Thus, QUIC spends 1 RTT in total between requesting a connection and sending HTTP data, and that 1 RTT is spent mainly on obtaining the Server Config. If the client has cached the Server Config from an earlier connection, it can send HTTP data right away, which gives 0-RTT connection establishment.
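The round-trip counts quoted in this section can be tallied in a few lines. This is only a bookkeeping sketch: the split into 1 RTT for the TCP handshake plus a number of TLS rounds is an assumption chosen to match the totals in the text, and the function names are made up for illustration.

```python
# Assumed breakdown: the TCP handshake costs 1 RTT, and the TLS handshake
# adds rounds that depend on the TLS version and on session reuse.
TLS_RTTS = {
    ("1.2", False): 2,
    ("1.2", True): 1,
    ("1.3", False): 1,
    ("1.3", True): 0,
}


def http2_setup_rtts(tls_version: str, session_reused: bool) -> int:
    """RTTs spent before the first HTTP/2 request can be sent."""
    tcp_rtts = 1
    return tcp_rtts + TLS_RTTS[(tls_version, session_reused)]


def http3_setup_rtts(has_cached_server_config: bool) -> int:
    """RTTs spent before the first HTTP/3 request can be sent."""
    return 0 if has_cached_server_config else 1
```

For example, `http2_setup_rtts("1.2", False)` gives the 3 RTTs of a fresh TLS 1.2 connection, while `http3_setup_rtts(True)` gives the 0 RTT of a repeat QUIC connection.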

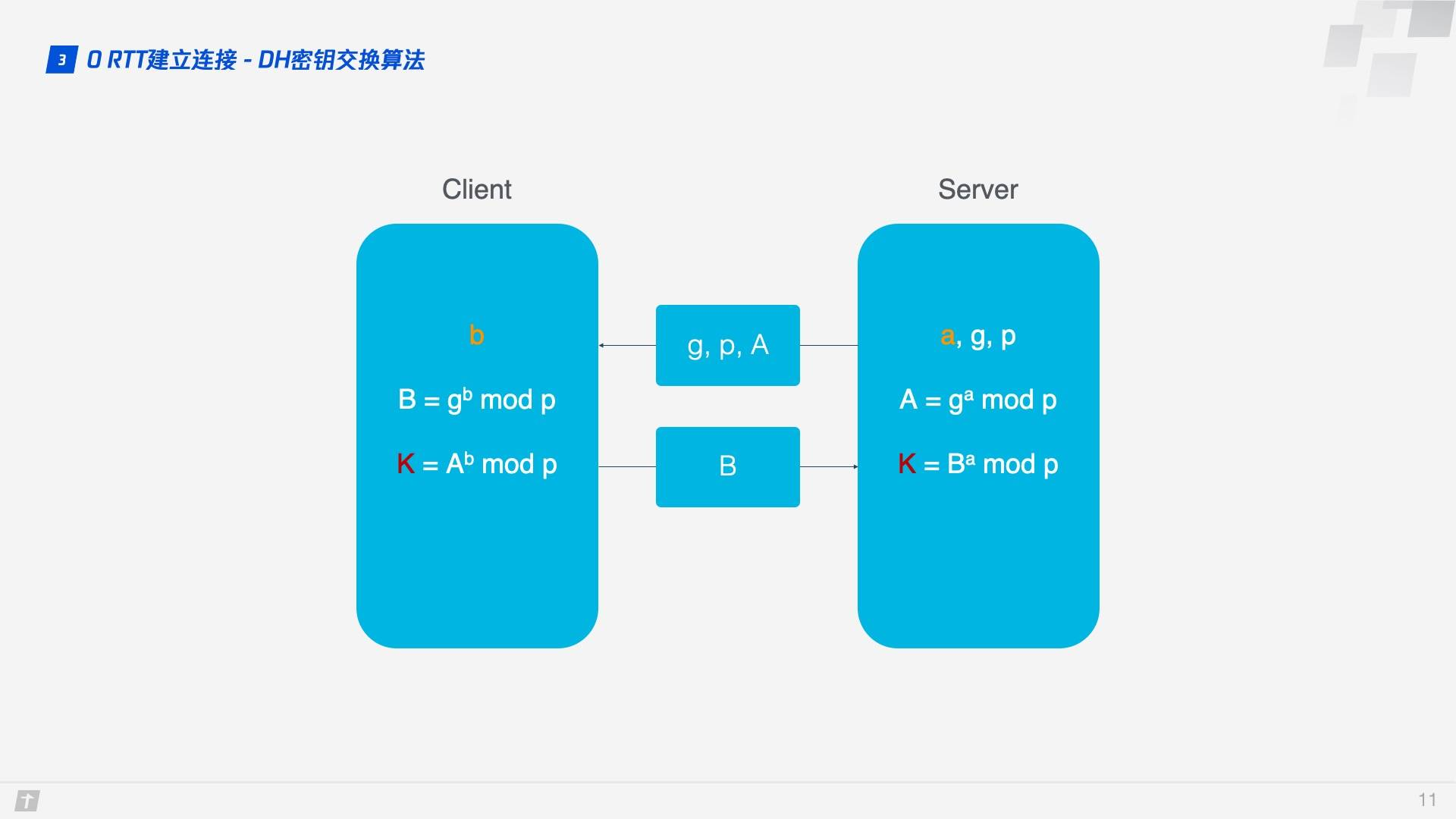

The DH (Diffie-Hellman) key exchange algorithm is used here. Its core idea is that the server generates three numbers a, g, and p; it keeps a to itself and sends g and p (along with A) to the client, while the client generates one random number b. Using the DH algorithm, the client and the server then compute the same key. Neither a nor b ever crosses the network, which makes the exchange much more secure. Because the numbers involved are very large, even if p, g, A, and B are intercepted in transit, recovering the key is computationally infeasible with current computing power.
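The exchange can be tried out with Python’s built-in modular exponentiation. This is a toy sketch, not QUIC’s actual handshake code: the 32-bit prime and the generator are assumptions picked for readability, whereas real deployments use far larger groups or elliptic curves.

```python
import secrets

# Toy public parameters (assumed for illustration only).
p = 0xFFFFFFFB  # 2**32 - 5, a small prime; far too small for real use
g = 5


def dh_keypair(p: int, g: int) -> tuple[int, int]:
    """Return (private, public), where public = g^private mod p."""
    private = secrets.randbelow(p - 3) + 2  # random value in [2, p - 2]
    public = pow(g, private, p)
    return private, public


def dh_shared(their_public: int, my_private: int, p: int) -> int:
    """Combine the peer's public value with our own private value."""
    return pow(their_public, my_private, p)


a, A = dh_keypair(p, g)  # server side: a stays private, A goes on the wire
b, B = dh_keypair(p, g)  # client side: b stays private, B goes on the wire

k_client = dh_shared(A, b, p)  # client computes A^b mod p
k_server = dh_shared(B, a, p)  # server computes B^a mod p
```

Both sides end up with the same value because A^b and B^a are both g^(ab) mod p, even though only g, p, A, and B were ever transmitted.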

1.4 Connection Migration

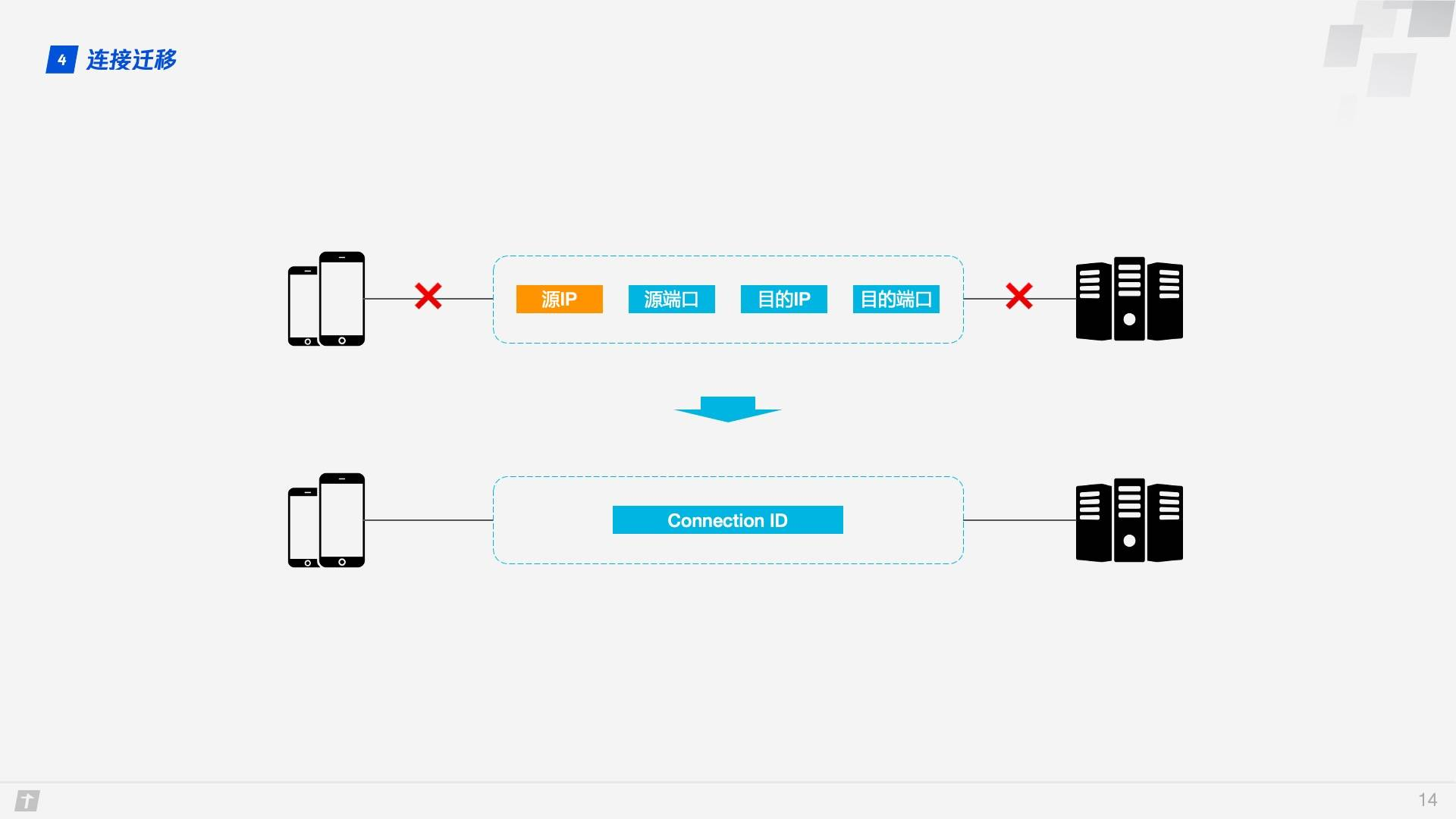

A TCP connection is identified by a four-tuple (source IP, source port, destination IP, destination port), and at least one of these elements changes when the device switches networks, so the connection is no longer the same. If the client keeps using the original TCP connection, the requests fail, and it has to wait for the original connection to time out before reconnecting. This is why content sometimes takes a long time to load right after switching to a new network, even though the new network is in good condition. A well-behaved implementation establishes a new TCP connection as soon as it detects the network change, but even then the new connection takes several hundred milliseconds to set up.

A QUIC connection is not affected by the four-tuple: when any of these four elements changes, the original connection is maintained. How does this work? The reason is simple: instead of the four-tuple, a QUIC connection is identified by a 64-bit random number called the Connection ID. As long as the Connection ID stays the same, the connection survives changes in IP address or port.
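The difference comes down to which key the server uses to look up connection state. The sketch below contrasts the two lookups; the class and field names are hypothetical and no real networking is involved.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    peer_addr: tuple
    received: list = field(default_factory=list)


class FourTupleDemux:
    """TCP-style lookup: the session key is (source addr, destination addr)."""

    def __init__(self, local_addr):
        self.local_addr = local_addr
        self.sessions = {}

    def on_packet(self, src_addr, payload):
        key = (src_addr, self.local_addr)
        session = self.sessions.setdefault(key, Session(src_addr))
        session.received.append(payload)
        return session


class ConnectionIdDemux:
    """QUIC-style lookup: the session key is the Connection ID alone."""

    def __init__(self):
        self.sessions = {}

    def on_packet(self, conn_id, src_addr, payload):
        session = self.sessions.setdefault(conn_id, Session(src_addr))
        session.peer_addr = src_addr  # follow the client to its new address
        session.received.append(payload)
        return session


wifi = ("10.0.0.7", 50000)
cellular = ("100.64.1.9", 41000)

tcp_like = FourTupleDemux(("203.0.113.1", 443))
tcp_before = tcp_like.on_packet(wifi, b"GET /a")
tcp_after = tcp_like.on_packet(cellular, b"GET /b")

quic_like = ConnectionIdDemux()
quic_before = quic_like.on_packet(0x1234, wifi, b"GET /a")
quic_after = quic_like.on_packet(0x1234, cellular, b"GET /b")
```

After the address change, the four-tuple lookup produces a brand-new session, while the Connection ID lookup returns the original session with its state intact.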

1.5 Head of line blocking/multiplexing

Both HTTP/1.1 and HTTP/2 suffer from head-of-line blocking, so what is it?

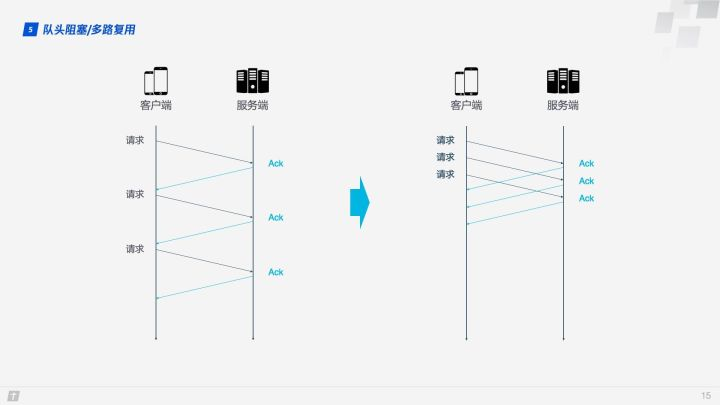

TCP is a reliable, connection-oriented protocol: after data is sent, the sender waits for an ACK confirming that the other side received it. If each request could only be sent after the previous one had been acknowledged, that would be very inefficient. HTTP/1.1 therefore introduced pipelining, which lets a single TCP connection send multiple requests at the same time, greatly improving transmission efficiency.

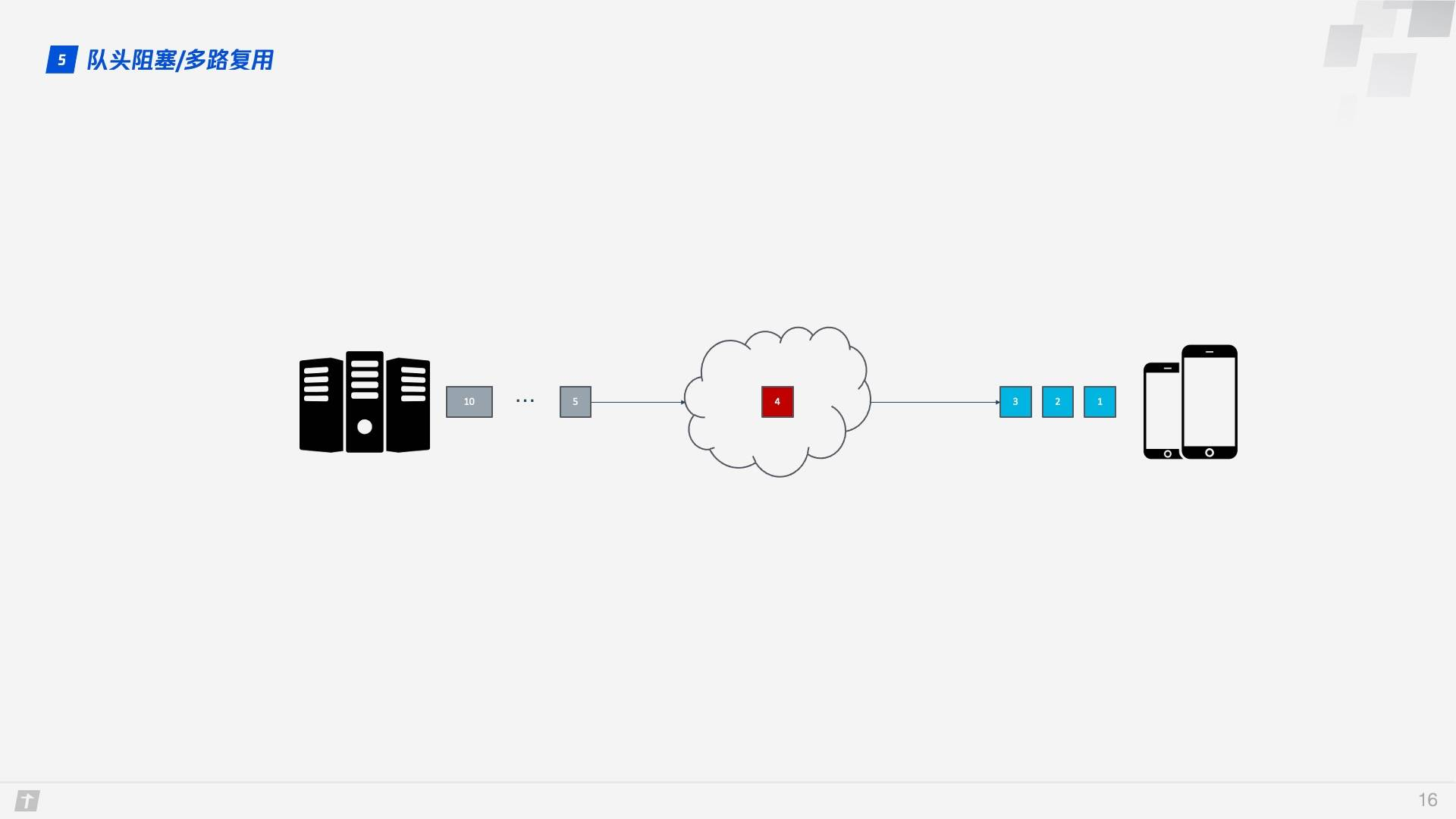

With that background, let’s look at head-of-line blocking in HTTP/1.1. In the figure, one TCP connection is carrying 10 requests at once. The 1st, 2nd, and 3rd have been received, but the 4th is lost, so requests 5 to 10 are blocked until the 4th has been handled, which wastes bandwidth.

As a result, browsers generally allow 6 TCP connections per host, which makes fuller use of the bandwidth, but head-of-line blocking still exists within each connection.

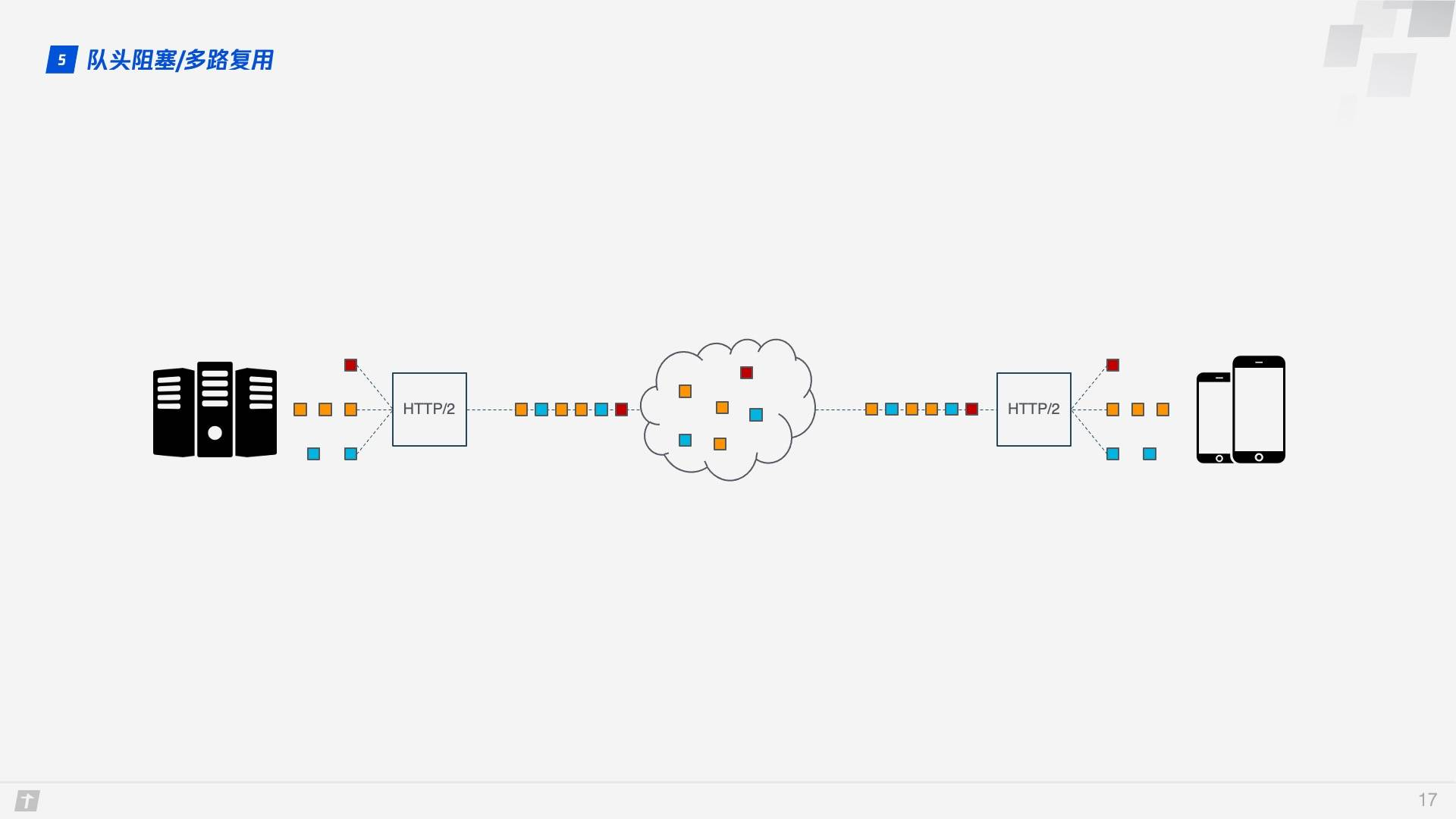

HTTP/2’s multiplexing solves the head-of-line blocking problem described above. In HTTP/1.1, the next request can be transmitted only after all packets of the previous one have been sent. In HTTP/2, each request is split into multiple frames that are interleaved over a single TCP connection, so even if one request is blocked, the others are not affected. In the figure, different colors represent different requests, and blocks of the same color represent the frames a request was split into.

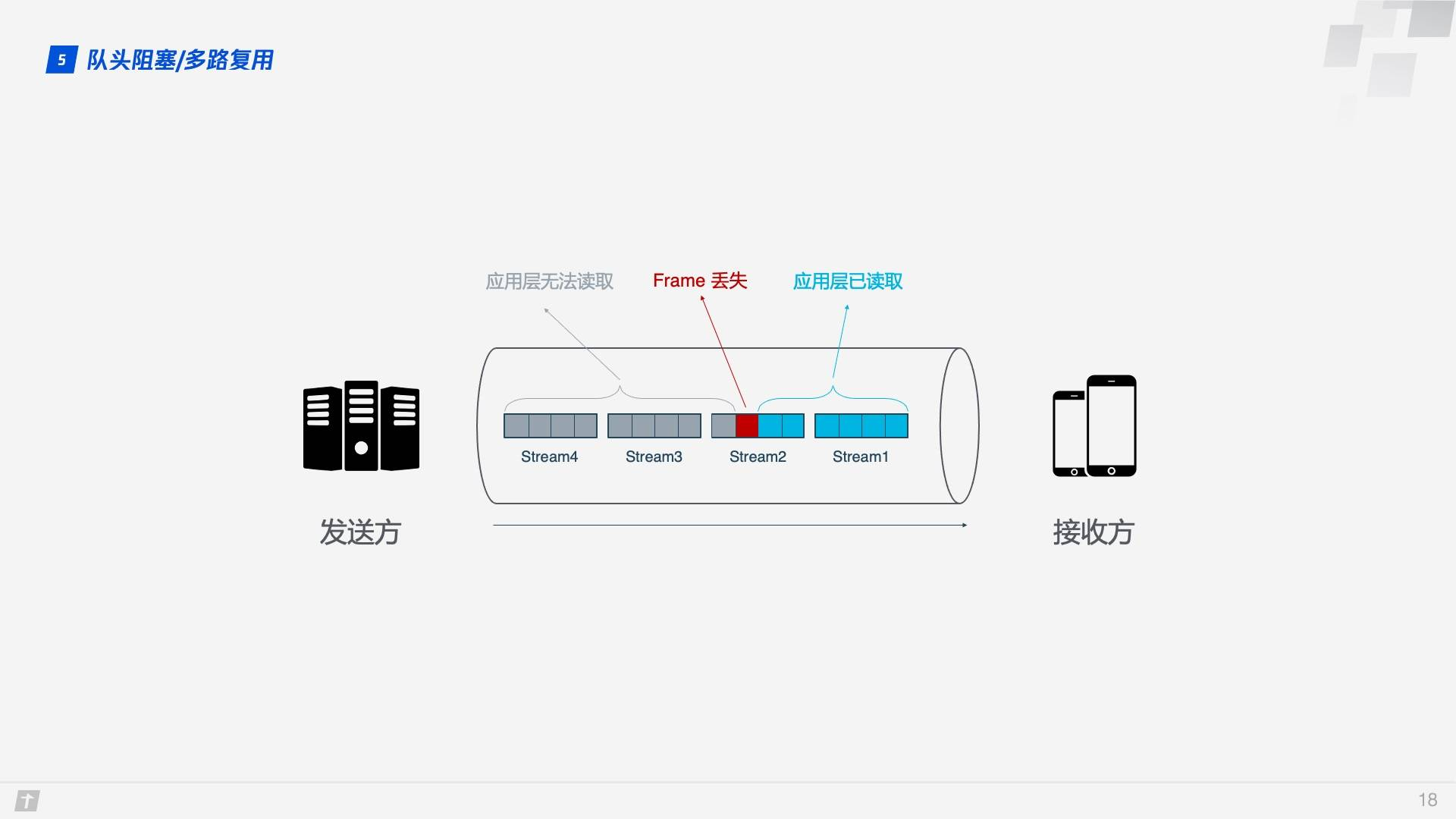

While HTTP/2 solves blocking at the granularity of requests, the TCP protocol underneath it still suffers from head-of-line blocking. Each HTTP/2 request is split into multiple frames, and the frames of one request make up a Stream, a logical transport unit on top of TCP. This is how HTTP/2 sends multiple requests concurrently over a single connection, and it is the principle of multiplexing. Consider an example in which four Streams are sent at the same time over one TCP connection: Stream1 is delivered correctly, but the third Frame of Stream2 is lost. TCP delivers data in strict order, so data sent earlier must be processed first. The sender has to retransmit the lost Frame, and although Stream3 and Stream4 have arrived, they cannot be processed until it does, so the entire connection is blocked.

In addition, because HTTP/2 in practice runs over HTTPS, it inherits head-of-line blocking from TLS as well. TLS organizes data into Records: it encrypts a chunk of data together as one Record and then splits the encrypted Record across multiple TCP packets. A Record is typically 16 KB and spans about 12 TCP packets, so if any one of those 12 packets is lost, the whole Record cannot be decrypted until it is retransmitted.

Because of head-of-line blocking, HTTP/2 can be slower than HTTP/1.1 on weak networks that are prone to packet loss!

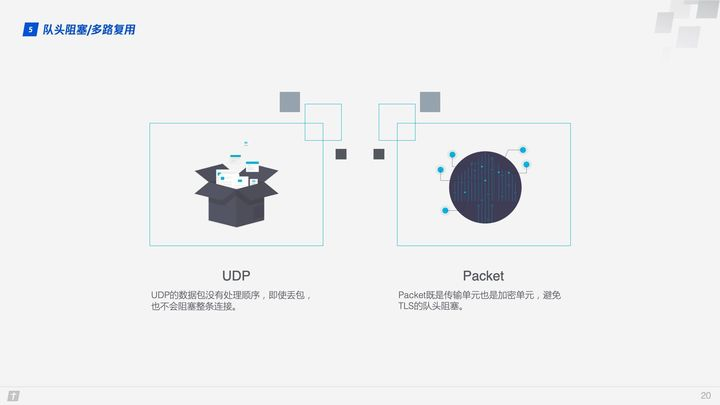

So how does QUIC solve the Head of line blocking problem? There are two main points.

- QUIC’s unit of transmission is the Packet, and its unit of encryption is also the Packet, so encryption, transmission, and decryption all operate on Packets, which avoids the head-of-line blocking seen with TLS.

- QUIC is based on UDP, and UDP does not require packets to be processed in order at the receiver. Even if a packet is lost along the way, it does not block the whole connection, and other resources are processed normally.
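The effect of a single lost packet under the two models can be compared with a small simulation. This is a simplified sketch under stated assumptions: each packet carries one frame of one stream, retransmission is ignored, and the function names are invented for illustration.

```python
def deliverable_single_ordered_stream(packets, lost):
    """TCP-like model: one ordered byte stream, so delivery stops at the first gap."""
    delivered = []
    for seq, (stream_id, data) in enumerate(packets):
        if seq in lost:
            break
        delivered.append((stream_id, data))
    return delivered


def deliverable_per_stream(packets, lost):
    """QUIC-like model: a gap only holds back the stream that the lost packet belonged to."""
    blocked_streams = set()
    delivered = []
    for seq, (stream_id, data) in enumerate(packets):
        if seq in lost:
            blocked_streams.add(stream_id)
        elif stream_id not in blocked_streams:
            delivered.append((stream_id, data))
    return delivered


# Six packets in send order; the packet at index 2 (stream 2) is lost.
packets = [(1, "a1"), (2, "b1"), (2, "b2"), (3, "c1"), (4, "d1"), (2, "b3")]
lost = {2}

tcp_view = deliverable_single_ordered_stream(packets, lost)
quic_view = deliverable_per_stream(packets, lost)
```

In the single-stream model only the two packets before the gap can be handed to the application, while in the per-stream model streams 1, 3, and 4 are unaffected and only the rest of stream 2 has to wait.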

1.6 Congestion Control

The purpose of congestion control is to prevent too much data from flooding into the network at once and pushing it beyond its maximum load. QUIC’s congestion control is similar to TCP’s and improves on it, so let’s start with a brief introduction to TCP congestion control.

TCP congestion control consists of four core algorithms: slow start, congestion avoidance, fast retransmission, and fast recovery, and once you understand these four algorithms, you will have a general understanding of TCP congestion control.

- Slow start: the sender first sends 1 unit of data; after receiving the acknowledgment it sends 2 units, then 4, 8, and so on, growing exponentially. This process keeps probing how congested the network is, since growing past what the network can carry causes congestion.

- Congestion avoidance: exponential growth cannot continue forever. Once the window reaches a limit (the slow-start threshold), growth becomes linear.

- Fast retransmission: the sender normally sets a timeout timer for each transmission and treats the data as lost once it expires. With fast retransmission, the sender does not wait for the timer: when it receives three duplicate acknowledgments, it retransmits the missing data right away.

- Fast recovery: building on fast retransmission, after such a loss the sender lowers the slow-start threshold and continues in the congestion avoidance phase; only if the retransmission itself times out does it return to the slow-start phase.
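How the congestion window evolves under these rules can be traced with a short simulation. This is a deliberately simplified Reno-style sketch, not the Cubic algorithm QUIC uses: the window is counted in whole units per round trip, a loss detected through duplicate acknowledgments halves the window, a timeout resets it to 1, and all names are illustrative.

```python
def simulate_cwnd(rounds, ssthresh, dup_ack_loss_rounds=(), timeout_rounds=()):
    """Return the congestion window at the start of each round trip."""
    cwnd = 1
    history = []
    for rnd in range(rounds):
        history.append(cwnd)
        if rnd in timeout_rounds:
            # Timeout: remember half the window, then restart slow start.
            ssthresh = max(cwnd // 2, 2)
            cwnd = 1
        elif rnd in dup_ack_loss_rounds:
            # Fast retransmit and fast recovery: halve and keep going linearly.
            ssthresh = max(cwnd // 2, 2)
            cwnd = ssthresh
        elif cwnd < ssthresh:
            # Slow start: double every round trip, capped at the threshold.
            cwnd = min(cwnd * 2, ssthresh)
        else:
            # Congestion avoidance: grow by one unit per round trip.
            cwnd += 1
    return history


fast_recovery_trace = simulate_cwnd(8, ssthresh=8, dup_ack_loss_rounds={5})
timeout_trace = simulate_cwnd(8, ssthresh=8, timeout_rounds={5})
```

With a loss in round 5, the first trace is `[1, 2, 4, 8, 9, 10, 5, 6]` and the second is `[1, 2, 4, 8, 9, 10, 1, 2]`, which shows why recovering without falling back to slow start keeps throughput much higher.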

QUIC reimplements the Cubic algorithm of the TCP protocol for congestion control and makes a number of improvements on top of it. Some of the features of QUIC’s improved congestion control are described below.

1.6.1 Hot-plugging

In TCP, changing the congestion control policy has to be done at the operating system level. In QUIC, it only requires changes at the application level, and QUIC can dynamically select a congestion control algorithm for different network environments and users.

1.6.2 Forward Error Correction (FEC)

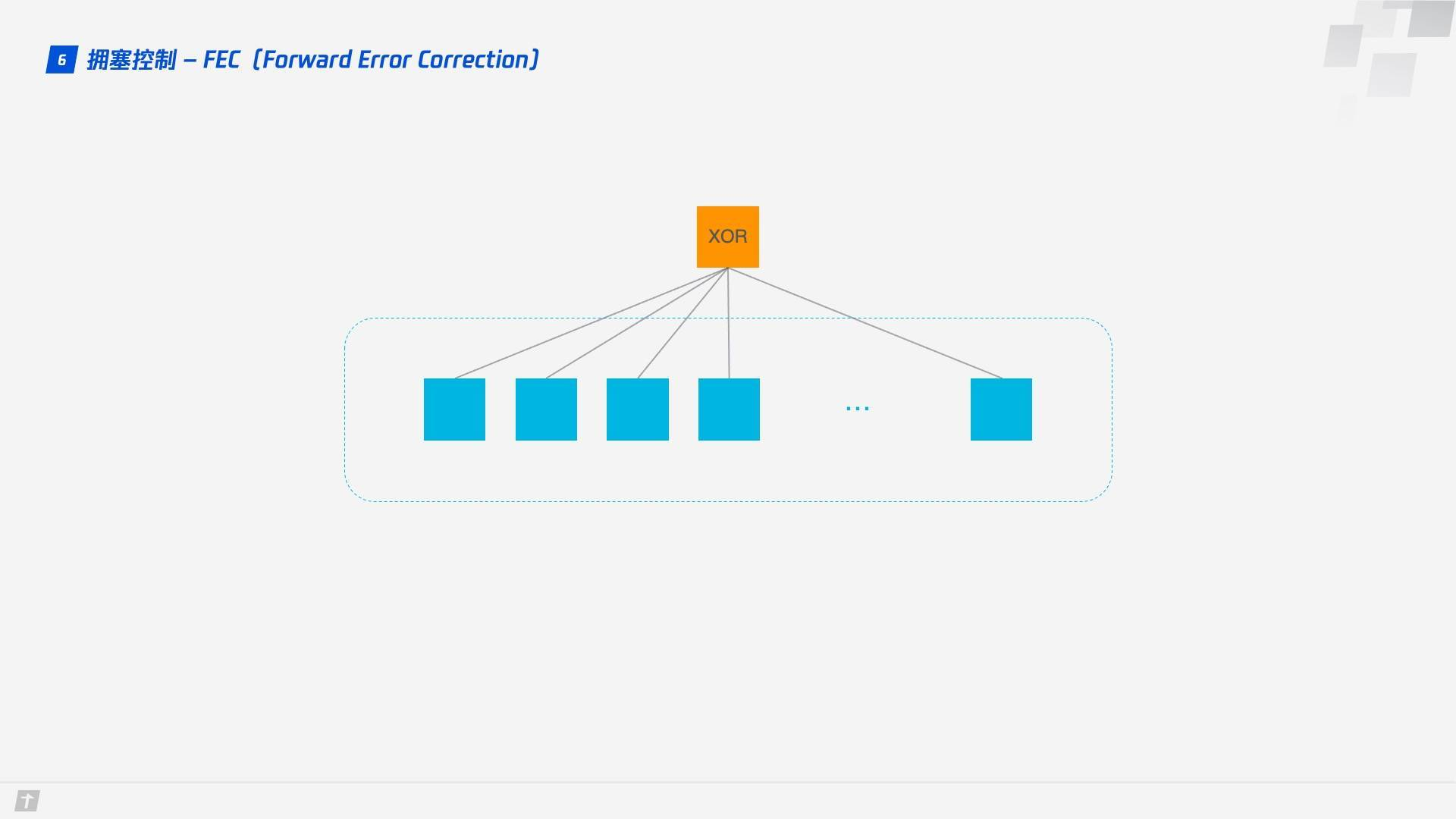

QUIC uses Forward Error Correction (FEC) to increase the fault tolerance of the protocol. After a segment of data is split into 10 packets, the packets are XORed together in turn, and the result is transmitted as an FEC packet alongside the data packets. If one packet is unfortunately lost in transit, its contents can be reconstructed from the remaining 9 packets and the FEC packet, which greatly increases fault tolerance.
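XOR-based recovery of a single lost packet fits in a few lines. The sketch assumes all packets in a group have the same length and that at most one of them is lost; the helper names are made up for illustration and do not come from any QUIC implementation.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_fec_packet(packets: list) -> bytes:
    """XOR all data packets together to form the FEC packet."""
    return reduce(xor_bytes, packets)


def recover_lost_packet(received: list, fec: bytes) -> bytes:
    """Rebuild the single missing packet (marked as None) from the rest."""
    missing = [i for i, pkt in enumerate(received) if pkt is None]
    if len(missing) != 1:
        raise ValueError("XOR parity can recover exactly one lost packet")
    present = [pkt for pkt in received if pkt is not None]
    return reduce(xor_bytes, present, fec)


# Ten 4-byte data packets plus one FEC packet.
packets = [bytes([i, i + 1, i + 2, i + 3]) for i in range(10)]
fec = make_fec_packet(packets)

# Packet 3 is lost in transit.
received = list(packets)
received[3] = None
recovered = recover_lost_packet(received, fec)
```

XORing the FEC packet with the nine packets that did arrive cancels them out and leaves exactly the missing one. If two packets of the same group are lost, this simple parity scheme cannot recover them and retransmission is still needed.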

This approach fits the current state of networks, where the bottleneck is no longer bandwidth but round-trip time, so a new transport protocol can reasonably add some data redundancy in order to reduce retransmissions.

1.6.3 Monotonically Increasing Packet Number

TCP uses Sequence Number and ACK to confirm the orderly arrival of messages for reliability, but this design is flawed.

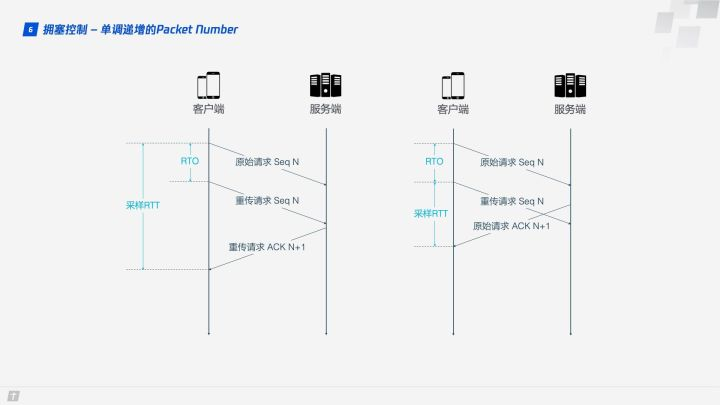

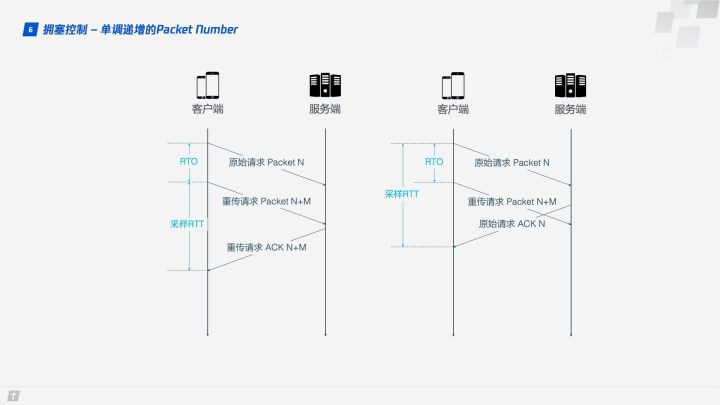

After a timeout, the client retransmits and later receives an ACK. Because the original request and the retransmitted request are acknowledged by the same ACK, the client cannot tell which of the two the ACK corresponds to. If the client assumes the ACK is for the original request when the situation is actually the one in the left figure, the sampled RTT is too large; if it assumes the ACK is for the retransmission when the situation is actually the one in the right figure, the sampled RTT is too small. Two terms appear in the figures: RTO (Retransmission TimeOut) is the retransmission timeout, and it is closely tied to the more familiar RTT (Round Trip Time). The sampled RTT feeds into the RTO calculation, and getting the timeout right matters a great deal: too long or too short are both harmful.

QUIC solves the ambiguity problem above. Unlike the Sequence Number, the Packet Number increases strictly monotonically: if Packet N is lost, the retransmitted packet is not numbered N but some larger number, such as N + M. When the sender receives an acknowledgment, it can therefore easily tell whether it corresponds to the original request or the retransmission.

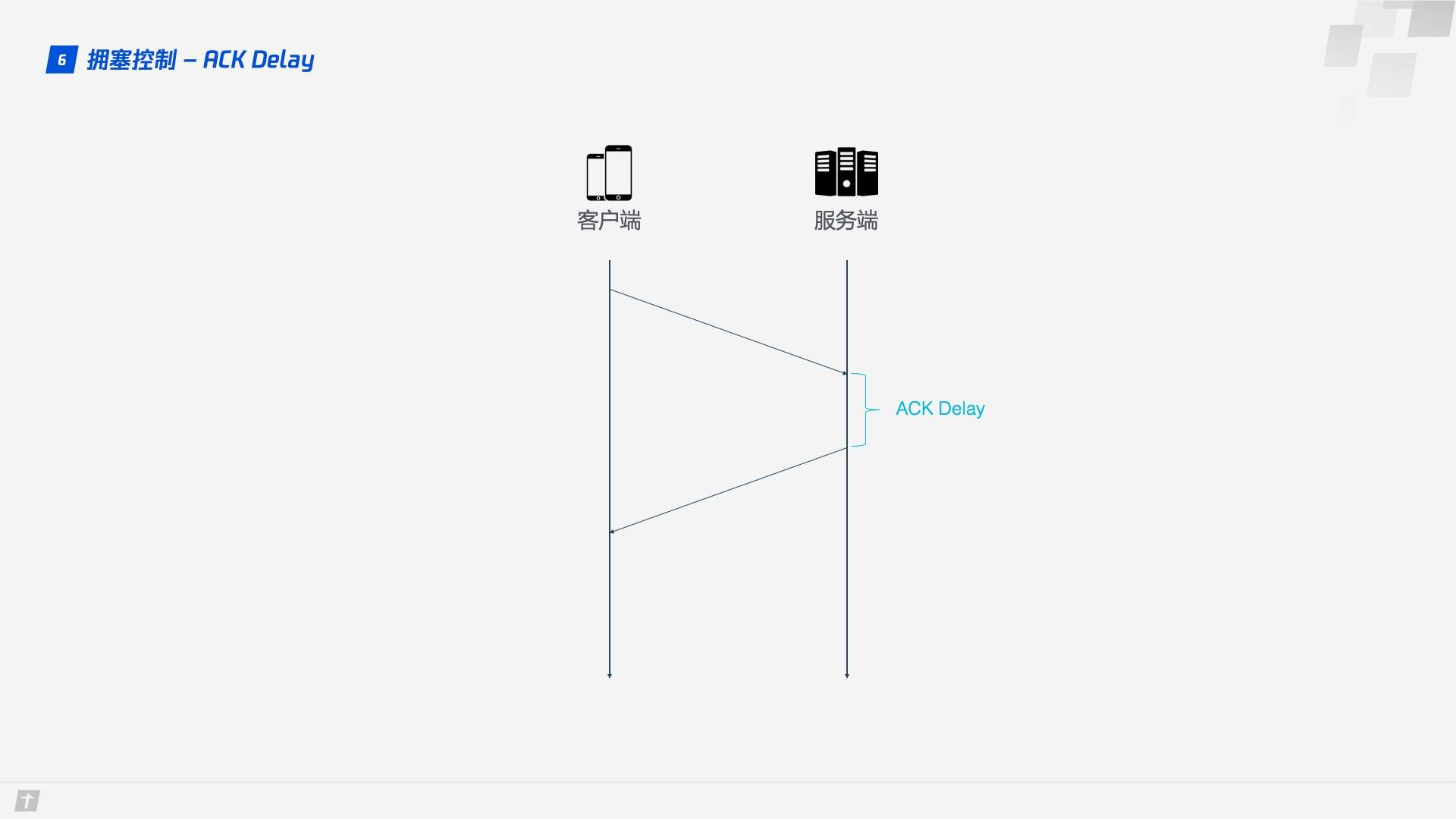
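A sender that never reuses a packet number can attribute every acknowledgment to exactly one transmission. The class below is a minimal sketch of that bookkeeping; its name, its fields, and the use of millisecond timestamps are assumptions made for illustration.

```python
class PacketNumberTracker:
    """Tracks send times by packet number so each ACK maps to one send."""

    def __init__(self):
        self.next_packet_number = 0
        self.in_flight = {}  # packet number -> (stream offset, send time in ms)

    def send(self, stream_offset: int, now_ms: int) -> int:
        """Send (or resend) data; every transmission gets a fresh number."""
        packet_number = self.next_packet_number
        self.next_packet_number += 1
        self.in_flight[packet_number] = (stream_offset, now_ms)
        return packet_number

    def on_ack(self, packet_number: int, now_ms: int) -> int:
        """Return the RTT sample for the transmission that was acknowledged."""
        _, sent_at_ms = self.in_flight.pop(packet_number)
        return now_ms - sent_at_ms


tracker = PacketNumberTracker()
original = tracker.send(stream_offset=0, now_ms=0)       # first attempt
retransmit = tracker.send(stream_offset=0, now_ms=1000)  # same data, new number
rtt_sample = tracker.on_ack(retransmit, now_ms=1200)
```

The same stream data is sent twice, but the acknowledgment names packet number 1, so the RTT sample is measured from the retransmission (200 ms) rather than from the original send (1200 ms).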

1.6.4 ACK Delay

When TCP calculates RTT, it does not account for the delay between the receiver getting the data and sending the acknowledgment. This delay, shown in the figure below, is the ACK Delay. QUIC takes it into account, which makes the RTT calculation more accurate.

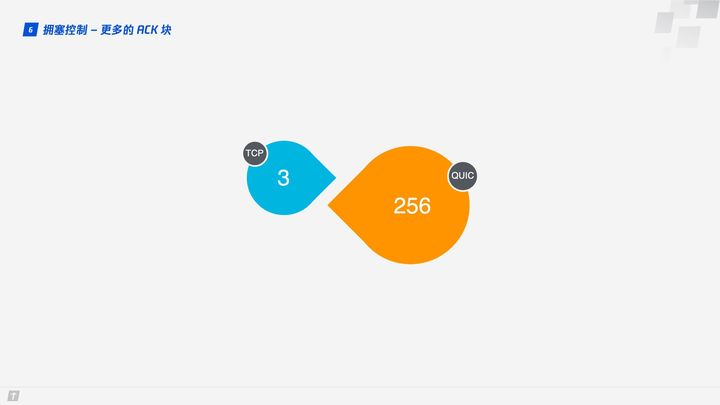
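The correction amounts to subtracting the delay reported by the receiver from the measured interval. The function below is a sketch of that arithmetic; the parameter names are illustrative, and the guard that refuses to go below a known minimum RTT is an added safety assumption rather than something stated in this article.

```python
def sample_rtt_ms(send_time_ms, ack_recv_time_ms, ack_delay_ms, min_rtt_ms=0):
    """RTT sample with the receiver's reported ACK delay removed."""
    measured = ack_recv_time_ms - send_time_ms
    adjusted = measured - ack_delay_ms
    # Only trust the reported delay if the result is still plausible.
    if adjusted >= min_rtt_ms:
        return adjusted
    return measured
```

For a packet sent at 0 ms whose acknowledgment arrives at 130 ms with a reported ACK delay of 25 ms, the sample is 105 ms instead of 130 ms.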

1.6.5 More ACK Blocks

In general, the receiver should reply with an ACK after receiving a message, to indicate that the data arrived. Acknowledging every single packet is too costly, however, so the receiver usually does not reply immediately but acknowledges several packets at once. TCP SACK provides at most 3 ACK blocks. Yet in some scenarios, such as downloads, the server mostly just sends data, and TCP is still designed to “politely” return an ACK for every 3 packets received. A QUIC ACK can carry up to 256 ACK blocks. On networks with heavy packet loss, more ACK blocks reduce the number of retransmissions and improve network efficiency.
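An ACK block is simply a contiguous range of received packet numbers, so the block limit decides how many gaps a single acknowledgment can describe. The sketch below builds such ranges; keeping the newest ranges when the limit is exceeded is an assumption made for illustration.

```python
def ack_blocks(received_packet_numbers, max_blocks=256):
    """Collapse received packet numbers into (first, last) ranges."""
    ranges = []
    for pn in sorted(set(received_packet_numbers)):
        if ranges and pn == ranges[-1][1] + 1:
            ranges[-1][1] = pn  # extend the current contiguous range
        else:
            ranges.append([pn, pn])  # a gap starts a new range
    # If there are too many ranges, report only the most recent ones.
    return [tuple(r) for r in ranges[-max_blocks:]]


received = [1, 2, 3, 5, 6, 9, 10, 12]
with_many_blocks = ack_blocks(received)               # QUIC-like limit
with_three_blocks = ack_blocks(received, max_blocks=3)  # TCP SACK-like limit
```

With the larger limit all four ranges are reported, so the sender learns that only packets 4, 7, 8, and 11 are missing. With a limit of 3 the range `(1, 3)` is dropped from the report, and the sender may retransmit data that had in fact arrived.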

1.7 Flow Control

TCP applies flow control to each TCP connection. Flow control means the sender must not send so fast that the receiver cannot keep up; otherwise data overflows and is lost. TCP implements flow control mainly with a sliding window. Congestion control governs the sender’s sending strategy but does not consider how much the receiver can take in, and flow control fills that gap.

QUIC needs only one connection, over which multiple Streams are transmitted concurrently. Picture a road with a warehouse at each end and many vehicles carrying goods along it. QUIC has two levels of flow control, Connection level and Stream level: we must limit the total traffic on the road so that so many vehicles do not arrive at once that the goods cannot be handled in time, and we must also keep any single vehicle from delivering so much at once that its goods cannot be handled in time.

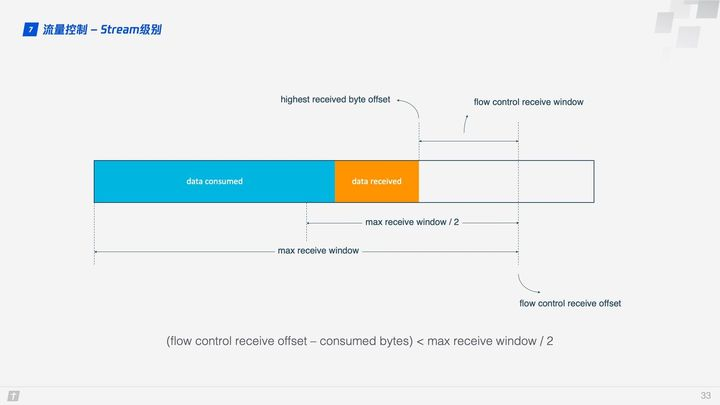

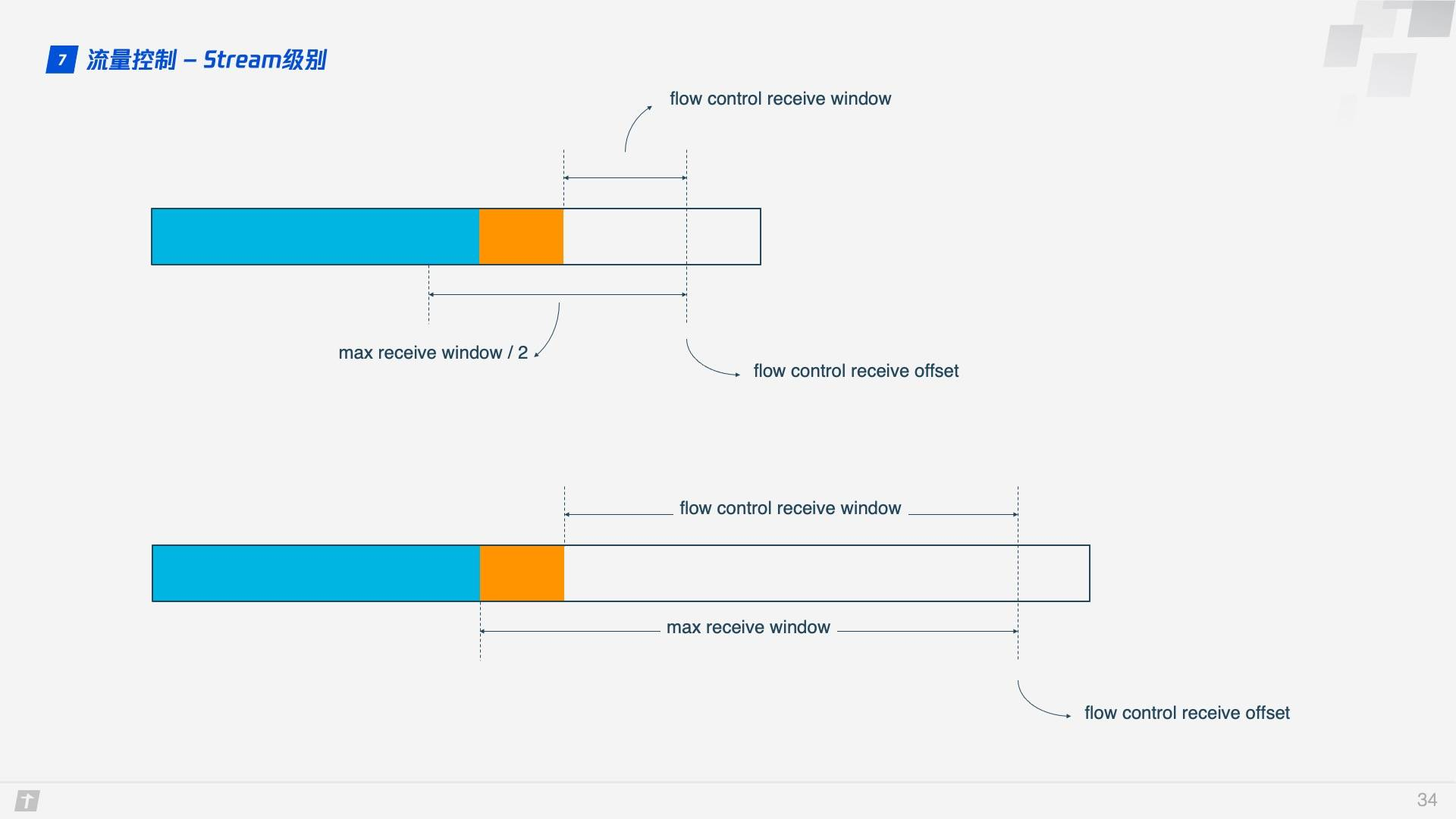

So how does QUIC implement flow control? Let’s first look at a single Stream. Before the Stream has transmitted any data, its receive window (flow control receive window) equals the maximum receive window (max receive window). As the receiver takes in data, the receive window shrinks. Of the data received, some has been processed and some has not yet been processed. In the figure, blue blocks are processed data and yellow blocks are unprocessed data; the arrival of this data shrinks the Stream’s receive window.

As data is processed, the receiver becomes able to handle more. When (flow control receive offset - consumed bytes) < (max receive window / 2), the receiver sends a WINDOW_UPDATE frame to tell the sender that it may send more data. The flow control receive offset then advances, the receive window grows, and the sender can send more data to the receiver.

Stream-level flow control alone does little to keep the receiver from taking in too much data overall, so Connection-level flow control is needed as well. Once you understand Stream flow control, Connection flow control is easy to understand.

In Stream, flow control receive window = max receive window - highest received byte offset, while for Connection: receive window = Stream1 receive window + Stream2 receive window + … + StreamN receive window.
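The window bookkeeping described in this section can be modelled with a small class. This is a sketch under stated assumptions: the receive window is computed as the current flow control receive offset minus the highest received byte offset (which equals the formula above before any update), the new offset after an update is taken to be consumed bytes plus the max receive window, and the class and method names are invented for illustration.

```python
class StreamFlowControl:
    def __init__(self, max_receive_window: int):
        self.max_receive_window = max_receive_window
        self.receive_offset = max_receive_window  # flow control receive offset
        self.highest_received = 0                 # highest received byte offset
        self.consumed = 0                         # bytes already processed

    @property
    def receive_window(self) -> int:
        return self.receive_offset - self.highest_received

    def on_data(self, end_offset: int) -> None:
        """Record incoming data that ends at the given byte offset."""
        if end_offset > self.receive_offset:
            raise ValueError("sender exceeded the advertised window")
        self.highest_received = max(self.highest_received, end_offset)

    def on_consumed(self, nbytes: int):
        """Process data; return a new offset to advertise, or None."""
        self.consumed += nbytes
        if self.receive_offset - self.consumed < self.max_receive_window / 2:
            self.receive_offset = self.consumed + self.max_receive_window
            return self.receive_offset  # would be sent in a WINDOW_UPDATE
        return None


def connection_receive_window(streams) -> int:
    """Connection window as the sum of the stream windows."""
    return sum(s.receive_window for s in streams)


stream = StreamFlowControl(max_receive_window=100)
stream.on_data(end_offset=80)
window_after_data = stream.receive_window   # 100 - 80
first_update = stream.on_consumed(40)       # 100 - 40 = 60, not below 50
second_update = stream.on_consumed(20)      # 100 - 60 = 40, below 50
window_after_update = stream.receive_window
```

Receiving 80 bytes shrinks the window to 20. Consuming 40 bytes is not enough to trigger an update, but after 60 consumed bytes the offset advances to 160 and the window grows back to 80.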

2. HTTP/3 Practices

2.1 X5 Kernel and STGW

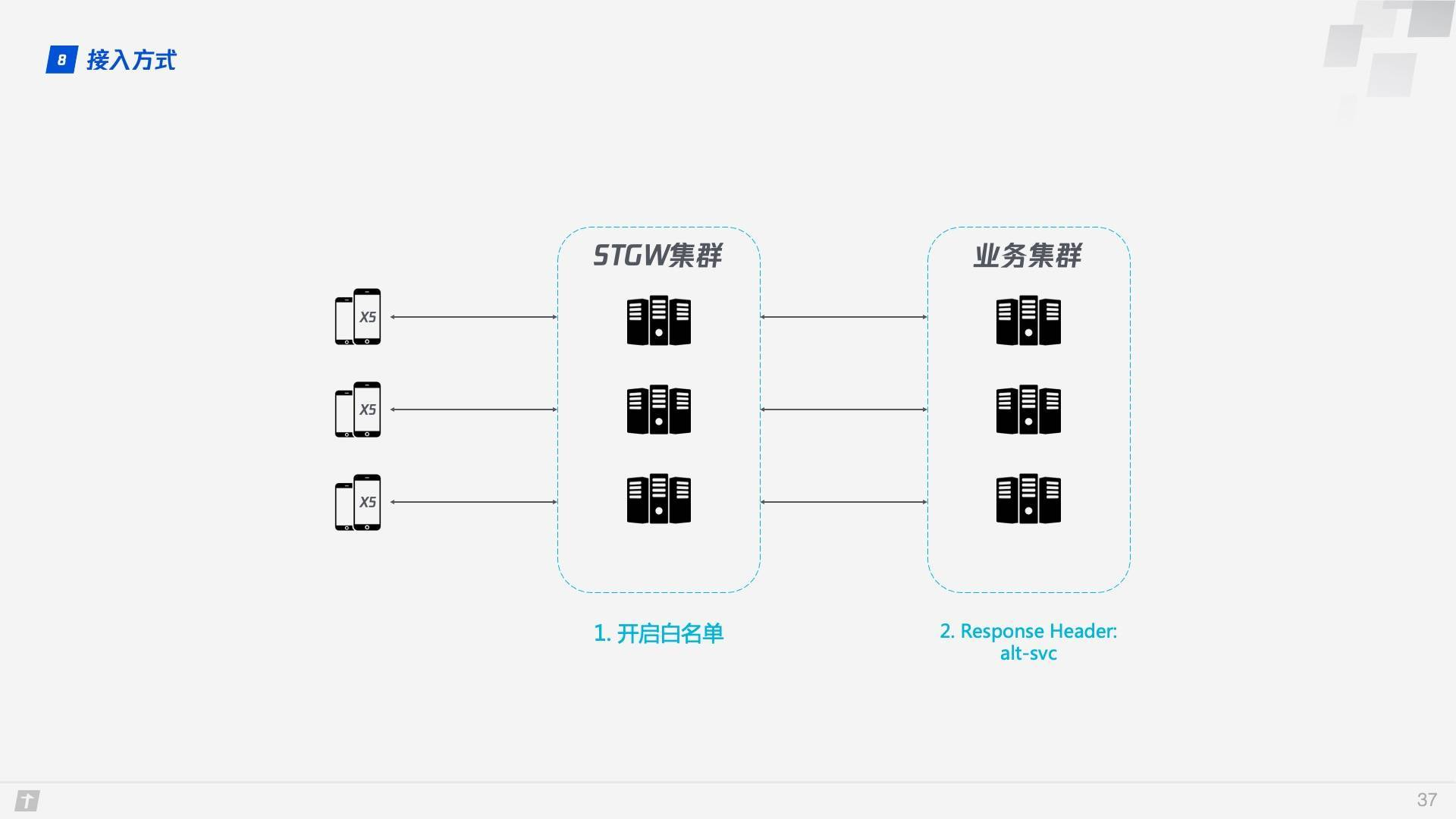

The X5 kernel is a browser kernel developed by Tencent for Android. It is a unified kernel built to address the high adaptation cost, insecurity, and instability of traditional Android browser kernels. STGW stands for Secure Tencent Gateway. Both have supported the QUIC protocol for the past two years.

So how does a business running on X5 adopt QUIC? Thanks to X5 and STGW, the changes are minimal and take only two steps.

- Have the service domain added to the STGW whitelist so that it is allowed to use the QUIC protocol.

- Add the alt-svc header to the response headers of the service’s resources, for example: alt-svc: quic=":443"; ma=2592000; v="44,43,39".

Using STGW for QUIC has an obvious advantage: STGW talks QUIC to clients that support it (X5 in this case), while the business backend keeps talking HTTP/1.1 to STGW, and the cached state QUIC needs, such as the Server Config, is maintained by STGW.

2.2 Negotiated Upgrade and Racing

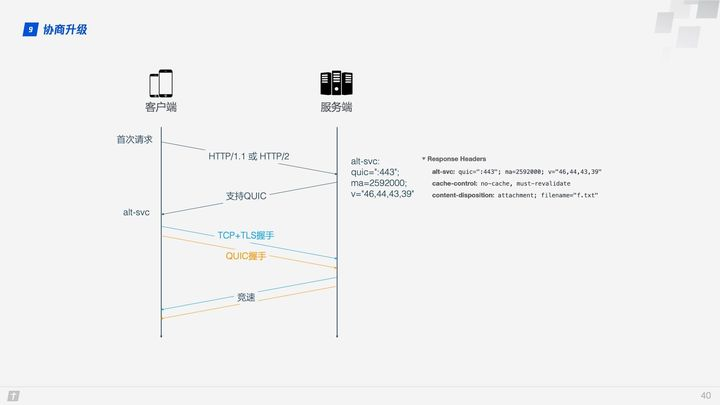

With the service domain on the STGW whitelist and the alt-svc header added to the response headers of the service’s resources, how does QUIC establish the connection? There is a critical step here: the negotiated upgrade. The client does not know whether the server supports QUIC, and blindly requesting a QUIC connection might fail, so a negotiated upgrade decides whether QUIC is used.

On the first request, the client uses HTTP/1.1 or HTTP/2. If the server supports QUIC, it returns the alt-svc header in the response, telling the client that subsequent requests can use QUIC. The alt-svc header mainly carries the following information.

- quic: the port QUIC listens on.

- ma: the validity period in seconds, during which the server promises to support QUIC.

- v: the version numbers. QUIC iterates quickly, so all supported versions are listed here.
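On the client side, reading these fields is a matter of splitting the header value. The parser below is a minimal sketch that handles only the single-entry form used in this article’s example; the function name and the shape of the returned dictionary are assumptions, and a production client would need a full header parser.

```python
def parse_alt_svc(value: str) -> dict:
    """Parse a value such as: quic=":443"; ma=2592000; v="44,43,39"."""
    parts = [part.strip() for part in value.split(";")]

    # First part: protocol="host:port", where host may be empty.
    protocol, _, authority = parts[0].partition("=")
    host, _, port = authority.strip('"').rpartition(":")

    # Remaining parts: key=value parameters.
    params = {}
    for part in parts[1:]:
        key, _, raw = part.partition("=")
        params[key.strip()] = raw.strip().strip('"')

    return {
        "protocol": protocol.strip(),
        "host": host or None,  # None means "same host as the origin"
        "port": int(port),
        "max_age_s": int(params["ma"]) if "ma" in params else None,
        "versions": [int(v) for v in params.get("v", "").split(",") if v],
    }


info = parse_alt_svc('quic=":443"; ma=2592000; v="44,43,39"')
```

For the example header this yields port 443, a validity period of 2592000 seconds (30 days), and the version list 44, 43, 39.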

After confirming that the server supports QUIC, the client opens a QUIC connection and a TCP connection to the server at the same time, compares how fast the two connect, and picks the faster protocol. This process is called “racing”, and QUIC usually wins.
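The racing step can be pictured as starting both connection attempts concurrently and keeping whichever finishes first. The sketch below uses `asyncio` with simulated delays in place of real handshakes; `fake_connect` and `race` are hypothetical names and do not reflect how X5 actually implements racing.

```python
import asyncio


async def fake_connect(protocol: str, delay_s: float) -> str:
    """Stand-in for a real handshake: just wait, then report the protocol."""
    await asyncio.sleep(delay_s)
    return protocol


async def race(quic_delay_s: float, tcp_delay_s: float) -> str:
    """Start both attempts at once and return the protocol that connects first."""
    tasks = [
        asyncio.create_task(fake_connect("quic", quic_delay_s)),
        asyncio.create_task(fake_connect("tcp", tcp_delay_s)),
    ]
    done, pending = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
    for task in pending:
        task.cancel()  # the slower attempt is abandoned
    await asyncio.gather(*pending, return_exceptions=True)
    return done.pop().result()
```

Calling `asyncio.run(race(0.01, 0.05))` returns `"quic"`, since the simulated QUIC handshake finishes first; swapping the delays makes TCP the winner.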

2.3 QUIC performance

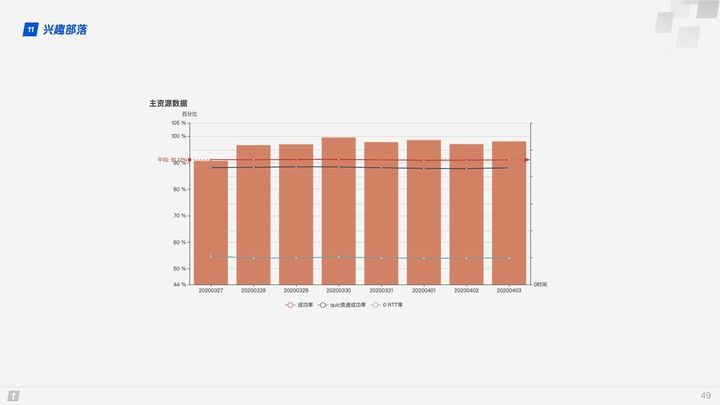

QUIC’s connection success rate is above 90%, its race success rate is close to 90%, and its 0-RTT rate is around 55%.

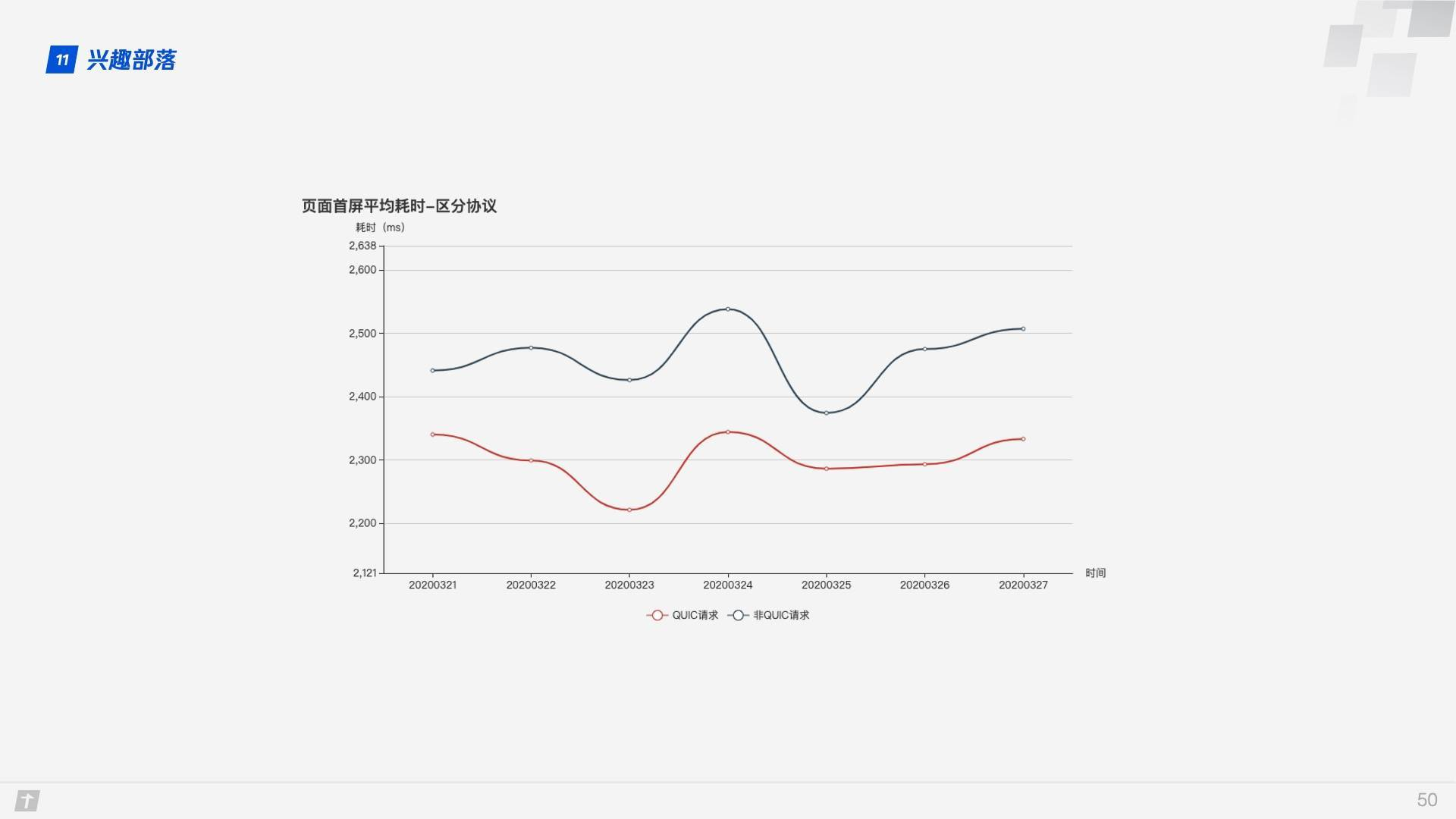

The first screen of the page takes 10% less time with the QUIC protocol than without it.

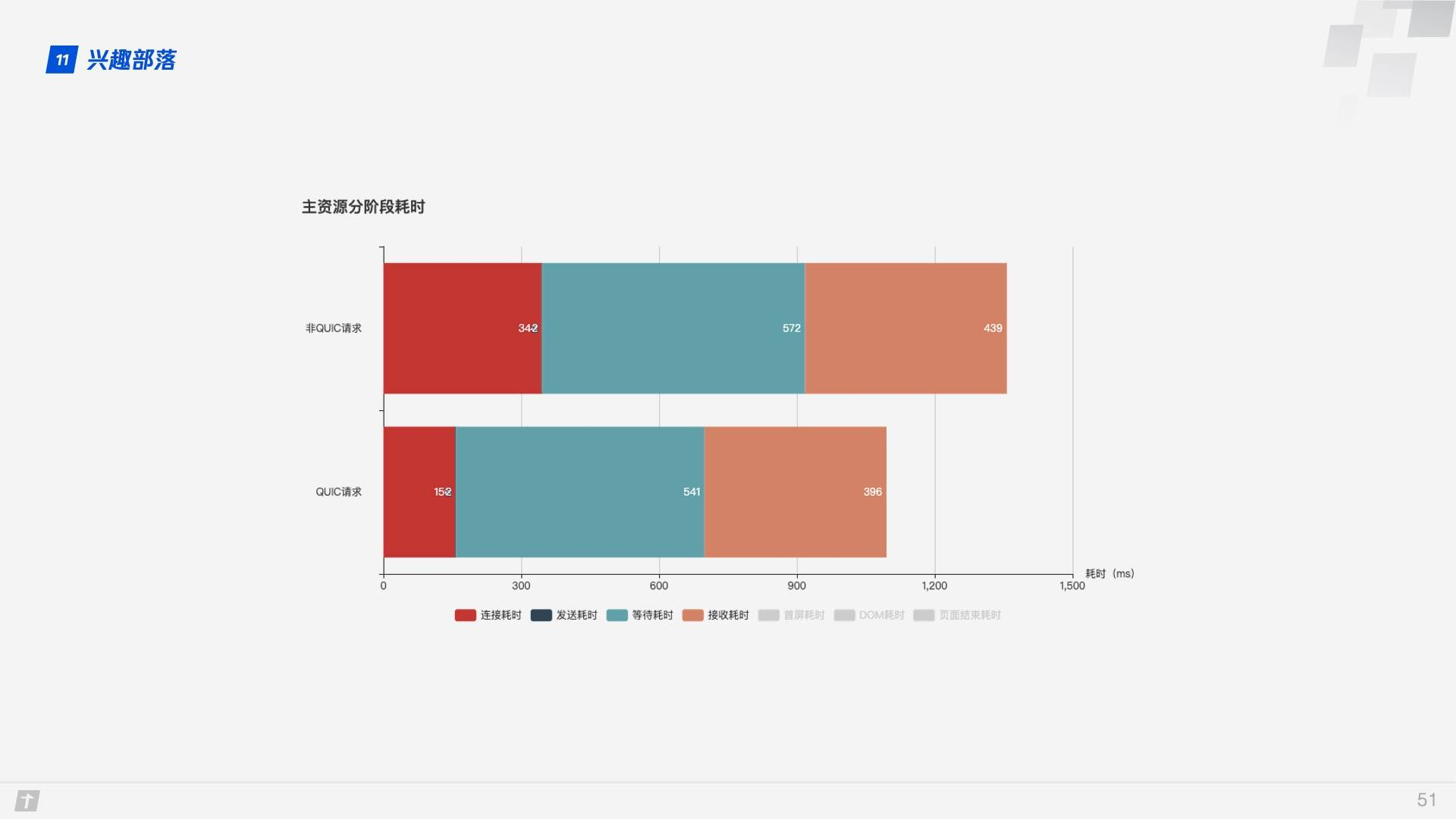

Looking at the different phases of resource acquisition, the QUIC protocol saves more time in the connection phase.

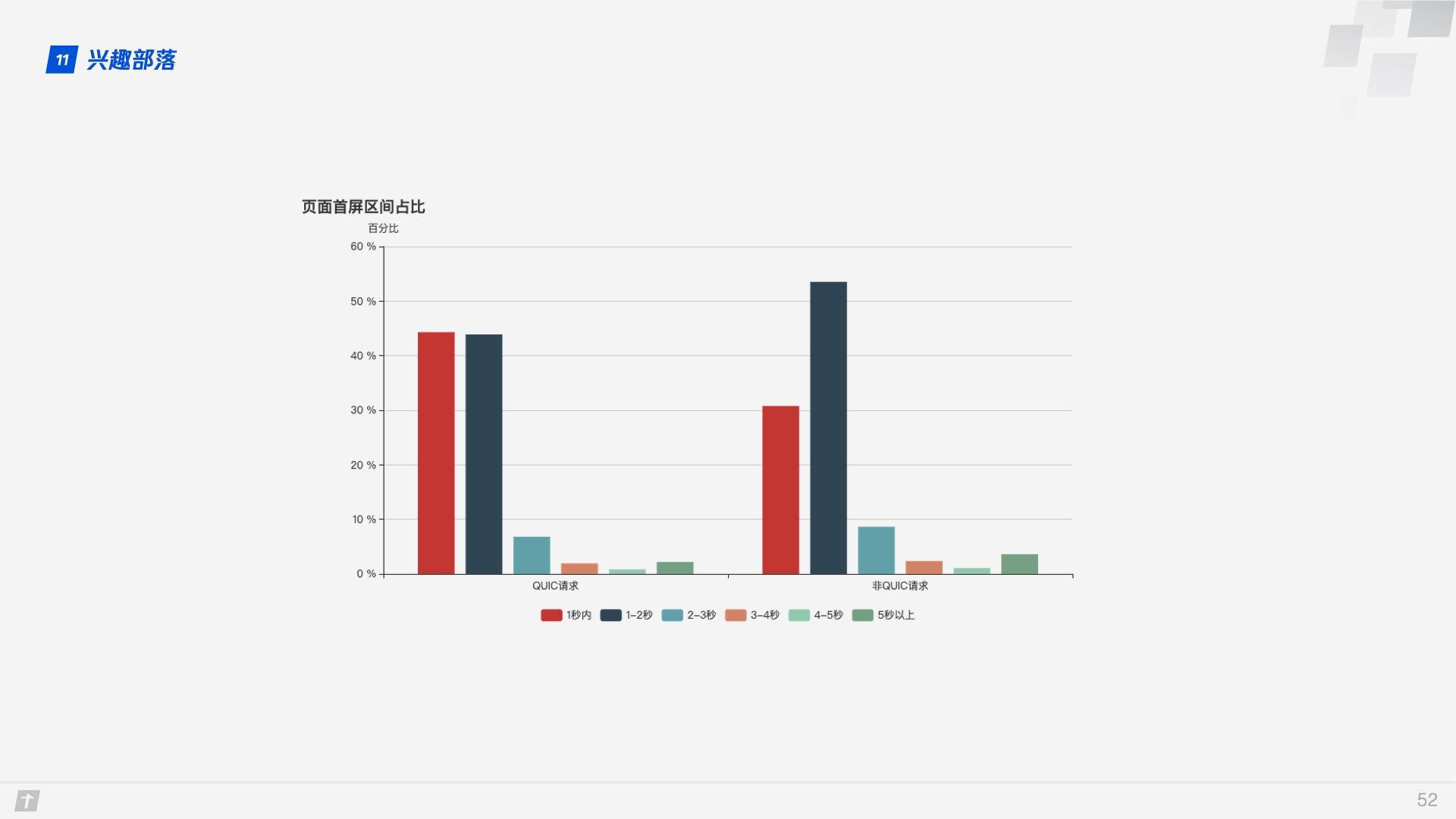

As the distribution of first-screen times shows, with the QUIC protocol the share of first screens rendered within 1 second is significantly higher, at around 12%.

3. Summary

QUIC sheds the baggage of TCP and TLS and implements a secure, efficient, and reliable HTTP transport on top of UDP, drawing on and improving the lessons of TCP, TLS, and HTTP/2. With features such as 0-RTT connection establishment, smooth connection migration, largely eliminated head-of-line blocking, and improved congestion control and flow control, QUIC outperforms HTTP/2 in most scenarios.

Previously, Microsoft announced that it had open-sourced MsQuic, its internal QUIC library, and that it would fully recommend the QUIC protocol as a replacement for TCP/IP.

The future of HTTP/3 is in sight.